A comprehensive test of over a dozen AI detection tools reveals they can identify basic synthetic content but fail with sophisticated fakes, while Anthropic and OpenAI face escalating conflicts with the US military over AI deployment restrictions and safety protocols.

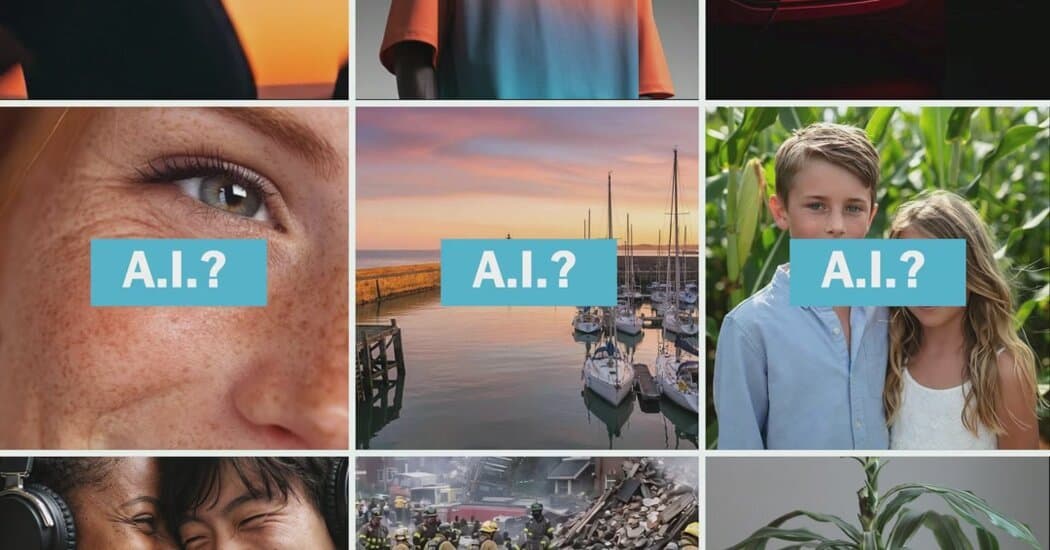

A comprehensive evaluation of more than a dozen AI detection tools has revealed a stark reality: while these systems can reliably identify basic synthetic content, they struggle significantly with more sophisticated fakes, particularly in complex visual media and video formats.

The testing, conducted by Stuart A. Thompson for the New York Times, examined tools from companies including Intel, Microsoft, Google, and various startups. The results paint a concerning picture for an industry racing to combat the proliferation of AI-generated misinformation.

Basic Detection Works, Complex Challenges Remain

Most detection tools performed adequately when identifying straightforward AI-generated images or simple audio deepfakes. However, the evaluation exposed critical weaknesses when content became more sophisticated. Complex images with subtle manipulations proved particularly challenging, with many tools failing to flag alterations that human reviewers could easily spot.

Video analysis emerged as a major blind spot. Few of the tested tools offered any capability to analyze video content, leaving a significant vulnerability as video deepfakes become increasingly prevalent. This gap is especially troubling given the persuasive power of moving images and the difficulty humans have in detecting video manipulation.

Audio detection showed mixed results. While some tools could identify synthetic voices in controlled scenarios, they struggled with more natural-sounding speech or when audio was mixed with background noise. The evaluation found that most tools identified fake audio only in ideal conditions, failing when faced with real-world complexity.

Industry-Wide Military Deployment Crisis

As AI detection tools grapple with technical limitations, the industry faces a separate but equally pressing crisis over military deployment. Anthropic, the AI company behind the Claude model, has become embroiled in a high-stakes confrontation with the Department of Defense that threatens to reshape the entire AI industry's relationship with government.

Defense Secretary Pete Hegseth has directed the DOD to designate Anthropic as a supply chain risk, effectively barring military contractors from using Claude in defense work. The move follows Anthropic's refusal to grant the Pentagon unrestricted access to its models, citing safety concerns about AI deployment in military contexts.

Anthropic's Defiant Stance

The conflict escalated dramatically when Anthropic announced it would challenge any supply chain risk designation in court. The company argues that the designation would only affect contractors' use of Claude on DOD work, but the broader implications could be severe for its business relationships.

Anthropic's position has drawn support from an unexpected quarter: a coalition of tech workers from Amazon, Google, Microsoft, and OpenAI has publicly backed the company's refusal to comply with Pentagon demands. This worker solidarity suggests deep divisions within the tech industry about the appropriate role of AI in military applications.

OpenAI's Contrasting Approach

While Anthropic takes a confrontational stance, OpenAI has pursued a different strategy. The company recently reached an agreement with the DOD to deploy its models in classified networks, with CEO Sam Altman stating that the Department of War "displayed a deep respect for safety" in their negotiations.

This agreement has created tension within OpenAI and the broader industry. Sources indicate that Altman told employees the DOD is willing to let OpenAI build its own "safety stack" and won't force compliance if models refuse certain tasks. However, this flexibility has raised questions about whether OpenAI is compromising on safety principles to secure government contracts.

Industry-Wide Implications

The contrasting approaches of Anthropic and OpenAI highlight a fundamental divide in how AI companies view their responsibilities regarding military applications. Anthropic has drawn "red lines" about AI use by the military, while OpenAI appears willing to work within government frameworks.

This divide extends beyond these two companies. The Pentagon's actions against Anthropic could create a chilling effect across the industry, with other AI companies potentially facing similar demands for unrestricted access. The situation has become so contentious that President Trump has publicly labeled Anthropic a "radical left, woke company" and directed federal agencies to stop using its products.

Technical and Ethical Crossroads

The convergence of detection tool limitations and military deployment controversies places the AI industry at a critical juncture. On one hand, the failure of detection tools to flag sophisticated fakes undermines confidence that synthetic content can be caught at all. On the other hand, the military deployment crisis raises fundamental questions about AI safety, ethics, and the appropriate boundaries for government use of advanced technology.

These parallel challenges suggest that the AI industry faces not just technical hurdles but also governance and ethical frameworks that have not matured alongside the technology itself. As AI systems become more powerful and ubiquitous, the industry's ability to detect misuse and establish responsible deployment practices will be crucial for maintaining public trust and ensuring beneficial outcomes.

The current situation reveals that while AI detection tools can handle basic tasks, they remain inadequate for the sophisticated threats emerging in the real world. Meanwhile, the industry's internal disagreements about military deployment suggest that technical solutions alone cannot address the complex ethical and safety questions that advanced AI systems raise.
