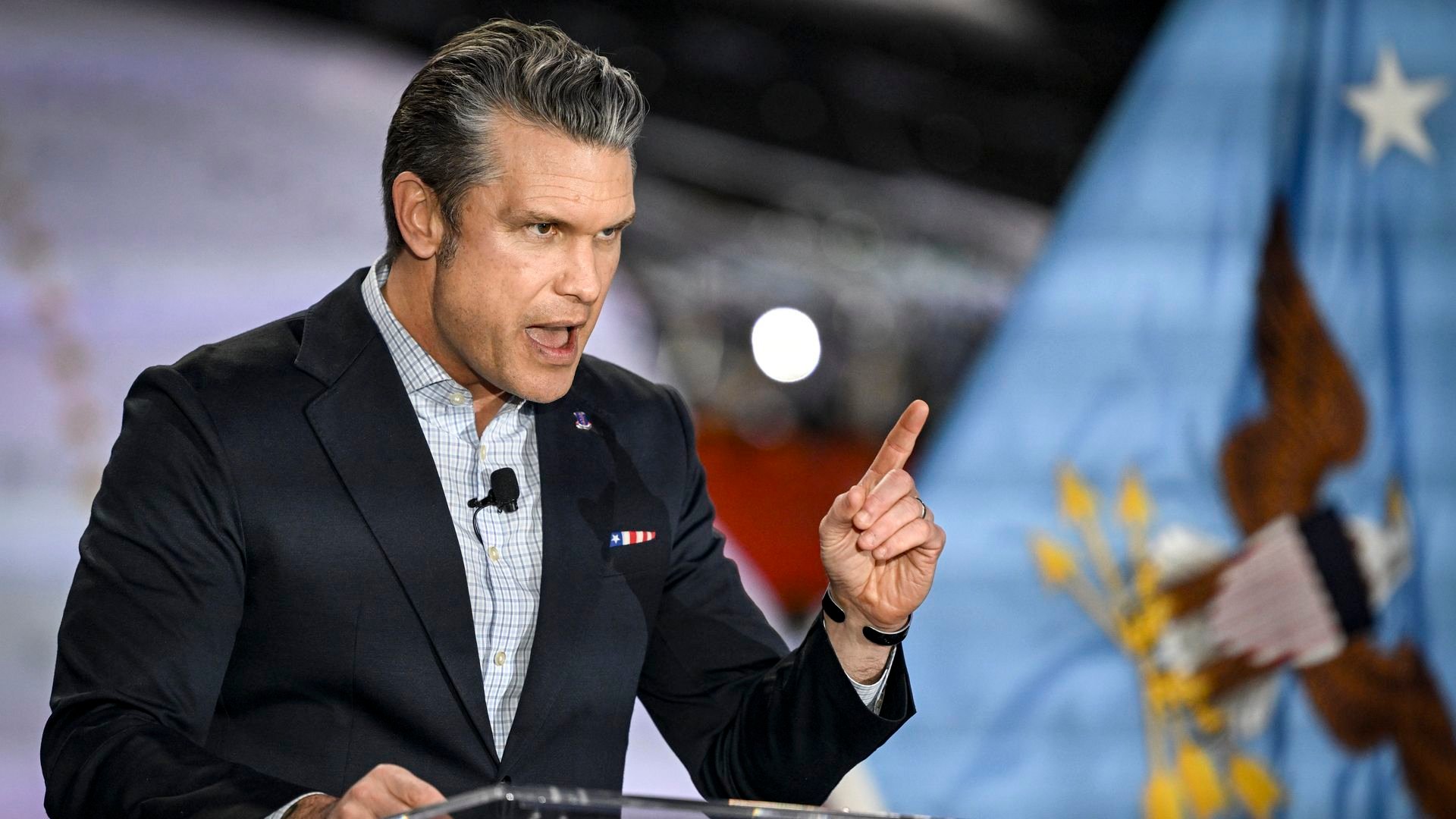

Defense Secretary Pete Hegseth has added Anthropic to a federal procurement blacklist, citing national security concerns and labeling the AI company a 'supply chain risk' that could compromise sensitive government operations.

The Trump administration has escalated its scrutiny of artificial intelligence companies by adding Anthropic, the maker of the Claude AI model, to a federal procurement blacklist. Defense Secretary Pete Hegseth issued the directive this week, citing national security concerns and labeling Anthropic a "supply chain risk" that could compromise sensitive government operations.

This move effectively bars federal agencies from contracting with Anthropic or using its AI technologies in government systems. The decision comes amid growing tensions over AI development and deployment in sensitive government contexts.

What the blacklisting means

The procurement blacklist restricts federal agencies from entering into new contracts with Anthropic or renewing existing ones. This includes cloud computing services, AI model access, and any integration of Claude into government systems.

For Anthropic, this represents a significant blow to its government business prospects. The company had been pursuing federal contracts as part of its expansion strategy, positioning Claude as a secure, enterprise-ready AI solution.

National security concerns cited

While the administration has not released a detailed justification, sources familiar with the decision say the concerns centered on:

- Data handling practices and potential foreign access to sensitive information processed by Claude

- Anthropic's corporate structure and potential vulnerabilities in its supply chain

- The AI model's training data and possible security implications

- Competition concerns with other government-preferred AI providers

Industry reaction

The decision has sent shockwaves through the AI industry, with competitors and partners watching closely for potential ripple effects. Some see this as part of a broader administration push to control AI deployment in government contexts.

"This is unprecedented action against a major AI company," said one industry analyst who requested anonymity. "It suggests the government is taking a much harder line on AI security than many expected."

Anthropic's response

Anthropic has not yet issued a formal statement, but sources indicate the company is preparing a response and may challenge the designation through administrative channels.

The company has emphasized its commitment to AI safety and security in previous statements, positioning itself as a responsible developer of frontier AI models.

Broader implications

This blacklisting could have several downstream effects:

- Other AI companies may face increased scrutiny of their government contracts

- Federal agencies may need to accelerate migration away from Anthropic technologies

- The decision could influence how other governments approach AI procurement

- It may accelerate the development of government-specific AI models by traditional defense contractors

What happens next

The blacklisting is likely to face legal challenges, and Anthropic may seek to have the designation reversed or modified. The company's government business, while not publicly quantified, is believed to represent a meaningful portion of its revenue.

For federal agencies that have already integrated Claude into their systems, the directive creates immediate compliance challenges and potential operational disruptions.

This development marks a significant escalation in the government's approach to AI regulation and procurement, suggesting that national security concerns may increasingly outweigh commercial considerations in federal technology adoption.
