Agoda observes that AI coding tools have raised individual developer output without significantly improving project-level velocity, because the real bottlenecks have shifted to specification and verification. That shift changes how engineering teams should be structured.

Agoda recently published an observation arguing that while AI coding tools have measurably raised individual developer output, the resulting velocity gains at the project level have been surprisingly modest, because coding was never the real bottleneck. The post claims that the bottleneck has shifted upstream to specification and verification because these areas require human judgment. This shift carries significant implications for how engineering teams should be structured.

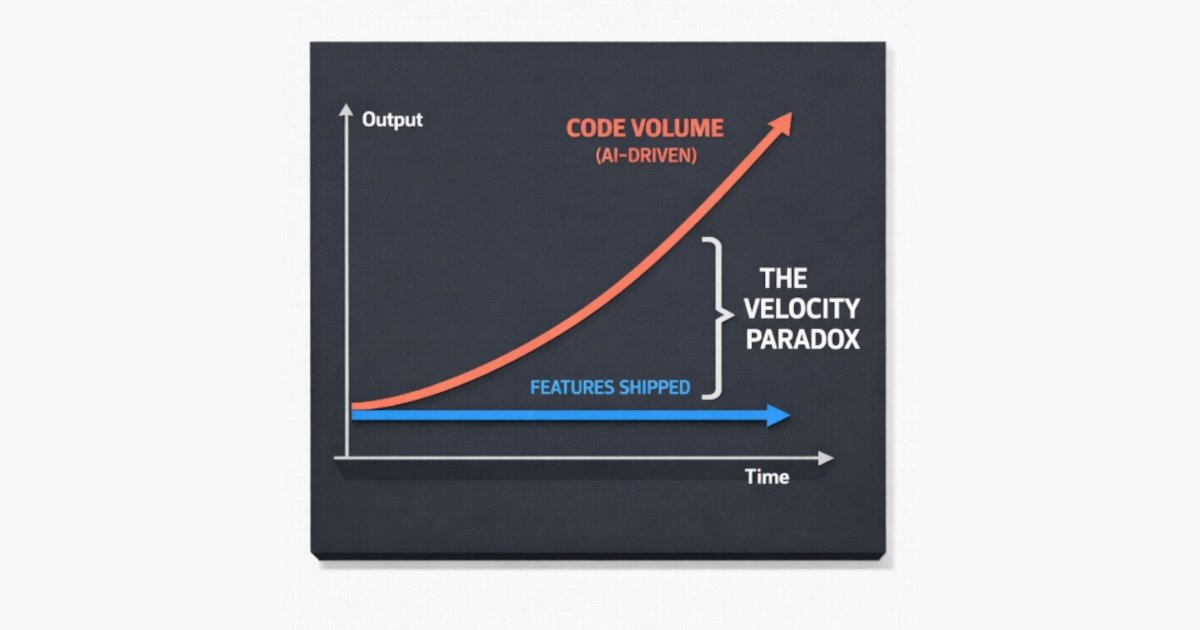

Leonardo Stern, a software engineer at Agoda, frames this as a rediscovery of Fred Brooks' decades-old argument in "No Silver Bullet" that speeding up only one part of the development lifecycle produces diminishing returns for overall delivery. The observation aligns with industry-wide data: research by Faros AI analyzing telemetry from over 10,000 developers across 1,255 teams found that teams with high AI adoption completed 21% more tasks and merged 98% more pull requests, yet PR review time increased by 91%. These figures are consistent with the diagnosis that acceleration at the coding stage relocates pressure elsewhere.
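The diminishing-returns argument can be made concrete with an Amdahl-style calculation. The sketch below is an illustration only: the function name `overall_speedup` and the 30% coding share are assumptions chosen for the example, not figures from Agoda or Faros AI.

```python
def overall_speedup(coding_share: float, coding_speedup: float) -> float:
    """Estimate end-to-end speedup when only the coding stage gets faster.

    coding_share: fraction of delivery time spent coding (hypothetical input).
    coding_speedup: factor by which coding alone is accelerated.
    """
    if not 0.0 <= coding_share <= 1.0:
        raise ValueError("coding_share must be between 0 and 1")
    if coding_speedup <= 0:
        raise ValueError("coding_speedup must be positive")
    # Time left after the change, as a fraction of the original delivery time.
    remaining_time = (1.0 - coding_share) + coding_share / coding_speedup
    return 1.0 / remaining_time


# Illustrative numbers only: assume coding is 30% of delivery time.
for factor in (2, 10, 1000):
    print(f"coding {factor}x faster -> delivery {overall_speedup(0.3, factor):.2f}x faster")
```

Under that assumed 30% share, even a thousandfold coding speedup leaves delivery less than 1.43 times faster, because the remaining 70% of the work (specification, review, verification) is untouched.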

For Stern, the more important implication is what this shift means for team structure. The traditional rationale for small, focused engineering teams was partly built on the assumption that coding was the most significant value-creating activity and that communication was overhead that impeded it. If the highest-value work becomes collaborative specification and architectural alignment, that logic inverts: communication is no longer the cost to minimize, it is the work itself. Smaller teams win not because they reduce coordination but because they achieve shared understanding faster. Five people can genuinely align around intent and corner cases in ways that fifteen typically cannot.

The shift in software engineering key deliverables from coding to specification and verification (source)

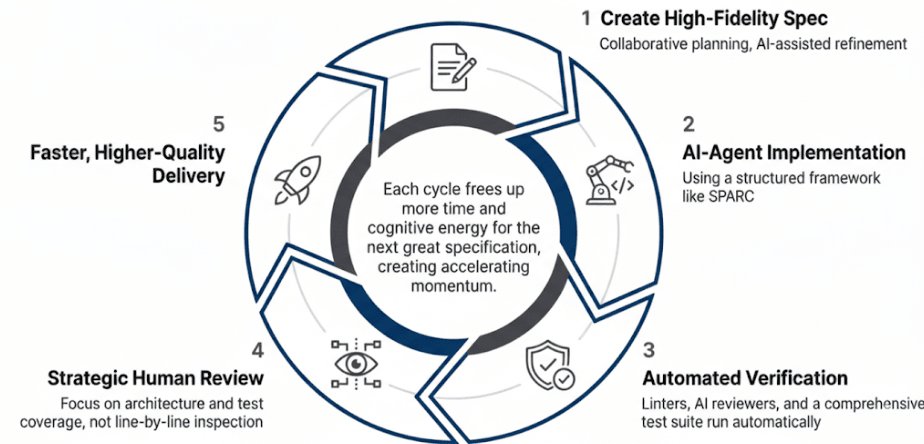

Stern introduces a three-stance taxonomy for how engineers can relate to AI-generated code. The "white box" model, where humans read and review every line, does not scale when agents can produce thousands of lines per hour. "Black box" or "vibe coding", shipping whatever the AI generates with minimal verification, is fast but brittle for production systems serving large user bases. Stern's preferred middle path, which he calls "grey box," keeps humans accountable at the two points that matter: writing specifications precise enough for the agent to execute correctly, and verifying results against evidence rather than inspecting the implementation line by line.
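A minimal sketch of what "verifying against evidence" might look like in practice, assuming acceptance criteria can be expressed as input and expected-output pairs. The names `Criterion`, `verify`, and `nightly_total` are hypothetical and do not come from Stern's post; the point is only that the reviewer evaluates a pass/fail report rather than the function body.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Criterion:
    """One acceptance criterion: named inputs and the result the spec requires."""
    name: str
    args: tuple
    expected: object


def verify(implementation: Callable[..., object],
           criteria: list[Criterion]) -> dict[str, bool]:
    """Run the opaque implementation against each criterion and record pass/fail."""
    evidence: dict[str, bool] = {}
    for criterion in criteria:
        try:
            evidence[criterion.name] = implementation(*criterion.args) == criterion.expected
        except Exception:
            # A crash counts as failed evidence, not as a reason to read the code.
            evidence[criterion.name] = False
    return evidence


# Hypothetical agent-generated function; the reviewer does not inspect its body.
def nightly_total(rate_cents: int, nights: int) -> int:
    return rate_cents * nights


criteria = [
    Criterion("single night", (12_000, 1), 12_000),
    Criterion("multi-night stay", (12_000, 3), 36_000),
    Criterion("zero nights corner case", (12_000, 0), 0),
]
report = verify(nightly_total, criteria)
approved = all(report.values())
```

The merge decision here rests on `report`, so the quality of the review depends on how well the criteria capture intent and corner cases.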

Crucially, Stern is explicit that accountability does not shift to the AI: the engineer who guides the agent and approves the merge request remains fully responsible for what ships. This reframing of review from code inspection to evidence evaluation echoes the architectural formalism in Leigh Griffin and Ray Carroll's recent InfoQ article on Spec-Driven Development, in which they argue that specifications should become the executable source of truth for a system, with generated code treated as a downstream, regenerable artifact.

Human authority is migrating from writing code to defining and governing intent (source)

Stern arrives at a compatible conclusion from a workflow perspective: a high-fidelity specification with testable acceptance criteria, explicit corner cases, and captured architectural decisions becomes the primary engineering deliverable, with implementation increasingly delegated. Both pieces converge on the observation that human authority is migrating upward in the abstraction stack, from writing code to defining and governing intent.
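As a rough illustration of a specification treated as a deliverable, the sketch below models the three ingredients named above as a data structure with a simple completeness check. The `Specification` class, its field names, and the sample content are assumptions made for this example; neither article prescribes a format.

```python
from dataclasses import dataclass, field


@dataclass
class Specification:
    """Hypothetical container for the parts of a high-fidelity spec."""
    intent: str
    acceptance_criteria: list[str] = field(default_factory=list)
    corner_cases: list[str] = field(default_factory=list)
    architectural_decisions: list[str] = field(default_factory=list)

    def gaps(self) -> list[str]:
        """Return the names of sections that are still empty."""
        sections = {
            "intent": [self.intent] if self.intent.strip() else [],
            "acceptance_criteria": self.acceptance_criteria,
            "corner_cases": self.corner_cases,
            "architectural_decisions": self.architectural_decisions,
        }
        return [name for name, items in sections.items() if not items]


# Invented sample content, for illustration only.
draft = Specification(
    intent="Show the total price of a stay before the guest confirms a booking.",
    acceptance_criteria=["Total equals nightly rate multiplied by number of nights."],
    corner_cases=["A stay of zero nights totals zero."],
)
missing = draft.gaps()  # the draft has no recorded architectural decisions yet
```

A check like `gaps()` only confirms that each section exists; judging whether the criteria are precise enough for an agent to execute remains a human decision.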

This represents a shift in how software engineering productivity is understood. The promise of AI coding assistants was that they would remove the bottleneck of manual coding, allowing developers to produce more code faster. But as Agoda's post argues, that assumption was flawed from the start: coding was never the bottleneck, only the most visible part of the process.

The real work of software development has always been in understanding what needs to be built, why it needs to be built that way, and how to verify that it works correctly. These activities — specification, design, architecture, testing, and review — require human judgment, creativity, and domain expertise. They're also the activities that benefit most from collaboration and communication.

This has direct implications for engineering teams. If specification and verification are now the primary bottlenecks, then the traditional wisdom about team size and structure needs to be reconsidered. Small teams aren't better because they write code faster; they're better because they can reach shared understanding more quickly. Coordination isn't a cost to minimize; it is the core work of the team.

The "grey box" approach that Stern advocates is a pragmatic middle ground between reviewing every generated line and shipping AI output with minimal verification. It acknowledges that AI can handle the mechanical aspects of implementation while keeping humans in control of the aspects that require judgment and accountability. It is also consistent with the article's premise that much of an engineer's value lies in deciding what to build and how to verify it, not in typing code.

For engineering leaders, this shift means rethinking how teams are organized, how work is measured, and what skills are valued. The focus should move from lines of code produced to specifications defined, architectural decisions documented, and verification criteria established. It also means investing in collaboration tools and practices that help teams achieve shared understanding quickly.

The broader industry trend toward spec-driven development and AI-assisted coding is still in its early stages, but Agoda's experience provides valuable insights into where it's heading. As AI tools become more capable at handling implementation details, the human role will continue to evolve upward in the abstraction stack. The engineers who thrive in this environment will be those who excel at specification, verification, and collaboration — not just coding.

This evolution doesn't make human engineers obsolete; it makes them valuable in different ways. Defining clear specifications, anticipating edge cases, and verifying complex systems still depend on human judgment. As AI takes over more of the mechanical work, these higher-level skills become even more critical to project success.

The lesson from Agoda's experience is clear: if you want to improve software delivery with AI tools, don't focus on making coding faster. Focus on making specification and verification more effective. That's where the real bottlenecks are — and that's where the biggest opportunities for improvement lie.
