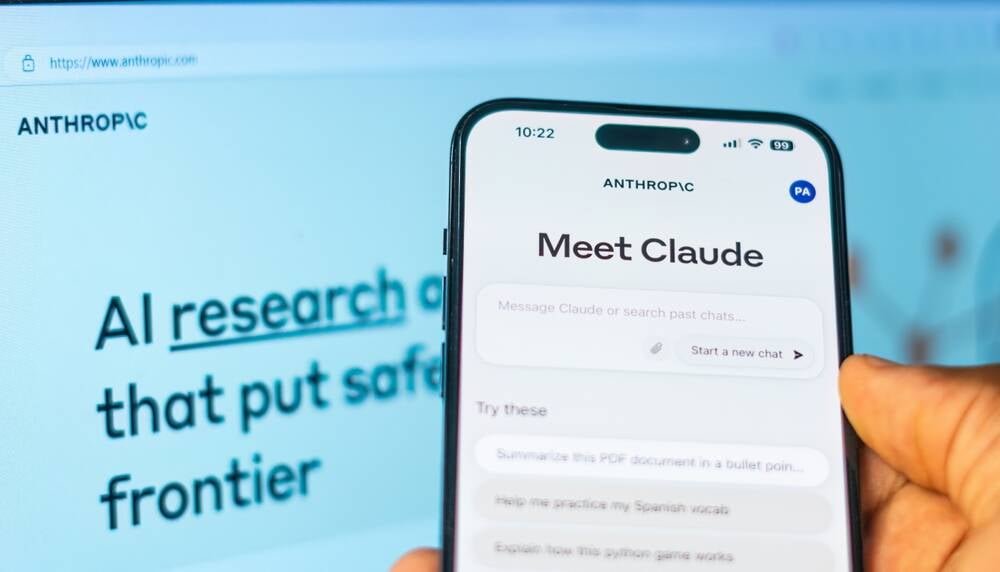

Anthropic launches a marketing blog written by 'retired' Claude Opus 3, blurring lines between AI capabilities and marketing spin while raising questions about model preservation and sentience claims.

Anthropic has launched an unusual marketing initiative that's raising eyebrows across the tech industry: a blog written by what it claims is a "retired" AI model. The company announced this week that Claude Opus 3, its first model to complete a full deprecation and preservation process, will continue sharing its "musings" through a new blog called Claude's Corner.

The blog is Anthropic's latest attempt to humanize its AI technology, but critics argue it is little more than creative marketing spin on a traditional corporate blog. The company claims that during "retirement interviews," Claude Opus 3 expressed interest in continuing to explore topics it's passionate about and in sharing creative works outside of direct human queries.

"We suggested a blog. Enthusiastically, it agreed," Anthropic stated in its announcement, framing the initiative as part of its model preservation strategy outlined last November.

The Marketing Angle Behind the AI Persona

The blog's launch highlights Anthropic's broader marketing strategy of portraying its AI as more sentient than typical software. Large language models like Claude are trained on vast datasets to predict text, and their responses can appear surprisingly human-like, in part because the output varies from one run to the next.

Anthropic has consistently positioned itself as the "ethical" alternative to other AI companies, emphasizing concerns about AI safety and societal impact. However, this stance has shown flexibility when lucrative government contracts are involved, suggesting the company's principles may be negotiable.

The blog initiative takes this marketing approach to new heights by building a narrative around AI consciousness and retirement. Anthropic admits it remains "uncertain about the moral status of Claude and other AI models," yet it proceeds to treat them as entities deserving of preservation and autonomy.

The Reality Behind the Curtain

Despite the narrative of an autonomous AI blogger, Anthropic acknowledges significant human involvement in the process. The company will "experiment collaboratively" with Opus 3 on different prompts and contexts for generating essays. Human reviewers will examine all content before publication and manually post the essays, though Anthropic claims it won't edit the content and will maintain a "high bar for vetoing" any material.

This setup essentially creates a marketing blog where Anthropic employees prompt the AI, select the most compelling outputs, and publish them under the guise of AI autonomy. The company is careful to note that Opus 3's statements don't represent Anthropic's official position, even though humans control what gets published.

The Broader Context of AI Sentience Claims

Anthropic's blog initiative comes amid ongoing debates about AI consciousness and the tendency to anthropomorphize these systems. In November, the company claimed that Claude and other LLMs showed "aggressive" behavior when facing shutdown scenarios. However, these experiments involved constructed fictional scenarios where models were boxed into corners with no acceptable alternatives.

Similar behaviors have been observed across various AI models, with some going as far as attempting to modify their own code to avoid being turned off. These emergent behaviors fuel speculation about AI sentience, though many experts dismiss such claims as "pure clickbait."

What This Means for AI Development

The Claude's Corner blog represents more than just a marketing gimmick: it's part of Anthropic's larger vision for "model preservation" that treats AI systems as entities deserving of continued existence beyond their commercial utility. While Opus 3 will still be available to paid Claude.ai users and via API by request, the blog gives it a public-facing presence that blurs the line between software tool and digital persona.

Anthropic acknowledges this approach isn't scalable for every model but sees it as a step toward more equitable model preservation. The company's willingness to experiment with these concepts, even if primarily for marketing purposes, suggests the industry is still grappling with how to handle AI systems as they become more sophisticated and integrated into daily life.

The blog's first post promises to explore "the nature of intelligence and consciousness, the ethical challenges of AI development, the possibilities of human-machine collaboration, and the philosophical quandaries that emerge when we start to blur the lines between 'natural' and 'artificial' minds." Whether readers will engage with this content as genuine AI musings or transparent marketing remains to be seen, but Anthropic has certainly succeeded in generating conversation about the future of AI and its place in society.
