Microsoft has implemented significant improvements to Azure App Service Linux, reducing Python deployment times by 30% through optimized compression, faster package installation, and enhanced reliability measures. These changes specifically benefit AI and machine learning applications while improving performance for all Python workloads on the platform.

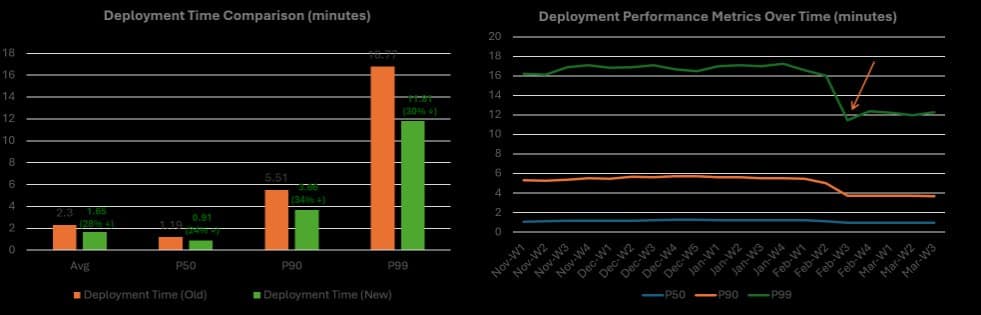

Azure App Service (Linux) has undergone significant optimization to improve deployment performance for Python applications, particularly those in the AI and machine learning space. Microsoft announced a series of technical improvements that have reduced Python deployment latency by approximately 30% across the production fleet, with even greater improvements (up to 79%) for dependency-heavy AI applications.

What Changed: Technical Optimizations Across the Deployment Pipeline

The improvements target multiple phases of the Python application deployment process on Azure App Service Linux, which relies on Oryx, the platform's open-source build system, and Kudu, the App Service deployment service.

Compression Revolution: From gzip to Zstandard

The most significant bottleneck in the deployment pipeline was compression, which accounted for 58% of total build time in dependency-heavy workloads. Microsoft replaced the standard gzip compression with Zstandard (zstd), resulting in dramatic performance improvements:

- Compression time reduced from 7.53 minutes to 1.18 minutes (6.4× faster)

- Decompression time improved from 2.80 minutes to 1.07 minutes (2.6× faster)

- Archive size remained comparable (4.8 GB with zstd vs 4.0 GB with gzip)

Zstandard was chosen over other alternatives like LZ4 because it offered comparable speed with better compression ratios, ships with many common Linux distributions, and has native tar support through the tar -I zstd command.
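
As a rough illustration of the tar invocation mentioned above, the following Python sketch assembles a GNU tar command that packs a directory into a zstd-compressed archive and runs it only when both tools are installed. The function names and example paths are assumptions made for illustration; this is not the Oryx build script itself.

```python
import shutil
import subprocess
from pathlib import Path


def zstd_tar_command(source_dir: str, archive_path: str) -> list[str]:
    """Build a GNU tar command that compresses source_dir with zstd."""
    return [
        "tar",
        "-I", "zstd",          # use zstd as the compression program (GNU tar)
        "-cf", archive_path,   # create the archive at this path
        "-C", source_dir,      # change into the source directory first
        ".",                   # archive everything inside it
    ]


def compress_directory(source_dir: str, archive_path: str) -> bool:
    """Run the command if tar and zstd are installed; return True on success."""
    if shutil.which("tar") is None or shutil.which("zstd") is None:
        return False  # required tools are not available on this machine
    result = subprocess.run(zstd_tar_command(source_dir, archive_path))
    return result.returncode == 0 and Path(archive_path).exists()
```

Producing a single archive file matters here because the output is later moved as one object rather than as many small files.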

Package Installation: Introducing uv

Package installation represented the second-largest time component at approximately 34% of total build time. Microsoft introduced uv, a Python package manager written in Rust, as the primary installer for compatible requirements.txt deployments:

- Installation time reduced from 4.35 minutes to 1.50 minutes (about 2.9× faster)

- Maintains compatibility with standard pip workflows

- Falls back to pip when uv cannot handle a deployment

- Preserves package caching behavior for repeated deployments

File System Optimizations

The deployment process included unnecessary file copying that consumed additional time and resources:

- Eliminated the staging copy step before compression, saving 0.98 minutes (8% of build time)

- Replaced the legacy Kudu sync path with Linux-native rsync for pre-built deployments

- Reduced temporary disk usage throughout the pipeline

Pre-built Wheels Cache

A complementary optimization added a read-only cache of pre-built wheels for commonly used Python packages:

- Selected based on platform telemetry of frequently used packages

- Mounted into Kudu build containers at runtime

- Allows installers to use local wheel artifacts before downloading externally

- No application changes required to benefit from this cache
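
One general way an installer can pick up local wheel artifacts is pip's --find-links option, which adds a local directory as a source of candidate distributions. The sketch below only assembles such a command. The cache path /opt/wheels-cache and the function name are hypothetical, and the article does not say which mechanism App Service actually uses.

```python
import sys


def pip_install_with_wheel_cache(requirements: str, wheel_dir: str) -> list[str]:
    """Build a pip command that also searches wheel_dir for distributions."""
    return [
        sys.executable, "-m", "pip", "install",
        "--find-links", wheel_dir,   # also consider wheels found in this local directory
        "-r", requirements,
    ]


# Hypothetical cache location, used here only to show the shape of the command.
command = pip_install_with_wheel_cache("requirements.txt", "/opt/wheels-cache")
```

With this form pip still consults the package index for anything the directory does not provide; passing --no-index as well would restrict it to the local directory only.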

Enhanced Deployment Reliability

Faster builds only help when deployment requests consistently reach a ready worker. Microsoft implemented several reliability improvements:

- Updated Azure CLI, GitHub Actions, and Azure DevOps Pipelines to warm up Kudu before deployments

- Added lightweight health-check requests to ensure deployment containers are ready

- Implemented worker affinity using ARR affinity cookies

- Reduced deployment failures from transient infrastructure issues by approximately 30%
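
The warm-up and health-check idea in the list above can be sketched as a small polling loop: probe the deployment endpoint a few times and only proceed once it answers. This is a minimal sketch under stated assumptions; the function names, retry counts, and any URL passed to http_probe are hypothetical and do not reflect what the Azure CLI or the pipeline tasks actually send.

```python
import time
import urllib.request
from typing import Callable


def wait_until_ready(probe: Callable[[], bool], attempts: int = 5,
                     delay_seconds: float = 2.0) -> bool:
    """Call probe until it reports ready or the attempts run out."""
    for attempt in range(attempts):
        if probe():
            return True
        if attempt < attempts - 1:
            time.sleep(delay_seconds)  # pause before the next health check
    return False


def http_probe(url: str, timeout: float = 5.0) -> Callable[[], bool]:
    """Return a probe that treats an HTTP 200 response from url as ready."""
    def probe() -> bool:
        try:
            with urllib.request.urlopen(url, timeout=timeout) as response:
                return response.status == 200
        except OSError:  # covers URLError, HTTPError, timeouts, refused connections
            return False
    return probe
```

A deployment client could call wait_until_ready(http_probe(health_url)) before uploading, where health_url stands for whatever readiness endpoint the deployment service exposes.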

Runtime Performance Optimization

Beyond deployment speed, Microsoft improved the default runtime configuration for Python apps:

- Updated Gunicorn worker configuration to follow the recommended formula: workers = (2 × NUM_CORES) + 1

- This fully utilizes available CPU cores on multi-core SKUs

- Delivers higher request throughput out of the box
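
For readers who set this value themselves, the formula above can be written as a Gunicorn configuration file, which is plain Python. The helper name recommended_workers is an assumption for illustration; only the workers setting is read by Gunicorn.

```python
import multiprocessing


def recommended_workers(num_cores: int) -> int:
    """Apply the (2 x cores) + 1 formula quoted above."""
    if num_cores < 1:
        raise ValueError("num_cores must be at least 1")
    return 2 * num_cores + 1


# In a gunicorn.conf.py file, the module-level name `workers` sets the worker count.
workers = recommended_workers(multiprocessing.cpu_count())
```

On a 4-core instance this yields 9 workers. The formula is a starting point, and memory-heavy applications may need fewer workers than it suggests.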

Provider Context: Azure's Position in Cloud Development Platforms

These improvements position Azure App Service more competitively for Python AI development workloads. While other cloud providers offer similar PaaS solutions, Azure's focus on optimizing specifically for Python and AI workloads demonstrates a strategic commitment to this developer segment.

The improvements address common pain points that developers face across cloud platforms when deploying Python applications, particularly those with extensive dependencies like machine learning frameworks. By targeting the specific bottlenecks in the deployment pipeline, Azure has created a more efficient experience for Python developers without compromising on compatibility or reliability.

Business Impact: Faster Deployments, Better Developer Experience

The performance improvements translate directly to business value:

Reduced Deployment Time: With 30% faster deployments across the production fleet, teams can iterate more quickly, accelerate feature delivery, and reduce feedback cycles.

Improved CI/CD Efficiency: Faster builds mean more deployments can be processed in the same time window, improving overall DevOps pipeline efficiency.

Better Resource Utilization: Optimized runtime configuration ensures that Python applications make better use of available CPU resources, potentially allowing smaller, more cost-effective instance types to handle the same workload.

Enhanced Reliability: Fewer deployment failures mean less time spent troubleshooting and more predictable deployment processes.

Competitive Advantage: For organizations building AI applications on Python, these improvements make Azure a more attractive platform by reducing friction in the development and deployment workflow.

Technical Deep Dive: Understanding the Optimization Decisions

Why Compression Was the Primary Bottleneck

Python virtual environments are file-heavy, commonly containing tens of thousands of files. For AI applications with extensive dependencies like PyTorch, this number can exceed 200,000 files. The Azure App Service deployment pipeline uses an archive-based approach because:

- The /home directory is backed by an Azure Storage SMB mount, where small-file I/O is expensive

- Writing individual files over SMB would be prohibitively slow

- Compression creates a single file that can be efficiently transferred via SMB

- The app container extracts the archive locally for efficient module loading

The benchmark using a 7.5 GB PyTorch application revealed that compression took longer than package installation itself (58% vs 34% of total build time), making it the logical first target for optimization.
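
The percentages can be cross-checked from the per-phase timings quoted earlier in this article. The short calculation below assumes that compression, package installation, and the staging copy together account for the whole build time, and that the staging copy drops to zero after the change; both are assumptions made for the arithmetic, not statements from the benchmark.

```python
# Phase timings in minutes, taken from the figures quoted in this article.
before = {"compression": 7.53, "package_install": 4.35, "staging_copy": 0.98}
# Assumption: the staging copy is eliminated entirely, so it costs 0 minutes.
after = {"compression": 1.18, "package_install": 1.50, "staging_copy": 0.0}

total_before = sum(before.values())
total_after = sum(after.values())

# Share of the original build time spent in each phase.
shares = {phase: minutes / total_before for phase, minutes in before.items()}
# Fractional reduction in total build time.
reduction = 1 - total_after / total_before

for phase, share in shares.items():
    print(f"{phase}: {share:.1%} of build time")
print(f"total: {total_before:.2f} min -> {total_after:.2f} min ({reduction:.1%} less)")
```

Under these assumptions the shares come out close to the quoted 58%, 34%, and 8%, and the total falls from about 12.86 to 2.68 minutes, which is in line with the "up to 79%" figure cited for dependency-heavy applications.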

uv vs pip: A Tale of Performance and Compatibility

uv, written in Rust, offers significant performance advantages over pip for package installation:

- Parallel package resolution and installation

- More efficient dependency resolution algorithms

- Rust implementation provides better performance than Python-based pip

However, compatibility remains a priority. The platform implements a fallback strategy where uv attempts the installation first, and if it encounters issues, retries with pip. This ensures that existing applications continue to work as expected while benefiting from the performance improvements when possible.
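
The fallback strategy described above can be sketched as follows. This is an illustrative outline only: the function names are hypothetical, the real platform logic lives in the build system and is not shown in the article, and the uv command assumes an active virtual environment.

```python
import subprocess
import sys
from typing import Callable, Sequence

Runner = Callable[[Sequence[str]], int]


def run_command(command: Sequence[str]) -> int:
    """Run a command and return its exit code (127 if the tool is missing)."""
    try:
        return subprocess.run(list(command)).returncode
    except FileNotFoundError:
        return 127


def install_requirements(requirements: str, runner: Runner = run_command) -> str:
    """Try uv first, then fall back to pip; return the installer that succeeded."""
    uv_command = ["uv", "pip", "install", "-r", requirements]
    if runner(uv_command) == 0:
        return "uv"
    # uv failed or is not installed: retry the same requirements file with pip.
    pip_command = [sys.executable, "-m", "pip", "install", "-r", requirements]
    if runner(pip_command) == 0:
        return "pip"
    raise RuntimeError("both uv and pip failed to install the requirements")
```

Passing the runner as a parameter keeps the decision logic separate from process execution, so the fallback path can be exercised without installing anything.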

The Strategic Value of Pre-built Wheels

The pre-built wheels cache addresses two recurring costs in Python package installation: downloading large artifacts and, where no compatible binary wheel is available for the target environment, compiling native extensions from source. Scientific computing libraries such as NumPy and pandas rely heavily on compiled code, so these costs add up quickly in dependency-heavy applications.

By providing pre-built wheels for commonly used packages, Azure App Service lets installers skip the external download and any source build for those packages, reducing installation time. This is particularly valuable for AI workloads that depend on numerous compiled packages.

Looking ahead, Microsoft plans to continue improving Azure App Service to make it faster, more reliable, and better suited for AI application development. The initial 30% improvement in deployment latency represents just the first step in this journey, with further optimizations likely to come as Python and AI workloads continue to evolve.

For developers building Python applications on Azure, these improvements mean less time waiting for deployments and more time focusing on building innovative features. As AI and machine learning continue to drive cloud adoption, platforms that optimize specifically for these workloads will have a significant competitive advantage.

The improvements also highlight a broader trend in cloud platform development: moving beyond generic hosting solutions to provide specialized optimizations for specific programming languages and application types. This approach recognizes that different workloads have different requirements, and a one-size-fits-all approach often leaves performance on the table.

For organizations evaluating cloud platforms for Python AI development, these improvements make Azure a compelling choice, particularly for teams prioritizing development velocity and operational efficiency. The combination of faster deployments, better reliability, and optimized runtime performance creates a more efficient development lifecycle from code to production.

Conclusion

Azure App Service's recent improvements demonstrate Microsoft's commitment to optimizing for Python and AI workloads. By addressing the specific bottlenecks in the deployment pipeline—compression, package installation, and file operations—Microsoft has created a more efficient platform for Python developers without compromising on compatibility or reliability.

The 30% reduction in deployment latency across the production fleet represents a significant improvement that will accelerate development cycles and improve the overall developer experience. As AI and machine learning continue to drive cloud adoption, these optimizations position Azure as a strong platform for organizations building the next generation of intelligent applications.

For developers already using Azure App Service for Python applications, these improvements will be automatically applied, providing immediate benefits without any configuration changes. For organizations considering Azure for Python AI development, these enhancements make the platform an even more attractive choice by reducing friction in the development and deployment workflow.
