Fastify can process roughly 80 000 requests per second while Express peaks at 25 000, yet Express remains the most downloaded Node.js framework. This gap reflects a trade‑off between raw throughput and ecosystem breadth, and it influences how developers design scalable APIs, choose consistency models, and structure plugin ecosystems.

Fastify delivers roughly three to five times the request throughput of Express in published benchmarks, but Express still leads npm weekly downloads by a factor of about fourteen. The discrepancy is not a marketing artifact; it stems from architectural choices that affect scalability, consistency, and API design patterns. Understanding these differences helps teams decide which framework aligns with their operational constraints and long‑term maintenance goals.

Problem: Performance vs Ecosystem Maturity

Node.js developers often face a binary decision when selecting a web framework. On one side, Express provides a familiar API surface, a massive middleware catalog, and a decade of community support. On the other side, Fastify offers built‑in validation, optimized serialization, and a plugin system that reduces per‑request overhead. The choice becomes more pronounced as services scale beyond a few thousand concurrent connections.

The performance gap is visible in benchmark suites such as the one published by PkgPulse. Fastify reaches 70 000–80 000 requests per second on a single core, whereas Express settles between 20 000 and 30 000. In extreme cases, Fastify exceeds 114 000 requests per second, about 5.6 times the Express figure. These numbers are reproducible across different hardware configurations and HTTP load generators.
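As a quick sanity check on the quoted ranges, the ratios can be worked out directly. The sketch below uses only the figures from the paragraph above; the helper name `speedup` is made up for illustration.

```typescript
// Figures quoted in the text above (requests per second).
const fastifyTypical = { low: 70000, high: 80000 };
const expressTypical = { low: 20000, high: 30000 };

// Ratio of Fastify's throughput to Express's for a given pair of figures.
function speedup(fastifyRps: number, expressRps: number): number {
  return fastifyRps / expressRps;
}

// Most and least favourable pairings of the two typical ranges.
const bestCase = speedup(fastifyTypical.high, expressTypical.low);  // 4
const worstCase = speedup(fastifyTypical.low, expressTypical.high); // about 2.33

// A 114 000 req/s peak at a 5.6x multiplier implies this Express baseline.
const impliedExpressRps = 114000 / 5.6; // about 20 357

console.log(bestCase.toFixed(2), worstCase.toFixed(2), Math.round(impliedExpressRps));
```

The implied Express baseline of about 20 000 requests per second sits at the low end of the quoted 20 000–30 000 range, so the extreme case is consistent with the typical figures.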

Solution Approach: Architectural Drivers

Fastify’s speed advantage originates from four concrete design decisions.

Radix‑Tree Routing

Fastify uses a radix‑tree implementation for route matching, which avoids the per‑route regular‑expression evaluations required by Express’s path‑to‑regexp approach. The radix tree stores static segments as nodes and captures dynamic parameters in a single lookup, making route matching roughly three times faster.
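To make the lookup idea concrete, here is a minimal sketch of tree‑based routing. It is a simplified segment trie, not Fastify's actual router: a real radix tree also compresses shared string prefixes, and this toy neither backtracks nor supports wildcards. All class and method names are hypothetical.

```typescript
// Toy segment-trie router: a simplified stand-in for a radix tree.
type Params = Record<string, string>;
type Handler = (params: Params) => string;

class RouteNode {
  staticChildren = new Map<string, RouteNode>();
  paramChild: RouteNode | null = null;
  paramName = ""; // name of the parameter captured by paramChild
  handler: Handler | null = null;
}

class ToyRouter {
  private root = new RouteNode();

  // Register a path such as "/users/:id".
  add(path: string, handler: Handler): void {
    let node = this.root;
    for (const segment of path.split("/").filter(Boolean)) {
      if (segment.startsWith(":")) {
        if (node.paramChild === null) {
          node.paramChild = new RouteNode();
          node.paramName = segment.slice(1); // first registered name wins
        }
        node = node.paramChild;
      } else {
        let next = node.staticChildren.get(segment);
        if (next === undefined) {
          next = new RouteNode();
          node.staticChildren.set(segment, next);
        }
        node = next;
      }
    }
    node.handler = handler;
  }

  // Walk the tree once, collecting parameters; no regular expressions involved.
  find(path: string): { handler: Handler; params: Params } | null {
    let node = this.root;
    const params: Params = {};
    for (const segment of path.split("/").filter(Boolean)) {
      const staticNext = node.staticChildren.get(segment);
      if (staticNext !== undefined) {
        node = staticNext; // static segments take priority
      } else if (node.paramChild !== null) {
        params[node.paramName] = segment;
        node = node.paramChild;
      } else {
        return null;
      }
    }
    return node.handler ? { handler: node.handler, params } : null;
  }
}

const router = new ToyRouter();
router.add("/users/:id", (p) => `user ${p.id}`);
router.add("/users/me", () => "current user");

const match = router.find("/users/42");
if (match) console.log(match.handler(match.params)); // prints "user 42"
```

The cost of a lookup depends on the number of path segments rather than on the number of registered routes, which is the property the paragraph above attributes to tree‑based matching.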

Schema‑Based Serialization

JSON Schema definitions are compiled into validation and serialization functions at startup. This replaces the generic JSON.stringify call and the hand‑wired validation libraries that a typical Express application runs on every request. The compiled functions produce JSON output directly from the schema, reducing the work done for each response.
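The "compile once, serialize many" idea can be illustrated with a toy serializer. This is only a sketch under simplifying assumptions (flat objects, three primitive types); it is not the API of Fastify or of its serialization library, and `compileSerializer` is a hypothetical name.

```typescript
// Toy "compile once, serialize many" sketch for flat objects.
type FieldType = "string" | "number" | "boolean";
type FlatSchema = Record<string, FieldType>;
type Serializer = (value: Record<string, unknown>) => string;

// Build a serializer specialised to one object shape.
function compileSerializer(schema: FlatSchema): Serializer {
  // Done once at startup: precompute quoted keys and pick a writer per field.
  const fields = Object.entries(schema).map(([key, type]) => ({
    key,
    prefix: JSON.stringify(key) + ":",
    write:
      type === "string"
        ? (v: unknown) => JSON.stringify(String(v))
        : type === "number"
        ? (v: unknown) => (Number.isFinite(Number(v)) ? String(Number(v)) : "null")
        : (v: unknown) => (v ? "true" : "false"),
  }));

  // Done per response: visit only the declared fields, in declaration order.
  return (value) =>
    "{" + fields.map((f) => f.prefix + f.write(value[f.key])).join(",") + "}";
}

const serializeUser = compileSerializer({ id: "number", name: "string", admin: "boolean" });
const body = serializeUser({ id: 7, name: "Ada", admin: false, password: "secret" });
console.log(body); // prints {"id":7,"name":"Ada","admin":false}
```

Because the serializer knows the shape in advance, it never inspects the object's keys at request time, and in this toy any property not declared in the schema (here `password`) is simply left out of the output.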

Worker‑Thread Logging

Fastify bundles Pino, a low‑overhead logger whose transports run in dedicated worker threads. Moving log processing and I/O off the main thread keeps the event loop free during log writes, whereas an Express application that relies on synchronous console logging or middleware‑based logging can lose throughput under load.

Plugin Encapsulation

Fastify treats plugins as isolated units that register routes, decorators, and hooks once. Express, by contrast, walks a global middleware chain for each request, which adds overhead proportional to the number of middleware functions. The encapsulation model also simplifies dependency injection and reduces memory fragmentation.
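One way to picture encapsulation is as a tree of scopes in which each plugin gets a child scope that inherits from its parent. The sketch below models that with prototype inheritance; it is a toy with hypothetical names (`Scope`, `paymentClient`, `pdfRenderer`), not Fastify's API or internals.

```typescript
// Toy model of plugin encapsulation using prototype inheritance.
type Decorators = Record<string, unknown>;

class Scope {
  readonly decorators: Decorators;

  constructor(parent?: Scope) {
    // A child scope reads through to its parent's decorators, but anything
    // it adds stays invisible to the parent and to sibling scopes.
    this.decorators = Object.create(parent ? parent.decorators : null);
  }

  decorate(name: string, value: unknown): void {
    this.decorators[name] = value;
  }

  has(name: string): boolean {
    return name in this.decorators;
  }

  // Run a plugin once, at registration time, inside a fresh child scope.
  register(plugin: (scope: Scope) => void): Scope {
    const child = new Scope(this);
    plugin(child);
    return child;
  }
}

const root = new Scope();
root.decorate("config", { region: "eu" });

const billing = root.register((scope) => scope.decorate("paymentClient", {}));
const reports = root.register((scope) => scope.decorate("pdfRenderer", {}));

console.log(billing.has("config"));      // true: inherited from root
console.log(billing.has("pdfRenderer")); // false: belongs to a sibling
console.log(root.has("paymentClient"));  // false: children do not leak upward
```

The point of the model is that wiring happens once at registration, and each scope only ever sees what was registered on its own branch of the tree.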

Trade‑offs: When the Gap Matters

For CRUD APIs handling fewer than 1 000 requests per second, both frameworks comfortably meet latency targets. The performance difference becomes material in scenarios that stress the event loop or require low per‑request cost.

- High concurrent connections: Real‑time services, webhook receivers, and IoT backends often see thousands of simultaneous connections. Fastify’s lower per‑request CPU usage translates into fewer required instances.

- Latency‑sensitive APIs: Financial trading platforms, gaming matchmaking services, and edge functions benefit from reduced request latency, which can be measured in microseconds.

- Cloud cost optimization: Faster response times allow a service to run on fewer virtual machines or serverless invocations, directly lowering operational spend.

In these cases, the extra engineering effort to adopt Fastify’s schema‑first model can be justified by the reduction in infrastructure overhead.
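A back‑of‑the‑envelope calculation shows how per‑instance throughput feeds into instance count. The target load below is a hypothetical number chosen for illustration, and the per‑instance figures are the benchmark numbers quoted earlier; real handlers that touch databases or external services will sustain far less than benchmark throughput, so treat the result as an upper bound on the difference.

```typescript
// Instances needed to serve a target load, given per-instance throughput.
function instancesNeeded(targetRps: number, perInstanceRps: number): number {
  return Math.ceil(targetRps / perInstanceRps);
}

const targetRps = 100000;         // hypothetical peak load, not from the benchmarks
const expressPerInstance = 25000; // benchmark figure quoted in the introduction
const fastifyPerInstance = 80000; // benchmark figure quoted in the introduction

const expressInstances = instancesNeeded(targetRps, expressPerInstance);
const fastifyInstances = instancesNeeded(targetRps, fastifyPerInstance);

console.log(expressInstances, fastifyInstances); // prints 4 2
```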

Developer Experience: Validation, Logging, and TypeScript

Express leaves validation, logging, and serialization to the developer. Typical patterns involve installing express-validator, pino, and manually wiring JSON serialization. TypeScript support relies on DefinitelyTyped definitions, which do not capture route‑specific types automatically.

Fastify integrates validation through JSON Schema, logging via Pino, and serialization through compiled schema functions. Combined with a type provider, route schemas can also drive TypeScript type inference for request and response objects, providing compile‑time guarantees that Express cannot offer without additional tooling.

The trade‑off is verbosity. A Fastify route definition includes a schema object that describes request parameters, response payloads, and status codes. This upfront cost yields automatic validation, structured logging, and API documentation generation.
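To give a sense of the verbosity involved, here is what such a schema object might look like for a hypothetical `GET /users/:id` route. The route and field names are made up for illustration; in Fastify an object of this shape is passed as the `schema` option of a route, with `params` describing the URL parameters and `response` keyed by status code.

```typescript
// Hypothetical schema for a GET /users/:id route; field names are illustrative.
const getUserSchema = {
  // Validated before the handler runs.
  params: {
    type: "object",
    required: ["id"],
    properties: { id: { type: "integer", minimum: 1 } },
  },
  // Used to serialize the reply, one entry per status code.
  response: {
    200: {
      type: "object",
      required: ["id", "name"],
      properties: {
        id: { type: "integer" },
        name: { type: "string" },
      },
    },
    404: {
      type: "object",
      properties: { message: { type: "string" } },
    },
  },
} as const;

console.log(Object.keys(getUserSchema.response)); // prints [ '200', '404' ]
```

The same object serves three purposes at once: request validation, response serialization, and input for documentation generators.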

Ecosystem: Plugin Availability and Compatibility

Express’s ecosystem includes thousands of middleware packages. Auth solutions such as Passport.js, validation libraries like Joi, and templating engines are abundant. The framework’s minimal core means developers can compose any combination of tools.

Fastify’s ecosystem is smaller but growing. Core plugins such as @fastify/auth, @fastify/jwt, @fastify/cors, and @fastify/rate-limit cover most common needs. The @fastify/express compatibility layer allows existing Express middleware to be mounted directly, easing migration.

Both frameworks support meta‑frameworks. NestJS defaults to Express but offers a Fastify adapter, enabling developers to switch the underlying HTTP layer without changing the application structure.

Migration Path: Incremental Adoption

Teams that already use Express should avoid wholesale rewrites. The pragmatic migration strategy is:

- Start new services in Fastify: Leverage its performance benefits from day one.

- Mount Express middleware via @fastify/express: This preserves existing code while exposing Fastify’s core capabilities.

- Migrate high‑traffic routes first: Move endpoints that handle the largest request volume to Fastify to capture the biggest throughput gains.

- Introduce schemas gradually: Begin with response schemas to gain serialization speed, then add request validation as confidence builds.

The compatibility layer reduces the learning curve because developers can reuse familiar middleware patterns while still benefiting from Fastify’s internal optimizations.

When to Choose Express

- The team already knows Express and can deliver features faster.

- The project is a prototype or MVP where simplicity outweighs raw performance.

- Specific middleware exists only for Express, such as certain legacy authentication strategies.

- Performance is not a bottleneck, and the cost of additional instances is acceptable.

- The team uses NestJS with the Express adapter, which is the default and the more thoroughly documented option.

When to Choose Fastify

- Throughput requirements exceed 30 000 requests per second.

- Built‑in validation and auto‑generated API documentation are required.

- TypeScript is a primary language, and first‑class type inference from schemas is desired.

- Observability demands structured logging at high volume.

- Cloud cost reduction is a priority, and fewer server instances translate to lower spend.

- New projects start without legacy Express constraints.

Verdict: Adoption Trends and Future Outlook

Express remains the most downloaded Node.js framework, with 76.6 million weekly downloads versus Fastify’s 5.4 million. However, Fastify’s download growth rate outpaces Express’s, and new projects increasingly adopt Fastify as the default HTTP layer. The trend is evident in the rise of schema‑first APIs and the demand for low‑latency microservices.

Both frameworks satisfy different architectural constraints. Express excels when ecosystem breadth and developer familiarity are paramount. Fastify shines when raw performance, built‑in validation, and TypeScript ergonomics are critical.

For teams evaluating a switch, the PkgPulse comparison page provides real‑time metrics on health scores, bundle sizes, and download trends. The live comparison is available at https://pkgpulse.com/compare/express-vs-fastify.

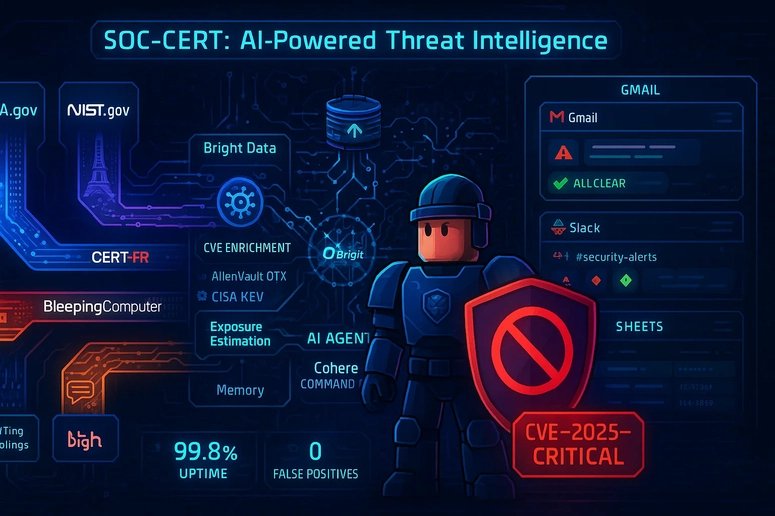

The Bright Data n8n Challenge illustrates how Fastify’s performance can be leveraged in AI‑driven workflows. The submission uses Fastify to serve webhook endpoints that process data from Bright Data’s API, demonstrating the framework’s suitability for high‑throughput, low‑latency pipelines. More details can be found at https://brightdata.com/ai-agents-challenge.

In summary, the decision hinges on whether the cost of additional infrastructure outweighs the engineering effort required to adopt Fastify’s schema‑centric model. Teams that anticipate scaling beyond a few thousand concurrent connections should weigh the performance benefits against the learning curve. Those with modest traffic and a strong preference for existing middleware can continue with Express without sacrificing reliability.
