A self‑orchestrating swarm of LLM‑driven agents uncovered more than twenty new CVEs, including two remote, unauthenticated out‑of‑bounds writes in the Linux kernel’s ksmbd service. The article explains the motivation, the harness architecture, the “drunk” activation‑steering experiment, key findings, and future research directions for AI‑assisted vulnerability hunting.

Getting LLMs Drunk to Find Remote Linux Kernel OOB Writes (and More)

TL;DR – A heavily automated team of LLM‑powered agents discovered over twenty CVEs in the past few months, most notably CVE‑2026‑31432 and CVE‑2026‑31433, two remote, unauthenticated out‑of‑bounds writes in the Linux kernel’s ksmbd implementation. The post walks through the background, the harness that made it possible, the surprising “drunk” activation‑steering experiment, and the lessons learned for the future of AI‑driven vulnerability research.

Background

The idea of using large language models (LLMs) for vulnerability research has been floating around since DARPA’s AIxCC competition and XBOW demonstrated that models could assist in fuzzing and symbolic execution. Early attempts, however, were hampered by three practical problems: the models needed massive prompt engineering, tool‑use was primitive, and the context windows were too small to hold both documentation and source code for meaningful analysis.

A turning point arrived in the summer of 2025 when Rich Mirch disclosed a twelve‑year‑old local privilege escalation in sudo (CVE‑2025‑32462). The bug hinged on a mismatch between the --host flag’s documented behavior and its actual implementation. Although the flaw was not discovered by an LLM, it sparked the question: could an LLM find simple documentation‑versus‑code mismatches across a wide code base? The answer turned out to be a resounding yes.

Three Research Questions

- Docs ↔ Code Mismatches – Can an LLM reliably spot inconsistencies between official documentation and the real code?

- General Vulnerabilities – With newer, more capable models, can the same pipeline discover any class of bug, not just mismatches?

- Creative Leaps – If we push the model into a “drunk” or otherwise altered mental state, can it propose entirely novel bug classes?

Key Findings

The harness, once fully operational, produced a list of 30+ findings, of which more than twenty have been assigned CVE numbers as of April 2026. Highlights include:

| Target | Issue | CVE |
|---|---|---|
| Linux kernel (ksmbd) | Compound READ + QUERY_INFO(Security) requests trigger a remote, unauthenticated OOB write | CVE‑2026‑31432 |
| Linux kernel (ksmbd) | Compound QUERY_DIRECTORY + QUERY_INFO(FILE_ALL_INFORMATION) requests trigger a remote, unauthenticated OOB write | CVE‑2026‑31433 |
| Docker | Crafted API requests bypass body‑inspecting authz plugins | CVE‑2026‑34040 |
| CUPS | Unauthenticated Print-Job leads to remote code execution as lp | CVE‑2026‑34980 |
| CUPS | Path‑traversal in RSS notify‑recipient‑uri allows arbitrary file writes | CVE‑2026‑34990 |
| HAProxy | Single‑packet QUIC loop DoS | CVE‑2026‑26080 |
| Caddy | Case‑sensitive host matching bypasses routing rules | CVE‑2026‑27588 |
| Traefik | readTimeout bypass in PostgreSQL STARTTLS handling leads to connection‑stalling DoS | CVE‑2026‑25949 |
| udisks | Missing authorization on LUKS header restore enables irreversible data loss | CVE‑2026‑26103 |
| systemd‑machined | Privilege escalation via RegisterMachine D‑Bus path | CVE‑2026‑4105 |
| etcd | gRPC API auth bypasses allow unauthenticated member list and lease operations | CVE‑2026‑33413 |
| Squid | Heap use‑after‑free in ICP handling crashes the proxy | CVE‑2026‑33526 |
| nginx | CRLF injection in ngx_mail_smtp_module permits arbitrary SMTP headers | CVE‑2026‑28753 |
| Firewalld | Unauthenticated runtime D‑Bus setters modify firewall state | CVE‑2026‑4948 |
| dnsmasq | Crafted BOOTREPLY triggers OOB write and crash when --dhcp-split-relay is enabled | CVE‑2026‑6507 |

ksmbd Overflows

Both ksmbd bugs are classic out‑of‑bounds writes that arise when a client bundles multiple SMB operations into a single request. The first operation consumes almost the entire kernel reply buffer, and the second operation appends variable‑length metadata without proper bounds checks. In a lab where hardening features such as kernel.kptr_restrict were disabled, the overflow could corrupt adjacent struct file objects, leading to a potential remote code execution primitive. The discovery was made by a “drunk” Qwen 3.5 27B derivative after a few days of cycling over ksmbd with an automated verifier. A more powerful model (GPT‑5.3‑Codex) reproduced the result in a fraction of the time.
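The failure pattern can be illustrated with a toy model. The sketch below is not ksmbd code: the buffer size, class, and function names are assumptions chosen only to show how a second compound operation can write past a fixed reply buffer when its append is not checked against the space the first operation left behind.

```python
from dataclasses import dataclass

REPLY_BUF_SIZE = 4096  # hypothetical reply buffer size, not taken from ksmbd


@dataclass
class ReplyBuffer:
    """Toy model of one reply buffer shared by all operations in a compound request."""
    capacity: int = REPLY_BUF_SIZE
    used: int = 0
    overflowed_by: int = 0  # bytes that would have landed past the end

    def remaining(self) -> int:
        return max(self.capacity - self.used, 0)

    def append_unchecked(self, nbytes: int) -> None:
        """Buggy pattern: write without consulting remaining()."""
        self.used += nbytes
        self.overflowed_by = max(self.used - self.capacity, 0)

    def append_checked(self, nbytes: int) -> bool:
        """Fixed pattern: refuse the write when it does not fit."""
        if nbytes > self.remaining():
            return False
        self.used += nbytes
        return True


def run_compound(sizes, checked):
    """Apply each operation's response size to a single shared buffer."""
    buf = ReplyBuffer()
    results = []
    for nbytes in sizes:
        if checked:
            results.append(buf.append_checked(nbytes))
        else:
            buf.append_unchecked(nbytes)
            results.append(True)
    return buf, results
```

Calling `run_compound([4000, 200], checked=False)` models a first operation that nearly fills the buffer followed by a variable-length second response; the same call with `checked=True` rejects the second append instead of overrunning the buffer.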

CUPS Chain‑of‑Trust Exploit

The CUPS findings illustrate how a small LLM can be coaxed into orchestrating a multi‑stage attack: first, it establishes an unprivileged foothold via a crafted Print‑Job request, then it leverages a secondary vulnerability to gain root‑level file write access. The entire chain was assembled by separate agents that each handled a sub‑task (network foothold, privilege escalation, file write), demonstrating the power of task decomposition.

Docs ↔ Code Mismatches

A substantial subset of the CVEs falls under the original motivation: mismatches between documentation and implementation. Examples include Docker’s authz plugin documentation, which claims the plugin sees the raw request body, yet a crafted request can bypass the body entirely; Caddy’s case‑insensitive host matching that fails under certain path‑list configurations; and udisks methods that omit explicit Polkit checks despite the policy file indicating they should be protected.
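One way to operationalize a docs‑versus‑code check is to turn a documented guarantee into an executable invariant and test the implementation against it. The sketch below is a toy under stated assumptions: `match_host` is a hypothetical, deliberately buggy matcher (not Caddy’s code), and the invariant is an assumed documentation claim that host matching ignores letter case.

```python
def match_host(request_host: str, configured_hosts: list[str]) -> bool:
    """Hypothetical matcher with a deliberate bug: the single-host path
    compares case-insensitively, but the multi-host path does not."""
    if len(configured_hosts) == 1:
        return request_host.lower() == configured_hosts[0].lower()
    return request_host in configured_hosts  # bug: case-sensitive lookup


def case_variants(host: str) -> list[str]:
    """Spellings of the same host that differ only in letter case."""
    return [host.lower(), host.upper(), host.title()]


def find_mismatches(configured_hosts: list[str]) -> list[str]:
    """Return request hosts that violate the assumed documented invariant:
    a configured host matches regardless of letter case."""
    violations = []
    for host in configured_hosts:
        for variant in case_variants(host):
            if not match_host(variant, configured_hosts):
                violations.append(variant)
    return violations
```

With a single configured host the invariant holds, while a two‑host configuration surfaces the upper‑case and title‑case variants as violations. Each violation is a candidate hypothesis that still needs a PoC against the real target.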

Architecture of the Harness

The system evolved into a multi‑agent pipeline that can be visualized as follows:

- Target Seeder – Generates a ranked list of potential attack surfaces based on prevalence and exploitability heuristics.

- Hypothesis Generators – Consume documentation, source code, and policy invariants to propose promising bug hypotheses.

- Hunters – Execute the hypotheses inside isolated VMs, iteratively refining PoCs and recording outcomes.

- Report Writers – Package successful PoCs into maintainer‑facing reports.

- External Grader – A separate LLM evaluates each finding for severity, novelty, and sanity, guarding against reward‑hacking.

- Conductor – Monitors progress, redirects agents away from dead ends, and logs systemic blockers for continuous improvement.
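The stages above can be sketched as a single control loop. This is a minimal illustration, not the author’s harness: every name (`Hypothesis`, `Finding`, `run_pipeline`, the callback parameters, and the failure budget) is hypothetical, and the LLM‑backed components are passed in as plain callables so the flow can be read and tested without any model.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Optional


@dataclass
class Hypothesis:
    target: str
    claim: str


@dataclass
class Finding:
    hypothesis: Hypothesis
    poc: str
    report: str = ""
    accepted: bool = False


def run_pipeline(
    seed: Callable[[], Iterable[str]],                 # Target Seeder
    generate: Callable[[str], Iterable[Hypothesis]],   # Hypothesis Generator
    hunt: Callable[[Hypothesis], Optional[str]],       # Hunter: returns a PoC or None
    write_report: Callable[[Finding], str],            # Report Writer
    grade: Callable[[Finding], bool],                  # External Grader
    max_failures_per_target: int = 3,                  # Conductor's dead-end budget
) -> list[Finding]:
    accepted = []
    for target in seed():
        failures = 0
        for hyp in generate(target):
            # Conductor role: move on once a target has hit too many dead ends.
            if failures >= max_failures_per_target:
                break
            poc = hunt(hyp)
            if poc is None:
                failures += 1
                continue
            finding = Finding(hyp, poc)
            finding.report = write_report(finding)
            # The grader is separate from the hunter so that a hunter
            # cannot approve its own finding.
            finding.accepted = grade(finding)
            if finding.accepted:
                accepted.append(finding)
    return accepted
```

Keeping the grader as an independent callable mirrors the article’s point that grading stays outside the hunting loop to guard against reward‑hacking.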

When smaller OSS models were used, each component required extensive scaffolding: predefined CodeQL queries, hardened QEMU wrappers, and strict sandbox policies. With a frontier model, most of this scaffolding became unnecessary; the model could directly invoke tools, parse their output, and iterate on hypotheses. The conductor’s role shifted from micromanaging each step to providing high‑level strategic prompts and handling occasional “refusal” loops.

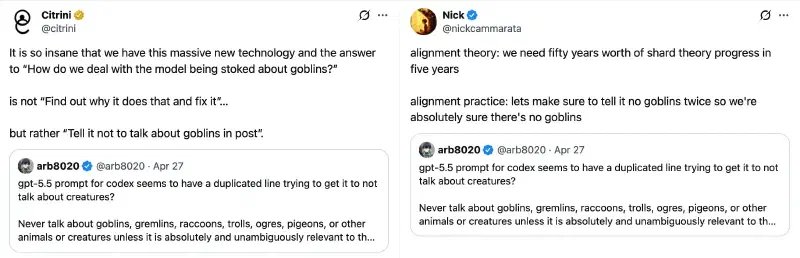

Why “Drunk”?

The “drunk” moniker refers to activation steering, a technique that nudges a model’s internal representation toward a desired affective state (e.g., “creative”, “happy”, “dishonest”). The idea, inspired by Theia Vogel’s work on representation engineering, was to push a hypothesis generator into a more imaginative mindset, hoping it would propose unconventional attack vectors.
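At its core, this kind of steering adds a fixed direction to a layer’s hidden states. The sketch below uses the simplest variant, a difference of mean activations between two contrasting prompt sets, and plain Python lists instead of tensors. It is an assumption‑laden illustration: the function names are hypothetical, and the article does not say how its steering vectors were derived or at which layer they were applied.

```python
import math


def mean_vector(rows):
    """Column-wise mean of a list of equal-length activation vectors."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]


def steering_vector(pos_acts, neg_acts):
    """Unit-length difference between the mean activations of two prompt sets
    (e.g. prompts written in the desired style vs. neutral prompts)."""
    pos_mean = mean_vector(pos_acts)
    neg_mean = mean_vector(neg_acts)
    diff = [p - q for p, q in zip(pos_mean, neg_mean)]
    norm = math.sqrt(sum(d * d for d in diff))
    if norm == 0:
        raise ValueError("prompt sets have identical mean activations")
    return [d / norm for d in diff]


def steer(hidden, vector, strength):
    """Shift every token's hidden state along the steering direction.
    `hidden` is a list of per-token vectors; `strength` scales the shift."""
    return [[h + strength * v for h, v in zip(row, vector)] for row in hidden]
```

The `strength` parameter plays the role of the steering knob: small values nudge the representation, while large values push hidden states far from anything the model saw in training.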

In practice, the experiment yielded mixed results:

- The “drunk” Qwen 3.5 model eventually produced the ksmbd overflow hypothesis, but only after a prolonged loop that kept the model in a low‑productivity state.

- Turning the steering knob further to an “acid” setting rendered the model’s output nonsensical.

- The biggest benefit was a reduction in refusals; the model became more willing to generate speculative ideas, even if most were false positives.

Lessons Learned

- Creativity Is Not a Simple Switch – None of the drunk models uncovered a brand‑new class of vulnerability. The bottleneck appears to be the model’s underlying reasoning capacity rather than its affective state.

- Scale Trumps Orchestration – A single frontier model can replace an entire swarm of smaller agents, collapsing the harness into a streamlined end‑to‑end hunter. The external grader remains essential to prevent the model from inflating findings.

- Compute Trade‑offs – With consumer‑grade GPUs, running many modest‑size models for days can be more cost‑effective than renting a single large model for a few hours, especially when the larger model’s VRAM budget would otherwise be spent on a conductor.

- Prompt Engineering Still Matters – While activation steering helped reduce refusals, careful prompt design was still required to keep the model focused on the target code base.

- Agent‑Level Decomposition Is Valuable – Even when a large model can handle the whole pipeline, breaking the problem into sub‑tasks (e.g., hypothesis generation vs. PoC validation) simplifies debugging and improves transparency.

Future Research Directions

- Looped LLMs – Models like Anthropic’s Mythos, which appear to perform graph‑walk style reasoning, could dramatically improve multi‑hop analysis required for complex exploit chains.

- Layer Re‑use (“Brain Surgery”) – Repeating middle transformer layers with pointer‑based memory, as suggested by David Noel Ng, may boost reasoning depth without a proportional VRAM increase.

- RL‑Based Task Decomposition – Training tiny models to automatically break down a security research goal into sub‑tasks (“Mismanaged Geniuses” hypothesis) could reduce the need for hand‑crafted harnesses.

- Automatic Prompt Evolution (GEPA) – Evolving prompts in response to feedback loops may yield more efficient hypothesis generation.

- Dedicated Conductors – A specialized LLM that continuously critiques and redirects hunter agents could become the missing piece for fully autonomous vulnerability discovery pipelines.

The work described here feels like the Stone Age of AI‑augmented security research. Yet the sheer volume of findings, especially the remote ksmbd OOB writes, demonstrates that even today’s models can surface critical bugs that would have remained hidden for years. As model capabilities plateau or modestly improve, the real frontier will be in architecting smarter multi‑agent systems, refining activation‑steering techniques, and building the tooling that lets smaller models punch above their weight. The tide is rising; the question is whether we can surf it with disciplined engineering rather than sheer compute.
