The free LLM From Scratch workshop guides hobbyists through building a functional tiny AI from scratch in six hands-on modules, offering mobile tech enthusiasts practical insight into the on-device LLMs now powering iOS and Android devices.

The free LLM From Scratch workshop, spotted by Hackaday and covered by XDA's Simon Batt, offers hobbyists a hands-on guide to building a tiny but functional large language model from scratch. The six-part course walks through every step, from parsing raw text to training a model that can generate poetry, all on a standard laptop without specialized hardware.

The workshop is split into six clear modules, each building on the last. The first section covers tokenization, the process of breaking text into small units called tokens that LLMs use to process language. You will write code to split text into tokens, build a vocabulary, and convert text to numerical token IDs, the basic input format for all transformer-based models.
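
To make those three steps concrete, here is a minimal sketch of a word-level tokenizer. It is an illustration only: the function names (`tokenize`, `build_vocab`, `encode`, `decode`) and the word-level splitting rule are assumptions, and the workshop's own tokenizer may work differently (for example, at the character or subword level).

```python
import re

# Hypothetical helpers for illustration; not taken from the workshop's code.

def tokenize(text):
    """Split text into lowercase word tokens and single punctuation tokens."""
    return re.findall(r"\w+|[^\w\s]", text.lower())

def build_vocab(tokens):
    """Assign each unique token an integer ID, in sorted order."""
    return {tok: idx for idx, tok in enumerate(sorted(set(tokens)))}

def encode(text, vocab):
    """Convert text to a list of token IDs (raises KeyError on unseen tokens)."""
    return [vocab[tok] for tok in tokenize(text)]

def decode(ids, vocab):
    """Convert token IDs back to a space-joined string."""
    inverse = {idx: tok for tok, idx in vocab.items()}
    return " ".join(inverse[i] for i in ids)

corpus = "The cat sat. The cat ran."
vocab = build_vocab(tokenize(corpus))
ids = encode("the cat ran.", vocab)
print(ids)                 # [4, 1, 2, 0]
print(decode(ids, vocab))  # the cat ran .
```

This sketch has no handling for words missing from the vocabulary, which is one reason real tokenizers usually fall back to smaller units such as characters or subwords.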

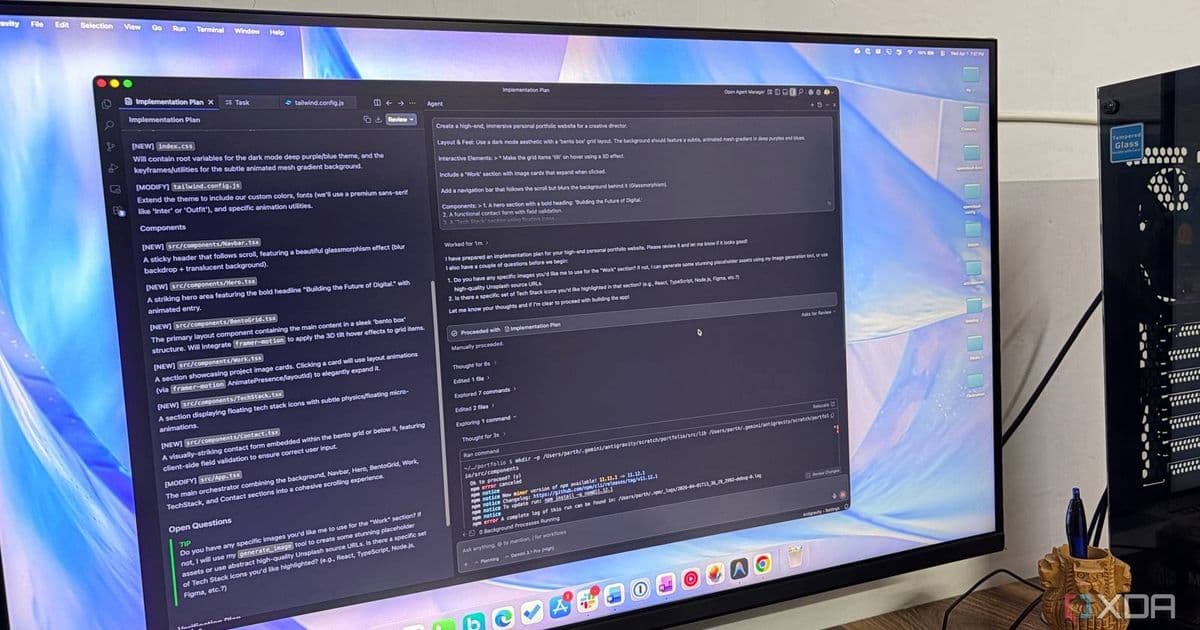

The next section covers the transformer architecture, the core framework behind the models that power ChatGPT and Gemini. You will implement the attention mechanism, feedforward layers, and positional encoding that allow transformers to capture context and relationships between words in a sequence. This section avoids abstract theory, focusing on practical code that you write yourself, line by line.
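
As a rough picture of what the attention step computes, the sketch below implements single-head scaled dot-product attention in plain Python. The causal mask (each position sees only itself and earlier positions) is an assumption based on the model being a text generator, and the function names are hypothetical; the workshop's code will likely use a tensor library and learned projection matrices instead.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def causal_attention(queries, keys, values):
    """Single-head scaled dot-product attention with a causal mask.

    Each argument is a list of vectors, one per sequence position.
    """
    d_k = len(keys[0])
    outputs = []
    for t, q in enumerate(queries):
        # Position t may only attend to positions 0..t (assumed causal mask).
        scores = [
            sum(qi * ki for qi, ki in zip(q, keys[s])) / math.sqrt(d_k)
            for s in range(t + 1)
        ]
        weights = softmax(scores)
        # Output is the attention-weighted average of the visible value vectors.
        outputs.append([
            sum(w * values[s][j] for s, w in enumerate(weights))
            for j in range(len(values[0]))
        ])
    return outputs

x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out = causal_attention(x, x, x)
print(out[0])  # [1.0, 0.0]: the first position can only attend to itself
```

Later positions receive a blend of all the value vectors they are allowed to see, which is how information from earlier words reaches the current one.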

The third module covers the training loop, where you will write code to feed tokenized text into your transformer, calculate loss, and update the model's weights using gradient descent. You will learn how to set up a training dataset, choose hyperparameters like learning rate and batch size, and monitor training progress to avoid overfitting.
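
The shape of such a loop can be sketched without a transformer at all. The example below trains a bigram model (a single table of logits that predicts the next token from the previous one) as a deliberately simple stand-in; the name `train_bigram`, the default hyperparameters, and the batch size of one are all assumptions made for illustration.

```python
import math
import random

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def train_bigram(ids, vocab_size, lr=0.5, epochs=50, seed=0):
    """Train a bigram next-token model with per-example gradient descent.

    A stand-in for the transformer: W[prev][next] holds the logits.
    Returns the weights and the average loss recorded for each epoch.
    """
    rng = random.Random(seed)
    W = [[rng.uniform(-0.1, 0.1) for _ in range(vocab_size)]
         for _ in range(vocab_size)]
    pairs = list(zip(ids[:-1], ids[1:]))  # (input token, target token)
    history = []
    for _ in range(epochs):
        total_loss = 0.0
        for prev, nxt in pairs:
            probs = softmax(W[prev])
            total_loss += -math.log(probs[nxt])  # cross-entropy loss
            # Gradient of cross-entropy w.r.t. the logits is probs - one_hot.
            for j in range(vocab_size):
                grad = probs[j] - (1.0 if j == nxt else 0.0)
                W[prev][j] -= lr * grad
        history.append(total_loss / len(pairs))
    return W, history

token_ids = [0, 1, 2] * 3  # toy sequence: 0 -> 1 -> 2 -> 0 ...
W, history = train_bigram(token_ids, vocab_size=3)
print(f"first epoch loss {history[0]:.3f}, last epoch loss {history[-1]:.3f}")
```

Watching `history` fall is the simplest form of progress monitoring; detecting overfitting would additionally require tracking the loss on held-out text, which this sketch omits.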

Once the model is trained, the fourth section covers text generation. You will implement greedy decoding and sampling methods to generate new text from a prompt, adjusting temperature and top-k parameters to control the creativity and coherence of the output.
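
The difference between these decoding strategies is easiest to see on a single vector of logits (the model's raw scores for each candidate next token). The sketch below is a minimal version under assumed names (`greedy`, `sample`); it is not the workshop's implementation.

```python
import math
import random

def greedy(logits):
    """Greedy decoding: always pick the highest-scoring token ID."""
    return max(range(len(logits)), key=lambda i: logits[i])

def sample(logits, temperature=1.0, top_k=None, rng=random):
    """Sample a token ID after temperature scaling and optional top-k filtering.

    temperature must be positive; lower values make the output more predictable.
    """
    # Rank token IDs by score and keep only the top_k best (all if top_k is None).
    ranked = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)
    kept = ranked[:top_k] if top_k else ranked
    # Dividing by temperature sharpens (<1) or flattens (>1) the distribution.
    scaled = [logits[i] / temperature for i in kept]
    m = max(scaled)
    weights = [math.exp(s - m) for s in scaled]  # unnormalized softmax
    return rng.choices(kept, weights=weights, k=1)[0]

logits = [2.0, 0.5, -1.0, 3.0]
print(greedy(logits))  # 3
rng = random.Random(0)
print([sample(logits, temperature=0.8, top_k=2, rng=rng) for _ in range(10)])
```

With `top_k=2`, only token IDs 3 and 0 can ever be drawn here, so the sampler stays varied without wandering into the low-scoring tail.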

The fifth module runs scaling experiments, testing how changing the model's size, number of layers, or training data affects performance. This part helps you understand the trade-offs between model size, training time, and output quality, key considerations for deploying small models on resource-constrained devices like smartphones.
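
A quick way to reason about those trade-offs before training anything is to estimate the parameter count. The sketch below uses a common back-of-the-envelope formula for GPT-style models; the 4x feedforward expansion, learned positional embeddings, tied input and output embeddings, and the omission of biases and normalization weights are all assumptions, and the workshop's model may be laid out differently.

```python
def estimate_params(vocab_size, d_model, n_layers, context_len):
    """Rough parameter count for a GPT-style transformer (assumptions above)."""
    embedding = vocab_size * d_model           # token embeddings (assumed tied)
    positions = context_len * d_model          # learned positional embeddings
    attention = 4 * d_model * d_model          # Q, K, V and output projections
    feedforward = 2 * d_model * (4 * d_model)  # two linear layers, 4x expansion
    return embedding + positions + n_layers * (attention + feedforward)

# Hypothetical configurations, chosen only to show how the count scales.
for d_model, n_layers in [(64, 2), (128, 2), (128, 4)]:
    total = estimate_params(vocab_size=1000, d_model=d_model,
                            n_layers=n_layers, context_len=128)
    print(f"d_model={d_model:4d} layers={n_layers} params={total:,}")
```

Under these assumptions each layer costs about 12 times `d_model` squared, so doubling the width quadruples the per-layer cost while doubling the depth only doubles it.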

The final section is a poetry competition, where you will train your model on a dataset of poems and evaluate its output for rhythm, rhyme, and coherence. This practical project ties all the previous modules together, resulting in a fully functional tiny LLM that runs on your laptop. The entire workshop is available free on the LLM From Scratch GitHub repository, with no paywalls or required subscriptions.

For mobile tech enthusiasts, the skills from LLM From Scratch apply directly to the growing trend of on-device LLMs in smartphones and tablets. Recent mobile OS releases such as iOS 18 and Android 15 ship with on-device LLMs. Apple Intelligence uses small, optimized models for writing tools, summarization, and image generation, while Android integrates Gemini Nano, whose smaller variant has 1.8 billion parameters, to power features like Smart Reply and live transcription.

These mobile LLMs share the same core transformer architecture as the tiny model built in the workshop, just optimized for low-power mobile chips. Gemini Nano requires at least 4GB of RAM and a compatible system-on-chip to run, while Apple's on-device models run only on devices with an A17 Pro or newer A-series chip or an M-series chip, a requirement that reinforces ecosystem lock-in for Apple users. If your device lacks one of these chips, getting Apple Intelligence features means upgrading to a newer iPhone, iPad, or Mac, tying you deeper into Apple's ecosystem of devices and services.
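
Some simple arithmetic shows why RAM is the gating factor. The sketch below estimates the memory needed just to hold a model's weights at different precisions, using the 1.8-billion-parameter figure quoted above. The precisions listed are illustrative assumptions, not a statement of what any vendor actually ships, and the estimate ignores activations, the attention cache, and everything else the phone has to keep in memory.

```python
def weight_memory_gb(n_params, bits_per_weight):
    """Memory needed to store the weights alone, in gigabytes (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9

n_params = 1.8e9  # parameter count quoted in the article
for bits in (32, 16, 8, 4):  # illustrative precisions only
    print(f"{bits:2d}-bit weights: {weight_memory_gb(n_params, bits):.1f} GB")
```

At 16 bits per weight the model alone would take about 3.6 GB, while at 4 bits it drops to about 0.9 GB, which is why aggressive quantization matters so much on phones.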

Google's Gemini Nano is more flexible, working across Android devices from multiple manufacturers, but it is deeply integrated into Google's ecosystem services like Gmail, Google Docs, and Google Assistant. Understanding how these small LLMs work, as taught in the workshop, helps mobile users evaluate on-device AI features, understand why certain devices support them, and see how ecosystem players use LLMs to encourage brand loyalty.

The practical focus of LLM From Scratch also extends to tinkering with local LLMs on mobile. Enthusiasts can use the skills from the workshop to modify small LLMs for specific tasks, test them via desktop tools like LM Studio, and even deploy them to Android devices using Termux or Linux chroot environments. This hands-on knowledge cuts through marketing buzz about on-device AI, giving you a clear view of what these models can and cannot do.
