Microsoft now supports deploying self-hosted OpenClaw AI agents on Azure Linux VMs with hardened security configurations, offering enterprises a middle ground between public AI services and fully self-hosted solutions.

Microsoft Enhances Azure Infrastructure with Secure OpenClaw Agent Deployment for Enterprise AI Workflows

Microsoft has expanded its Azure ecosystem to support OpenClaw, a self-hosted AI agent platform, with enterprise-grade security configurations. This development enables organizations to deploy persistent AI assistants on their own Azure infrastructure while maintaining security boundaries that satisfy enterprise requirements.

What Changed: Secure OpenClaw Deployment on Azure

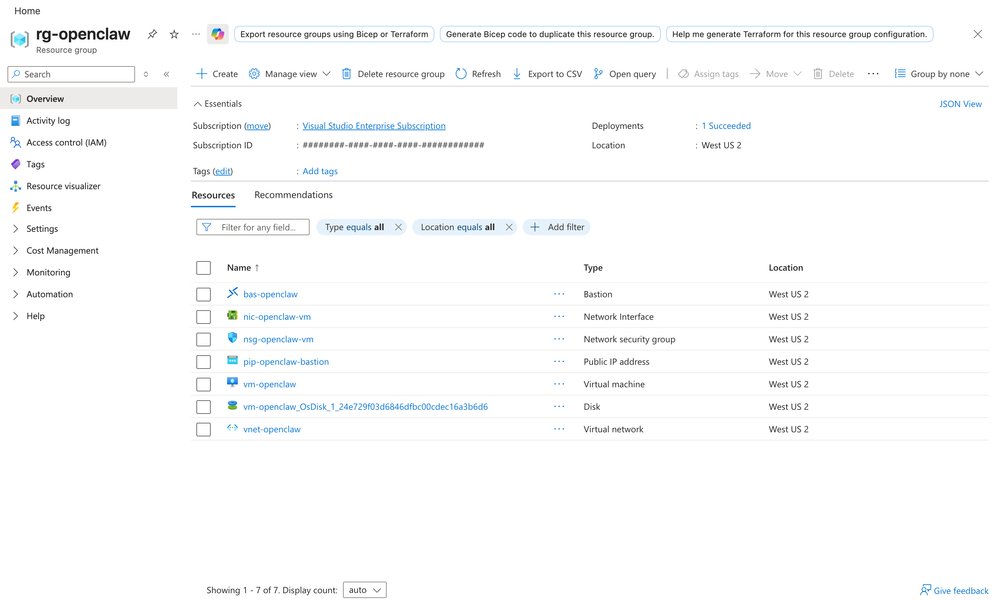

The new capability allows enterprises to deploy OpenClaw AI agents on Azure Linux VMs with Network Security Group (NSG) hardening and Azure Bastion-secured access. This implementation addresses a growing need for organizations that want AI assistants operating on infrastructure they control, rather than relying solely on third-party hosted services.

"Many teams want an enterprise-ready personal AI assistant, but they need it on infrastructure they control, with security boundaries they can explain to IT," explains the documentation. "That is exactly where OpenClaw fits on Azure."

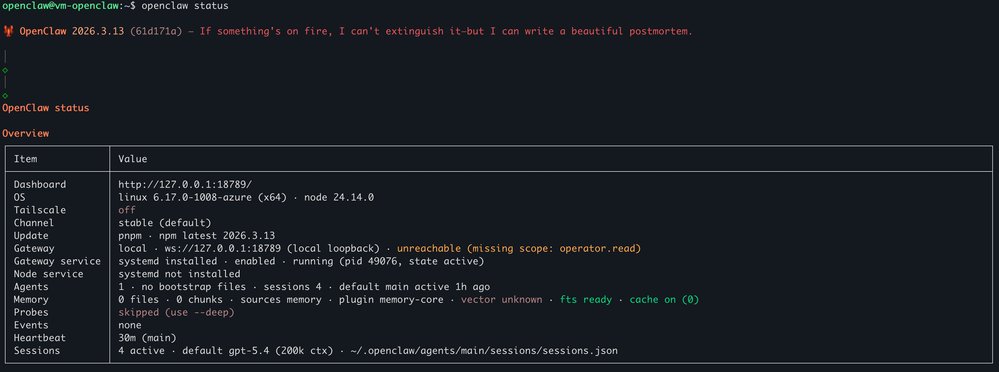

Unlike traditional chat applications tied to specific model providers, OpenClaw operates as an always-on runtime that organizations can manage themselves while connecting to various AI model providers including GitHub Copilot, Azure OpenAI, OpenAI, Anthropic Claude, and Google Gemini.

Provider Comparison: Azure vs. Alternatives for Self-Hosted AI

Azure's implementation of OpenClaw presents several advantages compared to other cloud providers and deployment methods:

Azure Implementation Benefits:

- Integrated Identity Management: Leverages Azure's enterprise identity workflows for authentication and authorization

- Network Security: Native NSG hardening with configurable rules that restrict SSH access to Azure Bastion only

- Managed Access: Azure Bastion provides secure SSH tunneling without exposing public IP addresses

- Resource Management: Seamless integration with Azure CLI for reproducible deployments

- Hybrid Capabilities: Compatible with existing Azure OpenAI deployments and other model APIs
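To make the "SSH from Bastion only" idea concrete, here is a minimal Python sketch of how priority-ordered NSG rules decide whether an inbound connection is allowed. It is a simplified model, not Azure's implementation: real NSGs also match on protocol, destination, and service tags, and include built-in default rules. The rule names and the `10.40.1.0/26` Bastion subnet range are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Optional


@dataclass(frozen=True)
class Rule:
    name: str
    priority: int          # lower numbers are evaluated first
    access: str            # "Allow" or "Deny"
    source: str            # CIDR prefix, or "*" for any source
    port: Optional[int]    # destination port, or None for any port


# Hypothetical address range for the Bastion subnet; not taken from the guide.
BASTION_SUBNET = "10.40.1.0/26"

RULES = [
    Rule("AllowSshFromBastion", 100, "Allow", BASTION_SUBNET, 22),
    Rule("DenySshFromElsewhere", 110, "Deny", "*", 22),
    Rule("DenyAllOtherInbound", 4096, "Deny", "*", None),
]


def evaluate(rules, source_ip, port):
    """Return the decision of the first rule that matches, in priority order."""
    for rule in sorted(rules, key=lambda r: r.priority):
        source_ok = rule.source == "*" or ip_address(source_ip) in ip_network(rule.source)
        port_ok = rule.port is None or rule.port == port
        if source_ok and port_ok:
            return rule.access
    return "Deny"  # nothing matched: treat as denied in this simplified model
```

With these rules, `evaluate(RULES, "10.40.1.5", 22)` is allowed because the source sits inside the Bastion range, while SSH from any other address falls through to the deny rule at priority 110.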

Comparison with Other Approaches:

| Approach | Control Level | Security Burden | Integration Complexity | Cost Model |
|---|---|---|---|---|
| Azure OpenClaw | High | Medium (managed) | Low (integrated) | Pay-as-you-go + VM costs |
| Public AI APIs | Low | Minimal | Medium (API management) | Per-token pricing |
| Full Self-Hosted | Maximum | High | High (full stack) | Infrastructure costs |
| Competitor Cloud Vendors | Medium | Medium | Medium | Variable |

Azure's implementation strikes a balance by providing infrastructure control while reducing the operational burden compared to full self-hosting solutions. The integration with Azure Bastion and NSG hardening addresses key security concerns that often prevent enterprises from adopting self-hosted AI solutions.

Business Impact: Strategic Implications for Enterprise AI Adoption

The deployment of OpenClaw on Azure with secure defaults represents a strategic shift in how enterprises approach AI assistants:

Security and Compliance Advantages:

- Reduced Attack Surface: NSG rules restrict SSH access to Azure Bastion only, so the VM's SSH port is not reachable directly from the public internet

- Auditability: All access goes through Azure's authentication systems, providing clear audit trails

- Comprehensive Control: Organizations maintain full control over their AI environment, addressing data residency and privacy concerns

Operational Benefits:

- Always-On Availability: Unlike browser-based AI tools, OpenClaw operates continuously as a background service

- Multi-Channel Integration: Agents can be accessed through Microsoft Teams, Slack, Telegram, WhatsApp, and other messaging platforms

- Scalable Architecture: VM sizing can be adjusted based on workload requirements, from light usage to heavy automation

Strategic Considerations:

Enterprises evaluating this solution should consider several strategic factors:

- Total Cost of Ownership: While VM infrastructure costs are additional, organizations may offset these by eliminating per-user subscription fees for AI services

- Resource Allocation: The recommended VM size (Standard_B2as_v2 with a 64 GB OS disk) provides a balance between cost and performance, with options to scale based on specific needs

- Skill Requirements: Implementation requires Azure CLI familiarity and SSH key management, though the deployment process is well-documented

- Model Flexibility: Organizations can leverage existing AI investments, whether through GitHub Copilot licenses, Azure OpenAI deployments, or API plans with other providers
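The total-cost-of-ownership point above can be turned into a rough break-even estimate. The sketch below assumes a flat monthly infrastructure cost compared against a per-user subscription fee. Both figures are placeholders for illustration, not actual Azure or vendor prices, and the model deliberately leaves out model API usage charges, which would still apply.

```python
import math


def break_even_users(monthly_infra_cost, per_user_fee):
    """Smallest user count at which per-user fees meet or exceed the flat infrastructure cost."""
    if per_user_fee <= 0:
        raise ValueError("per_user_fee must be positive")
    return math.ceil(monthly_infra_cost / per_user_fee)


# Placeholder figures for illustration only; substitute real quotes before relying on this.
print(break_even_users(monthly_infra_cost=180.0, per_user_fee=20.0))  # prints 9
```

Below the break-even count, per-user subscriptions are likely cheaper under these assumptions. Above it, the self-hosted VM starts to pay for itself.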

Implementation Walkthrough

The deployment process follows a structured approach:

- Resource Provisioning: Create Azure networking resources including VNet, subnets, and NSG with hardened rules

- Security Configuration: Implement NSG rules that allow SSH only from the Bastion subnet while blocking all other access

- Compute Setup: Deploy Azure Linux VMs without public IP addresses, ensuring all external access is through controlled pathways

- Secure Access: Configure Azure Bastion for managed SSH access, eliminating the need for public exposure

- Agent Installation: Install OpenClaw through the provided installation script, which handles dependency management

The entire deployment can be completed in approximately 20-30 minutes, with most of the time spent on resource provisioning rather than complex configuration.
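The first four steps above can be sketched as an ordered list of Azure CLI invocations. The Python below only builds and prints the commands and executes nothing. The resource names, address ranges, admin username, and image value are hypothetical. The flags reflect the Azure CLI as commonly documented and should be checked against `az <command> --help` for your installed version. The agent installation step is omitted because the article does not give the install script's location.

```python
import shlex


def nsg_rule(rg, nsg, name, priority, access, source):
    """Build one inbound TCP/22 rule for `az network nsg rule create`."""
    return ["az", "network", "nsg", "rule", "create",
            "--resource-group", rg, "--nsg-name", nsg, "--name", name,
            "--priority", str(priority), "--direction", "Inbound",
            "--access", access, "--protocol", "Tcp",
            "--source-address-prefixes", source,
            "--destination-port-ranges", "22"]


def deployment_plan(rg, location, vm_image, bastion_prefix="10.40.1.0/26"):
    """Return az commands as argument lists, in walkthrough order. Nothing is executed."""
    # All resource names and address ranges here are hypothetical.
    nsg, vnet, vm_subnet = "nsg-openclaw", "vnet-openclaw", "snet-vm"
    return [
        # Resource provisioning: resource group, NSG, and virtual network
        ["az", "group", "create", "--name", rg, "--location", location],
        ["az", "network", "nsg", "create", "--resource-group", rg, "--name", nsg],
        ["az", "network", "vnet", "create", "--resource-group", rg,
         "--name", vnet, "--address-prefixes", "10.40.0.0/16"],
        # Security configuration: SSH allowed from the Bastion subnet, denied elsewhere
        nsg_rule(rg, nsg, "AllowSshFromBastion", 100, "Allow", bastion_prefix),
        nsg_rule(rg, nsg, "DenySshFromElsewhere", 110, "Deny", "*"),
        ["az", "network", "vnet", "subnet", "create", "--resource-group", rg,
         "--vnet-name", vnet, "--name", vm_subnet,
         "--address-prefixes", "10.40.0.0/24", "--network-security-group", nsg],
        # Azure Bastion requires a subnet with this exact name
        ["az", "network", "vnet", "subnet", "create", "--resource-group", rg,
         "--vnet-name", vnet, "--name", "AzureBastionSubnet",
         "--address-prefixes", bastion_prefix],
        # Compute setup: empty strings request no public IP and no NIC-level NSG
        ["az", "vm", "create", "--resource-group", rg, "--name", "vm-openclaw",
         "--image", vm_image, "--size", "Standard_B2as_v2",
         "--os-disk-size-gb", "64", "--vnet-name", vnet, "--subnet", vm_subnet,
         "--public-ip-address", "", "--nsg", "",
         "--admin-username", "openclaw", "--generate-ssh-keys"],
        # Secure access: Bastion host with its own Standard public IP
        ["az", "network", "public-ip", "create", "--resource-group", rg,
         "--name", "pip-bastion", "--sku", "Standard"],
        ["az", "network", "bastion", "create", "--resource-group", rg,
         "--name", "bas-openclaw", "--vnet-name", vnet,
         "--public-ip-address", "pip-bastion", "--location", location],
    ]


# The image value is a placeholder; use whichever Linux image the guide specifies.
for command in deployment_plan("rg-openclaw-demo", "westus2", "Ubuntu2204"):
    print(shlex.join(command))
```

Keeping the commands as data makes the plan easy to review or diff before anything is run, which suits the reproducible-deployment goal the article describes.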

Future Implications

This capability positions Azure as a preferred platform for enterprises seeking to balance innovation with control. As organizations increasingly adopt AI assistants for operational tasks, the ability to deploy these solutions on familiar, secure infrastructure becomes a significant differentiator.

The integration of OpenClaw with Azure's ecosystem represents Microsoft's broader strategy of enabling AI adoption while addressing enterprise concerns around security, compliance, and operational control. By providing a middle ground between public AI services and fully self-hosted solutions, Azure aims to accelerate AI adoption across enterprise environments.

For organizations exploring AI assistant implementations, the OpenClaw on Azure deployment offers a practical approach that balances innovation with enterprise requirements. The combination of familiar infrastructure, security hardening, and operational control addresses key barriers to AI adoption in enterprise environments.

For detailed implementation instructions, organizations can refer to the Microsoft Community Hub guide and the OpenClaw documentation.

Conclusion

The deployment of OpenClaw on Azure with secure defaults represents a significant advancement in enterprise AI infrastructure. By combining the flexibility of self-hosted AI solutions with Azure's enterprise-grade security and management capabilities, Microsoft has created a compelling option for organizations seeking to adopt AI assistants while maintaining control over their infrastructure and data.

As enterprises continue to explore AI implementations, solutions that balance innovation with control will become increasingly important. The OpenClaw on Azure deployment addresses this need directly, providing a practical pathway for organizations to leverage AI assistants within their existing security and operational frameworks.

For organizations evaluating AI assistant solutions, this implementation offers a compelling alternative to both public AI services and complex self-hosted deployments.

The future of enterprise AI likely involves hybrid approaches that balance cloud services with on-premises control. Microsoft's support for OpenClaw on Azure positions the company to play a significant role in shaping this evolution, providing organizations with the tools they need to adopt AI while maintaining the security and control required in enterprise environments.
