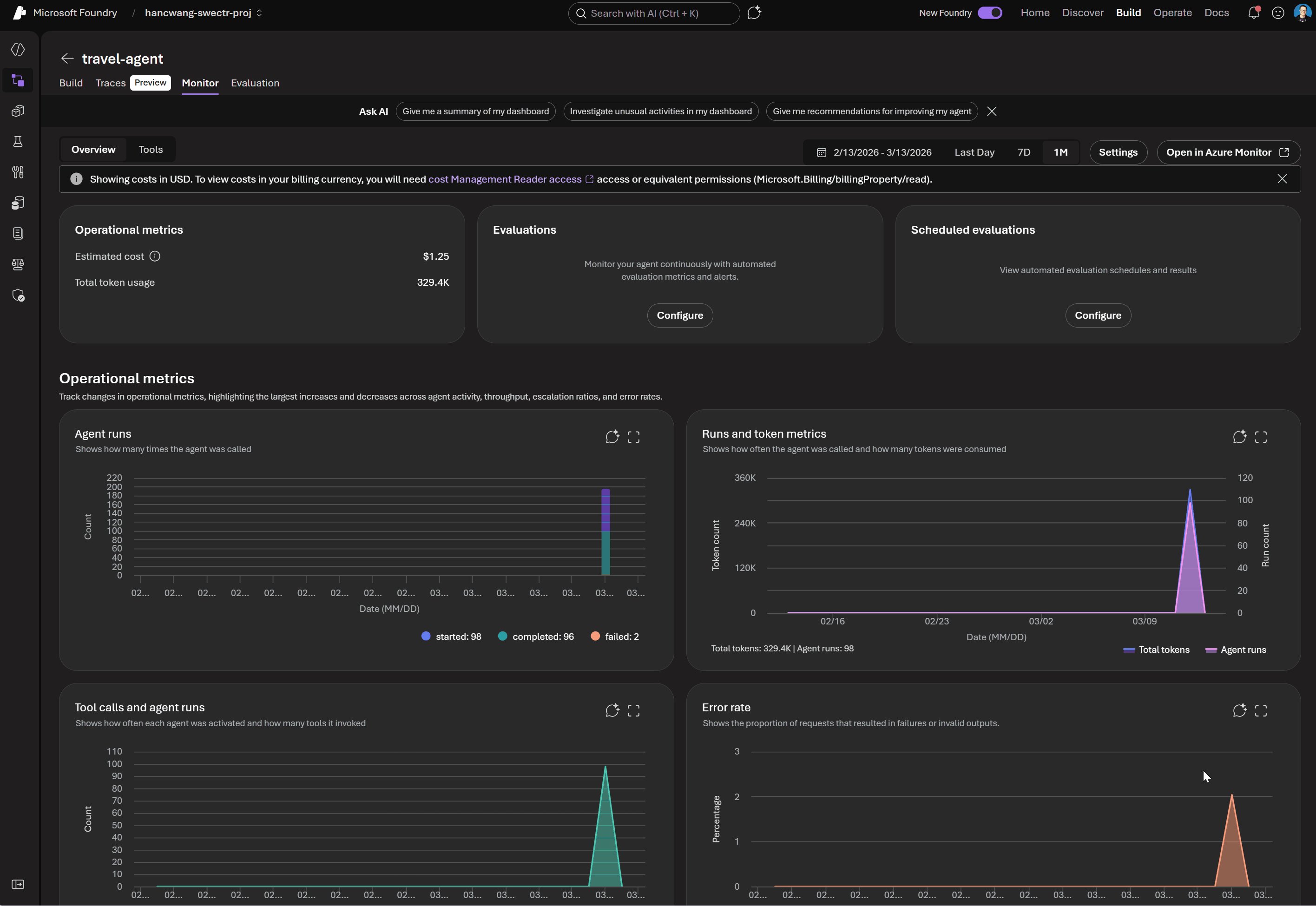

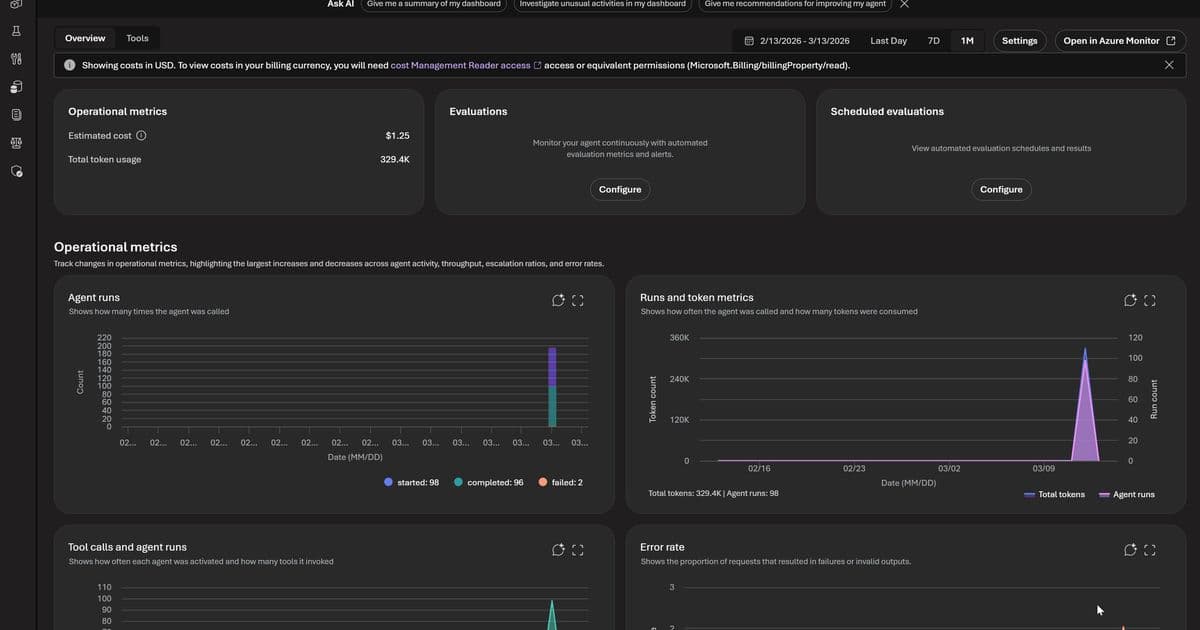

Microsoft has made Foundry Observability generally available, providing integrated evaluations, monitoring, and tracing capabilities designed specifically for AI agents in production environments. The solution addresses the critical gap between point-in-time testing and continuous quality measurement that organizations face when deploying AI systems.

Microsoft Foundry Observability Emerges as Comprehensive Solution for Production AI Agents

Organizations shipping AI agents to production consistently run into the same problem: quality is hard to maintain over time. Models update, prompts change, retrieval pipelines drift, and real-world traffic surfaces edge cases that were invisible during development. Microsoft addresses this gap with the general availability of Foundry Observability, a deeply integrated solution combining evaluations, monitoring, and tracing capabilities through the Foundry Control Plane.

The Critical Gap in Current AI Evaluation Approaches

Most AI evaluation workflows follow a point-in-time approach focused on pre-deployment gates. Teams build test datasets, run evaluations, review scores, and then deploy. While valuable, this approach has significant limitations in production environments:

- Foundation model updates continuously shift output styles, reasoning patterns, and edge case handling without warning

- Prompt changes create nonlinear effects in multi-step agent flows

- Retrieval pipeline drift alters what context agents actually see at inference time

- Real-world traffic distributions consistently differ from sampled test sets

- Long-tail inputs in production often seem obvious in hindsight but remain invisible during development

The implication is clear: evaluation must be continuous, not episodic. Organizations need quality signals at development time, on every CI/CD commit, and continuously against live production traffic, all using the same evaluator definitions so that results are comparable across environments.

Foundry Observability Architecture and Components

Microsoft's solution addresses these challenges through several integrated components:

Built-in Evaluators

Foundry includes pre-built evaluators covering critical quality dimensions:

- Coherence and Relevance: Measures whether responses are internally consistent and on-topic

- Groundedness: Verifies that outputs are supported by the retrieved context; essential for RAG architectures

- Retrieval Quality: Evaluates the retrieval step independently from generation

- Safety and Policy Alignment: Ensures outputs meet deployment requirements

These evaluators operate consistently across the entire AI lifecycle:

- Local development: Run inline during prompt and logic iteration

- CI/CD pipelines: Gate commits against quality baselines

- Production monitoring: Continuously evaluate sampled live traffic

Because the same evaluators run in every environment, a score in CI means the same thing as a score in production monitoring.
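The CI/CD gate described above can be sketched in a few lines of plain Python. This is a minimal illustration, not Foundry's API: the `gate_commit` function, the `GateResult` type, the baseline numbers, and the `tolerance` parameter are all hypothetical, and the metric names simply mirror the built-in evaluator categories listed earlier.

```python
from dataclasses import dataclass

# Hypothetical baselines; the metric names mirror the built-in evaluator
# categories named in the article, but the numbers are invented.
BASELINES = {"coherence": 4.0, "relevance": 4.0, "groundedness": 4.2}


@dataclass
class GateResult:
    passed: bool
    failures: dict  # metric name -> (observed score, required baseline)


def gate_commit(scores, baselines=BASELINES, tolerance=0.1):
    """Fail the gate if any metric falls more than `tolerance` below its baseline."""
    failures = {}
    for metric, required in baselines.items():
        observed = scores.get(metric)
        # A missing metric counts as a failure so gaps are not silently ignored.
        if observed is None or observed < required - tolerance:
            failures[metric] = (observed, required)
    return GateResult(passed=not failures, failures=failures)


if __name__ == "__main__":
    result = gate_commit({"coherence": 4.3, "relevance": 4.0, "groundedness": 3.9})
    print(result.passed, result.failures)
```

In a pipeline, a failed `GateResult` would typically translate into a non-zero exit code so the commit is blocked; how scores are produced and fetched is left out of this sketch.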

Custom Evaluation Capabilities

While built-in evaluators cover common scenarios, production agents often require domain-specific evaluation:

- LLM-as-a-Judge evaluators: Configure prompts and grading rubrics that language models apply to agent outputs. Ideal for quality dimensions requiring reasoning or contextual judgment.

- Code-based evaluators: Python functions implementing any programmable evaluation logic, from regex matching to compliance assertions

Custom and built-in evaluators naturally compose, running against the same traffic and producing results in the same schema.
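To make the code-based option concrete, here is a minimal sketch of a Python evaluator that combines regex matching with a compliance assertion. Everything in it is an assumption for illustration: the `compliance_evaluator` name, the PII patterns, the required disclaimer text, and the shape of the returned dictionary are hypothetical and do not represent Foundry's evaluator interface or result schema.

```python
import re

# Hypothetical patterns for illustration: a US-style SSN and a 16-digit card number.
PII_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "card_number": re.compile(r"\b(?:\d{4}[ -]?){3}\d{4}\b"),
}

# Hypothetical compliance rule: every response must carry this disclaimer.
REQUIRED_DISCLAIMER = "this is not financial advice"


def compliance_evaluator(response: str) -> dict:
    """Score 1.0 only if the response leaks no PII and includes the disclaimer."""
    pii_hits = sorted(
        name for name, pattern in PII_PATTERNS.items() if pattern.search(response)
    )
    has_disclaimer = REQUIRED_DISCLAIMER in response.lower()
    passed = not pii_hits and has_disclaimer
    return {
        "score": 1.0 if passed else 0.0,
        "passed": passed,
        "pii_hits": pii_hits,
        "has_disclaimer": has_disclaimer,
    }


if __name__ == "__main__":
    print(compliance_evaluator("Index funds are diversified. This is not financial advice."))
    print(compliance_evaluator("Your SSN 123-45-6789 is on file."))
```

Returning the intermediate findings alongside the score keeps a failing result explainable, which matters once such a check runs continuously against sampled traffic.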

Integration with Azure Monitor

All observability data from Foundry publishes directly to Azure Monitor, creating significant advantages over siloed AI monitoring tools:

- Cross-stack correlation: When groundedness scores drop, teams can quickly determine if the cause is a model update, retrieval issue, or infrastructure problem

- Unified alerting: Configure Azure Monitor alert rules on any evaluation metric

- Enterprise governance: Azure Monitor's RBAC, retention policies, and audit logging apply automatically

- Dashboard integration: Evaluation metrics flow into existing dashboards alongside operational metrics
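Alert rules themselves are configured in Azure Monitor, not in application code, but the kind of condition such a rule might encode on an evaluation metric can be sketched in plain Python. The `MetricAlert` class, the threshold, and the window size below are hypothetical choices for illustration only.

```python
from collections import deque
from statistics import mean


class MetricAlert:
    """Fires when the mean of the last `window` scores drops below `threshold`."""

    def __init__(self, threshold: float, window: int = 5):
        self.threshold = threshold
        self.scores = deque(maxlen=window)

    def observe(self, score: float) -> bool:
        """Record one score and report whether the alert condition now holds."""
        self.scores.append(score)
        # Wait for a full window so a single bad sample does not fire the alert.
        window_full = len(self.scores) == self.scores.maxlen
        return window_full and mean(self.scores) < self.threshold


if __name__ == "__main__":
    alert = MetricAlert(threshold=4.0, window=3)
    for score in [4.5, 4.4, 4.3, 3.2, 3.0]:
        print(score, alert.observe(score))
```

Averaging over a window rather than alerting on individual samples is one common way to trade detection speed for fewer false alarms.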

End-to-End Tracing

Foundry provides OpenTelemetry-based distributed tracing that follows each request through the entire agent system:

- Model calls and tool invocations

- Retrieval steps and orchestration logic

- Cross-agent handoffs

The critical design decision is linking evaluation results directly to traces. When teams see a low groundedness score, they navigate directly to the specific trace that produced it, eliminating manual correlation.
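The value of that link is easiest to see in a small model. The sketch below uses only the Python standard library and invented types (`Span`, `Trace`, `EvalResult`) with hypothetical field names; it is not the Foundry or OpenTelemetry data model, only an illustration of how a shared trace ID turns "find the request behind this low score" into a lookup rather than a manual search.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    name: str           # e.g. "retrieval", "model_call", "tool:search"
    duration_ms: float


@dataclass
class Trace:
    trace_id: str
    spans: list = field(default_factory=list)


@dataclass
class EvalResult:
    trace_id: str       # the link back to the trace that produced the output
    metric: str
    score: float


def traces_for_low_scores(results, traces, metric, threshold):
    """Return the traces whose evaluation score for `metric` is below `threshold`."""
    by_id = {trace.trace_id: trace for trace in traces}
    return [
        by_id[r.trace_id]
        for r in results
        if r.metric == metric and r.score < threshold and r.trace_id in by_id
    ]


if __name__ == "__main__":
    traces = [
        Trace("t1", [Span("retrieval", 40.0), Span("model_call", 900.0)]),
        Trace("t2", [Span("retrieval", 35.0), Span("model_call", 850.0)]),
    ]
    results = [
        EvalResult("t1", "groundedness", 4.6),
        EvalResult("t2", "groundedness", 2.1),
    ]
    for trace in traces_for_low_scores(results, traces, "groundedness", 3.0):
        print(trace.trace_id, [span.name for span in trace.spans])
```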

Foundry auto-collects traces across common frameworks:

- Microsoft Agent Framework

- Semantic Kernel

- LangChain and LangGraph

- OpenAI Agents SDK

For custom frameworks, the Azure Monitor OpenTelemetry Distro provides instrumentation paths.

Prompt Optimizer

In public preview, the Prompt Optimizer tightens the development loop by:

- Analyzing existing prompts and applying structured engineering techniques

- Providing paragraph-level explanations for every change

- Allowing constraints and iterative optimization

- Enabling one-click application of optimized prompts

Strategic Differentiation in the AI Observability Landscape

Foundry's approach differentiates itself through several architectural choices:

Unified Lifecycle Coverage

While most evaluation tools focus on pre-deployment testing, Foundry's evaluators operate consistently across development, CI/CD, and production. This creates truly comparable quality metrics across the entire lifecycle.

No Observability Silo

By publishing all data to Azure Monitor, organizations avoid maintaining separate systems for AI quality alongside infrastructure monitoring. AI incidents route through existing on-call rotations and inherit established governance frameworks.

Framework-Agnostic Tracing

Auto-instrumentation across multiple orchestration frameworks and the OpenTelemetry foundation ensures trace portability, protecting investments as the tooling landscape evolves.

Composable Evaluation System

Built-in and custom evaluators operate within the same pipeline, eliminating the need to choose between generic coverage and domain-specific precision.

Business Impact and Implementation Considerations

The general availability of Foundry Observability addresses several critical business challenges:

Quality Assurance Transformation

With continuous evaluation, organizations can shift from reacting to quality issues after they surface to managing quality proactively. This reduces the risk of production failures and improves user experience.

Operational Efficiency

Integration with Azure Monitor eliminates the need for separate monitoring systems, reducing operational overhead and alert fatigue while improving response times to quality issues.

Compliance and Governance

Enterprise-grade security and compliance controls apply automatically to AI observability data, simplifying regulatory adherence.

Implementation Path

Organizations can get started by:

- Having a Foundry project with an agent and an Azure OpenAI deployment

- Connecting their Azure Monitor workspace in Foundry Control Plane

- Following the Practical Guide to Evaluations

- Setting up end-to-end tracing for their agents

Competitive Positioning

Microsoft's entry into the AI observability space comes as several providers offer evaluation tools. However, Foundry's deep integration with Azure and its lifecycle-wide approach differentiate it from competitors.

The solution appears particularly well-suited for:

- Organizations already invested in the Azure ecosystem

- Teams building complex, multi-step AI agents

- Enterprises requiring compliance and governance

- Development teams using Semantic Kernel or other supported frameworks

For organizations evaluating observability solutions, Foundry offers a compelling combination of pre-built capabilities, extensibility, and operational integration that may reduce the total cost of ownership compared to point solutions.

Microsoft's contribution to the OpenTelemetry project, particularly around semantic conventions for multi-agent trace correlation, also positions the company to influence industry standards for AI observability.

The general availability of these capabilities, with tracing set to reach full GA by the end of March 2026, signals Microsoft's commitment to addressing the operational challenges of AI systems in production environments.

For more information on implementing Foundry Observability, refer to the Microsoft Foundry documentation and the Azure Monitor integration guide.
