Microsoft's launch of MAI-Transcribe, MAI-Voice, and MAI-Image represents a fundamental shift in Azure's AI strategy, moving from third-party dependencies to first-party, enterprise-optimized models that promise better integration, governance, and cost predictability for cloud-native applications.

Microsoft's recent announcement of its in-house AI models—MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2—marks a significant strategic pivot in the cloud AI landscape. These models represent more than just new endpoints; they signal Microsoft's commitment to building a comprehensive, vertically integrated AI stack that reduces dependency on external providers while enhancing enterprise-grade capabilities.

What Changed: Microsoft's Strategic Shift to In-House AI

For years, Azure's AI services relied heavily on partnerships with third-party providers, particularly OpenAI for generative capabilities. This approach provided quick access to cutting-edge technology but introduced several challenges: integration complexity, governance fragmentation, and unpredictable pricing models. The MAI models directly address these pain points by bringing AI development capabilities in-house.

The transition reflects a broader industry trend in which hyperscalers are evolving from infrastructure providers into full-stack technology companies that control the entire value chain. Microsoft's approach differs from that of competitors such as Google and AWS chiefly in its emphasis on agent-first design: these models are not just standalone APIs but components designed to work within complex AI agent systems.

Deep Dive into the MAI Model Family

MAI-Transcribe-1: Enterprise-Grade Speech Recognition

MAI-Transcribe-1 represents Microsoft's first-generation in-house speech recognition model, optimized for real-world enterprise environments. Unlike consumer-grade speech recognition systems that perform well in controlled conditions, MAI-Transcribe-1 addresses the complexities of noisy enterprise audio environments such as meetings and call centers.

Key technical innovations include:

- Support for 25 languages with enhanced recognition of accented speech

- Advanced noise cancellation algorithms that maintain accuracy in challenging acoustic environments

- GPU optimization that reduces computational costs by approximately 40% compared to previous Azure Speech offerings

The model achieves this through a combination of transformer-based architectures and domain-specific pre-training on Microsoft's vast corpus of enterprise audio data. This approach allows the model to understand industry-specific terminology, colloquial expressions, and technical jargon that would confuse more general-purpose speech recognition systems.

MAI-Voice-1: High-Fidelity Voice Synthesis

MAI-Voice-1 addresses a critical limitation in many text-to-speech systems: the inability to maintain consistent speaker identity over extended audio sequences. This capability is particularly important for applications like long-form content narration, voice assistants, and conversational AI systems where character consistency across multiple interactions is essential.

Technical highlights include:

- Generation of up to 60 seconds of audio in under one second

- Advanced prosody modeling that captures emotional nuance and speech patterns

- Custom voice creation capabilities that require minimal training data

The model employs a novel neural architecture that separates content representation from voice characteristics, allowing for more efficient voice cloning and better preservation of speaker identity. This approach represents a significant advancement over traditional concatenative synthesis and earlier neural text-to-speech systems.
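For long-form narration, a client application usually has to split a script into segments that each fit in one generation request, while reusing one voice profile so the speaker identity stays consistent. The sketch below is illustrative only. It assumes a 60-second ceiling per request (taken from the figure listed above) and an average speaking rate of 150 words per minute. The `VoiceSegment` structure and the `voice_id` field are hypothetical and are not part of any published MAI-Voice-1 API.

```python
import re
from dataclasses import dataclass

# Assumed values for illustration only; tune them to the real service limits.
MAX_SECONDS_PER_REQUEST = 60.0
WORDS_PER_MINUTE = 150.0


@dataclass(frozen=True)
class VoiceSegment:
    """One synthesis request: text plus a hypothetical voice profile ID."""
    voice_id: str
    text: str
    est_seconds: float


def estimate_seconds(text: str) -> float:
    """Estimate spoken duration from word count at a fixed speaking rate."""
    return len(text.split()) / WORDS_PER_MINUTE * 60.0


def chunk_script(script: str, voice_id: str) -> list[VoiceSegment]:
    """Split a script at sentence boundaries into segments that each stay
    under MAX_SECONDS_PER_REQUEST, all tagged with the same voice_id."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", script.strip()) if s]
    segments: list[VoiceSegment] = []
    current: list[str] = []
    for sentence in sentences:
        candidate = " ".join(current + [sentence])
        if current and estimate_seconds(candidate) > MAX_SECONDS_PER_REQUEST:
            # Close the current segment before it would exceed the ceiling.
            text = " ".join(current)
            segments.append(VoiceSegment(voice_id, text, estimate_seconds(text)))
            current = [sentence]
        else:
            current.append(sentence)
    if current:
        text = " ".join(current)
        segments.append(VoiceSegment(voice_id, text, estimate_seconds(text)))
    return segments
```

If a single sentence runs past the ceiling on its own, it still becomes its own oversized segment. A production version would need to split such a sentence further, for example at clause boundaries.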

MAI-Image-2: Production-Ready Text-to-Image Generation

MAI-Image-2 positions Microsoft among the top providers of generative image models, already powering production Copilot experiences. Unlike many research-focused image generation models that prioritize novelty over practical utility, MAI-Image-2 emphasizes reliability, consistency, and enterprise readiness.

Key technical capabilities:

- High-fidelity photorealistic image generation with improved coherence

- Accurate text rendering within generated images—a common challenge for earlier models

- Optimized latency and cost profile suitable for production applications

The model leverages Microsoft's research in diffusion models and incorporates techniques for better prompt understanding and adherence. This results in images that not only look realistic but also accurately reflect the specific details requested in the input text, a critical requirement for enterprise applications.

Provider Comparison: Azure's AI Evolution

Before MAI: The Third-Party Dependency Era

Prior to the MAI models, Azure's AI services followed a hybrid approach:

- Speech services relied on Microsoft's proprietary technology but with limited capabilities

- Text generation depended heavily on OpenAI's GPT models

- Image generation used partnerships with specialized providers

This approach created several challenges for enterprise customers:

- Integration complexity: Different models required different SDKs, authentication methods, and operational procedures

- Governance fragmentation: Compliance and security controls varied across providers

- Cost unpredictability: Token-based pricing from different providers made budgeting difficult

- Operational inconsistency: Different latency profiles, quota limits, and failure modes across services

After MAI: The First-Party Advantage

The MAI models represent a fundamental rethinking of Azure's AI architecture:

- Unified development experience: Single SDK surface, consistent authentication, and standardized operational procedures

- Native Azure integration: Models inherit Azure's security controls, compliance frameworks, and governance tools

- Agent-first design: Built for complex, multi-turn interactions rather than simple API calls

- Enterprise optimization: Cost structures designed for production workloads rather than research applications

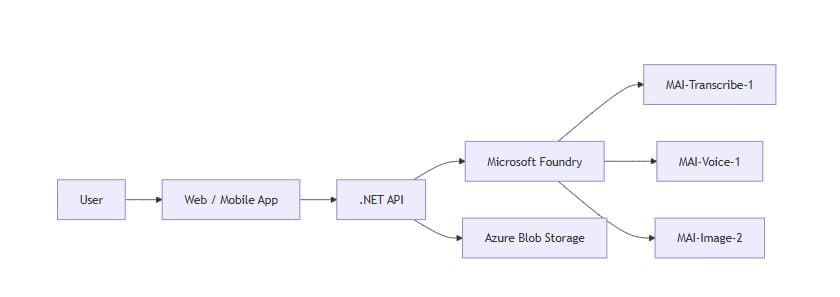

This shift creates a more cohesive AI ecosystem where developers can build sophisticated multimodal applications without managing multiple disparate services. The integration with Microsoft Foundry provides additional benefits like automated scaling, monitoring, and lifecycle management.

Business Impact: Strategic Implications for Enterprises

For Azure Developers

The MAI models significantly simplify the development of AI-powered applications:

- Reduced integration overhead: Developers no longer need to manage multiple authentication schemes, SDKs, and quota systems

- Enhanced reliability: First-party models benefit from Microsoft's enterprise support SLAs

- Improved cost predictability: Transparent pricing models optimized for production workloads

- Agent-native design: Built for complex workflows rather than simple API calls

For example, building a voice-based customer service agent becomes significantly more straightforward with MAI models, as the speech recognition, voice synthesis, and potentially language understanding can work together seamlessly within a consistent operational framework.
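One agent turn in such a flow can be sketched as three stages: transcribe the caller's audio, decide on a reply, and synthesize that reply. The `transcribe`, `respond`, and `synthesize` callables are hypothetical placeholders for whichever speech and language services are actually used. The sketch shows only how the stages compose and how per-stage latency could be recorded for the monitoring discussed later.

```python
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class TurnResult:
    transcript: str
    reply_text: str
    reply_audio: bytes
    latency_ms: dict[str, float] = field(default_factory=dict)


def run_turn(
    audio_in: bytes,
    transcribe: Callable[[bytes], str],   # hypothetical speech-to-text call
    respond: Callable[[str], str],        # hypothetical reasoning/LLM step
    synthesize: Callable[[str], bytes],   # hypothetical text-to-speech call
) -> TurnResult:
    """Run one conversational turn and record how long each stage took."""
    latency: dict[str, float] = {}

    start = time.perf_counter()
    transcript = transcribe(audio_in)
    latency["transcribe"] = (time.perf_counter() - start) * 1000

    start = time.perf_counter()
    reply_text = respond(transcript)
    latency["respond"] = (time.perf_counter() - start) * 1000

    start = time.perf_counter()
    reply_audio = synthesize(reply_text)
    latency["synthesize"] = (time.perf_counter() - start) * 1000

    return TurnResult(transcript, reply_text, reply_audio, latency)


if __name__ == "__main__":
    # Stub services so the sketch runs without any cloud dependency.
    result = run_turn(
        b"fake-audio",
        transcribe=lambda audio: "where is my order",
        respond=lambda text: f"Let me check on that. You asked: {text}",
        synthesize=lambda text: text.encode("utf-8"),
    )
    print(result.reply_text)
    print(result.latency_ms)
```

Because each stage is passed in as a plain function, the same turn logic can be tested with stubs and then pointed at real services without changing its structure.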

For Enterprise Architects

The MAI models offer several strategic advantages:

- Reduced third-party dependency: Although the models are proprietary and deepen reliance on Azure itself, keeping the stack within Azure's ecosystem reduces dependency on external model providers

- Enhanced security posture: Integration with Azure's security and compliance frameworks simplifies regulatory adherence

- Operational efficiency: Unified tooling reduces the operational burden of managing multiple AI services

- Future-proof architecture: Models designed for agent-based systems align with the evolution toward more autonomous AI applications

For Multi-Cloud Strategists

Microsoft's in-house AI models create interesting considerations for organizations pursuing multi-cloud strategies:

- Competitive differentiation: Azure now offers capabilities that may not be available on other platforms

- Interoperability challenges: While Azure benefits from this integration, multi-cloud environments may face increased complexity

- Pricing leverage: Organizations can use Microsoft's comprehensive offering to negotiate better terms with other providers

- Specialization opportunities: Different cloud platforms may develop unique strengths in specific AI domains
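A common way to contain the interoperability cost is to write application code against a thin, provider-neutral interface and keep each vendor's SDK calls inside an adapter. The sketch below uses a Python `Protocol` for this. The adapter classes are hypothetical stand-ins, not real wrappers for the Azure, AWS, or Google SDKs, and the fallback-on-error policy is an illustrative choice.

```python
from typing import Protocol, Sequence


class Transcriber(Protocol):
    """Provider-neutral speech-to-text interface."""
    name: str

    def transcribe(self, audio: bytes, language: str) -> str: ...


class TranscriptionError(Exception):
    """Raised when every configured provider has failed."""


def transcribe_with_fallback(
    providers: Sequence[Transcriber], audio: bytes, language: str = "en-US"
) -> tuple[str, str]:
    """Try each provider in order and return (provider_name, transcript)."""
    errors: list[str] = []
    for provider in providers:
        try:
            return provider.name, provider.transcribe(audio, language)
        except Exception as exc:  # a real adapter would catch narrower errors
            errors.append(f"{provider.name}: {exc}")
    raise TranscriptionError("; ".join(errors))


# Hypothetical adapters; real ones would wrap each vendor's SDK.
class PrimaryAdapter:
    name = "primary"

    def transcribe(self, audio: bytes, language: str) -> str:
        raise TimeoutError("simulated outage")


class SecondaryAdapter:
    name = "secondary"

    def transcribe(self, audio: bytes, language: str) -> str:
        return f"[{language}] {len(audio)} bytes transcribed"


if __name__ == "__main__":
    print(transcribe_with_fallback([PrimaryAdapter(), SecondaryAdapter()], b"abc"))
```

The trade-off is that the shared interface can only expose features that every provider supports. Capabilities unique to one platform still need a provider-specific path.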

Implementation Considerations and Best Practices

Migration Path

Organizations currently using Azure's third-party AI services should consider a phased approach to adopting MAI models:

- Assessment: Evaluate current workloads for compatibility with MAI models

- Proof of concept: Test MAI models in non-production environments to validate capabilities

- Parallel deployment: Run both old and new services in production during transition

- Full migration: Complete the transition once performance and reliability are validated
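For speech workloads, the assessment and parallel-deployment phases are often scored with word error rate (WER). WER is the word-level edit distance between a reference transcript and the model output, divided by the number of reference words. The minimal sketch below assumes that human-verified reference transcripts exist for a sample of production audio. Its text normalization (lowercasing and splitting on whitespace) is deliberately simple, and a real evaluation would typically also strip punctuation and standardize numbers.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count,
    computed with a Levenshtein dynamic program over words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")
    # prev[j] = edit distance between the ref prefix processed so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion
                curr[j - 1] + 1,     # insertion
                prev[j - 1] + cost,  # substitution or match
            )
        prev = curr
    return prev[len(hyp)] / len(ref)


def mean_wer(pairs: list[tuple[str, str]]) -> float:
    """Average WER over (reference, hypothesis) pairs."""
    return sum(word_error_rate(r, h) for r, h in pairs) / len(pairs)


if __name__ == "__main__":
    reference = "please reset my account password"
    current_out = "please reset my count password"    # existing service
    candidate_out = "please reset my account password"  # system under test
    print(mean_wer([(reference, current_out)]), mean_wer([(reference, candidate_out)]))
```

During parallel deployment, the same sample can be run through both systems and the two mean WER values compared before the cutover decision.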

Optimization Strategies

To maximize the value of MAI models:

- Leverage agent frameworks: Design applications that take advantage of the agent-first capabilities

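- Implement caching: For voice synthesis and image generation, cache outputs for repeated requests (for example, the same script and voice, or the same prompt and settings) so identical generations are not paid for twice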

- Custom model fine-tuning: Where appropriate, fine-tune models with domain-specific data

- Monitor performance: Implement comprehensive monitoring to track accuracy, latency, and costs
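A content-addressed cache is one simple form of this caching. Each request (prompt or script, voice, settings) is reduced to a canonical form and hashed, and a repeat of the same request reuses the first output. The in-memory sketch below is illustrative only. `generate` stands in for a hypothetical model call, and a production system would more likely use a shared store such as a blob container or Redis with an expiry policy. Caching also removes run-to-run variation for image prompts, which may or may not be what a given application wants.

```python
import hashlib
import json
from typing import Callable


class GenerationCache:
    """In-memory, content-addressed cache for generation results."""

    def __init__(self, generate: Callable[[dict], bytes]) -> None:
        self._generate = generate  # hypothetical model call
        self._store: dict[str, bytes] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def key_for(request: dict) -> str:
        # Canonical JSON (sorted keys, fixed separators) so equivalent
        # requests hash to the same key regardless of field order.
        canonical = json.dumps(request, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def get(self, request: dict) -> bytes:
        """Return a cached result, or generate, store, and return a new one."""
        key = self.key_for(request)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = self._generate(request)
        self._store[key] = result
        return result


if __name__ == "__main__":
    cache = GenerationCache(lambda req: req["text"].encode("utf-8"))
    cache.get({"model": "voice-model", "voice_id": "v1", "text": "Hello"})
    cache.get({"text": "Hello", "voice_id": "v1", "model": "voice-model"})
    print(cache.hits, cache.misses)
```

The hit and miss counters double as an input to the monitoring point above, since the hit rate shows directly how much generation spend the cache is avoiding.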

Cost Management

The enterprise-optimized pricing of MAI models offers better cost predictability, but organizations should still implement cost controls:

- Set usage quotas: Implement programmatic limits based on business requirements

- Implement auto-scaling: Scale resources based on demand rather than maintaining constant capacity

- Use reserved capacity: For predictable workloads, commit to longer terms, where the service offers such commitments, for better pricing

- Monitor cost drivers: Track which features and usage patterns contribute most to costs
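A programmatic quota can be as simple as a budget guard that checks the estimated cost of each call before it is made and records spend per feature. The per-feature record also gives a rough view of cost drivers. The sketch below is illustrative only. The unit prices in the example are made-up placeholders rather than published MAI pricing, and real figures should come from Azure pricing or billing exports.

```python
from collections import defaultdict


class BudgetExceeded(Exception):
    """Raised when a call would push spend past the configured limit."""


class BudgetGuard:
    """Enforce a spending cap for a billing period and track spend per feature."""

    def __init__(self, limit: float) -> None:
        self.limit = limit
        self.spent = 0.0
        self.by_feature: dict[str, float] = defaultdict(float)

    def charge(self, feature: str, units: float, unit_price: float) -> None:
        """Record a call's cost, refusing it if the cap would be exceeded."""
        cost = units * unit_price
        if self.spent + cost > self.limit:
            raise BudgetExceeded(
                f"{feature}: {cost:.2f} would exceed remaining "
                f"{self.limit - self.spent:.2f}"
            )
        self.spent += cost
        self.by_feature[feature] += cost

    def top_drivers(self, n: int = 3) -> list[tuple[str, float]]:
        """Features ranked by total spend, highest first."""
        return sorted(self.by_feature.items(), key=lambda kv: kv[1], reverse=True)[:n]


if __name__ == "__main__":
    guard = BudgetGuard(limit=100.0)
    guard.charge("transcription", units=120, unit_price=0.25)  # placeholder price
    guard.charge("image", units=10, unit_price=4.0)            # placeholder price
    print(guard.top_drivers())
```

Floats keep the sketch short. Code that reconciles against actual invoices would more typically use `decimal.Decimal` to avoid rounding drift.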

Future Outlook

Microsoft's MAI models represent just the beginning of a broader strategic shift. Future developments may include:

- Multimodal integration: Deeper integration between speech, voice, and image capabilities

- Domain-specific models: Specialized versions for industries like healthcare, finance, and manufacturing

- Enhanced customization: More granular control over model behavior and output characteristics

- Edge deployment: Optimized versions for on-premises and edge computing environments

Conclusion

Microsoft's MAI models mark a significant evolution in Azure's AI capabilities, moving from a patchwork of third-party services to a cohesive, first-party stack designed for enterprise workloads. This shift provides Azure with stronger competitive differentiation, simplifies development for Azure customers, and creates a more reliable foundation for building advanced AI applications.

For organizations evaluating cloud providers, Microsoft's in-house AI models now offer a compelling value proposition that combines Microsoft's enterprise-grade infrastructure with increasingly capable AI models. The agent-first design philosophy aligns with the industry's move toward more autonomous AI systems, positioning Azure customers for future innovations.

As the cloud AI landscape continues to evolve, Microsoft's strategic investment in in-house AI development will likely play an increasingly important role in determining competitive positioning. Organizations should begin evaluating these capabilities now to understand how they might enhance their AI initiatives and differentiate their offerings in the market.
