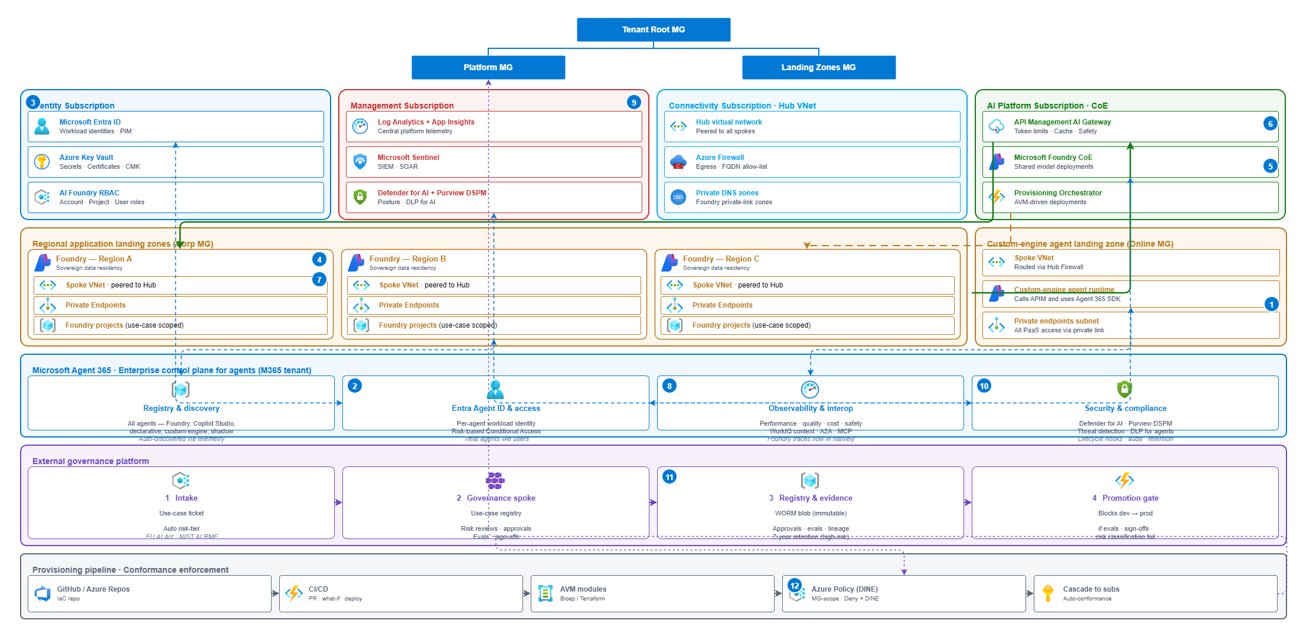

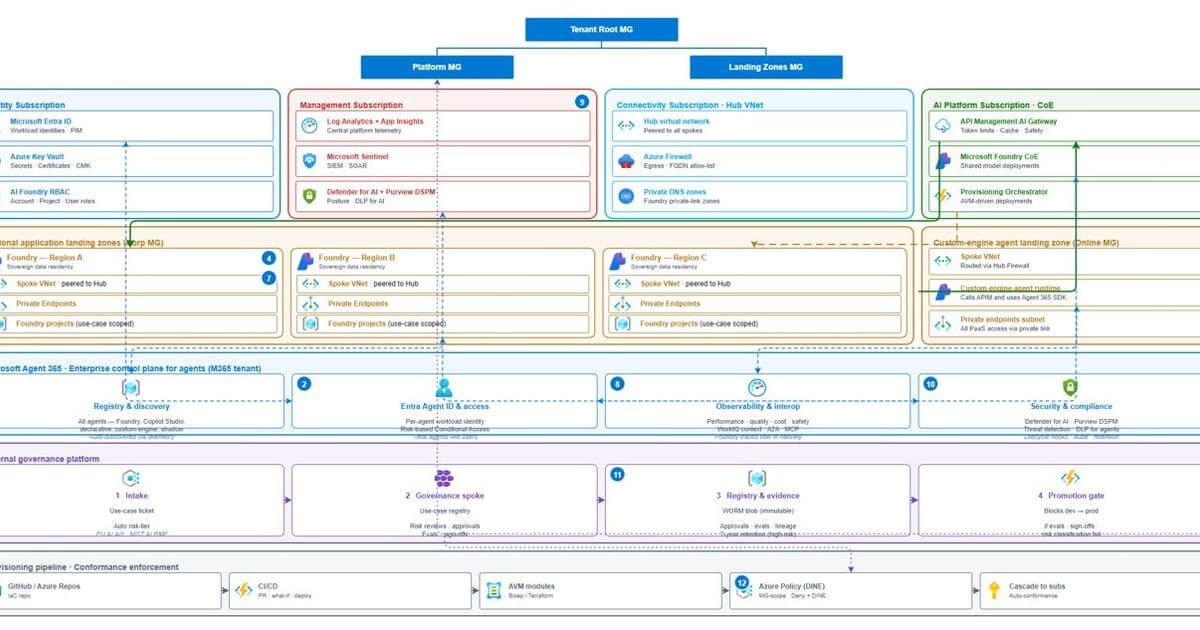

Microsoft introduces a comprehensive reference architecture for governing AI agents across multiple Azure regions, addressing the growing challenge of agent sprawl through a three-layered approach that separates control from execution.

Governing Agent Sprawl: Microsoft's Multi-Region AI Agent Architecture

The rapid proliferation of AI agents in enterprise environments has created a new governance challenge. What begins as isolated innovations (chatbots, document summarizers, API-connected tools) quickly escalates into dozens or hundreds of autonomous systems operating across subscriptions, regions, and tools. This "agent sprawl" shifts the conversation from model quality to uncomfortable operational questions: Who owns this agent? What data can it access? What happens if it misbehaves? Why did it just consume half our monthly token budget in a day?

Microsoft's new reference architecture addresses this challenge by treating AI agents as first-class, governable workloads that are provisioned automatically, constrained by policy, and observable from day one. The architecture is built around a fundamental principle: separating control from execution.

The Core Principle: Control vs. Execution

The architecture begins with a simple but non-negotiable rule: control plane concerns must be separated from runtime concerns. This mirrors Azure landing zone best practices, where management groups, Azure Policy, and RBAC are global constructs, while workloads run in specific regions.

- Runtime plane: Where agents execute, models infer, and data flows across multiple Azure regions

- Control plane: Where identity, policy, safety, evaluation, and oversight reside—independent of region

This separation enables teams to scale agents without losing control, creating a governance framework that grows with the organization's AI footprint.

Layer 1: Azure AI Gateway — Governing Every Request

The first control layer sits directly in the request path, providing policy enforcement and observability through Azure API Management's AI Gateway capabilities. This gateway isn't a separate service but an extension of Azure API Management that everything flows through:

- Microsoft Foundry model deployments

- Azure AI Model Inference API endpoints

- OpenAI-compatible third-party models

- Self-hosted models

- MCP servers and A2A agent APIs (preview)

Key Governance Functions

The AI Gateway intentionally focuses on operational controls rather than business context:

Token quotas and rate limits: The `llm-token-limit` policy enforces tokens-per-minute or quota ceilings per consumer before requests reach the backend, preventing one application or agent from exhausting shared capacity.

Content safety at ingress: The `llm-content-safety` policy integrates Azure AI Content Safety to moderate prompts automatically, ensuring unsafe requests never reach the model.

Traffic routing and resiliency: Azure API Management supports multi-region gateway deployment (Premium tier), automatically routing traffic to the next closest gateway if a region fails.

Observability: Token usage, prompts, and completions are logged to Azure Monitor and Application Insights using built-in policies like `llm-emit-token-metric`.
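To make the token-quota idea concrete, here is a minimal Python sketch of a per-consumer tokens-per-minute ceiling using a sliding window. It is not how `llm-token-limit` is implemented inside API Management; the `TokenRateLimiter` class, its parameters, and the consumer IDs are hypothetical and exist only to illustrate the behavior described above.

```python
import time
from collections import defaultdict, deque


class TokenRateLimiter:
    """Illustrative per-consumer tokens-per-minute ceiling (sliding window)."""

    def __init__(self, tokens_per_minute, clock=time.monotonic):
        self.tokens_per_minute = tokens_per_minute
        self.clock = clock  # injectable so the limiter can be tested
        # consumer id -> deque of (timestamp, tokens) seen in the last 60 s
        self.usage = defaultdict(deque)

    def allow(self, consumer_id, requested_tokens):
        """Return True and record usage if the request fits in the window."""
        now = self.clock()
        window = self.usage[consumer_id]
        # Discard entries that are 60 seconds old or older.
        while window and now - window[0][0] >= 60:
            window.popleft()
        used = sum(tokens for _, tokens in window)
        if used + requested_tokens > self.tokens_per_minute:
            return False  # rejected before it would reach the backend
        window.append((now, requested_tokens))
        return True
```

Because usage is tracked per consumer, one agent hitting its ceiling does not affect the budget of another, which is the property the gateway policy is meant to provide.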

The gateway's narrow focus on traffic governance rather than agent behavior is intentional—this layer ensures requests are safe and controlled, while more nuanced governance happens at higher layers.
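The failover behavior mentioned above is handled by API Management itself, so no custom code is needed. Purely as an illustration of the "next closest gateway" rule, a sketch might look like the following; the function name, region names, and latency figures are made up for the example.

```python
def pick_gateway(latency_ms, healthy):
    """Return the lowest-latency healthy region, or None if all are down.

    latency_ms: dict mapping region name -> measured latency in milliseconds
    healthy:    set of region names currently passing health checks
    """
    candidates = [region for region in latency_ms if region in healthy]
    return min(candidates, key=latency_ms.get, default=None)


# Hypothetical latencies as seen from one client location.
latency = {"westeurope": 12, "northeurope": 25, "eastus": 90}
print(pick_gateway(latency, {"northeurope", "eastus"}))
```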

Layer 2: Azure AI Foundry Control Plane — Governing Behavior at Scale

The second layer addresses what agents do, not just how requests flow. Azure AI Foundry Control Plane provides a unified management surface for AI agents, models, and tools across projects and subscriptions (currently in public preview).

Key Capabilities

Fleet-wide inventory: Every agent, model, and tool appears in a single, searchable view across projects, eliminating the visibility challenges of distributed agent deployments.

Continuous evaluation: Foundry runs evaluations that measure task adherence, groundedness, tool-call accuracy, sensitive data exposure, and other agent-specific risk dimensions on production traffic.

Centralized guardrails: Policy is enforced across inputs, outputs, and tool interactions, with bulk remediation capabilities across the entire agent fleet.

Security integration: Foundry connects with:

- Microsoft Entra for agent identity

- Microsoft Defender for threat signals

- Microsoft Purview for data protection and compliance visibility

The Foundry Control Plane requires an AI Gateway to be configured for advanced governance scenarios, reinforcing the layered approach where each layer has distinct responsibilities.
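As a rough illustration of what continuous evaluation across a fleet involves, the sketch below aggregates per-agent scores and flags agents whose average falls below a threshold. The metric names echo the dimensions listed above, but the `EvalResult` type, the threshold values, and the 0.0 to 1.0 scale are assumptions for the example, not Foundry's actual data model or API.

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical minimum acceptable mean score per metric (0.0 to 1.0).
THRESHOLDS = {
    "task_adherence": 0.80,
    "groundedness": 0.85,
    "tool_call_accuracy": 0.90,
}


@dataclass
class EvalResult:
    agent_id: str
    metric: str
    score: float


def flag_agents(results, thresholds=THRESHOLDS):
    """Return {agent_id: [metrics whose mean score is below its threshold]}."""
    grouped = {}
    for r in results:
        grouped.setdefault((r.agent_id, r.metric), []).append(r.score)
    flagged = {}
    for (agent_id, metric), scores in grouped.items():
        if metric in thresholds and mean(scores) < thresholds[metric]:
            flagged.setdefault(agent_id, []).append(metric)
    return flagged
```

The output of a function like this is what makes bulk remediation practical: the same list of flagged agents can feed a single fleet-wide action rather than one fix per project.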

Layer 3: Microsoft Agent 365 — Enterprise Oversight

The third layer recognizes that Azure governance alone is insufficient for enterprise AI operations. Microsoft Agent 365 (currently in Frontier Preview, with GA planned for May 1, 2026) serves as the tenant-level control plane for AI agents, bringing them under the same administrative model used for users and applications.

Why This Layer Matters

Agent 365 introduces controls that Azure alone cannot provide:

Agent registry: A single inventory of all agents in the tenant, covering both sanctioned and shadow agents; unsanctioned agents can be quarantined.

Identity-first access control: Every agent receives an Entra agent ID, allowing Conditional Access policies to apply to agents the same way they do to users.

Human-in-the-loop oversight: Agents surface in Microsoft 365 admin workflows, not just Azure portals, making them visible to IT teams responsible for enterprise productivity.

Security and compliance: Defender and Purview extend threat detection and data protection policies to agent activity, creating consistent security postures across all enterprise systems.

Crucially, Agent 365 doesn't replace Foundry Control Plane but complements it by connecting agent operations to enterprise identity, compliance, and productivity systems.
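The registry-and-quarantine idea can be pictured with a small data-structure sketch. This is not the Agent 365 API; the `AgentRecord` fields and the `quarantine_shadow_agents` helper are hypothetical and only show the logic of separating sanctioned agents from shadow ones.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AgentRecord:
    agent_id: str
    owner: Optional[str]   # None when nobody has claimed the agent
    sanctioned: bool
    status: str = "active"


def quarantine_shadow_agents(registry):
    """Mark every unsanctioned agent as quarantined and return their ids."""
    quarantined = []
    for record in registry:
        if not record.sanctioned and record.status != "quarantined":
            record.status = "quarantined"
            quarantined.append(record.agent_id)
    return quarantined
```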

Implementation Approach

From Approval to Automated Provisioning

The architecture integrates with existing governance processes through automated workflows. When a use case is approved in an external governance system, that system calls the Azure DevOps REST API to trigger a pipeline that:

- Provisions subscriptions and resource groups

- Deploys Foundry projects

- Configures Azure API Management with AI Gateway policies

- Enables monitoring and logging

This ensures governance is applied before the first request is made, establishing guardrails from the outset.
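A governance system could trigger such a pipeline with a single HTTP call. The sketch below builds a request for the Azure DevOps "run pipeline" endpoint using only the Python standard library. The organization, project, pipeline ID, and the template parameter names are placeholders, and the pipeline itself (the steps listed above) is assumed to exist already.

```python
import base64
import json
import urllib.request


def build_pipeline_run_request(organization, project, pipeline_id, pat, parameters):
    """Build (but do not send) a request that queues a pipeline run."""
    url = (
        f"https://dev.azure.com/{organization}/{project}"
        f"/_apis/pipelines/{pipeline_id}/runs?api-version=7.1"
    )
    # A personal access token is sent via HTTP Basic auth with an empty user name.
    token = base64.b64encode(f":{pat}".encode()).decode()
    # templateParameters are passed to the pipeline's declared parameters.
    body = json.dumps({"templateParameters": parameters}).encode()
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Basic {token}",
            "Content-Type": "application/json",
        },
    )


# Hypothetical values; sending would be urllib.request.urlopen(request).
request = build_pipeline_run_request(
    "contoso", "ai-platform", 42, "<personal-access-token>",
    {"useCaseId": "UC-1234", "region": "westeurope"},
)
```

Keeping the approval record's identifier in the parameters (here the assumed `useCaseId`) gives every provisioned resource a traceable link back to the decision that authorized it.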

One Policy Model, Many Regions

The architecture follows Azure landing zone guidance by being region-agnostic at the governance layer. Policies and RBAC apply globally, while AI Gateway enforces limits locally in each region. Runtime services scale region by region, allowing expansion to new regions without introducing a new governance model—only additional capacity.
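One way to picture "one policy model, many regions" is a single policy object from which each regional gateway configuration is derived. The following sketch uses invented field names and is not an Azure Policy or API Management schema; it only shows that adding a region adds capacity without adding a new policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GlobalPolicy:
    """Defined once, independent of region (hypothetical fields)."""
    content_safety_enabled: bool
    tokens_per_minute_per_consumer: int


def regional_configs(policy, regions):
    """Derive one gateway config per region from the single global policy."""
    return {
        region: {
            "region": region,
            "content_safety": policy.content_safety_enabled,
            "tokens_per_minute": policy.tokens_per_minute_per_consumer,
        }
        for region in regions
    }


policy = GlobalPolicy(content_safety_enabled=True, tokens_per_minute_per_consumer=5000)
configs = regional_configs(policy, ["westeurope", "eastus"])
```

Expanding to a third region means adding one name to the list; the policy object, and therefore the governance model, stays unchanged.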

Single Operational View

Signals flow upward through the layers:

- AI Gateway emits traffic and usage metrics

- Foundry Control Plane correlates evaluations, guardrail enforcement, and security alerts

- Agent 365 aggregates tenant-level identity, compliance, and threat signals

This creates a prioritized operational view that keeps each signal's context intact, so operations teams do not have to hunt across multiple dashboards.
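The upward flow of signals can be sketched as a simple correlation step: group signals from all three layers by agent, then rank agents by their most severe signal. The signal shape, the layer labels, and the severity scale below are assumptions made for illustration, not a Microsoft schema.

```python
from collections import defaultdict

# Hypothetical severity scale shared by all layers.
SEVERITY = {"low": 1, "medium": 2, "high": 3}


def prioritized_view(signals):
    """Group signals by agent and sort agents by their highest severity."""
    by_agent = defaultdict(list)
    for signal in signals:
        by_agent[signal["agent_id"]].append(signal)
    return sorted(
        by_agent.items(),
        key=lambda item: max(SEVERITY[s["severity"]] for s in item[1]),
        reverse=True,
    )


signals = [
    {"agent_id": "agent-3", "layer": "gateway", "severity": "low", "detail": "token usage spike"},
    {"agent_id": "agent-7", "layer": "foundry", "severity": "medium", "detail": "groundedness drop"},
    {"agent_id": "agent-7", "layer": "agent365", "severity": "high", "detail": "threat alert"},
]
view = prioritized_view(signals)
```

Each entry in the resulting view carries every signal for that agent, so the context from the lower layers is not lost when the tenant-level alert is what raises its priority.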

Business Impact and Considerations

What This Architecture Provides

This reference architecture offers enterprises a foundation for scaling agentic AI without accepting chaos as the cost of innovation. It specifically addresses:

- Visibility: Complete inventory of all agents across the enterprise

- Control: Policy enforcement at multiple levels of granularity

- Observability: Comprehensive monitoring of agent behavior and performance

- Security: Integration with existing enterprise security tools

- Compliance: Alignment with regulatory requirements and organizational policies

What It Doesn't Promise

The architecture is not a silver bullet. It doesn't eliminate the need for:

- Clear agent ownership models

- Business-level approval processes

- Ongoing evaluation of agent usefulness

- Strategic decisions about which use cases should be automated

Rather, it provides the technical foundation that makes these governance processes effective at scale.

Strategic Implications

Microsoft's approach represents a significant evolution in AI governance, reflecting several strategic priorities:

- Operational maturity: Moving beyond experimental AI to production-grade systems with proper oversight

- Enterprise integration: Connecting AI operations to existing identity, security, and productivity ecosystems

- Multi-region scalability: Designing for global deployments from the start

- Layered governance: Recognizing that different aspects of AI require different governance approaches

For organizations adopting this architecture, the transition from uncontrolled agent proliferation to a structured governance model represents a maturation of their AI capabilities—enabling innovation with appropriate safeguards.

The architecture acknowledges that agent sprawl is not merely a tooling failure but an architectural one. By separating control from execution, layering governance where it belongs, and aligning AI operations with existing Azure and Microsoft 365 control planes, Microsoft provides enterprises with a way to move fast without losing sight of what their agents are doing.

This approach creates a clear distinction between experimentation and production—allowing organizations to innovate responsibly while maintaining the controls necessary for enterprise-grade AI operations.

