Mozilla fixed 423 Firefox security vulnerabilities in April 2026, more than five times the prior month's total, and credited AI tools with identifying previously undetected flaws. The results carry significant implications for data protection compliance across the software industry.

Mozilla's April 2026 Firefox security update addressed 423 unique vulnerabilities, more than five times the 76 fixes issued in March 2026 and nearly 20 times the browser maker's 2025 monthly average of 21.5 fixes. The company attributes the surge to its use of AI models, including Anthropic's Mythos Preview, which identified 271 of the previously unknown flaws, and Opus 4.6, which accounted for a significant but unspecified additional share. For organizations subject to data protection regulations, this development highlights both new opportunities to meet vulnerability management requirements and open questions about how to validate the effectiveness of AI-driven security tools.
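The ratios quoted above can be checked directly from the reported counts. The short Python sketch below uses only the figures given in this article; the variable names are illustrative.

```python
# Figures as reported in the article.
april_fixes = 423
march_fixes = 76
avg_2025_monthly = 21.5
mythos_findings = 271

ratio_to_march = round(april_fixes / march_fixes, 2)
ratio_to_2025_avg = round(april_fixes / avg_2025_monthly, 2)
mythos_share_pct = round(100 * mythos_findings / april_fixes, 1)
not_attributed_to_mythos = april_fixes - mythos_findings

print(ratio_to_march)            # 5.57  ("more than five times")
print(ratio_to_2025_avg)         # 19.67 ("nearly 20 times")
print(mythos_share_pct)          # 64.1
print(not_attributed_to_mythos)  # 152
```

The remaining 152 fixes are simply those not attributed to Mythos Preview; the article does not say how many of them were found by Opus 4.6 rather than by other methods.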

Regulatory Requirements for Vulnerability Remediation

Data protection frameworks across jurisdictions impose explicit obligations on entities that process personal data to secure that data against unauthorized access or disclosure. Article 32 of the EU General Data Protection Regulation (GDPR), which took effect on May 25, 2018, requires controllers and processors to implement "appropriate technical and organizational measures" to ensure a level of security appropriate to the risk, including measures to address vulnerabilities in systems that handle personal data. The California Consumer Privacy Act (CCPA), effective January 1, 2020, and amended by the California Privacy Rights Act (CPRA) effective January 1, 2023, includes a similar mandate, requiring reasonable security procedures and practices to protect personal information.

Regulators expect these measures to include regular vulnerability testing, prompt remediation of identified flaws, and documentation of all security activities to demonstrate compliance during audits. High-severity vulnerabilities that could allow remote code execution or unauthorized data access typically require remediation within 30 days of identification, while lower-severity issues may be addressed in quarterly update cycles. Failure to meet these timelines can result in fines, enforcement actions, and mandatory breach notifications if unpatched vulnerabilities lead to data exposure.

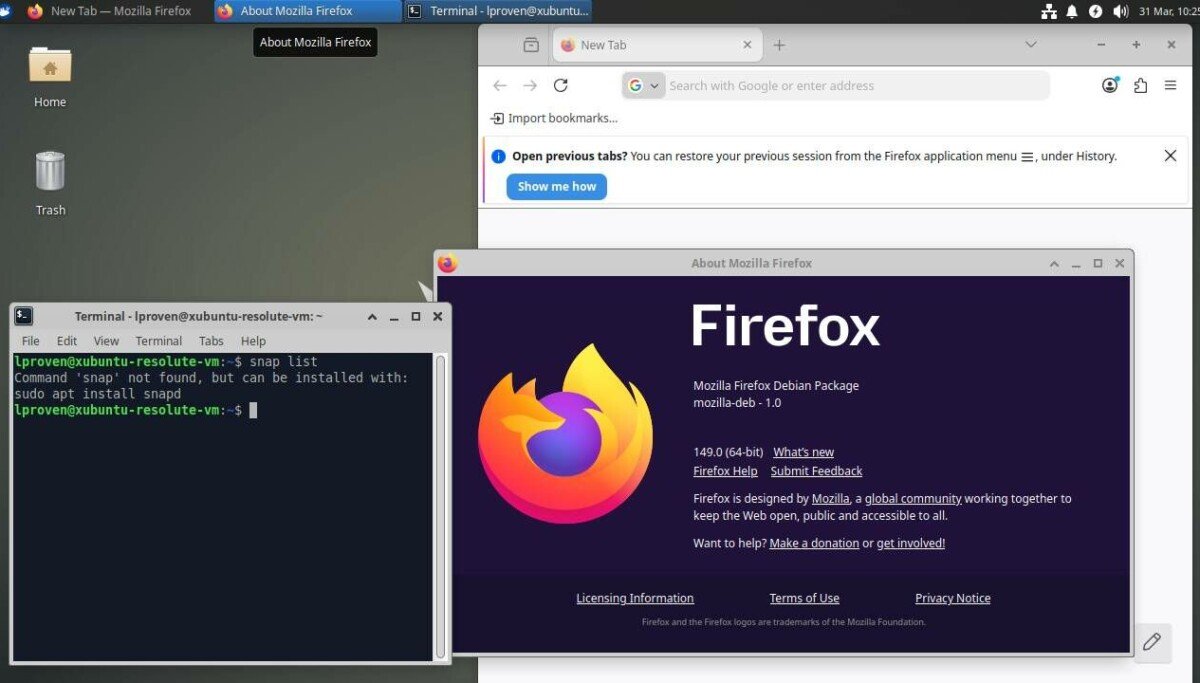

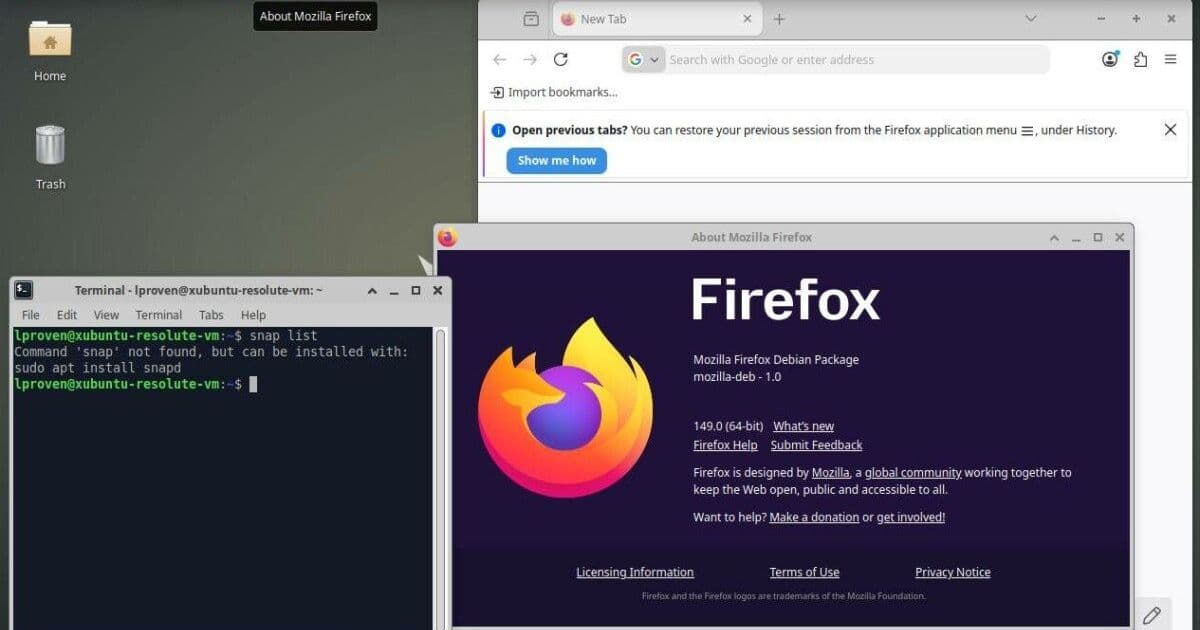

Mozilla's AI-Driven Remediation Push

Mozilla's technical leadership team, including distinguished engineer Brian Grinstead, tech lead Christian Holler, and head of security Frederik Braun, noted that AI-generated security reports have shifted from low-quality, unactionable outputs to reliable findings over the past several months. This improvement stems from two factors: the capabilities of newer AI models, and the development of agentic harnesses, or middleware that mediates between the AI model and the code being analyzed to improve signal-to-noise ratios.
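To make the idea of a harness improving signal-to-noise concrete, the following Python sketch shows one generic pattern: keeping only model-generated reports that an independent check can confirm. It is an illustration under stated assumptions, not a description of Mozilla's harness. The report shape, the `filter_reports` helper, and the stubbed `reproduces` check are all hypothetical.

```python
from typing import Callable, Dict, Iterable, List

Report = Dict[str, object]  # hypothetical report shape, e.g. {"id": ..., "confirmed_by_test": ...}


def filter_reports(
    reports: Iterable[Report], reproduces: Callable[[Report], bool]
) -> List[Report]:
    """Keep only reports whose claimed flaw is confirmed by an independent check."""
    return [report for report in reports if reproduces(report)]


def stub_reproduces(report: Report) -> bool:
    # Stand-in for a real check such as running a generated test case
    # against an instrumented build; here it just reads a flag.
    return bool(report.get("confirmed_by_test"))


raw_reports = [
    {"id": "R1", "confirmed_by_test": True},
    {"id": "R2", "confirmed_by_test": False},
    {"id": "R3", "confirmed_by_test": True},
    {"id": "R4", "confirmed_by_test": False},
]

confirmed = filter_reports(raw_reports, stub_reproduces)
print([r["id"] for r in confirmed])      # ['R1', 'R3']
print(len(confirmed) / len(raw_reports))  # 0.5
```

In practice the confirmation step is the expensive part, and its quality largely determines how many unactionable reports reach human reviewers.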

Anthropic's Mythos Preview model, part of the company's Project Glasswing early access program for high-risk AI tools, identified the flaws behind 271 of the 423 April fixes. Mozilla also used Opus 4.6, a lower-cost general-purpose model, to identify additional vulnerabilities. Mozilla typically keeps detailed bug reports private for several months after releasing fixes to protect users who have not yet updated their browsers. However, the team chose to publish 12 sample reports early, citing extraordinary industry interest in AI-driven security and the need to encourage broader adoption of these tools. All public Firefox security advisories are available on the Mozilla Security Advisories page, with individual bug details accessible via the Mozilla Bugzilla tracker.

Among the identified flaws is a high-severity heap use-after-free bug in the XSLTProcessor DOM API that had persisted for 20 years, which could be triggered by a malicious web page without any user interaction. Many of the fixed vulnerabilities are sandbox escapes, which are difficult to detect using traditional fuzzing techniques. Mozilla also noted that AI analysis helped validate prior browser hardening work designed to prevent prototype pollution attacks, with audit logs showing AI models attempting and failing to exploit these protections, confirming their effectiveness.

Compliance Implications for Data Processors

For organizations that develop software handling personal data, Mozilla's results demonstrate that AI tools can expand vulnerability detection coverage beyond traditional methods like fuzzing, helping meet the GDPR and CCPA requirement to implement appropriate technical measures. The ability to identify long-standing, high-severity flaws that evaded previous testing is particularly relevant for compliance, as regulators prioritize remediation of vulnerabilities that pose clear risks to user data.

Mozilla's decision to publish sample bug reports early also offers a template for balancing transparency and user protection. While regulators encourage transparency about security practices, organizations must avoid releasing vulnerability details before the majority of users have applied fixes, to prevent bad actors from exploiting unpatched systems. Documentation of AI tool usage, including which models and harnesses were used, which bugs were identified, and remediation timelines, will be critical for demonstrating compliance during regulatory audits.

Open Questions About Tool Effectiveness

Security researchers have raised concerns about unverified claims regarding AI tool effectiveness. Davi Ottenheimer, president of security consultancy flyingpenguin, noted that Mozilla's reported bug count does not include a comparison to other detection methods, making it impossible to determine whether Mythos provided unique value over lower-cost alternatives. Opus 4.6, for example, costs roughly one-fifth as much as Mythos. Ottenheimer's own testing, which paired Anthropic's Sonnet 4.6 and Haiku 4.5 models with a custom harness called Wirken, identified 8 vulnerabilities in 2 minutes at a cost of roughly $0.75, with 2 of those findings matching bugs previously identified by Mythos.

Ottenheimer argues that Mozilla's advocacy for AI-driven remediation lacks proper evidence, as the company does not quantify how many bugs were found by Opus 4.6 before attributing the remainder to Mythos. He suggests that improvements in the agentic harness, rather than the model itself, may be responsible for the surge in identified bugs. This aligns with Mozilla's own admission that "we dramatically improved our techniques for harnessing these models" alongside adopting newer AI systems.

For compliance teams, this underscores the need to conduct independent validation of AI security tools before deploying them to meet regulatory requirements. Side-by-side testing of multiple models and harness configurations, with documentation of findings and costs, will help organizations select tools that provide measurable value and stand up to regulatory scrutiny. Relying solely on vendor marketing claims creates compliance risk if tools do not deliver the promised results.
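A side-by-side comparison of this kind can start with simple set arithmetic over finding identifiers. The Python sketch below is a minimal illustration: `compare_runs` is a hypothetical helper and the bug identifiers are placeholders. Only the counts (8 findings, 2 overlapping) and the roughly $0.75 cost mirror the figures Ottenheimer reported.

```python
from typing import Dict, Optional, Set


def compare_runs(
    reference: Set[str], candidate: Set[str], candidate_cost_usd: float
) -> Dict[str, object]:
    """Summarize how a candidate tool's findings relate to a reference set."""
    cost_per_finding: Optional[float] = (
        candidate_cost_usd / len(candidate) if candidate else None
    )
    return {
        "overlap": sorted(reference & candidate),
        "unique_to_candidate": sorted(candidate - reference),
        "cost_per_finding_usd": cost_per_finding,
    }


# Placeholder identifiers; not real bug numbers.
reference_findings = {"REF-1", "REF-2", "REF-3"}
candidate_findings = {"REF-1", "REF-2"} | {f"NEW-{i}" for i in range(1, 7)}

summary = compare_runs(reference_findings, candidate_findings, candidate_cost_usd=0.75)
print(len(summary["overlap"]))              # 2
print(len(summary["unique_to_candidate"]))  # 6
print(summary["cost_per_finding_usd"])      # 0.09375
```

Counts alone do not establish value. A fuller evaluation would also record the severity and exploitability of each finding, since a cheap tool that surfaces only low-severity issues is not equivalent to one that finds sandbox escapes.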

Compliance Timeline and Recommended Actions

Organizations subject to data protection regulations should align their vulnerability remediation timelines with the severity of identified flaws, as outlined in regulatory guidance. High-severity vulnerabilities like those identified in Mozilla's April update should be remediated within 30 days of discovery, with all remediation activities documented in a central register.
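A central register can compute remediation deadlines from severity so that overdue items are easy to spot. The Python sketch below is one possible shape for such an entry, not a prescribed format. The 30-day window for high-severity flaws comes from the text above. The 90-day window is an assumption standing in for a quarterly cycle, and the class name, field names, and identifier are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# 30 days is the high-severity window cited in the article.
# 90 days is an assumed approximation of a quarterly update cycle.
REMEDIATION_WINDOW_DAYS = {"high": 30, "low": 90}


@dataclass
class RegisterEntry:
    finding_id: str
    severity: str
    identified_on: date
    remediated_on: Optional[date] = None

    def due_date(self) -> date:
        return self.identified_on + timedelta(
            days=REMEDIATION_WINDOW_DAYS[self.severity]
        )

    def met_deadline(self, today: date) -> bool:
        """True if fixed on or before the due date, or still open but not yet due."""
        checkpoint = self.remediated_on or today
        return checkpoint <= self.due_date()


entry = RegisterEntry("EXAMPLE-001", "high", date(2026, 4, 1))
print(entry.due_date())                       # 2026-05-01
print(entry.met_deadline(date(2026, 4, 20)))  # True
print(entry.met_deadline(date(2026, 5, 2)))   # False
```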

Recommended steps for compliance teams include:

- Evaluate AI-driven vulnerability detection tools using side-by-side comparisons with existing methods, to validate effectiveness and cost efficiency.

- Document all tool configurations, including model versions and harness settings, to demonstrate due diligence during audits.

- Develop a transparent vulnerability disclosure policy that balances early sharing of non-sensitive findings with protection of users who have not applied updates.

- Regularly review the performance of AI tools to ensure they continue to meet compliance requirements as models and middleware evolve.
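The documentation step in the list above can be as simple as a structured record saved alongside each scan. The Python sketch below serializes one such record to JSON. Every field name and value is a hypothetical placeholder, and organizations would adapt the schema to their own audit requirements.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class ToolRunRecord:
    model_name: str
    model_version: str
    harness_name: str
    harness_settings: Dict[str, object]
    run_date: str  # ISO 8601 date string
    finding_ids: List[str] = field(default_factory=list)


# Placeholder values for illustration only.
record = ToolRunRecord(
    model_name="example-model",
    model_version="1.0",
    harness_name="example-harness",
    harness_settings={"max_steps": 50, "target": "parser-module"},
    run_date="2026-04-15",
    finding_ids=["EXAMPLE-001", "EXAMPLE-002"],
)

# sort_keys keeps the output stable, which makes records easy to diff between runs.
audit_json = json.dumps(asdict(record), indent=2, sort_keys=True)
print(audit_json)
```

Keeping these records under version control gives auditors a dated trail of which model and harness settings produced which findings.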

Mozilla's experience shows that AI can accelerate vulnerability remediation, but only when paired with proper validation and documentation. Regulators will expect organizations to prove that their security measures, including AI tools, are effective and appropriate to the risk posed to user data.
