Database partitioning seems like the perfect solution for scaling, but it introduces subtle complexities that can undermine your system's reliability. This article explores the trade-offs between isolation and performance, and how to design databases that scale without sacrificing consistency.

Database partitioning is often presented as the silver bullet for scaling relational databases. The promise is simple: divide your data into smaller, more manageable pieces, and your system can handle more concurrent operations with better performance. But after managing systems with millions of users across multiple continents, I've learned that partitioning introduces subtle complexities that can undermine your system's reliability in ways you might not anticipate.

The Allure of Isolation

When we first implemented partitioning at my previous company, the benefits were immediately apparent. Our query times dropped dramatically, and we could handle ten times the load with the same hardware. Each customer's data lived in its own logical space, which made it feel more secure and isolated. On paper, it was a perfect solution.

But the cracks began to show as we scaled. What started as a simple "customer_id" partition key quickly became more complex. We needed to support cross-customer analytics, which meant either duplicating data or implementing complex cross-partition joins. Our simple partitioning strategy evolved into a tangled web of special cases and exceptions.

The Deadlock Dilemma

The most painful lesson came during a routine maintenance operation. We had a process that needed to update records across multiple partitions. Under normal load, this worked fine. But during peak usage, we started experiencing deadlocks. The database's locking mechanism, which worked perfectly within a single partition, became problematic when operations spanned multiple partitions.

What happened was a classic distributed systems problem: our transactions now had to coordinate across multiple resources. The database's lock manager couldn't guarantee deadlock-free execution across partitions, leading to a situation where transactions would wait indefinitely for locks that would never be released.

We tried various solutions: reducing transaction isolation levels, implementing retry logic with exponential backoff, and redesigning our queries to minimize cross-partition operations. Each solution came with its own trade-offs in terms of consistency and complexity.

For example, when we reduced the isolation level from SERIALIZABLE to READ COMMITTED, we eliminated the deadlocks but introduced the possibility of lost updates. We had to implement application-level locking to ensure data consistency, which added significant complexity to our codebase.
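The retry logic with exponential backoff mentioned above can be sketched roughly as follows. This is a minimal illustration, not the code we ran: `DeadlockDetected` is a hypothetical stand-in for whatever deadlock or serialization error your database driver raises, and `run_with_retry` and its parameters are names chosen for this example.

```python
import random
import time


class DeadlockDetected(Exception):
    """Hypothetical stand-in for a driver's deadlock/serialization error."""


def run_with_retry(txn, max_attempts=5, base_delay=0.05, max_delay=2.0,
                   sleep=time.sleep):
    """Run txn(), retrying on DeadlockDetected with capped exponential backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return txn()
        except DeadlockDetected:
            if attempt == max_attempts:
                raise  # give up and surface the error to the caller
            # The cap doubles with each attempt, bounded by max_delay.
            cap = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Full jitter: sleep a random amount up to the cap so that
            # competing transactions do not retry in lockstep.
            sleep(random.uniform(0, cap))
```

The callable passed as `txn` should re-run the whole transaction from the start, so it has to be safe to execute more than once. The `sleep` parameter exists only so the delay can be replaced in tests.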

The Consistency Conundrum

As we spread partitions across more nodes, we faced the CAP theorem head-on. We wanted both consistency and availability, but the theorem says that when the network between nodes fails, you can keep only one of them. When network issues occurred between partitions, we had to decide whether to return potentially stale data or fail the request entirely.

Our solution was to implement a hybrid approach: strong consistency for critical operations like payments and eventual consistency for less critical operations like user preferences. But this added significant complexity to our application logic. We now had to carefully classify each operation based on its consistency requirements, which made the codebase harder to understand and maintain.

To manage this complexity, we built a consistency framework that allowed developers to specify their consistency requirements at the operation level. This framework would then automatically handle the appropriate locking, retries, and fallback mechanisms. While this solved the immediate problem, it added another layer of abstraction that developers had to learn and understand.
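To give a feel for what declaring consistency at the operation level can look like, here is a small sketch. It is not our actual framework: the `Consistency` enum, the `POLICIES` table, and the policy fields (`target`, `max_retries`) are all assumptions made for illustration.

```python
from enum import Enum
from functools import wraps


class Consistency(Enum):
    STRONG = "strong"      # e.g. payments
    EVENTUAL = "eventual"  # e.g. user preferences


# Hypothetical per-level policy: where to read from and how often to retry.
POLICIES = {
    Consistency.STRONG: {"target": "primary", "max_retries": 0},
    Consistency.EVENTUAL: {"target": "replica", "max_retries": 3},
}


def consistency(level):
    """Tag an operation with its level and pass it the matching policy."""
    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            return func(*args, policy=POLICIES[level], **kwargs)
        # Expose the level so tooling can audit how operations are classified.
        wrapper.consistency = level
        return wrapper
    return decorator


@consistency(Consistency.STRONG)
def charge_payment(amount, policy):
    return f"charge {amount} via {policy['target']}"


@consistency(Consistency.EVENTUAL)
def load_preferences(user_id, policy):
    return f"preferences for {user_id} via {policy['target']}"
```

Putting the classification in a decorator keeps the decision visible next to each operation, which is where reviewers are most likely to question it.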

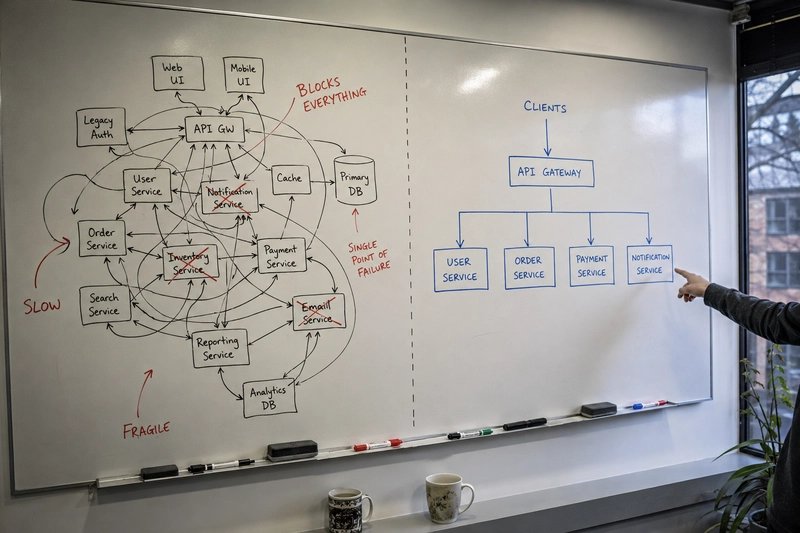

The API Design Challenge

Partitioning also affected our API design. Our initial REST APIs assumed a unified data model, but with partitioning, we had to expose different endpoints for different partitioning strategies. This led to a proliferation of API versions and complicated integration for our clients.

We eventually moved to a more abstract API layer that hid the partitioning details from clients. This layer translated between our internal partitioned schema and a unified external schema. While this solved the client-facing complexity, it added another layer of indirection that introduced its own performance implications.

For example, a simple GET request that appeared to fetch a single record might actually involve coordinating across multiple partitions behind the scenes. We had to implement caching strategies and batch operations to minimize the performance impact of this indirection.
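The batching idea can be sketched like this: group the requested keys by partition so that each partition is queried at most once per request. The fixed `NUM_PARTITIONS`, the CRC32-based routing, and the `fetch_from_partition` callback are assumptions for this example, not a description of our production layer.

```python
import zlib
from collections import defaultdict

NUM_PARTITIONS = 4  # assumed fixed partition count


def partition_for(customer_id):
    """Stable hash routing; zlib.crc32 is deterministic across processes."""
    return zlib.crc32(customer_id.encode("utf-8")) % NUM_PARTITIONS


def fetch_many(customer_ids, fetch_from_partition):
    """Group ids by partition so each partition is queried at most once."""
    by_partition = defaultdict(list)
    for cid in customer_ids:
        by_partition[partition_for(cid)].append(cid)

    results = {}
    for partition, ids in by_partition.items():
        # fetch_from_partition(partition, ids) returns {id: record};
        # the caller supplies it, so the routing logic stays testable.
        results.update(fetch_from_partition(partition, ids))
    return results
```

A cache would sit in front of `fetch_many`, so that only the ids it misses reach the partitions at all.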

The Operational Overhead

Perhaps the most surprising aspect of partitioning was the operational complexity it introduced. What used to be a simple database backup now required coordinating backups across multiple partitions. Rolling upgrades became multi-step processes that had to carefully manage data migration between partitions.

Monitoring also became more challenging. We couldn't just look at a single database instance anymore; we had to monitor the health of each partition and the relationships between them. This required building custom dashboards and alerting systems that could detect anomalies across partitions.

We eventually settled on a combination of off-the-shelf monitoring tools like Prometheus and custom-built tools for partition-specific metrics. Our monitoring system had to track not just traditional database metrics like query latency and connection counts, but also partition-specific metrics like cross-partition query rates and data skew.
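As an illustration of a partition-specific metric, data skew can be expressed as the size of the largest partition relative to the mean. The function names and the 1.5 threshold below are assumptions for this sketch, and the row counts would come from whatever catalog or statistics query your database provides.

```python
def skew_ratio(row_counts):
    """Largest partition size divided by the mean; 1.0 means perfectly even."""
    sizes = list(row_counts.values())
    if not sizes or sum(sizes) == 0:
        return 1.0
    mean = sum(sizes) / len(sizes)
    return max(sizes) / mean


def skewed_partitions(row_counts, threshold=1.5):
    """List partitions whose size exceeds threshold times the mean."""
    sizes = list(row_counts.values())
    if not sizes:
        return []
    mean = sum(sizes) / len(sizes)
    return sorted(p for p, n in row_counts.items() if n > threshold * mean)
```

With counts of 100, 100, and 400 rows, the mean is 200, so the ratio is 2.0 and only the 400-row partition crosses the default threshold. A value like this can be exported as a gauge and alerted on like any other metric.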

Lessons Learned

After several years of managing a heavily partitioned system, I've developed a set of principles that guide my database design decisions:

Start with a simple, unified schema. Only partition when you've proven that you need to scale beyond what a single instance can handle. Premature partitioning is one of the most common mistakes I see.

Choose your partition keys carefully. They should align with your access patterns, not just your data model. A poorly chosen partition key can create more problems than it solves. For example, using a timestamp as a partition key might seem logical for time-series data, but it can lead to hotspots if all your queries are for recent data.

Embrace eventual consistency where appropriate. Not all operations need strong consistency, and relaxing consistency requirements can significantly simplify your system. The key is to identify which operations can tolerate eventual consistency and design your system accordingly.

Invest in tooling. Managing partitioned systems requires custom tooling for monitoring, backup, and migration. Don't underestimate this investment. The operational overhead of partitioning is often overlooked during the design phase.

Plan for partial failure. In a partitioned system, failures are not binary—some parts of your system will be available while others are not. Design your APIs and business logic accordingly. This means implementing proper error handling and fallback mechanisms.
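To make the last principle concrete, here is one way a fan-out query can report partial failure instead of aborting on the first unreachable partition. `PartialResult`, `query_all`, and the `query_partition` callback are hypothetical names, and treating `ConnectionError` as the outage signal is an assumption; a real driver will raise its own exception types.

```python
from dataclasses import dataclass, field


@dataclass
class PartialResult:
    rows: list = field(default_factory=list)
    failed_partitions: list = field(default_factory=list)

    @property
    def complete(self):
        """True only if every partition answered."""
        return not self.failed_partitions


def query_all(partitions, query_partition):
    """Query every partition, collecting failures instead of aborting."""
    result = PartialResult()
    for partition in partitions:
        try:
            result.rows.extend(query_partition(partition))
        except ConnectionError:
            # Record the outage so the caller can degrade, retry, or fail.
            result.failed_partitions.append(partition)
    return result
```

Returning the list of failed partitions alongside the rows lets each caller decide what to do: a dashboard might render what it has, while a payment flow would likely treat an incomplete result as an error.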

The Future of Partitioning

As databases evolve, new approaches are emerging that aim to reduce the complexity of partitioning. NewSQL databases like CockroachDB and TiDB claim to provide the scalability of NoSQL with the consistency of SQL, automatically handling partitioning and replication behind the scenes.

While these technologies are promising, they're not a panacea. They introduce their own complexities and trade-offs. For example, CockroachDB's automatic partitioning can lead to unexpected data movement, which can affect performance. TiDB's separation of storage and computation adds another layer of complexity to your architecture.

Conclusion

Database partitioning is a powerful tool for scaling, but it's not without costs. The key is to understand those costs and make informed decisions based on your specific requirements. There's no one-size-fits-all solution; the right approach depends on your data model, access patterns, and consistency requirements.

In my experience, the most successful systems are those that start simple and evolve complexity only when necessary. Don't over-engineer for scale you don't have yet, but do plan for the future. The goal is to build systems that can grow with your needs, not ones that require constant rearchitecting as your requirements change.

The journey from monolith to distributed systems is fraught with challenges, but with careful planning and a deep understanding of the trade-offs, it's a path worth taking. The key is to move deliberately, measuring the impact of each change and being prepared to adapt as you learn more about your system's behavior under load.

Remember, the goal isn't to build the most complex system possible; it's to build the right system for your needs. Sometimes, that means sticking with a simpler approach longer than you might think. Other times, it means embracing the complexity of distributed systems. The important thing is to make those decisions intentionally, based on real requirements and data, not on hype or fear.
