Virtual dispatch enables runtime polymorphism but introduces pointer indirection, larger object layouts, and reduced inlining opportunities. Compilers can sometimes devirtualize these calls, but when they can't, static polymorphism via CRTP or C++23's deducing this offers zero-cost abstractions with compile-time resolution.

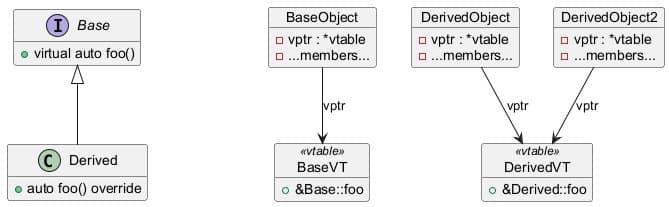

Virtual dispatch is a cornerstone of object-oriented programming, enabling runtime polymorphism by allowing derived classes to override base class methods. This flexibility comes at a cost that often goes unnoticed until benchmarks reveal it. When a method is declared virtual in a base class and overridden in derived classes, calls through base pointers or references must be resolved at runtime through a virtual table (vtable). This indirection carries several penalties: a larger object due to the vtable pointer (vptr), fewer inlining opportunities, and indirect calls that are harder for the branch predictor to get right and may add a cache miss when the vptr or the vtable entry is loaded.

To understand the impact, consider what happens at the assembly level. For a non-virtual member function, the compiler generates a direct call that can be easily inlined and optimized. When the same function is declared virtual and called through a base pointer or reference, the generated code must first load the vptr from the object, then perform an indirect call through the function pointer in the matching vtable slot. This extra indirection makes the call target harder to predict and, unless the compiler can prove the object's dynamic type, prevents inlining.
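As a rough illustration of the two call shapes, here is a minimal sketch. The types `Plain`, `Base`, and `Derived` and the helper functions are hypothetical names chosen for this example, and the comments describe what mainstream optimizing compilers typically emit rather than a guarantee.

```cpp
#include <cassert>

// Hypothetical types for illustration only.
struct Plain {
    int value() const { return 42; }           // non-virtual: direct, inlinable call
};

struct Base {
    virtual ~Base() = default;
    virtual int value() const { return 1; }    // virtual: resolved through the vtable
};

struct Derived : Base {
    int value() const override { return 2; }
};

// The dynamic type behind `b` is unknown here, so the compiler typically
// loads the vptr from `b` and calls indirectly through the vtable slot.
int call_virtual(const Base& b) { return b.value(); }

// The call target is known statically, so this usually reduces to `return 42`.
int call_direct(const Plain& p) { return p.value(); }

// Small usage check.
void example() {
    Derived d;
    assert(call_virtual(d) == 2);        // dispatches to Derived::value at run time
    assert(call_direct(Plain{}) == 42);  // resolved at compile time
}
```

Compiling both helpers with optimizations enabled and comparing the generated assembly is a quick way to see the difference on a given toolchain.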

Compilers employ various techniques to mitigate these costs through devirtualization—the process of converting virtual calls into direct calls when the compiler can statically determine which override will be called. This is straightforward when the runtime type is clearly fixed, such as when an object is constructed and used within the same scope. The compiler can track the allocation and prove there's only one possible concrete type, allowing it to emit a direct call instead of going through the vtable.
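The same-scope case can be sketched as follows. `Shape` and `Triangle` are hypothetical names, and whether the call is actually devirtualized depends on the compiler and optimization level.

```cpp
#include <cassert>

// Hypothetical hierarchy for illustration only.
struct Shape {
    virtual ~Shape() = default;
    virtual int sides() const = 0;
};

struct Triangle : Shape {
    int sides() const override { return 3; }
};

// The object is created and used in the same scope, so an optimizing compiler
// can usually prove the dynamic type is Triangle and call Triangle::sides directly.
int local_object() {
    Triangle t;
    const Shape& s = t;
    return s.sides();
}

// The reference may bind to any subclass of Shape, possibly one defined in
// another translation unit, so without whole-program knowledge the call
// generally stays indirect.
int opaque_object(const Shape& s) { return s.sides(); }

// Small usage check: both paths return the same value; only the dispatch differs.
void example() {
    Triangle t;
    assert(local_object() == 3);
    assert(opaque_object(t) == 3);
}
```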

However, traditional compilation models create limitations. Object files are compiled and optimized in isolation, with the linker simply stitching them together, so cross-translation-unit optimizations are inherently limited. Compiler flags help bridge this gap: GCC's -fwhole-program tells the compiler that the translation unit being compiled represents the entire program, allowing it to assume no external derivations exist. Link-time optimization (-flto) keeps the compiler's intermediate representation in the object files and optimizes across all of them at link time, effectively treating multiple source files as a single translation unit.

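The final keyword provides a more granular approach, allowing developers to give the compiler guarantees about specific methods or entire classes. When a method is marked final, the compiler knows it cannot be overridden further, so a call made through a pointer or reference to that class (or a class derived from it) can be emitted as a direct call even though the method is declared virtual in the base class. Calls made through a pointer or reference to the original base still need the vtable, because other derived classes may override the method. The result is a hybrid where some virtual calls remain indirect while others become direct, depending on the static type at the call site and the override chain.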
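A minimal sketch of that hybrid, using the hypothetical names `Codec` and `FastCodec`; the comments describe what compilers are permitted to do, not what any particular one is guaranteed to emit.

```cpp
#include <cassert>

// Hypothetical hierarchy for illustration only.
struct Codec {
    virtual ~Codec() = default;
    virtual int decode(int x) const { return x; }
};

struct FastCodec : Codec {
    // `final` promises that no class derived from FastCodec overrides decode.
    int decode(int x) const final { return x * 2; }
};

// Static type is FastCodec and decode is final there, so the target is known
// and the compiler may emit a direct (and inlinable) call.
int via_derived(const FastCodec& c) { return c.decode(21); }

// Static type is Codec: some other subclass could override decode,
// so this call generally remains an indirect vtable call.
int via_base(const Codec& c) { return c.decode(21); }

// Small usage check.
void example() {
    FastCodec fast;
    Codec plain;
    assert(via_derived(fast) == 42);
    assert(via_base(fast) == 42);   // same result, resolved at run time
    assert(via_base(plain) == 21);
}
```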

When devirtualization isn't possible or practical, static polymorphism offers a compelling alternative. The Curiously Recurring Template Pattern (CRTP) is the canonical approach, where a base class is templated on the derived class and invokes methods on it via static_cast. This eliminates the virtual keyword entirely, with method calls resolved at compile time. The trade-off is that each instantiation becomes a distinct, unrelated type, preventing runtime upcasting to a common base. Any shared functionality across different derived types must itself be templated.

C++23 introduces deducing this, which maintains the static-dispatch model while simplifying the syntax. Instead of templating the entire class, you template only the member function that needs access to the derived type and give it an explicit object parameter (conventionally named self), whose type the compiler deduces from the object the function is called on. For a call made on an object of the derived type, this yields the same optimized code as CRTP, with cleaner syntax and better ergonomics.

The choice between virtual and static polymorphism ultimately depends on your specific requirements. Virtual dispatch provides runtime flexibility at the cost of performance overhead, while static polymorphism offers zero-cost abstractions with compile-time resolution. Understanding these trade-offs allows developers to make informed decisions about when to embrace the abstraction of virtual functions and when to opt for the performance benefits of static polymorphism through techniques like CRTP or deducing this.

For latency-sensitive paths where every cycle counts, manually replacing dynamic dispatch with static polymorphism can yield significant performance improvements. The abstraction can effectively become zero-cost, with the compiler inlining the calls and, where the inputs are known at compile time, constant-folding the result. There is no vtable, no vptr, and no indirection, only direct calls that the optimizer can see through.

This analysis reveals that the "clean" polymorphic design that looks elegant in code may not always be the most performant choice. By understanding the underlying mechanics of virtual dispatch and the tools available for static polymorphism, developers can write code that maintains the benefits of abstraction while achieving optimal runtime performance.
