A compelling analysis of how open source AI models running locally on personal hardware might outcompete centralized cloud services, driven by rapid parity gains, economic pressures, and Apple's contrarian strategy.

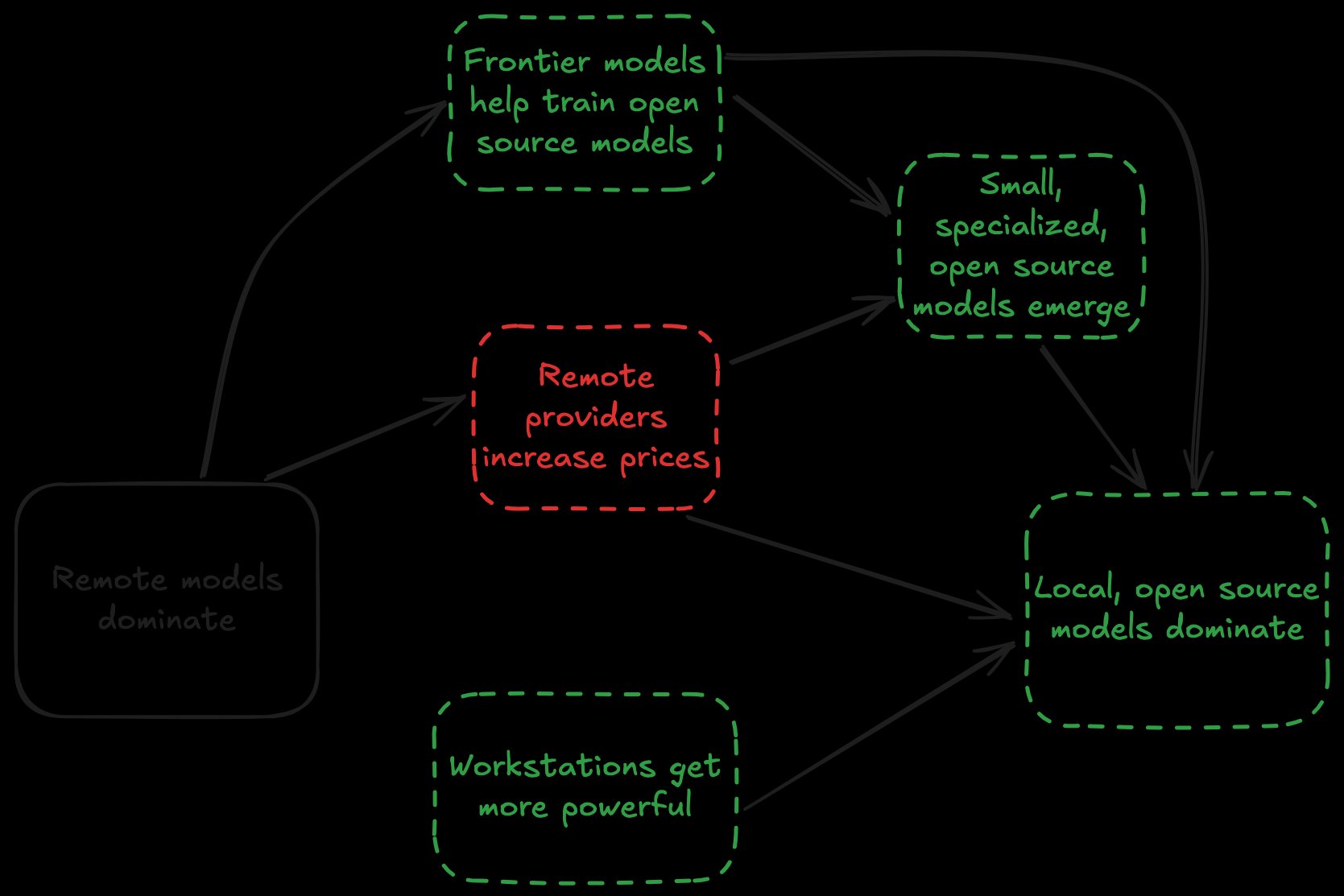

The debate over whether massive datacenter investments will pay off hinges on two scenarios: either AI adoption accelerates and justifies the spending, or it doesn't. But there's a third, increasingly plausible scenario: open source models running on local workstations could dominate the AI landscape.

This possibility rests on several converging factors that deserve serious consideration.

Open Source Models Keep Closing the Gap

With the notable exception of GPT-4, open source models have typically matched the performance of frontier models within roughly four to nine months of their release. The data shows a clear trend:

Months to open source parity with frontier models:

- GPT-3.5: ~9 months (Llama 2 70B)

- GPT-4: ~16 months (Llama 3.1 405B)

- Claude 3 Opus: ~4 months (Llama 3.1 405B)

- GPT-4o: ~7 months (DeepSeek-V3)

- Claude 3.5 Sonnet: ~6 months (DeepSeek-V3)

- o1: ~4 months (DeepSeek-R1)

If this pattern holds, each new frontier release can expect an open source equivalent within months. What's particularly interesting is that model providers have essentially built "waterslides" rather than moats: frontier models help train their open source competitors. Unauthorized distillation, while controversial, may be impossible to prevent entirely.
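As a rough check on the parity figures listed above, the lag can be summarized in a few lines of Python. The numbers are copied from the list; the variable and function names are illustrative only.

```python
from statistics import mean, median

# Months to open source parity, copied from the list above.
parity_months = {
    "GPT-3.5": 9,
    "GPT-4": 16,
    "Claude 3 Opus": 4,
    "GPT-4o": 7,
    "Claude 3.5 Sonnet": 6,
    "o1": 4,
}


def summarize(lags):
    """Return (mean, median) of a collection of lags in months."""
    values = list(lags)
    return mean(values), median(values)


all_mean, all_median = summarize(parity_months.values())

# Same summary with the GPT-4 outlier left out.
without_gpt4 = [m for name, m in parity_months.items() if name != "GPT-4"]
trimmed_mean, trimmed_median = summarize(without_gpt4)

print(f"all models:      mean {all_mean:.1f}, median {all_median:.1f}")
print(f"excluding GPT-4: mean {trimmed_mean:.1f}, median {trimmed_median:.1f}")
```

On these figures, the average lag excluding GPT-4 is six months, and including it raises the average to a little under eight.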

Economic Pressures Mount on Cloud Providers

The unit economics of frontier models resemble Uber's "cheap ride era"—unsustainable pricing that will eventually need to adjust. OpenAI projects $14 billion in losses for 2026 despite $13 billion in revenue, with $8 billion of that going to compute costs. Anthropic faces similar challenges, with reports suggesting a $200/month Claude Max subscription could consume up to $5,000 in compute.
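To make the subscription gap concrete, here is a minimal sketch of the arithmetic behind the Claude Max figures quoted above. Both inputs are reported numbers rather than measurements, and the function and variable names are made up for illustration.

```python
# Figures quoted in the text above; both are reported numbers, not measurements.
subscription_price_usd = 200      # Claude Max, per month
reported_max_compute_usd = 5_000  # reported upper bound on compute consumed, per month


def subsidy_ratio(compute_cost, price):
    """Dollars of compute consumed per dollar of subscription revenue."""
    return compute_cost / price


ratio = subsidy_ratio(reported_max_compute_usd, subscription_price_usd)
shortfall = reported_max_compute_usd - subscription_price_usd

print(f"compute per subscription dollar: {ratio:.0f}x")
print(f"worst-case monthly shortfall per heavy user: ${shortfall:,}")
```

In the worst reported case, a heavy user consumes 25 times what they pay, which is the kind of gap that rate limits and price increases are meant to close.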

This has already led to rate limits on premium subscriptions and pricing experiments like Claude Code Review, which costs $15-$25 per PR. Because little explanation has been offered for why this should replace existing review workflows, such offerings look like experiments to test enterprise price tolerance.

Specialized Models Will Fill the Gap

As prices increase, demand will grow for smaller, specialized models that can handle specific tasks efficiently. Today's low prices mean people default to the most powerful model regardless of need—but that will change. A Python style fix doesn't need a frontier model; a fine-tuned, smaller model can handle it at a fraction of the cost. One whitepaper claimed parity with GPT-4o using a fine-tuned GPT-4o-mini model at just 2% of the cost.
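The routing idea described above can be sketched as follows. This is a hypothetical illustration, not any vendor's API: the model names, task labels, and cost units are all assumptions, and the 2% cost figure simply mirrors the whitepaper claim mentioned in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str
    cost_per_request: float  # arbitrary units; hypothetical


# Hypothetical models. The 2% ratio mirrors the whitepaper claim in the text.
FRONTIER = Model("frontier-general", cost_per_request=1.00)
SPECIALIST = Model("small-finetuned", cost_per_request=0.02)

# Hypothetical set of narrow tasks a fine-tuned small model is assumed to handle.
SPECIALIST_TASKS = {"python_style_fix", "import_sorting", "docstring_format"}


def route(task_kind: str) -> Model:
    """Send narrow, well-understood tasks to the specialist; the rest to the frontier model."""
    return SPECIALIST if task_kind in SPECIALIST_TASKS else FRONTIER


def total_cost(task_kinds) -> float:
    """Sum the per-request cost of a workload after routing each task."""
    return sum(route(kind).cost_per_request for kind in task_kinds)


# Example workload: mostly routine fixes, a few tasks that need the big model.
workload = ["python_style_fix"] * 8 + ["architecture_review"] * 2
routed = total_cost(workload)
frontier_only = FRONTIER.cost_per_request * len(workload)

print(f"routed: {routed:.2f} vs frontier-only: {frontier_only:.2f}")
```

The savings depend entirely on how much of a workload is routine; in this made-up mix, routing cuts cost by roughly a factor of five.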

Apple's Contrarian Bet

While Amazon, Microsoft, Meta, and Google are spending over $100 billion per quarter on data centers, Apple has taken a different approach. Its capital expenditure strategy might be "the luckiest accident in history" according to some observers. Apple has been criticized for being "behind" on AI, but its strategy appears to be: let competitors burn cash training models, let advances propagate into open source, and make devices capable of running them locally.

Hardware Is Rapidly Catching Up

The most recent MacBook Pro M4 Max represents a significant leap in what's viable locally. Looking at the progression:

Max usable model by device:

- 2020 M1: 15B parameters

- 2021 M1 Pro: 17B parameters

- 2022 M1 Pro: 17B parameters

- 2023 M3 Pro: 19B parameters

- 2024 M4 Pro: 25B parameters

- 2025 M5: 34B parameters

- 2026 M5 Max (projected): 136B parameters

Today, running frontier models locally remains out of reach for most users, but the gap is closing rapidly.
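One way to read the progression listed above is as a compound annual growth rate. The sketch below uses only the figures from the list and keeps the projected 2026 entry separate, since it is a forecast in the source; the function name is illustrative.

```python
# Max usable model size in billions of parameters, copied from the list above.
# The 2026 entry is a projection in the source, so it is kept separate.
observed = {2020: 15, 2021: 17, 2022: 17, 2023: 19, 2024: 25, 2025: 34}
projected = {2026: 136}


def annual_growth(series):
    """Compound annual growth rate between the first and last year of a series."""
    years = sorted(series)
    first, last = years[0], years[-1]
    return (series[last] / series[first]) ** (1 / (last - first)) - 1


observed_rate = annual_growth(observed)
with_projection_rate = annual_growth({**observed, **projected})

print(f"2020-2025: {observed_rate:.0%} per year")
print(f"2020-2026 (incl. projection): {with_projection_rate:.0%} per year")
```

The observed entries imply growth of a bit under 20% per year. Most of the steeper figure comes from the projected 2026 jump, so it deserves matching caution.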

The Compelling Value Proposition

If local open source models can achieve parity with hosted alternatives, they offer a compelling value proposition: fast, private, and free. This possibility hasn't received much attention because no one stands to get mega-rich from it—but that's precisely what makes it a potent threat to current leaders.

The convergence of rapid open source improvement, unsustainable cloud economics, specialized model demand, Apple's contrarian strategy, and rapidly advancing local hardware creates conditions where local AI could indeed become the dominant paradigm. The question isn't whether this is possible, but whether it's probable—and the evidence suggests it might be more likely than many realize.

The chart showing Apple's capital expenditure strategy relative to its competitors has become something of a meme in tech circles, but it might also be a prescient indicator of where the AI industry is heading. While everyone else is building bigger datacenters, Apple is betting on making your laptop powerful enough to handle the job.

This isn't just about cost savings or privacy—it's about a fundamental shift in how we think about AI infrastructure. If the future of AI is local, it could mean a more distributed, resilient, and user-controlled AI ecosystem. And that future might be arriving sooner than we think.
