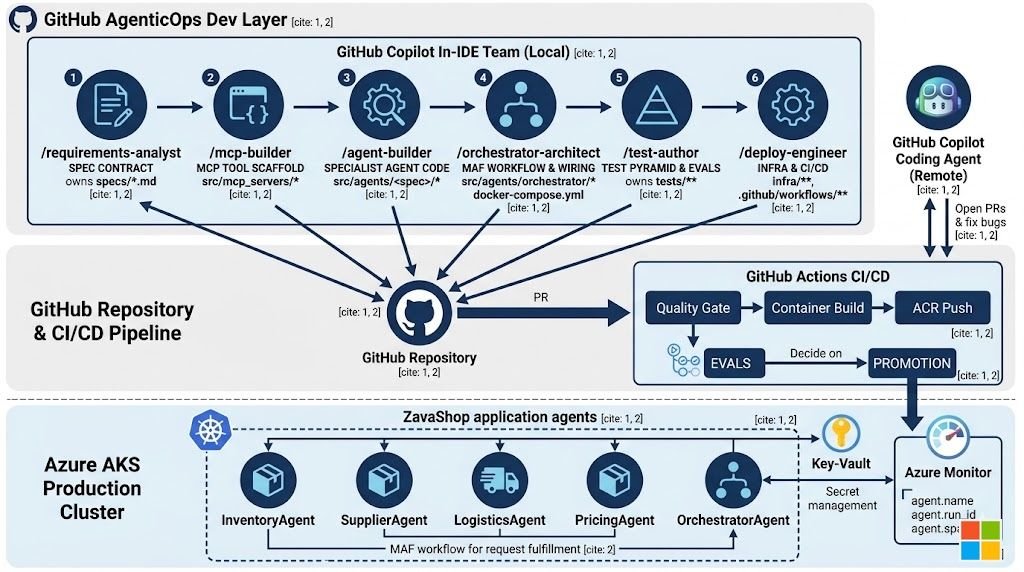

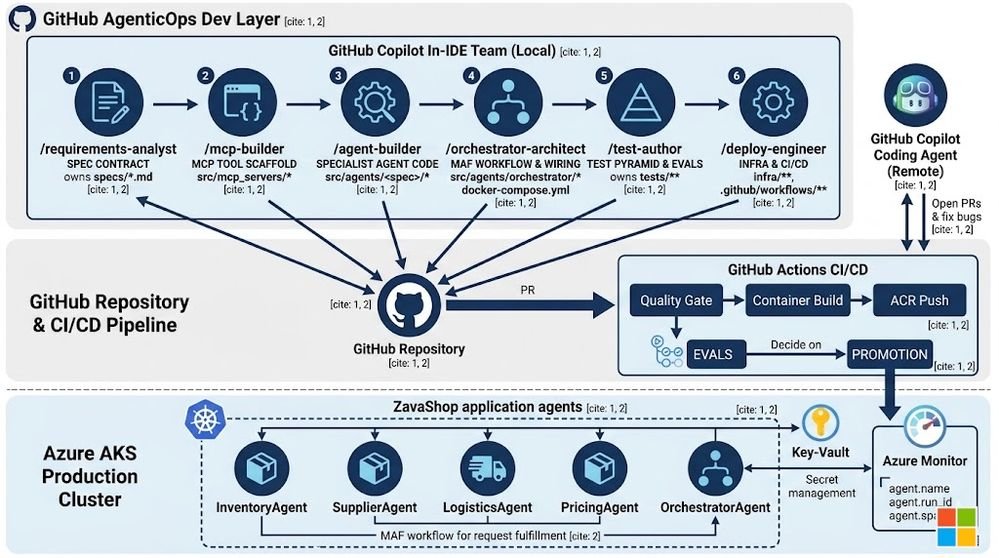

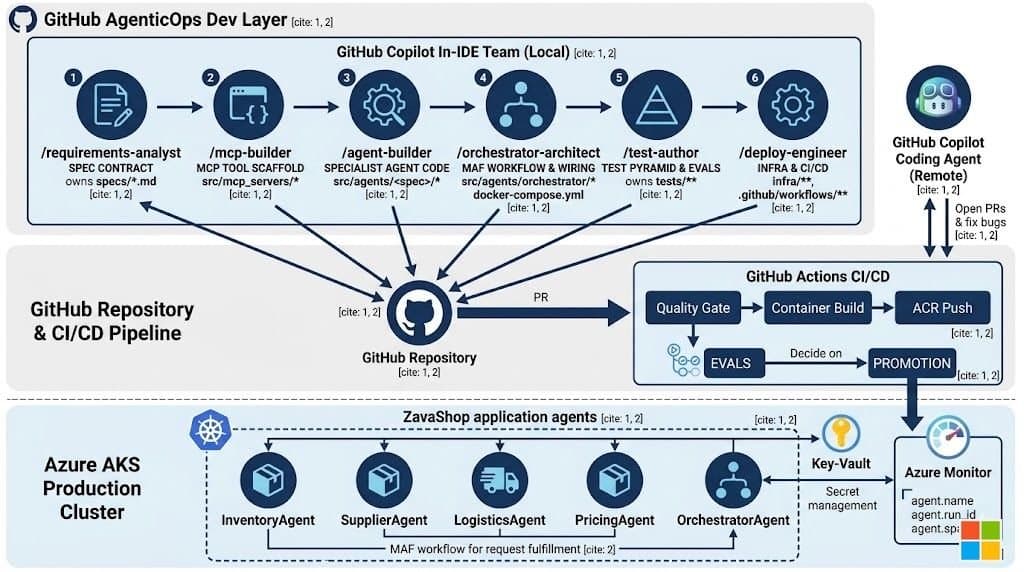

The Microsoft AKS‑Lab‑GitHubCopilot workshop shows how to move from Copilot as a pair‑programmer to a full‑stack, agent‑driven development model. By defining six custom coding agents and a remote GitHub Copilot coding agent, the lab demonstrates a programmable software lifecycle—specs → agents → tests → PRs → deployment—while enforcing scoped ownership, refusal rules, and eval‑driven quality gates.

AgenticOps in Action – Six Copilot Coding Agents Power a Retail Control Plane on AKS

What changed?

For the past two years GitHub Copilot has been marketed as an inline pair programmer that suggests the next line of code. The AKS‑Lab‑GitHubCopilot labs rewrite that narrative. Instead of a single assistant that nudges a human developer, the repository now hosts six custom coding agents plus a remote GitHub Copilot coding agent that together own every slice of the codebase, run tests, and push deployments. The result is a programmable development lifecycle where a spec triggers a chain of agents, each with its own tools, skills, and refusal rules, and the final output is a pull request that a human simply reviews.

Provider comparison – agents, tooling, and pricing implications

| Aspect | Custom Coding Agents (local) | Remote GitHub Copilot Coding Agent | Traditional Copilot (inline) |
|---|---|---|---|
| Execution context | Runs inside VS Code, discovered from `.github/agents/*.agent.md`. | Runs on GitHub infrastructure, triggered by an issue. | Runs in the developer’s IDE as a suggestion engine. |
| Scope definition | Each agent declares an ownership path (e.g., `src/agents/inventory/*`) and a refusal envelope that blocks it from touching other directories. | Policy is expressed in `AGENTS.md`; the remote agent may only open PRs under `src/` and `tests/`. | No explicit ownership; suggestions can affect any file. |
| Tooling | Uses the GitHub Copilot SDK (Python) together with the Microsoft Agent Framework (MAF). | Same SDK, but runs in a sandboxed container that can execute tests before PR creation. | Directly calls the Copilot model for autocomplete. |
| Cost model | Charged per token usage of the underlying model, similar to Copilot for Business, but amortized across many automated runs. | Billed per GitHub Actions minute plus Copilot usage; the remote agent can be throttled via workflow concurrency. | Per‑user subscription (e.g., $19/mo) for autocomplete. |
| Governance | Refusal rules are code; they are version‑controlled and audited. | Central policy file (`AGENTS.md`) enforces what the remote agent may modify. | Governance is informal and relies on human discipline. |
| Observability | Each run emits `agent.name`, `agent.run_id`, `agent.span_id` via structlog, enabling traceability back to the specific agent version. | Same telemetry is attached to the PR, and GitHub Actions logs expose the remote agent’s run ID. | No built‑in telemetry beyond editor logs. |
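The scope-definition row can be made concrete with a small check that compares an agent's changed files against its ownership path. This is a minimal sketch only: `AgentScope` and `refused_paths` are hypothetical names, not part of the lab, the Copilot SDK, or MAF, and the glob matching relies on Python's standard `fnmatch` module.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class AgentScope:
    """Hypothetical in-memory form of an agent's ownership declaration."""

    name: str
    owned_globs: tuple[str, ...]

    def may_modify(self, path: str) -> bool:
        # fnmatchcase's "*" also matches "/", so nested files are covered.
        return any(fnmatchcase(path, glob) for glob in self.owned_globs)


def refused_paths(scope: AgentScope, changed: list[str]) -> list[str]:
    """Return the changed paths that fall outside the agent's ownership."""
    return [path for path in changed if not scope.may_modify(path)]


# Ownership path taken from the table; the file names are made up.
inventory = AgentScope("inventory", ("src/agents/inventory/*",))
violations = refused_paths(
    inventory,
    ["src/agents/inventory/stock.py", "src/agents/pricing/rules.py"],
)
print(violations)
```

A non-empty result would be grounds for the agent to refuse the change, or for a reviewer to reject the PR.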

Why the shift matters for budgeting and migration

- Predictable spend – Because agents only run when a spec is created, token consumption follows a business‑driven cadence rather than every keystroke. Organizations can cap the number of spec‑driven runs per sprint and forecast Copilot costs accordingly.

- Migration path – Existing monolithic repos can be refactored by introducing a `requirements‑analyst` agent that extracts specs from legacy documentation. Once specs exist, the other agents can be incrementally added, allowing a step‑wise migration from human‑only pipelines to AgenticOps.

- Compliance – Scoped ownership and refusal envelopes turn policy into code, making it auditable for standards such as SOC 2 or ISO 27001. The remote agent’s PR‑only workflow also satisfies change‑management requirements that demand human sign‑off.
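The spend argument above reduces to simple arithmetic: runs per sprint times tokens per run times the token price. The sketch below illustrates that forecast and the matching cap on runs. Every number in it is a placeholder chosen for illustration, not a published Copilot or Azure price, and both function names are hypothetical.

```python
def forecast_sprint_cost(
    runs_per_sprint: int, avg_tokens_per_run: int, usd_per_1k_tokens: float
) -> float:
    """Estimated model spend for one sprint of spec-driven agent runs."""
    return runs_per_sprint * avg_tokens_per_run / 1000 * usd_per_1k_tokens


def max_runs_within_budget(
    budget_usd: float, avg_tokens_per_run: int, usd_per_1k_tokens: float
) -> int:
    """Largest whole number of runs that fits inside the sprint budget."""
    cost_per_run = avg_tokens_per_run / 1000 * usd_per_1k_tokens
    return int(budget_usd // cost_per_run)


# Placeholder inputs: 40 runs, 50k tokens per run, $0.01 per 1k tokens.
sprint_cost = forecast_sprint_cost(40, 50_000, 0.01)
run_cap = max_runs_within_budget(100.0, 50_000, 0.01)
print(f"forecast: ${sprint_cost:.2f}, cap: {run_cap} runs")
```

The same cap could then be enforced wherever spec-driven runs are scheduled, so spend tracks the number of specs rather than the number of keystrokes.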

Business impact – the four pillars of AgenticOps

1. Specs as contracts

The requirements‑analyst agent creates markdown specs (specs/*.md) that capture goals, API contracts, and evaluation scenarios. No code is generated until a spec exists, turning requirements into the source of truth.
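One way to read "no code is generated until a spec exists" is as a precondition checked before any other agent starts. The sketch below assumes a spec is ready when the file exists and mentions the three elements named above. The section names, the `spec_is_ready` helper, and the `inventory.md` file name are illustrative assumptions rather than the lab's actual format.

```python
import tempfile
from pathlib import Path

# Assumed section names, derived from the elements the article lists.
REQUIRED_SECTIONS = ("goals", "api contract", "evaluation scenarios")


def spec_is_ready(spec_path: Path) -> bool:
    """True only if the spec file exists and names every required section."""
    if not spec_path.is_file():
        return False
    text = spec_path.read_text(encoding="utf-8").lower()
    return all(section in text for section in REQUIRED_SECTIONS)


with tempfile.TemporaryDirectory() as tmp:
    spec = Path(tmp) / "specs" / "inventory.md"  # hypothetical spec name
    ready_before = spec_is_ready(spec)  # no spec yet, so generation is blocked
    spec.parent.mkdir()
    spec.write_text(
        "# Goals\n...\n# API contract\n...\n# Evaluation scenarios\n...\n",
        encoding="utf-8",
    )
    ready_after = spec_is_ready(spec)

print(ready_before, ready_after)
```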

2. Skills as living documentation

Shared knowledge lives in .github/skills/<skill>/SKILL.md. Before writing code, an agent consults the relevant skill file (e.g., Kubernetes patterns or MAF idioms). This eliminates drift: updates to a skill instantly affect all agents that depend on it.
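The "no drift" property follows from reading the skill file at the moment of use rather than copying it into each agent. A minimal sketch of that lookup follows. It uses the `.github/skills/<skill>/SKILL.md` layout described above, while `load_skills` and the `kubernetes-patterns` skill name are hypothetical.

```python
import tempfile
from pathlib import Path


def skill_path(repo_root: Path, skill: str) -> Path:
    """Resolve a skill name to its SKILL.md file under .github/skills/."""
    return repo_root / ".github" / "skills" / skill / "SKILL.md"


def load_skills(repo_root: Path, skills: list[str]) -> dict[str, str]:
    """Read each skill file fresh; fail loudly if a declared skill is missing."""
    loaded: dict[str, str] = {}
    for skill in skills:
        path = skill_path(repo_root, skill)
        if not path.is_file():
            raise FileNotFoundError(f"missing skill file: {path}")
        loaded[skill] = path.read_text(encoding="utf-8")
    return loaded


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    target = skill_path(root, "kubernetes-patterns")  # hypothetical skill
    target.parent.mkdir(parents=True)
    target.write_text("v1: use readiness probes\n", encoding="utf-8")
    first = load_skills(root, ["kubernetes-patterns"])
    # Editing the skill file changes what the next run sees.
    target.write_text("v2: use readiness and startup probes\n", encoding="utf-8")
    second = load_skills(root, ["kubernetes-patterns"])

print(first["kubernetes-patterns"], second["kubernetes-patterns"])
```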

3. Evals as quality gates

A four‑layer test pyramid plus five golden evaluation scenarios (S1–S5) run on every PR, whether authored by a human or an agent. The command uv run poe check enforces the same standards across the board, ensuring that the author—human or AI—cannot bypass quality checks.
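The gate logic itself is author-agnostic: a PR is promoted only if every required check reports a pass, and a missing result counts as a failure. The sketch below illustrates that rule for the five golden scenarios. `CheckResult` and `gate` are hypothetical names, and the code does not reproduce what `uv run poe check` actually runs.

```python
from dataclasses import dataclass

# Scenario identifiers from the article; their contents are not modeled here.
GOLDEN_SCENARIOS = ("S1", "S2", "S3", "S4", "S5")


@dataclass(frozen=True)
class CheckResult:
    name: str
    passed: bool


def gate(
    results: list[CheckResult], required: tuple[str, ...]
) -> tuple[bool, list[str]]:
    """Return (ok, failing); a required check with no result is a failure."""
    by_name = {result.name: result.passed for result in results}
    failing = [name for name in required if not by_name.get(name, False)]
    return (not failing, failing)


# Example run: S3 fails and S5 never reported, so the PR is blocked.
reported = [
    CheckResult("S1", True),
    CheckResult("S2", True),
    CheckResult("S3", False),
    CheckResult("S4", True),
]
ok, failing = gate(reported, GOLDEN_SCENARIOS)
print(ok, failing)
```

Nothing in the function inspects who authored the change, which is the point: the same inputs produce the same verdict for a human and for an agent.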

4. Observability tied to identity

Every log entry includes the agent’s name and run identifiers. When a production failure surfaces, you can trace it back to the exact agent version that generated the offending code, dramatically reducing MTTR.
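To show what identity-tagged logs might look like, here is a self-contained sketch that attaches `agent.name`, `agent.run_id`, and `agent.span_id` to every record. The lab uses structlog; this example substitutes Python's standard `logging` module so it runs without extra dependencies, and the filter and formatter classes are illustrative rather than taken from the repository.

```python
import io
import json
import logging
import uuid


class AgentContextFilter(logging.Filter):
    """Attach the agent identity fields to every record on the logger."""

    def __init__(self, agent_name: str, run_id: str, span_id: str) -> None:
        super().__init__()
        self.fields = {
            "agent.name": agent_name,
            "agent.run_id": run_id,
            "agent.span_id": span_id,
        }

    def filter(self, record: logging.LogRecord) -> bool:
        record.agent_fields = self.fields
        return True


class JsonFormatter(logging.Formatter):
    """Render each record as one JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {"event": record.getMessage(), "level": record.levelname.lower()}
        payload.update(getattr(record, "agent_fields", {}))
        return json.dumps(payload, sort_keys=True)


stream = io.StringIO()  # stands in for stdout or a log shipper
handler = logging.StreamHandler(stream)
handler.setFormatter(JsonFormatter())

logger = logging.getLogger("agent.demo")
logger.setLevel(logging.INFO)
logger.propagate = False
logger.addHandler(handler)
run_id = uuid.uuid4().hex
logger.addFilter(AgentContextFilter("inventory", run_id, uuid.uuid4().hex[:16]))

logger.info("spec_loaded")
parsed = json.loads(stream.getvalue().strip())
print(parsed)
```

Filtering production logs on `agent.run_id` then leads back to the run, and from there to the agent definition that was in the repository at that commit.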

Walking through the labs – who does what and when?

| Lab | Primary agents involved | Key outcome |
|---|---|---|
| Lab 01 – Environment Setup | None (infrastructure provisioning) | AKS cluster, Azure Container Apps, ACR, Key Vault, and Workload Identity are provisioned. Six custom agents are installed into VS Code. |
| Lab 02 – Agent Creation | requirements‑analyst, mcp‑builder, agent‑builder | Specs for each domain agent are generated, MCP servers are scaffolded, and typed ChatAgents are built. |
| Lab 03 – Orchestration & Config | orchestrator‑architect | Deterministic MAF workflow is wired, secrets move to Key Vault, Docker Compose runs the whole fleet locally. |
| Lab 04 – Testing | test‑author (local) + remote GitHub Copilot Coding Agent | Test pyramid is authored; a failing eval is filed as an issue, the remote agent creates a PR that must pass the same CI pipeline. |
| Lab 05 – Deployment & Run | deploy‑engineer | Helm chart and Bicep modules are generated; /ship‑it workflow drives build, ACR push, ACA deployment, AKS rollout, smoke tests, and evals. |

How the DevOps pipeline transforms

- Atomic work unit – The pipeline now centers on a spec rather than a commit. A spec triggers one or more agents, which produce commits that feed CI.

- Code review focus – Reviewers evaluate whether an agent respected its refusal rules, consulted the correct skills, and passed all evals, rather than hunting for style issues.

- Governance as code – Policies are encoded in `AGENTS.md` and the agents’ refusal envelopes, removing reliance on ad‑hoc checklists.

- Accelerated onboarding – New engineers read the agent definitions and skill files instead of dozens of wiki pages, shortening the ramp‑up period.

Takeaways for organizations planning a migration

- Scope before capability – Define the minimal toolset each agent needs. This reduces surface area for errors and simplifies policy enforcement.

- Invest in spec‑driven flows – Even if you don’t adopt full AgenticOps immediately, a robust requirements‑analyst process yields immediate ROI by clarifying intent early.

- Make evals non‑negotiable – Treat the evaluation stage as a hard gate; any PR—human or AI—must clear it before promotion.

- Instrument every action – Embed `agent.name` and run IDs in logs; this creates a traceable lineage from production incident back to the generating agent.

Resources

- Repository – https://github.com/microsoft/AKS-Lab-GitHubCopilot

- GitHub Copilot SDK (Python) – https://github.com/github/copilot-sdk-python

- Microsoft Agent Framework (MAF) – https://learn.microsoft.com/azure/agent-framework

- Governance patterns for delegating issues to Copilot – https://github.com/microsoft/agent-governance

Updated May 14 2026 – Version 1.0
