Cloudflare’s new Workflows V2 brings a step‑based deterministic execution model, expands concurrency to 50 000 instances, and adds richer observability. The update reshapes how developers build event‑driven pipelines, AI agents, and long‑running business processes on the edge.

Cloudflare Workflows V2 – A New Execution Model for Edge‑Native Orchestration

Cloudflare announced Workflows V2 on May 15, 2026, delivering a deterministic, replayable execution engine that can run up to 50 000 concurrent workflow instances. The upgrade lifts the previous limit of 4 500 instances and raises per‑account throughput from 100 to 300 new executions per second. Queue capacity also doubles, from 1 million to 2 million items per workflow. Together, these limits make it practical to run global, event‑driven systems such as AI inference pipelines, data‑sync services, and large‑scale background processing reliably at the edge.

Why the change matters

The original Workflows (V1) introduced durable primitives that let developers chain Workers, Queues, and Durable Objects. In practice, teams hit three pain points:

- Scaling limits – high‑traffic APIs quickly exhausted the 4 500‑instance ceiling.

- Observability gaps – step‑level tracing was coarse, making debugging of long‑running jobs difficult.

- Non‑deterministic retries – when a step failed, the entire workflow often had to replay, risking duplicate side‑effects.

Workflows V2 addresses each of these with a step‑based deterministic model. Each step runs in isolation and records its own checkpoint, so a failure triggers a retry of only the offending step while earlier work remains untouched. Steps are expected to be idempotent, which is what makes those retries replay‑safe. The result resembles the guarantees of a transactional database, applied to distributed, serverless code.
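To make the step‑level retry idea concrete, here is a minimal in‑memory sketch. It is not the Cloudflare Workflows API: `CheckpointedRunner`, `Step`, and the step names are hypothetical, and the "durable" checkpoint is just a `Map`. It only illustrates how recording each step's result lets a failing step retry without re‑running earlier steps.

```typescript
// Illustrative in-memory model of step-level checkpointing.
// NOT the Cloudflare Workflows API; all names here are hypothetical.

type StepFn = (input: unknown) => unknown;

interface Step {
  name: string;
  run: StepFn;
}

class CheckpointedRunner {
  // Completed step outputs, keyed by step name (the "checkpoint").
  private checkpoints = new Map<string, unknown>();
  // How many times each step body actually executed.
  readonly executions = new Map<string, number>();

  constructor(private steps: Step[], private maxAttempts = 3) {}

  run(initial: unknown): unknown {
    let value = initial;
    for (const step of this.steps) {
      if (this.checkpoints.has(step.name)) {
        // Replay: reuse the recorded result instead of re-executing.
        value = this.checkpoints.get(step.name);
        continue;
      }
      value = this.attempt(step, value);
      this.checkpoints.set(step.name, value);
    }
    return value;
  }

  private attempt(step: Step, input: unknown): unknown {
    let lastError: unknown;
    for (let i = 0; i < this.maxAttempts; i++) {
      this.executions.set(step.name, (this.executions.get(step.name) ?? 0) + 1);
      try {
        return step.run(input);
      } catch (err) {
        lastError = err; // Retry only this step; earlier steps are untouched.
      }
    }
    throw lastError;
  }
}

// Usage: the "infer" step fails twice, then succeeds on the third attempt.
let failuresLeft = 2;
const runner = new CheckpointedRunner([
  { name: "fetch", run: () => 21 },
  {
    name: "infer",
    run: (x) => {
      if (failuresLeft-- > 0) throw new Error("transient failure");
      return (x as number) * 2;
    },
  },
]);
const result = runner.run(null);
```

In this run the `fetch` step executes once and `infer` executes three times, which is the behaviour the step‑based model is meant to provide.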

Core architectural changes

| Aspect | V1 | V2 |
|---|---|---|
| Execution model | Linear chain of functions, implicit state | Explicit step graph, each step has its own durable state |
| Concurrency limit | 4 500 instances | 50 000 instances |
| Throughput | 100 new executions/sec/account | 300 new executions/sec/account |
| Queue capacity | 1 M items/workflow | 2 M items/workflow |
| Observability | Workflow‑level logs | Step‑level tracing, execution history UI |
| Replay semantics | Full replay on failure | Partial replay of failed step only |
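The concurrency and throughput rows can be related with Little's law (average concurrency = start rate × average duration). The sketch below uses only the figures from the table; the helper name `secondsToSaturate` is illustrative, and the calculation assumes instances are started continuously at the maximum rate.

```typescript
// Limits as quoted in the comparison table.
const LIMITS = {
  v1: { maxConcurrent: 4500, startsPerSecond: 100 },
  v2: { maxConcurrent: 50000, startsPerSecond: 300 },
};

// Little's law: average concurrency = arrival rate × average duration.
// Returns the average instance duration (in seconds) at which a workload
// started at the maximum rate would reach the concurrency ceiling.
function secondsToSaturate(limit: { maxConcurrent: number; startsPerSecond: number }): number {
  return limit.maxConcurrent / limit.startsPerSecond;
}

const v1Seconds = secondsToSaturate(LIMITS.v1);
const v2Seconds = secondsToSaturate(LIMITS.v2);
```

Under these assumptions, V1 reaches its ceiling once instances average 45 seconds, while V2 reaches it at roughly 167 seconds, so the new limits leave more headroom for long‑running instances even at the maximum start rate.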

The new model builds on Cloudflare’s existing runtime stack:

- Workers execute user code at the edge.

- Queues ingest events and act as the trigger source for steps.

- Durable Objects provide region‑wide consistency for step state.

- Workers KV can be used for cheap, read‑heavy data that does not need strict consistency.

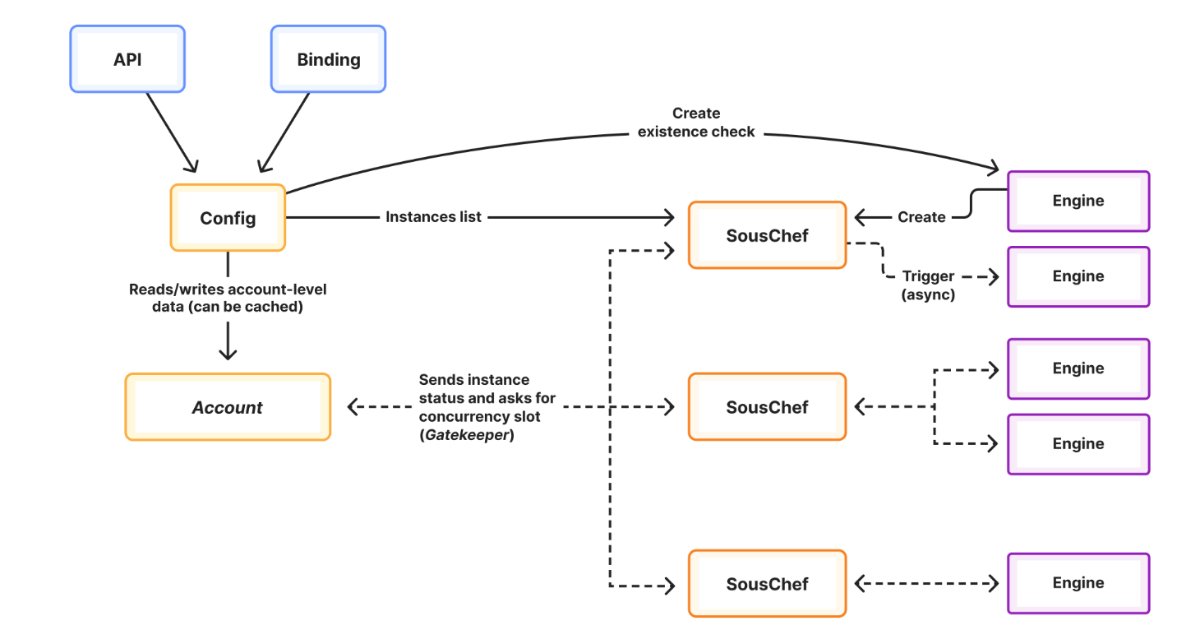

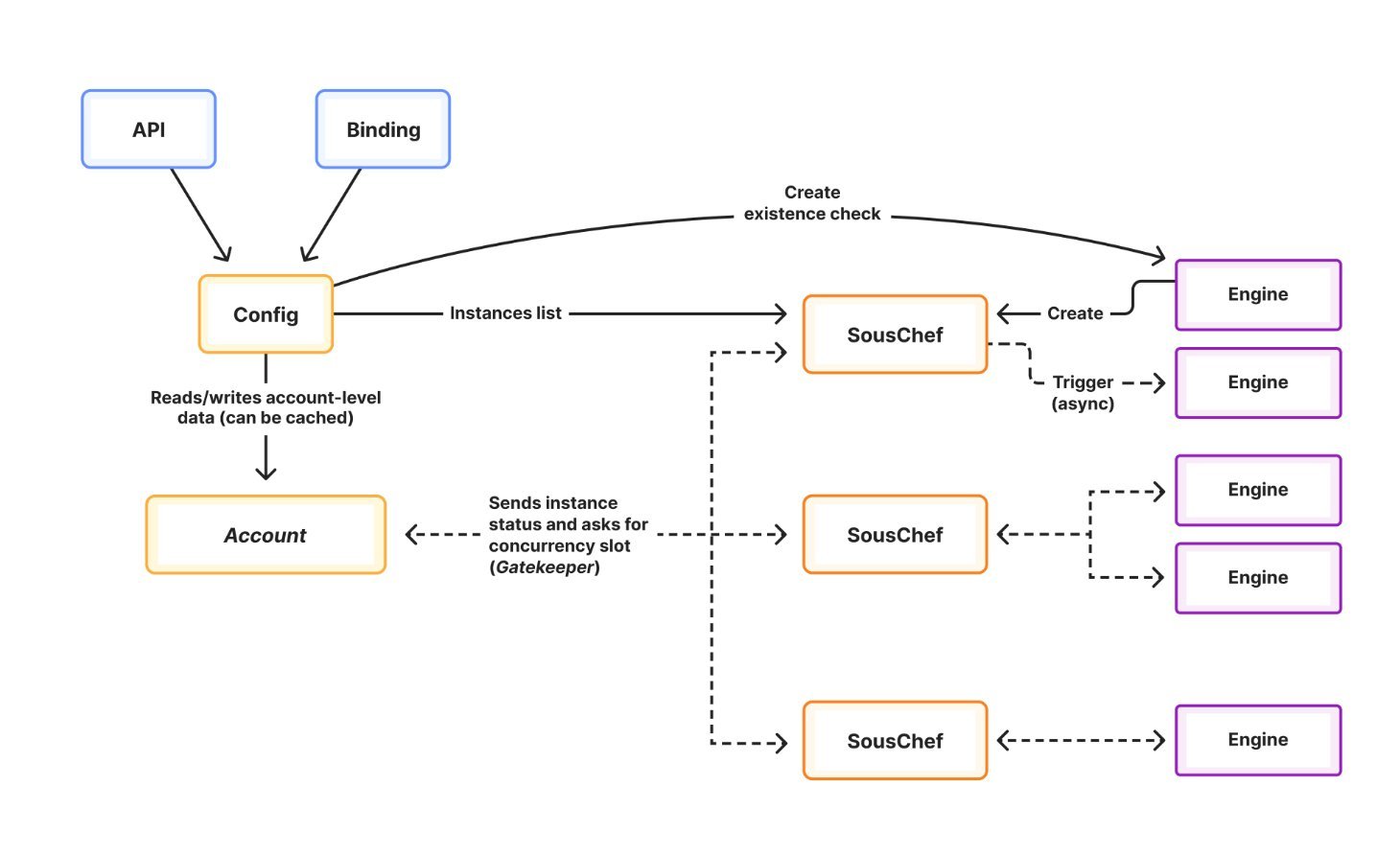

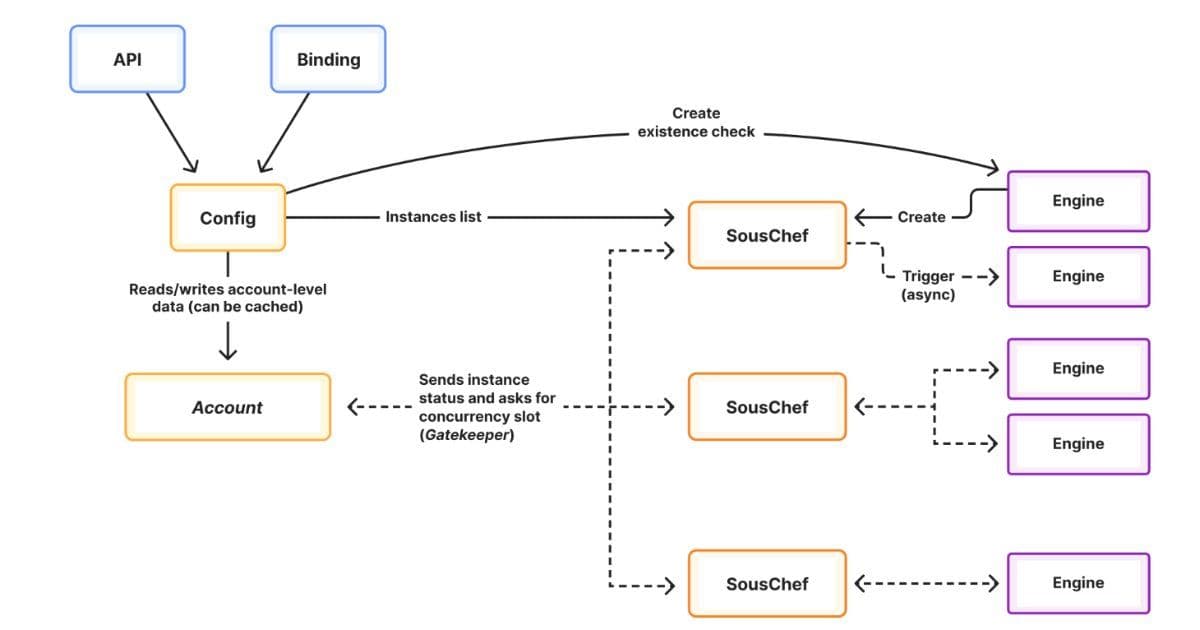

A diagram from the official blog (see the images above) shows how a workflow is now a directed acyclic graph (DAG) of steps, each with its own execution record stored in a Durable Object. When a step finishes, it writes a checkpoint; the orchestrator then schedules the next ready step, possibly on a different edge location.
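The "schedule the next ready step" behaviour can be sketched with a small topological scheduler. This is an illustration of the general technique (Kahn's algorithm, grouped into waves), not Cloudflare's orchestrator; the `Dag` type, `scheduleWaves`, and the step names are assumptions made for the example.

```typescript
// Hypothetical DAG description: step name -> names of the steps it depends on.
type Dag = Record<string, string[]>;

// Groups steps into "waves": every step in a wave has all of its dependencies
// satisfied by earlier waves, so the steps within one wave could run in parallel.
function scheduleWaves(dag: Dag): string[][] {
  const remaining = new Map<string, Set<string>>();
  for (const step of Object.keys(dag)) {
    for (const dep of dag[step]) {
      if (!Object.prototype.hasOwnProperty.call(dag, dep)) {
        throw new Error(`Unknown dependency: ${dep}`);
      }
    }
    remaining.set(step, new Set(dag[step]));
  }

  const waves: string[][] = [];
  while (remaining.size > 0) {
    // A step is ready when none of its dependencies are still pending.
    const ready: string[] = [];
    remaining.forEach((deps, step) => {
      if (deps.size === 0) ready.push(step);
    });
    if (ready.length === 0) throw new Error("Cycle detected: not a DAG");
    ready.sort(); // Stable, predictable ordering within a wave.

    for (const step of ready) remaining.delete(step);
    remaining.forEach((deps) => {
      for (const step of ready) deps.delete(step);
    });
    waves.push(ready);
  }
  return waves;
}

// Fan-out to two transforms, then fan-in to one aggregation step.
const pipeline: Dag = {
  extract: [],
  transformA: ["extract"],
  transformB: ["extract"],
  aggregate: ["transformA", "transformB"],
};
const waves = scheduleWaves(pipeline);
// waves: [["extract"], ["transformA", "transformB"], ["aggregate"]]
```

A real orchestrator would dispatch each step as soon as its own dependencies complete rather than waiting for a whole wave, but the readiness rule is the same.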

Typical use cases

- AI Agent Orchestration – An agent may need to fetch data, run a model inference, and store results. With V2, the data‑fetch step can retry independently of the inference step, avoiding costly duplicate model runs.

- Multi‑region Data Pipelines – A pipeline that extracts from a SaaS API, transforms data, and writes to a global store can fan‑out parallel transformation steps, then fan‑in to a final aggregation step.

- Transactional Business Processes – Order fulfillment flows that involve payment, inventory reservation, and shipping label generation benefit from step‑level idempotency, ensuring that a payment retry does not double‑charge the customer.

Migration path from V1 to V2

While the high‑level concepts remain the same, developers need to adopt the explicit step definition API. The migration checklist includes:

- Refactor linear chains into a DAG using the `workflow.step()` builder.

- Mark side‑effecting calls as idempotent (e.g., use `PUT` with a deterministic key or include a retry token).

- Update error handling to rely on the platform’s automatic retries rather than custom loops.

- Adjust monitoring to consume the new step‑level traces via the Workflows dashboard or export them to OpenTelemetry.
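The idempotency item in the checklist is the one most likely to need new code. The sketch below shows one common approach: derive a deterministic key from values that never change between retries, and let the downstream service deduplicate on it. `FakePaymentProvider` is a hypothetical in‑memory stand‑in, and the key format is an assumption; a real provider's idempotency mechanism will differ.

```typescript
// Hypothetical in-memory stand-in for a payment provider that honours
// idempotency keys. A real provider's API will differ.
class FakePaymentProvider {
  private charges = new Map<string, { amountCents: number; chargeId: string }>();
  private nextId = 1;

  charge(idempotencyKey: string, amountCents: number): string {
    const existing = this.charges.get(idempotencyKey);
    if (existing) return existing.chargeId; // Retry: return the first result.
    const chargeId = `ch_${this.nextId++}`;
    this.charges.set(idempotencyKey, { amountCents, chargeId });
    return chargeId;
  }

  get totalChargedCents(): number {
    let total = 0;
    this.charges.forEach((c) => {
      total += c.amountCents;
    });
    return total;
  }
}

// Derive the key only from values that are identical on every retry.
// Never mix in timestamps or random values.
function idempotencyKey(instanceId: string, stepName: string): string {
  return `${instanceId}:${stepName}`;
}

const provider = new FakePaymentProvider();
const key = idempotencyKey("order-1001", "charge-card");
const first = provider.charge(key, 2599);
const retry = provider.charge(key, 2599); // Simulated platform retry.
```

Both calls return the same charge ID and the customer is charged once, which is the property a retried payment step needs.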

Cloudflare provides a migration guide and a CLI tool (`cf-workflows migrate`) that can scaffold the new step definitions from existing V1 code.

Trade‑offs to consider

| Benefit | Potential drawback |
|---|---|
| Deterministic replay reduces duplicate work | Requires developers to ensure each step is truly idempotent, which may add upfront effort |
| Roughly 11× higher concurrency (4 500 to 50 000 instances) enables massive scale | Higher concurrency can increase load on downstream services; rate‑limiting or back‑pressure strategies become essential |
| Step‑level observability simplifies debugging | More granular logs can increase storage costs; teams should set appropriate retention policies |
| Parallel step execution shortens end‑to‑end latency | Complex DAGs may be harder to reason about; visual tooling is recommended |
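For the back‑pressure point in the table, a token bucket is one simple way to cap the rate at which workflow steps call a downstream service. The sketch below is a generic implementation, not a Cloudflare feature; the class name, capacity, and refill rate are illustrative, and the clock is passed in explicitly so the behaviour is easy to verify.

```typescript
// Minimal token bucket. The current time is passed in so the behaviour
// is deterministic and testable.
class TokenBucket {
  private tokens: number;
  private lastRefillMs: number;

  constructor(
    private readonly capacity: number,
    private readonly refillPerSecond: number,
    nowMs: number,
  ) {
    this.tokens = capacity;
    this.lastRefillMs = nowMs;
  }

  // Returns true if the call may proceed, false if it should be deferred.
  tryAcquire(nowMs: number): boolean {
    const elapsedSeconds = Math.max(0, nowMs - this.lastRefillMs) / 1000;
    this.tokens = Math.min(this.capacity, this.tokens + elapsedSeconds * this.refillPerSecond);
    this.lastRefillMs = nowMs;
    if (this.tokens >= 1) {
      this.tokens -= 1;
      return true;
    }
    return false;
  }
}

// Burst of 2 calls, refilling 1 token per second.
const bucket = new TokenBucket(2, 1, 0);
const decisions = [
  bucket.tryAcquire(0), // true: first token of the burst
  bucket.tryAcquire(0), // true: second token of the burst
  bucket.tryAcquire(0), // false: bucket is empty
  bucket.tryAcquire(1000), // true: one token refilled after one second
];
```

A step that receives `false` would typically defer and let the platform retry it later rather than calling the downstream service immediately.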

Overall, the benefits outweigh the costs for most production workloads, especially those already embracing event‑driven architectures.

Pricing impact

Cloudflare kept the base pricing tier unchanged, but the higher limits are now part of the Standard plan. For teams that need the full 50 000 concurrency, the Enterprise tier adds a per‑million‑step charge of $0.0004. This is in line with the cost of running a similar number of Workers, and the deterministic guarantees can reduce overall spend by cutting down on duplicate executions.

Looking ahead

Workflows V2 positions Cloudflare as a strong alternative to other serverless orchestration platforms like AWS Step Functions or Azure Durable Functions, especially for workloads that must run close to the user. The deterministic model also lays groundwork for future features such as state versioning and time‑travel debugging, which the Cloudflare team hinted at during a recent developer summit.

For teams building AI agents, data pipelines, or any long‑running business logic at the edge, the upgrade is a clear signal to start designing workflows around explicit steps and to take advantage of the new concurrency headroom.

Resources

- Official announcement: Cloudflare Workflows V2 blog post

- Migration guide: Workflows V2 migration documentation

- API reference: Workflows V2 API docs

- Observability tutorial: Step‑level tracing with OpenTelemetry

Leela Kumili is a Lead Engineer at Starbucks and contributes regularly to InfoQ’s Architecture & Design coverage.
