Amazon's new prompt optimization tool for Bedrock enables enterprises to optimize prompts across multiple models simultaneously, with significant implications for AI model migration and performance optimization in multi-cloud environments.

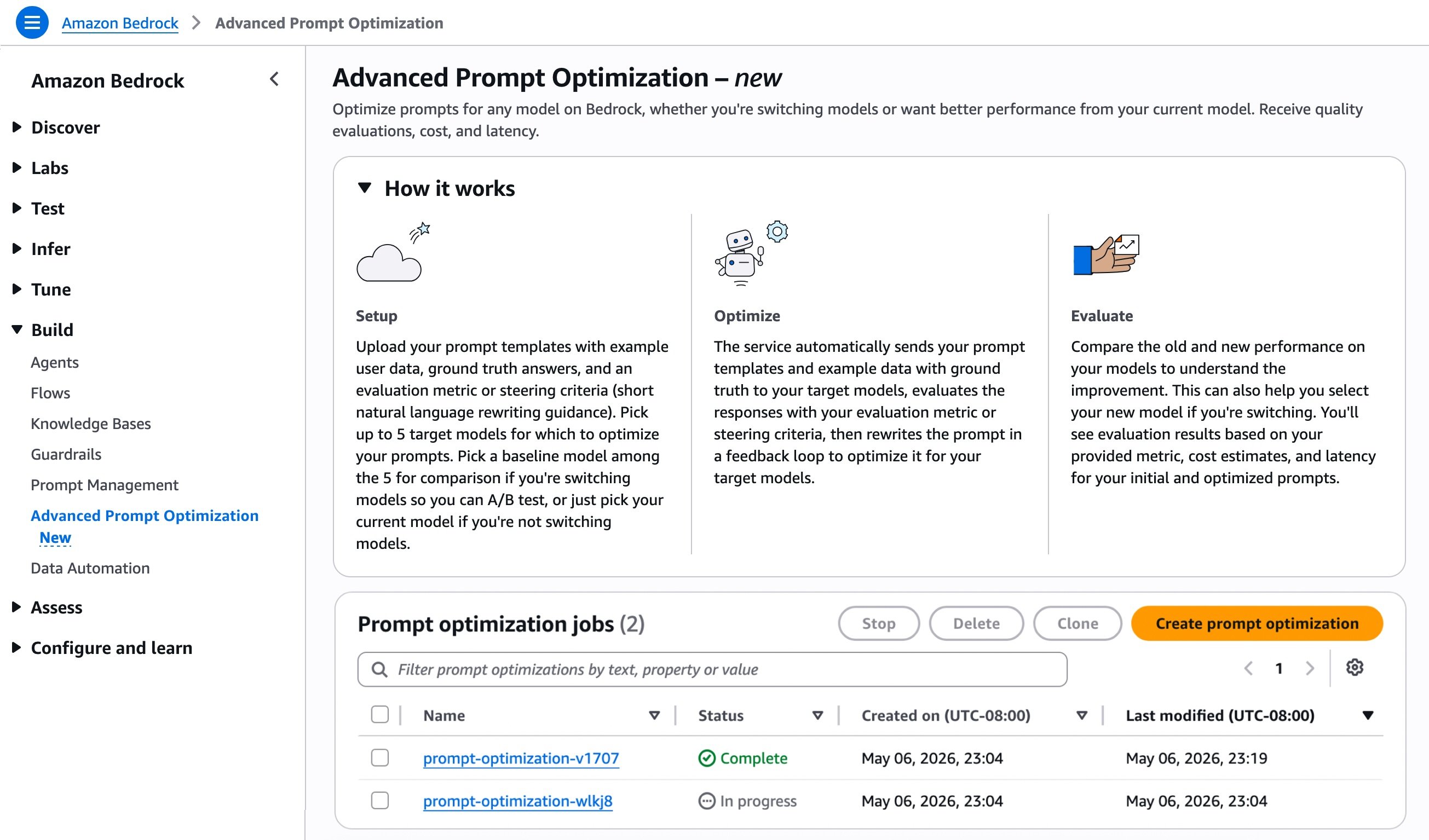

Amazon has announced Amazon Bedrock Advanced Prompt Optimization, a tool for tuning prompts across multiple AI models. The launch matters most to organizations running multi-cloud strategies or weighing a model migration.

What Changed: The New Capabilities

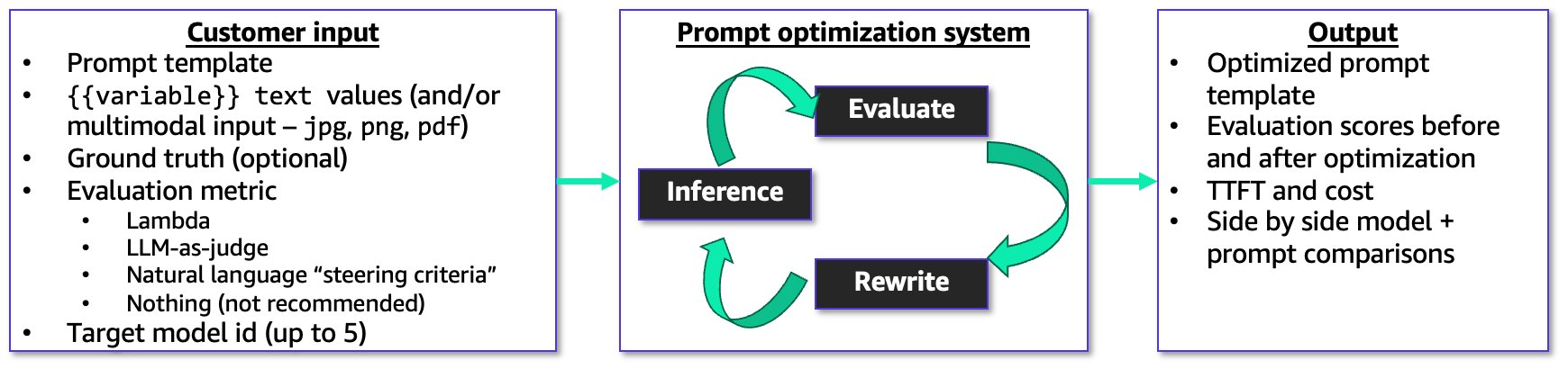

Amazon Bedrock Advanced Prompt Optimization introduces several powerful features that address common challenges in prompt engineering and model optimization:

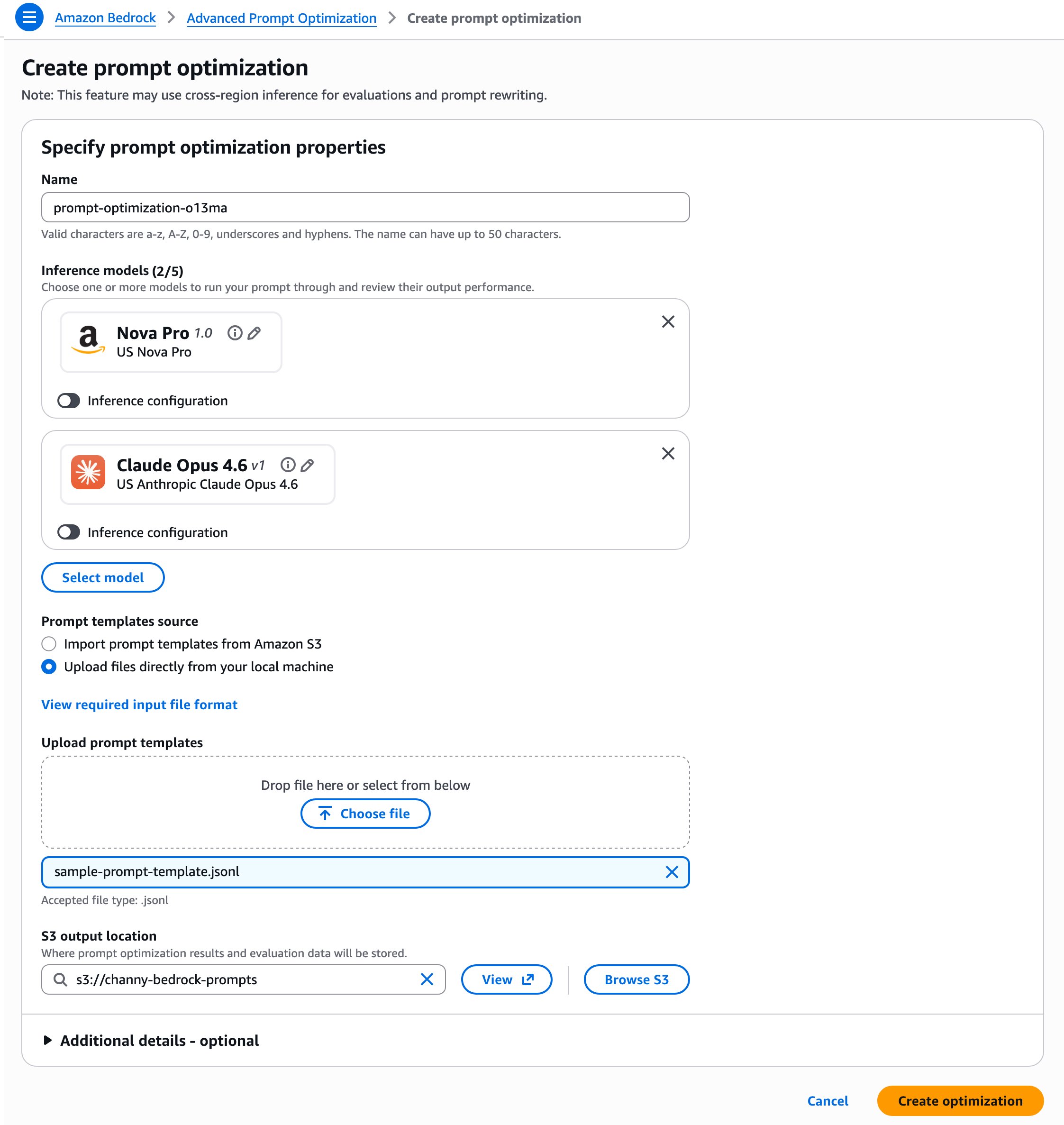

The tool allows users to input prompt templates, example user inputs, ground truth answers, and evaluation metrics. It then operates through a metric-driven feedback loop to optimize prompts and resulting model responses. Users can compare their original prompts to optimized versions across up to 5 models simultaneously, making it particularly valuable for organizations evaluating multiple AI models or planning migrations.

One notable capability is support for multimodal inputs, including PNG, JPG, and PDF files. This enables optimization of prompts for complex tasks like document analysis and image interpretation, which are increasingly important in enterprise AI applications.

The tool offers three evaluation approaches:

- Lambda functions with custom Python scoring logic for concrete metrics

- LLM-as-a-Judge with custom rubrics for open-ended tasks

- Natural language steering criteria for quality guidelines without full judge prompts
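As an illustration of the first approach, a Lambda function with custom Python scoring logic might look something like the sketch below. It scores a response by token-overlap F1 against the ground truth answer. The event keys `modelResponse` and `groundTruth` and the returned `score` field are assumptions made for this example, not the documented Bedrock contract, so check the AWS documentation for the actual payload shape.

```python
import re
import string
from collections import Counter


def normalize(text: str) -> str:
    """Lowercase, strip ASCII punctuation, and collapse whitespace."""
    text = text.lower().translate(str.maketrans("", "", string.punctuation))
    return re.sub(r"\s+", " ", text).strip()


def token_f1(prediction: str, reference: str) -> float:
    """Token-overlap F1 between a model response and a ground truth answer."""
    pred_tokens = normalize(prediction).split()
    ref_tokens = normalize(reference).split()
    if not pred_tokens or not ref_tokens:
        # Both empty counts as a match; exactly one empty counts as a miss.
        return float(pred_tokens == ref_tokens)
    # Multiset intersection counts each shared token at most as often
    # as it appears in both sequences.
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


def lambda_handler(event, context):
    # ASSUMPTION: "modelResponse" and "groundTruth" are hypothetical keys
    # chosen for this sketch; the real event schema may differ.
    score = token_f1(event["modelResponse"], event["groundTruth"])
    return {"score": round(score, 4)}
```

A concrete metric like this suits tasks with short, checkable answers. Open-ended tasks are where the judge-based options in the list are likely a better fit.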

The optimization process outputs the original and final prompt templates together with evaluation scores, cost estimates, and latency metrics, so teams can see both what the optimization changed and what it costs.

For implementation, users can prepare prompt templates in JSONL format and upload them directly or import from Amazon S3. The tool then automatically evaluates responses through a feedback loop to generate optimized prompts.
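A minimal sketch of preparing such a JSONL file follows. The field names (`promptTemplate`, `userInput`, `groundTruth`) and the `{{ticket}}` placeholder syntax are hypothetical, picked only to show the one-record-per-line layout; the actual schema is defined in the AWS documentation.

```python
import json

# ASSUMPTION: these field names are illustrative, not the official schema.
REQUIRED_FIELDS = ("promptTemplate", "userInput", "groundTruth")


def build_record(template: str, user_input: dict, ground_truth: str) -> dict:
    """Bundle one prompt template with an example input and its expected answer."""
    return {
        "promptTemplate": template,
        "userInput": user_input,
        "groundTruth": ground_truth,
    }


def to_jsonl(records) -> str:
    """Serialize records as JSON Lines: one JSON object per line."""
    # json.dumps escapes embedded newlines, so each record stays on one line.
    return "\n".join(json.dumps(r) for r in records) + "\n"


def validate_jsonl(text: str) -> int:
    """Parse every line, check required fields, and return the record count."""
    count = 0
    for line_no, line in enumerate(text.splitlines(), start=1):
        if not line.strip():
            continue
        record = json.loads(line)
        missing = [k for k in REQUIRED_FIELDS if k not in record]
        if missing:
            raise ValueError(f"line {line_no}: missing fields {missing}")
        count += 1
    return count


TEMPLATE = "Summarize the following support ticket in one sentence:\n{{ticket}}"

records = [
    build_record(TEMPLATE, {"ticket": "App crashes when I upload a PDF."},
                 "The app crashes on PDF upload."),
    build_record(TEMPLATE, {"ticket": "I was charged twice this month."},
                 "The customer was billed twice."),
]

jsonl_text = to_jsonl(records)
print(validate_jsonl(jsonl_text), "records ready")
```

Validating the file locally before uploading it, or before copying it to S3, catches malformed lines early, when they are cheapest to fix.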

Provider Comparison: Bedrock vs. Competitors

When evaluating Amazon's new prompt optimization offering against competitors, several strategic considerations emerge:

Amazon Bedrock distinguishes itself through its multi-model comparison capability, allowing simultaneous optimization across up to 5 models. This is particularly valuable for organizations working with multiple AI models or planning migrations. The integration with AWS ecosystem services, especially S3 for data storage and Lambda for custom evaluation logic, creates a cohesive workflow for enterprises already invested in AWS.

Microsoft Azure OpenAI Service offers prompt optimization through its Azure AI Studio, with a focus on OpenAI models. While it provides robust prompt engineering capabilities, it lacks the multi-model comparison feature that Amazon's tool offers. Azure's strength lies in its tight integration with Microsoft 365 and enterprise security features, making it attractive for organizations heavily invested in the Microsoft ecosystem.

Google Vertex AI provides prompt optimization through its Vertex AI Studio, with particular strength in Google's models and multimodal capabilities. Google's approach emphasizes integration with its data analytics and machine learning platforms, offering advantages for organizations leveraging Google's data ecosystem.

The key differentiator for Amazon's new tool is its explicit focus on migration scenarios: organizations can optimize prompts for their current model while preparing the same prompts for a move to new models. This addresses a critical pain point for enterprises as the set of available models keeps changing.

Business Impact: Strategic Implications

The introduction of Bedrock Advanced Prompt Optimization carries several significant business implications for organizations adopting cloud-based AI services:

Migration Strategy Enhancement: The tool's ability to optimize prompts across multiple models simultaneously provides a systematic approach to model migration. Organizations can evaluate how their prompts will perform on target models before committing to a full migration, reducing the risk of performance regressions. This capability becomes increasingly important as new, more capable models emerge, and enterprises must balance performance gains with the costs and disruptions of migration.
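The pre-migration check described above can be sketched as a simple gate over the evaluation scores. The `ModelResult` record, the model names, the scores, and the tolerance below are all made-up placeholders; the sketch only shows the comparison logic a team might apply to whatever scores the tool reports.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ModelResult:
    """Scores for one model (hypothetical record, not a Bedrock type)."""
    model_id: str
    original_score: float   # score with the original prompt
    optimized_score: float  # score with the optimized prompt


def migration_candidates(
    baseline: ModelResult,
    targets: list[ModelResult],
    tolerance: float = 0.02,
) -> list[str]:
    """Return target models whose optimized score stays within
    `tolerance` of (or beats) the current model's optimized score."""
    floor = baseline.optimized_score - tolerance
    return [t.model_id for t in targets if t.optimized_score >= floor]


# Placeholder numbers for illustration only.
current = ModelResult("current-model", 0.78, 0.84)
targets = [
    ModelResult("candidate-a", 0.70, 0.86),
    ModelResult("candidate-b", 0.65, 0.80),
    ModelResult("candidate-c", 0.60, 0.83),
]

print(migration_candidates(current, targets))
```

With these placeholder scores, `candidate-b` is filtered out because its optimized score falls more than 0.02 below the current model's. In practice the tolerance would depend on how much regression the use case can absorb.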

Cost Optimization: By providing cost estimates alongside optimization results, the tool enables organizations to make more informed decisions about prompt engineering and model selection. The ability to compare performance across models helps identify the most cost-effective solution for specific use cases, which becomes critical as organizations scale their AI applications.

Multi-Cloud Flexibility: The tool itself is AWS-specific, so it does not optimize prompts for other providers' services directly. Its method, however, is portable: defining explicit metrics, supplying ground truth examples, and comparing models side by side can inform prompt engineering practices on any cloud, which helps keep evaluation consistent across environments.

Operational Efficiency: The automation of the prompt optimization process reduces the manual effort traditionally required for prompt engineering. This allows teams to focus on higher-value tasks while still maintaining high-quality AI outputs. The tool's support for various evaluation methods also accommodates different organizational needs, from highly structured metrics to more qualitative assessments.

Risk Management: The ability to test optimized prompts against known use cases helps identify potential issues before deployment, reducing the risk of unexpected behavior in production environments. This is particularly important for applications where AI outputs impact business-critical processes or customer experiences.

Implementation Considerations

Organizations considering adoption of Amazon Bedrock Advanced Prompt Optimization should evaluate several implementation factors:

Data Preparation: The tool requires prompt templates in JSONL format with example user data, ground truth answers, and evaluation metrics. Organizations should assess their existing data preparation processes to determine the effort required to format data appropriately.

Evaluation Method Selection: The choice between Lambda functions, LLM-as-a-Judge, or natural language steering criteria depends on the specific use case and organizational preferences. Organizations should evaluate which approach aligns best with their existing technical capabilities and quality requirements.

Integration with Existing Workflows: The tool's integration with AWS services like S3 and Lambda creates opportunities for automation and enhanced workflows. Organizations should consider how prompt optimization can be incorporated into their existing AI development and deployment processes.

Skill Requirements: While the tool automates much of the prompt optimization process, effective use still requires prompt engineering expertise and an understanding of the target models' characteristics. Organizations should assess whether they need additional training or hiring to maximize the tool's value.

Cost Management: The cost of prompt optimization is based on the model inference tokens consumed during optimization, so large datasets and multi-model comparisons can add up quickly. Organizations should estimate this spend up front and monitor it, especially for large-scale optimization projects.
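For rough budgeting, token-based cost can be estimated with simple arithmetic. The sketch below assumes that every optimization round runs every example once per model, and it uses invented per-1,000-token prices. Neither assumption comes from AWS; real call counts and pricing should be taken from the tool's own cost estimates and the Bedrock pricing page.

```python
def estimate_optimization_cost(
    num_examples: int,
    num_rounds: int,
    avg_input_tokens: int,
    avg_output_tokens: int,
    price_in_per_1k: float,
    price_out_per_1k: float,
) -> float:
    """Estimate spend for one model, in the same currency as the prices.

    ASSUMPTION: one inference call per example per round. The actual
    number of calls the tool makes is not specified here.
    """
    calls = num_examples * num_rounds
    input_cost = calls * avg_input_tokens / 1000 * price_in_per_1k
    output_cost = calls * avg_output_tokens / 1000 * price_out_per_1k
    return input_cost + output_cost


# Hypothetical models and prices, for illustration only.
hypothetical_prices = {
    "model-small": (0.0005, 0.0015),
    "model-large": (0.003, 0.015),
}

for model_id, (price_in, price_out) in hypothetical_prices.items():
    cost = estimate_optimization_cost(
        num_examples=50,
        num_rounds=10,
        avg_input_tokens=800,
        avg_output_tokens=200,
        price_in_per_1k=price_in,
        price_out_per_1k=price_out,
    )
    print(f"{model_id}: ~${cost:.2f}")
```

Even a crude estimate like this makes the scaling visible: doubling the number of examples or the number of models compared roughly doubles the token bill.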

Future Outlook

The introduction of advanced prompt optimization tools reflects a broader trend in cloud AI services: the increasing sophistication of tools that help organizations extract maximum value from AI models. As AI models become more capable and the number of available options grows, tools that facilitate model comparison, migration, and optimization will become essential components of enterprise AI strategies.

Amazon's focus on prompt optimization also highlights the growing recognition that prompt engineering will remain a critical skill even as models become more advanced. The ability to systematically optimize prompts across multiple models represents a significant step toward making AI more accessible and manageable for enterprise applications.

For organizations implementing multi-cloud strategies, the emergence of sophisticated prompt optimization tools across different cloud providers creates opportunities for standardization while also introducing considerations for maintaining consistency across environments.

Conclusion

Amazon Bedrock Advanced Prompt Optimization represents a significant advancement in cloud-based AI tooling, offering organizations powerful capabilities for prompt optimization and model migration. By enabling simultaneous comparison across multiple models and providing comprehensive evaluation metrics, the tool addresses critical challenges in enterprise AI adoption.

For organizations evaluating cloud AI services, this tool offers particular value for those implementing multi-cloud strategies or planning model migrations. The ability to optimize prompts for current models while preparing for future migrations provides a strategic advantage in the rapidly evolving AI landscape.

As organizations continue to scale their AI applications, tools like Amazon Bedrock Advanced Prompt Optimization will play an increasingly important role in maximizing the value of AI investments while managing the complexities of working with multiple models across different cloud environments.

For more information on implementing the advanced prompt optimization tool, see the official AWS documentation and the sample code on GitHub.
