AMD has launched the Instinct MI350P, a PCIe-form-factor AI accelerator built on TSMC 3nm and 6nm processes, delivering 144GB of HBM3E memory and up to 43% higher theoretical FP16 compute than Nvidia's H200 NVL for data center inference and RAG workloads.

Launch Announcement

AMD has expanded its Instinct MI350 series of data center AI accelerators with the new MI350P, a PCIe-compatible model designed for drop-in deployment in existing air-cooled server infrastructure. The new card targets inference and retrieval-augmented generation (RAG) workloads across small, medium, and large-scale AI deployments, offering a direct competitor to Nvidia's H200 NVL PCIe accelerator. AMD Instinct MI350 Series

The MI350P is positioned as a cost-effective upgrade path for data center operators that have already invested in air-cooled PCIe server racks, avoiding the need for liquid cooling retrofits required by higher-performance OAM (Open Accelerator Module) form factor accelerators such as the MI350X or Nvidia H100 SXM.

Technical Specifications

The MI350P is built on AMD's CDNA4 architecture, fabricated using a combined TSMC 3nm and 6nm FinFET process node split. Compute dies use the 3nm node for higher transistor density and performance per watt, while I/O and memory controllers are built on the more mature 6nm process to reduce production costs. TSMC 3nm Technology

CDNA4 is AMD's fourth-generation data center GPU architecture, optimized for AI and high-performance computing workloads with dedicated matrix cores for accelerated low-precision compute, a key requirement for large language model inference. The MI350P includes 128 compute units (CUs), 8,192 stream processors, 512 matrix cores, and a maximum clock speed of 2.2GHz.

The card is equipped with 144GB of HBM3E (High Bandwidth Memory 3 Enhanced) memory, delivering 4TB/s of bandwidth, and includes a 128MB last-level cache to reduce memory latency for frequent data accesses. HBM3E is the latest iteration of stacked memory technology, offering higher bandwidth and lower power consumption than previous HBM3 generations, critical for feeding data to the accelerator's compute cores during intensive AI workloads.

Physically, the MI350P is a 10.5-inch dual-slot PCIe card with a passive cooling solution, meaning it relies on chassis fans in rack-mounted servers for thermal management. It has a default power envelope of 600W, but can be configured to run at 450W to fit into power or thermally constrained server chassis.

Up to eight MI350P cards can be linked in a single server node for scaled performance, with native support for lower-precision MXFP6 and MXFP4 formats optimized for LLM inference. The table below outlines the MI350P's specifications relative to other members of the Instinct MI350 series:

| Specification | Instinct MI350P PCIe | Instinct MI325X OAM | Instinct MI350X OAM | 8 x Instinct MI350X OAM | Instinct MI355X OAM | 8 x Instinct MI355X OAM |

|---|---|---|---|---|---|---|

| GPU Architecture | CDNA 4 | CDNA 3 | CDNA 4 | CDNA 4 | CDNA 4 | CDNA 4 |

| Dedicated Memory Size | 144 GB HBM3E | 256 GB HBM3E | 288 GB HBM3E | 2.3 TB HBM3E | 288 GB HBM3E | 2.3 TB HBM3E |

| Memory Bandwidth | 4 TB/s | 6 TB/s | 8 TB/s | 8 TB/s per OAM | 8 TB/s | 8 TB/s per OAM |

| FP64 Performance | 36 TFLOPs | N/A | 72 TFLOPs | 577 TFLOPs | 78.6 TFLOPS | 628.8 TFLOPs |

| FP16 Performance | 2.3 PFLOPS | 2.61 PFLOPS | 4.6 PFLOPS | 36.8 PFLOPS | 5 PFLOPS | 40.2 PFLOPS |

| FP8 Performance | 4.6 PFLOPS | 5.22 PFLOPS | 9.2 PFLOPs | 73.82 PFLOPs | 10.1 PFLOPs | 80.5 PFLOPs |

| FP6 Performance | N/A | N/A | 18.45 PFLOPS | 147.6 PFLOPS | 20.1 PFLOPS | 161 PFLOPS |

| FP4 Performance* | N/A | N/A | 18.45 PFLOPS | 147.6 PFLOPS | 20.1 PFLOPS | 161 PFLOPS |

Performance Comparisons

AMD positions the MI350P as a direct competitor to Nvidia's H200 NVL PCIe accelerator, the current leading PCIe-based AI accelerator from the market leader. Nvidia H200 NVL Internal testing from AMD claims the MI350P delivers 20% higher theoretical FP64 performance, 43% higher FP16 performance, and 39% higher FP8 performance than the H200 NVL. For MXFP4 workloads, AMD estimates the MI350P delivers 2,299 sustained TFLOPs and 4,600 peak TFLOPs, making it the highest-performance enterprise PCIe AI accelerator available as of its launch.

Nvidia has not announced a PCIe-compatible version of its latest B200 Blackwell GPUs, which use HBM3e memory, leaving AMD with a temporary lead in the PCIe HBM accelerator segment.

Supply Chain Context

The MI350P's production relies on TSMC's advanced node capacity, which has faced high demand from AI accelerator and smartphone chip clients over the past 24 months. TSMC's $165 billion U.S. manufacturing expansion, which includes new 3nm fabs in Arizona, is expected to increase supply chain resilience for AMD's accelerator production, reducing reliance on Taiwanese fabs for North American data center clients.

TSMC's ability to deliver stable 3nm and 6nm wafer volumes will be a key factor in AMD's ability to meet MI350P demand, as the accelerator market remains supply-constrained for advanced node chips.

Market Position and Adoption Outlook

AMD's primary challenge in the AI accelerator market remains Nvidia's dominant CUDA software ecosystem, which has become a standard for AI model training and inference across most data center operators. AMD has invested heavily in its ROCm software stack to close this gap, with company representatives noting at CES 2026 that ROCm now supports 90% of the top 100 open-source AI models with performance parity to CUDA in key inference workloads. AMD ROCm

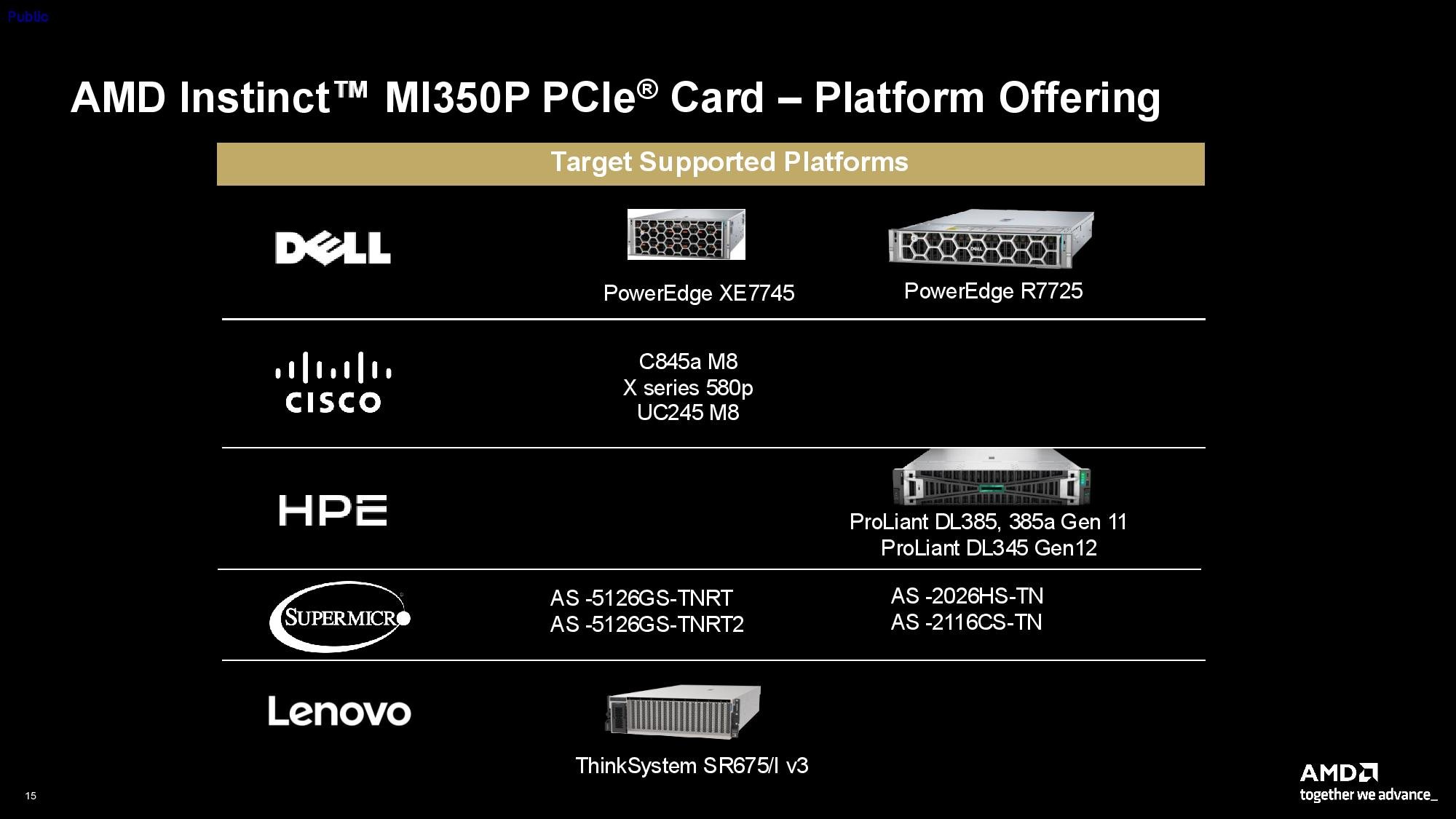

The MI350P's drop-in compatibility with existing air-cooled servers lowers the barrier to adoption for data center operators that want to avoid costly infrastructure upgrades required for liquid-cooled OAM modules. Early interest has come from mid-sized cloud service providers and enterprise data center operators with existing PCIe server infrastructure, who want to add AI acceleration capacity without retrofitting for liquid cooling.

Pricing for the MI350P has not been announced, but AMD typically prices its Instinct accelerators 20-30% lower than comparable Nvidia models to incentivize adoption. Volume shipments are expected to begin in Q3 2026, with select hyperscale clients receiving early samples in Q2.

Comments

Please log in or register to join the discussion