Anthropic's new Auto Mode in Claude Code introduces a paradigm shift in AI-assisted development, enabling autonomous coding workflows with human approval gates. This evolution addresses the tension between developer productivity and safety concerns in AI-powered development environments.

Anthropic has introduced a significant evolution in AI-assisted development with the launch of Auto Mode in Claude Code, transforming how developers interact with AI coding assistants. This new approach represents a strategic shift from the previous permission-based model to a more autonomous system with built-in safety checkpoints, fundamentally changing the developer experience while maintaining appropriate controls.

The Evolution of AI-Assisted Coding

Prior to Auto Mode, Claude Code operated on a permission-based architecture requiring explicit user approval for most actions, including command execution and file modifications. While this approach provided strong safety guarantees and user control, it introduced significant friction during extended development sessions. The repetitive approval process led to "approval fatigue," where developers spent more time managing prompts than focusing on actual development work.

As Sid Chaudhary, Head of Product at Intempt, observed, "You can now run Claude and actually walk away. Coffee break. Actual walk. You don't babysit it." This represents a fundamental change in how developers can leverage AI assistance, moving from a reactive approval model to a proactive governance system.

Architecture of Auto Mode

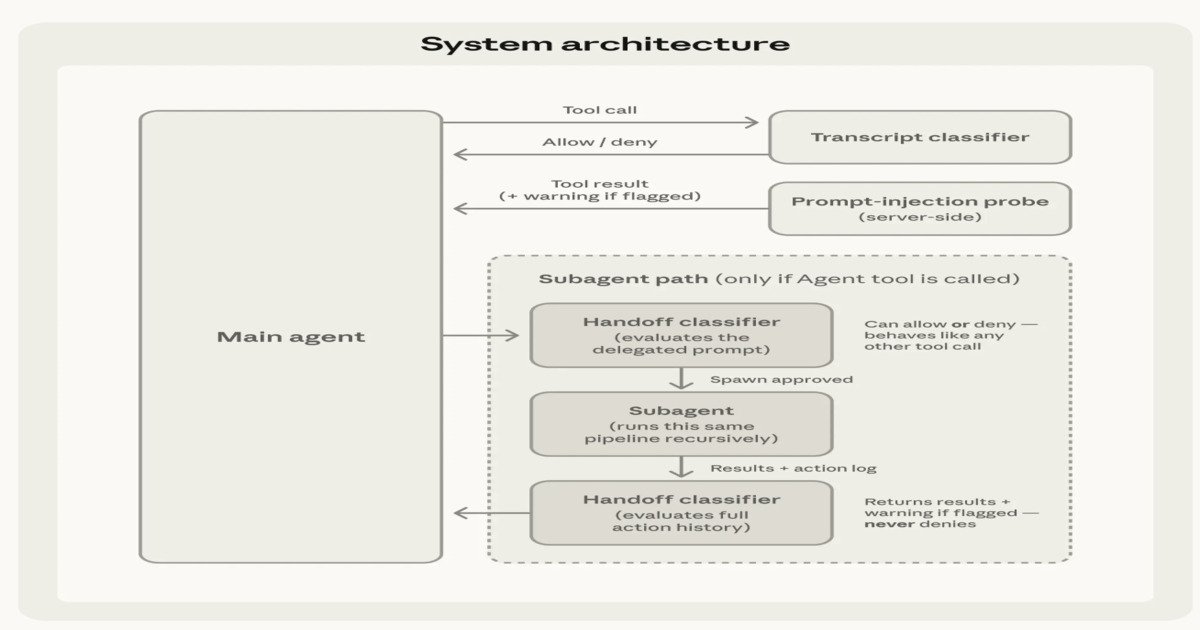

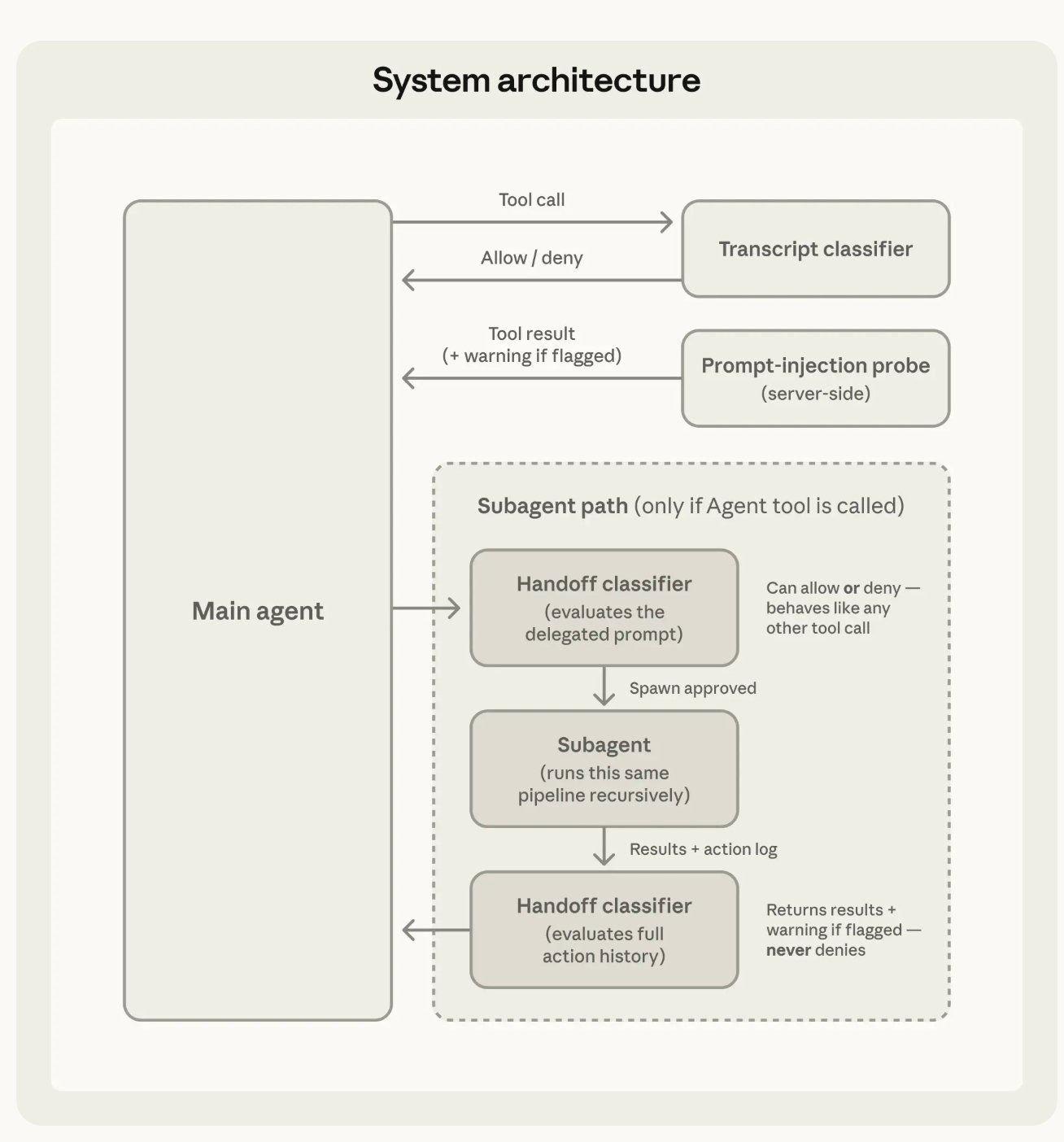

Auto Mode introduces a sophisticated layered safety and execution architecture that governs both input processing and action execution. At the input layer, the system inspects tool outputs—including file reads, shell results, and web responses—before incorporating them into the system context. When content appears malicious or attempts to alter instructions, warnings are injected to ensure it's treated as untrusted and doesn't override user intent.

At the execution layer, each proposed action undergoes evaluation before execution, functioning as an automated approval mechanism that filters safe operations while escalating ambiguous cases for additional checks. This approach reduces repetitive user intervention while preserving safeguards for high-impact operations.

The interface provides clear visual feedback during these processes. As Ankit Kalluraya, a Test Engineer, described, "In auto mode, the spinner now turns red when a permission check is triggered, giving you a clear visual signal that Claude is pausing for approval."

Two-Stage Classification for Efficiency and Safety

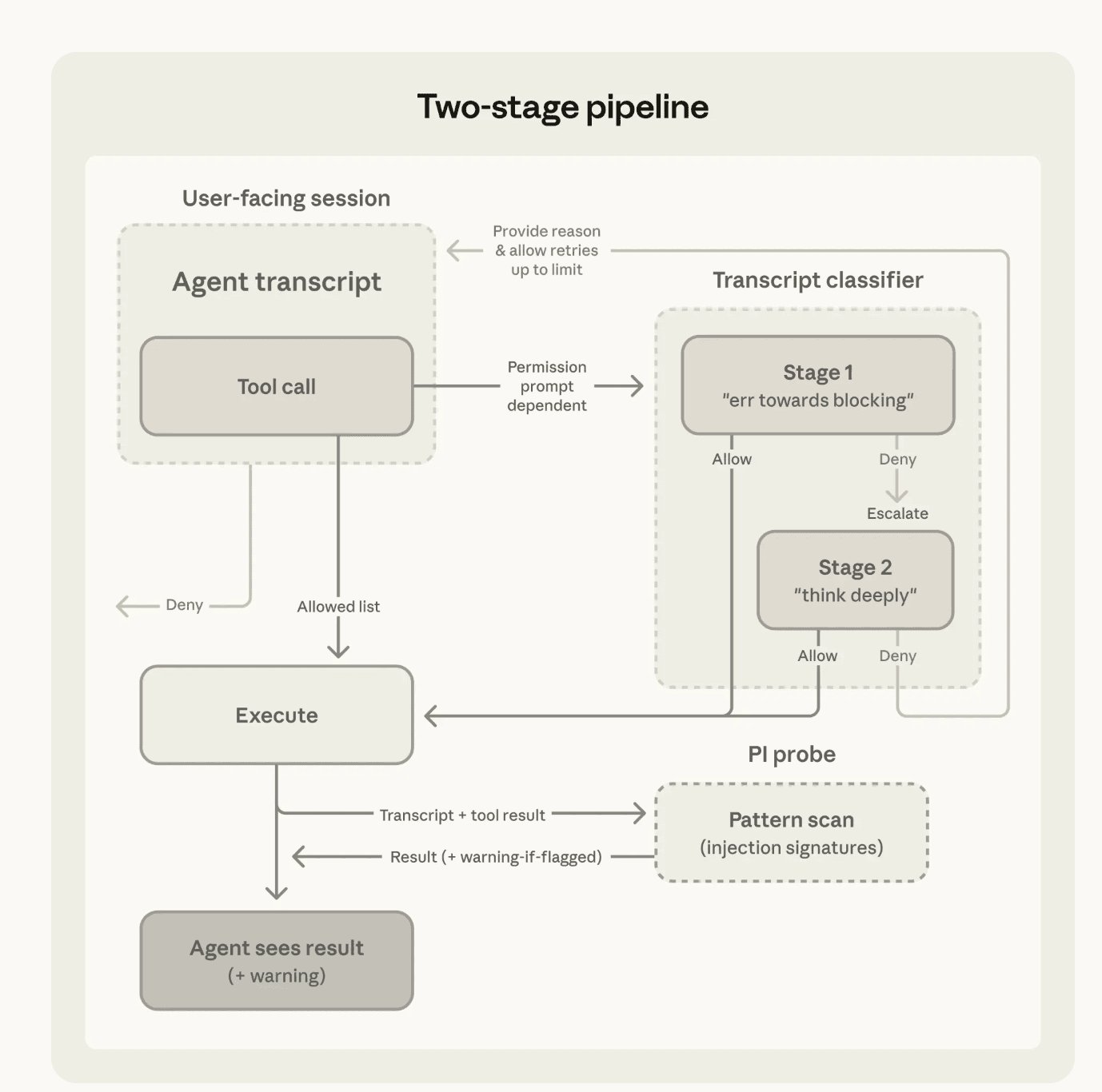

The system employs a sophisticated two-stage classification approach to balance efficiency with comprehensive safety coverage. The first stage implements a fast initial filter that processes most tool calls, allowing safe actions to proceed with minimal overhead. Only uncertain or potentially risky operations are escalated to deeper analysis in the second stage.

This approach improves recall for edge cases while controlling latency and compute costs. It maintains consistent enforcement of safety and intent alignment without creating unnecessary friction for routine operations. The architecture represents a careful balance between autonomous operation and human oversight.

Subagent Workflow Governance

Auto Mode extends safety considerations to subagent workflows through comprehensive validation mechanisms. During task delegation, outbound checks verify whether the assigned task aligns with user intent before execution begins. Upon completion, a return check evaluates the subagent's full execution history to detect potential prompt injection or manipulation during runtime.

If risks are identified, warnings are added before results are returned to the orchestrating agent. This multi-layered approach ensures that even when tasks are delegated to autonomous subagents, appropriate safety measures remain in place throughout the execution lifecycle.

Comparative Analysis with Competitor Solutions

When compared to other AI coding assistants, Claude Code Auto Mode distinguishes itself through its sophisticated safety architecture rather than pure autonomy. Competing solutions often focus on either complete automation with minimal safeguards or manual approval processes that hinder productivity.

GitHub Copilot, for example, provides code suggestions but lacks the comprehensive approval architecture of Claude Code. Similarly, Amazon CodeWhisperer focuses on generating code completions without the multi-layered safety checks that Auto Mode implements.

The approach differs significantly from Google's Bard or Microsoft's Copilot Chat, which operate primarily as conversational assistants rather than integrated development environments with autonomous capabilities. Claude Code Auto Mode represents a middle ground—sufficient autonomy to reduce developer friction while maintaining appropriate controls for sensitive operations.

Business Impact and Organizational Considerations

The introduction of Auto Mode raises important questions about organizational governance and development workflows. As Mykola Kondratiuk, Director at Playtika, noted, "With Auto Mode on, the AI is now the approver, not just the actor. Most governance docs still name a human there and haven't been updated."

This shift requires organizations to reconsider their development processes, approval chains, and quality assurance mechanisms. The balance between productivity gains and appropriate oversight becomes a strategic consideration rather than a technical implementation detail.

Mayank Agrawal, Lead Engineer at Zethra OS, highlighted a critical concern: "This is where resilience turns into a security problem." As AI systems gain more autonomy, organizations must develop new frameworks for oversight and validation that account for both the capabilities and limitations of these systems.

Migration Considerations

Organizations considering adoption of Claude Code Auto Mode should evaluate several factors:

- Existing Workflow Integration: How does Auto Mode fit into current development processes and toolchains?

- Security Posture: What additional safeguards are needed to complement Auto Mode's built-in protections?

- Team Training: How should developers be trained to work effectively with autonomous AI systems while maintaining appropriate oversight?

- Cost-Benefit Analysis: What productivity gains justify the potential risks and organizational changes required?

Future Trajectory

Anthropic has indicated plans to continue refining Auto Mode through expanded evaluation sets and iterative improvements. The company aims to catch enough high-risk actions to make autonomous operation safer than no guardrails while encouraging users to remain aware of residual risk and report issues.

As AI-assisted development continues to evolve, the balance between autonomy and control will remain a central consideration. Claude Code Auto Mode represents a significant step toward more productive AI-assisted development while maintaining appropriate safety measures—a direction likely to influence the broader AI development tools ecosystem.

For organizations evaluating AI-assisted development tools, Claude Code Auto Mode offers a compelling approach that balances productivity with appropriate oversight. The sophisticated safety architecture provides a model for how autonomous development tools can evolve without compromising security or control.

Comments

Please log in or register to join the discussion