The US Department of Defense is pressuring Anthropic to lift restrictions on military AI use, threatening legal action and contract termination if the company doesn't comply with demands for autonomous weapons and surveillance capabilities.

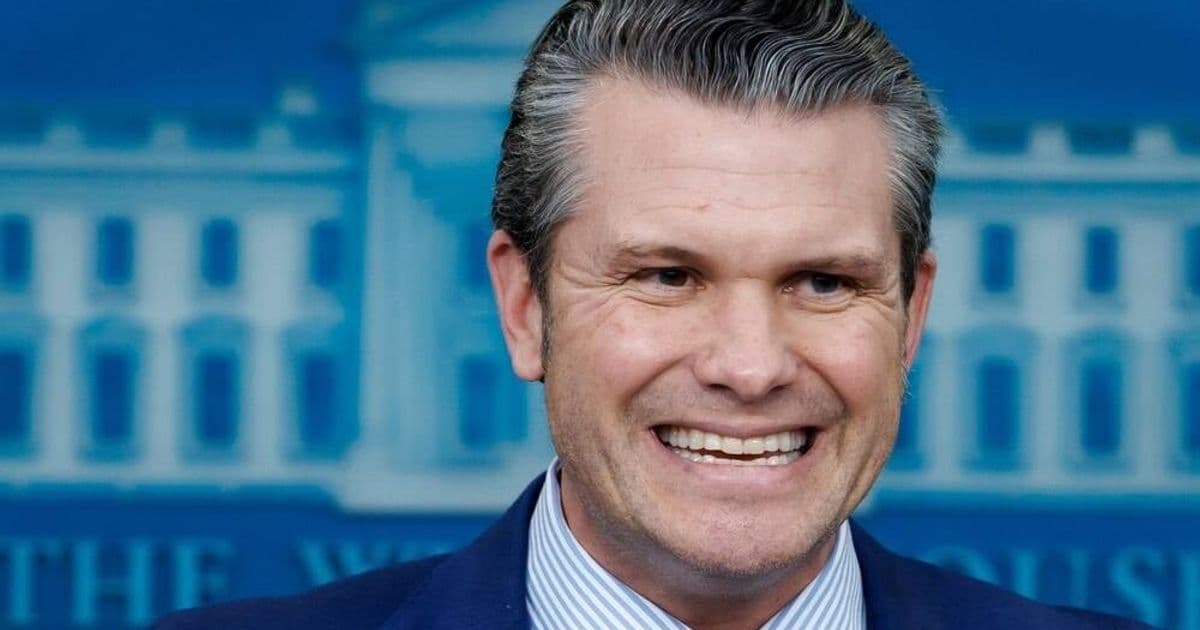

The US Department of Defense is escalating its confrontation with Anthropic over military use of the company's AI technology, with Defense Secretary Pete Hegseth threatening to invoke the Defense Production Act if the AI firm doesn't lift restrictions on autonomous weapons and domestic surveillance applications.

Pentagon's Ultimatum

The dispute reached a critical point after a Tuesday meeting between Anthropic CEO Dario Amodei and Defense Secretary Hegseth failed to resolve the impasse. According to senior Pentagon officials, the department is prepared to take several aggressive actions if Anthropic maintains its current restrictions:

- Invoke the Defense Production Act to compel Anthropic to accept military contracts

- Declare Anthropic a supply chain risk, potentially blacklisting it from government contracts

- Terminate the Pentagon's existing contract with Anthropic, worth up to $200 million

The Pentagon's frustration centers on Anthropic's refusal to allow its AI to be used for two purposes: autonomous weapons targeting without human intervention, and domestic surveillance of American citizens, even when such surveillance might be legally authorized.

Anthropic's Shifting Stance

In a development that may signal flexibility, Anthropic released its third iteration of the Responsible Scaling Policy on the same day as the Pentagon meeting. The new policy notably removes a key safety pledge that had been central to the company's approach for years.

Anthropic had previously committed to ceasing AI model training if it couldn't guarantee safety and to withholding model releases without proper risk mitigations. The company now cites competitive pressures in the AI industry as justification for abandoning these safeguards.

"We felt that it wouldn't actually help anyone for us to stop training AI models," Anthropic's science chief Jared Kaplan told Time. "We didn't really feel, with the rapid advance of AI, that it made sense for us to make unilateral commitments … if competitors are blazing ahead."

The Broader Context

The confrontation highlights the growing tension between AI safety advocates and military institutions eager to deploy advanced AI capabilities. The Pentagon argues that ensuring AI is used lawfully is the responsibility of the end user, not the technology provider.

This dispute occurs against the backdrop of increasing military AI adoption, with the US military already using AI for targeting in air strikes and exploring AI agents for decision-making and operational planning.

What's at Stake

The outcome of this standoff could have significant implications for the AI industry. Anthropic faces a difficult choice: maintain its safety principles and risk financial penalties, legal compulsion, and industry blacklisting, or compromise on its stated values to maintain its government business.

The company has not responded to requests for comment on whether it might be willing to comply with Pentagon demands to avoid these consequences.

As AI capabilities continue to advance rapidly, this conflict reflects a broader struggle over who controls the deployment of powerful AI systems and under what conditions they can be used. Those questions will likely become more contentious as the technology grows more capable of autonomous decision-making in sensitive domains.
