After fixing an unprecedented 423 security bugs in Firefox releases in April 2026, including 271 identified with Claude Mythos Preview, Mozilla shares the technical details of its agentic AI hardening pipeline, the nature of the vulnerabilities discovered, and actionable advice for open source projects looking to adopt similar defenses.

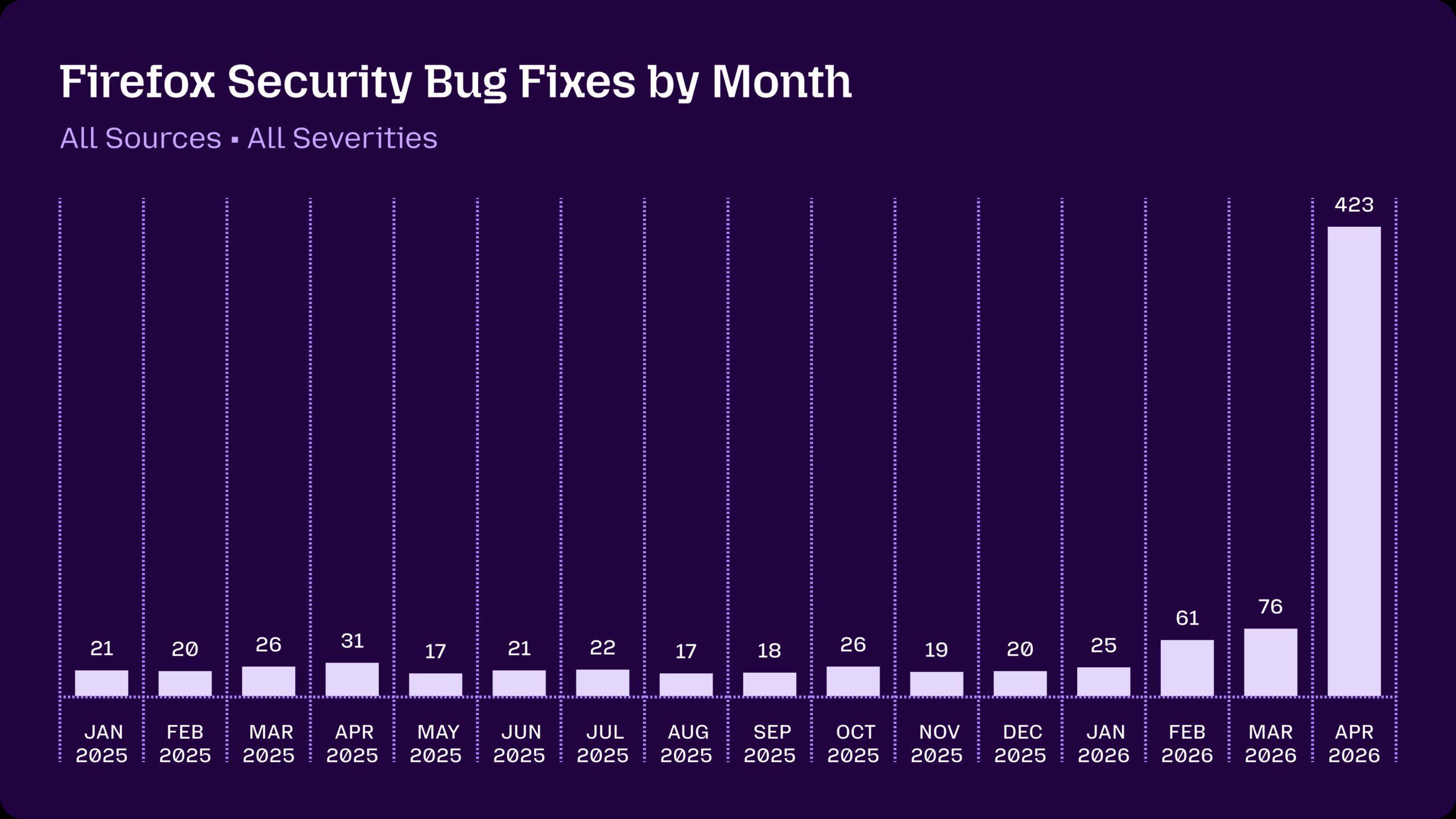

For years, open source maintainers have treated unsolicited AI-generated security reports as a nuisance: a flood of plausible but incorrect submissions that take more time to evaluate than they save in potential fixes. The asymmetric cost of these reports is difficult to overstate. Prompting a large language model to flag a potential vulnerability requires minimal effort, while verifying whether the report is accurate and actionable can take hours of engineering time. This dynamic shifted abruptly for the Firefox team over the past few months, as a combination of more capable models and purpose-built orchestration pipelines turned AI from a source of noise into a primary driver of vulnerability discovery, culminating in the identification and remediation of 423 security bugs across Firefox releases in April 2026 alone. That figure is a massive spike from the steady 20 to 30 monthly fixes shipped throughout 2025, and from the smaller uptick to 60 or 70 fixes in February and March 2026, as illustrated in the accompanying graph of Firefox security bug fix volumes.

The decision to disclose this unprecedented volume of fixes, and to unhide a sample of the underlying bug reports weeks earlier than the typical several-month embargo period, reflects the urgency of the shift underway in software security, as detailed in a new post on Mozilla Hacks, the Mozilla developer blog. Mozilla typically keeps vulnerability details private until users have had sufficient time to update to patched versions, but the rapid evolution of AI capabilities demanded a calculated exception to help other projects understand both the potential and the pitfalls of adopting these tools.

The shift from AI-driven noise to signal rests on two core factors, the first being the dramatic improvement in model capabilities over recent months. Early experiments with static analysis using models like GPT-4 and Sonnet 3.5 showed promise but produced unacceptably high false positive rates, making them impractical to scale across a codebase as large and complex as Firefox. The second factor is the refinement of techniques to steer, scale, and stack models, filtering out noise to generate large amounts of actionable signal. This is not a matter of simply prompting a model to find bugs, but of building a full pipeline that integrates model outputs with existing development and security workflows.

To demonstrate the depth of the vulnerabilities identified, Mozilla unhid a sample of 12 bugs from the April 2026 fixes, drawn from a range of browser subsystems. These include a JIT optimization flaw in WebAssembly code that could create a fake-object primitive with arbitrary read/write capabilities, a 15-year-old bug in the element triggered by orchestrating edge cases across distant browser subsystems, and a 20-year-old XSLT bug involving reentrant key() calls that corrupt hash table memory. Many of these bugs are sandbox escapes, which require an attacker to first compromise the sandboxed content process that renders web pages, then chain the escape with additional exploits to gain control of the privileged parent process. Sandbox escapes are notoriously difficult to find with traditional fuzzing because they require complex reasoning across multiprocess boundaries, and Mozilla notes that AI analysis provides far more comprehensive coverage of this critical attack surface than previous techniques.

Just as telling as the bugs the models found are the attacks that failed. Mozilla previously made an architectural change to freeze prototypes by default in the parent process, rather than fixing individual prototype pollution vulnerabilities one by one. When auditing harness logs, the team saw numerous attempts by models to exploit prototype pollution to escape the sandbox, all of which were thwarted by this design change. Observing this direct payoff from previous hardening work provided as much value as the new bug fixes, because it validated the effectiveness of architectural defenses against both human and AI-driven attack attempts.

Building an effective pipeline required moving beyond static analysis to agentic harnesses that can dynamically test hypotheses about vulnerabilities. Unlike earlier static analysis tools, these harnesses can create and run reproducible test cases, modify code within defined constraints, and verify whether a suspected bug is actually exploitable. Mozilla built its harness atop existing fuzzing infrastructure, starting with small-scale experiments using Claude Opus 4.6 to hunt for sandbox escapes. Even with this earlier model, the team identified previously unknown vulnerabilities that required reasoning across multiprocess browser engine code, a task that had been largely out of reach for automated tools.
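The verification step described above, running a candidate test case and checking whether it actually crashes, can be sketched in a few lines. This is only an illustration under stated assumptions, not Mozilla's harness: the `verify_candidate` function and `Verdict` type are hypothetical names, and the check simply looks for the `ERROR: AddressSanitizer:` text that AddressSanitizer prints at the start of a report.

```python
import subprocess
import sys
from dataclasses import dataclass

# Text that AddressSanitizer prints at the start of an error report.
ASAN_MARKER = "ERROR: AddressSanitizer:"


@dataclass
class Verdict:
    reproduced: bool
    summary: str


def verify_candidate(command: list[str], timeout_s: float = 30.0) -> Verdict:
    """Run a candidate test case and report whether a sanitizer crash was seen.

    `command` is whatever launches the test case (hypothetical here); a hang
    is treated as "not reproduced" rather than as a finding.
    """
    try:
        proc = subprocess.run(
            command, capture_output=True, text=True, timeout=timeout_s
        )
    except subprocess.TimeoutExpired:
        return Verdict(False, "timeout")
    for line in proc.stderr.splitlines():
        if ASAN_MARKER in line:
            return Verdict(True, line.strip())
    return Verdict(False, f"exit code {proc.returncode}, no sanitizer report")
```

A gate like this is what separates a reproducible finding from a plausible-sounding report: a hypothesis only moves forward if the test case produces an observable crash.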

Initial experiments were supervised in real time via terminal, allowing engineers to tune prompts and logic before scaling. Once the harness proved effective, the team parallelized jobs across multiple ephemeral virtual machines, each tasked with scanning a specific target file and writing findings to a shared storage bucket. Scaling the effort required integrating the discovery system with the full security bug lifecycle, including deduplication against known issues, triage, fix tracking, and release management. While the agentic harness itself can be reused across projects, this end-to-end pipeline is inherently project-specific, reflecting the unique semantics, tooling, and processes of the Firefox codebase. Standing up this pipeline required extensive iteration and a tight feedback loop with the Firefox engineers responsible for triaging and fixing incoming bugs.
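The fan-out and deduplication steps can be sketched as follows. This is a minimal illustration, not Mozilla's implementation: a thread pool stands in for the ephemeral virtual machines, an in-memory dictionary stands in for the shared storage bucket, and the finding fields (`file`, `signature`) and function names are assumptions made for the example.

```python
import hashlib
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def fingerprint(finding: dict) -> str:
    """Stable deduplication key: hash of the file path plus crash signature."""
    raw = f"{finding['file']}\n{finding['signature']}".encode()
    return hashlib.sha256(raw).hexdigest()


def run_scan(
    targets: list[str],
    scan_one: Callable[[str], list[dict]],
    known: set[str],
    max_workers: int = 4,
) -> list[dict]:
    """Scan each target in parallel and keep only findings not seen before.

    `scan_one` is a hypothetical per-file job; `known` holds fingerprints of
    issues that are already tracked.
    """
    new: dict[str, dict] = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map yields results in the same order as `targets`.
        for findings in pool.map(scan_one, targets):
            for finding in findings:
                key = fingerprint(finding)
                if key not in known and key not in new:
                    new[key] = finding
    return list(new.values())
```

Deduplicating before triage matters because parallel jobs scanning neighboring files can easily report the same underlying bug more than once.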

Over 100 people contributed to the effort to ship the most secure Firefox release to date, including engineers writing and reviewing patches, teams building and scaling the pipeline, triage specialists, QA testers, and release managers. The volume of bugs, 271 identified specifically by Claude Mythos Preview for Firefox 150, plus additional fixes in point releases 149.0.2, 150.0.1, and 150.0.2, created an unprecedented workload that required long hours from the entire team. Mozilla continues to find bugs through traditional means, including fuzzing and manual inspection, and has seen a significant uptick in external security reports in recent months, a trend mirrored across other major software projects.

The total number of security bugs fixed in April 2026 was 423, a figure that breaks down into 271 found by Claude Mythos Preview, 41 externally reported bugs, and 111 internal bugs split between other AI models in the pipeline and traditional fuzzing techniques. Mozilla groups internally reported bugs into rollup CVEs, as tracked in the foundation-security-advisories repository, with three rollups in Firefox 150 totaling 316 bugs, meaning some fixes from the Mythos Preview effort were shipped in earlier or later point releases. The team applies security severity ratings ranging from critical to low, with 180 of the 271 Mythos Preview bugs rated sec-high, 80 sec-moderate, and 11 sec-low. Sec-high bugs are those that can be triggered by normal user behavior, such as visiting a malicious web page, but do not necessarily represent immediate remote code execution risks thanks to Firefox's defense-in-depth architecture. Exploiting a single sec-high bug typically only achieves code execution within the sandboxed content process, requiring additional chained exploits to escape the sandbox, bypass OS-level mitigations like ASLR, and gain full system control. Mozilla classifies bugs based on crash symptoms reported by tools like AddressSanitizer rather than building full exploits, a choice that reduces false negatives and allows the team to focus on finding and fixing more vulnerabilities.
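The breakdown above can be checked with a few lines of arithmetic. The figures are the ones quoted in the article; the variable and function names are only illustrative.

```python
total_fixed = 423

# Sources of the April 2026 fixes, as quoted in the article.
by_source = {
    "Claude Mythos Preview": 271,
    "externally reported": 41,
    "other internal (other AI models, fuzzing)": 111,
}

# Severity ratings of the 271 Mythos Preview bugs.
mythos_by_severity = {"sec-high": 180, "sec-moderate": 80, "sec-low": 11}


def share_pct(part: int, whole: int) -> float:
    """Percentage of `whole` represented by `part`, rounded to one decimal."""
    return round(100 * part / whole, 1)


# Both breakdowns add up to the totals they are meant to explain.
assert sum(by_source.values()) == total_fixed
assert sum(mythos_by_severity.values()) == by_source["Claude Mythos Preview"]

mythos_share = share_pct(by_source["Claude Mythos Preview"], total_fixed)
high_share = share_pct(mythos_by_severity["sec-high"], 271)
print(f"Mythos Preview share of all fixes: {mythos_share}%")
print(f"sec-high share of Mythos Preview bugs: {high_share}%")
```

Both sums are consistent, and the ratios work out to roughly 64% of the month's fixes coming from Mythos Preview and roughly 66% of those being rated sec-high.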

Once an end-to-end pipeline is in place, swapping in new models as they become available is trivial, and each model upgrade improves the entire system's ability to find bugs, generate test cases, and articulate impact. Mozilla recommends that any software project start experimenting with agentic harnesses and modern models immediately, even beginning with simple prompts before iterating on orchestration and tooling. The core loop remains consistent across iterations, tasking the model with finding bugs in a specific code area and generating a reproducible test case. Current scanning is focused on human-selected target files, but the team plans to integrate the pipeline into continuous integration systems to scan patches as they land, a shift that is expected to improve coverage by catching vulnerabilities earlier in the development process.
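The core loop described above can be sketched as a small function that is independent of any particular model. Everything here is an assumption made for illustration: `propose` stands in for a call to whichever model is plugged in, `reproduces` stands in for the project's own test-case check, and the `Candidate` fields are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Candidate:
    target: str        # code area the model was asked to examine
    description: str   # the model's hypothesis about the bug
    test_case: str     # test case that is supposed to trigger it


def hunt(
    target: str,
    propose: Callable[[str, str], Optional[Candidate]],
    reproduces: Callable[[Candidate], bool],
    max_attempts: int = 3,
) -> Optional[Candidate]:
    """Ask for a bug hypothesis with a test case; keep it only if it reproduces.

    Failed attempts are fed back to `propose` so the next hypothesis can differ.
    """
    feedback = ""
    for _ in range(max_attempts):
        candidate = propose(target, feedback)
        if candidate is None:
            return None  # the model has nothing (more) to suggest
        if reproduces(candidate):
            return candidate
        feedback = (
            f"Test case for '{candidate.description}' did not reproduce; "
            "try another hypothesis."
        )
    return None
```

Keeping the model behind a plain callable is one way to make swapping in a newer model a small change, since the loop and the reproduction check stay the same.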

The current moment presents both peril and opportunity for the software ecosystem. Attackers will inevitably adopt these same AI capabilities to find vulnerabilities faster than defenders can fix them, making urgent action necessary across the industry. Mozilla's decision to share its pipeline details and sample bugs is a call for collective defense, urging projects to adopt these tools now to harden their code before adversarial use scales. This effort also validates the long-term value of architectural security investments, as layered defenses like prototype freezing and RLBox sandboxing continue to block both human and AI-driven attack attempts. Even mature defenses benefit from this scrutiny: RLBox, which Firefox uses to isolate third-party libraries, had a verification logic gap that the AI pipeline exploited and that was quickly fixed.

Counter-perspectives to this optimistic outlook are worth considering. The asymmetric cost of AI reports has not disappeared entirely, as even a well-tuned pipeline requires significant human oversight to triage, verify, and fix every identified bug. The 423 bugs fixed in April represent a massive workload that strained the Mozilla team, a challenge that smaller open source projects with fewer resources may struggle to manage. Reliance on proprietary models like Claude Mythos Preview raises questions about access, cost, and reproducibility, as not all projects can afford or access state-of-the-art closed models. There is also the risk that adversarial actors will use these tools to find zero-day exploits faster than defenders can patch them, a dynamic that could widen the gap between attack and defense if adoption is not widespread.

Another limitation is that AI-driven discovery does not replace existing security practices, but complements them. Fuzzing remains critical for finding certain classes of bugs, manual review is still required for every patch, and architectural changes like prototype freezing provide foundational defenses that the models, in Mozilla's harness logs, repeatedly failed to bypass. The models also require strict guardrails, such as only allowing code modifications within the sandboxed content process when crafting sandbox escape proofs, to prevent misuse of the harness itself. Mozilla's bug bounty program enforces similar constraints, limiting the scope of allowed testing to protect users and the project.
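A guardrail of the kind described above, restricting which code a harness may modify, can be approximated with a path allowlist. This is a rough sketch under stated assumptions: the function names are hypothetical, and the directory names in the usage note are made-up placeholders, not the real Firefox source layout.

```python
import posixpath


def is_allowed(path: str, allowed_roots: tuple[str, ...]) -> bool:
    """Return True only if `path` stays inside one of the allowed directories.

    Paths are normalized first so that "../" segments cannot be used to step
    out of an allowed root. Absolute paths are rejected outright.
    """
    normalized = posixpath.normpath(path)
    if normalized.startswith("/") or normalized.startswith(".."):
        return False
    return any(
        normalized == root or normalized.startswith(root + "/")
        for root in allowed_roots
    )


def rejected_paths(modified: list[str], allowed_roots: tuple[str, ...]) -> list[str]:
    """List every modified path that falls outside the allowed roots."""
    return [p for p in modified if not is_allowed(p, allowed_roots)]
```

For example, with a hypothetical allowed root of `content_process`, a change to `content_process/renderer.cpp` passes, while `parent_process/ipc.cpp` and `content_process/../parent_process/ipc.cpp` are both rejected. A harness could refuse to run any proof whose changes produce a non-empty rejection list.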

In conclusion, the integration of agentic AI into Firefox's security workflow represents a pivotal shift in how open source projects can approach vulnerability management. The 423 bugs fixed in April 2026 are not just a tally of fixes, but evidence that scalable AI-driven hardening is possible when models are paired with project-specific pipelines that integrate with existing development workflows. The journey from AI report noise to high-signal discovery required iteration, significant engineering investment, and a willingness to adapt to rapidly evolving model capabilities. For the broader software ecosystem, the lesson is clear: the tools to harden code against AI-driven attacks are available now, and collective adoption is necessary to secure the internet against the next generation of threats. Projects that start experimenting today will be better positioned to take advantage of future model improvements, while those that delay risk falling behind attackers who are already adopting these capabilities. The work done by Mozilla's team demonstrates that the future of software security is not a choice between human expertise and AI, but a synthesis of both that uses the strengths of each to build more resilient systems.
