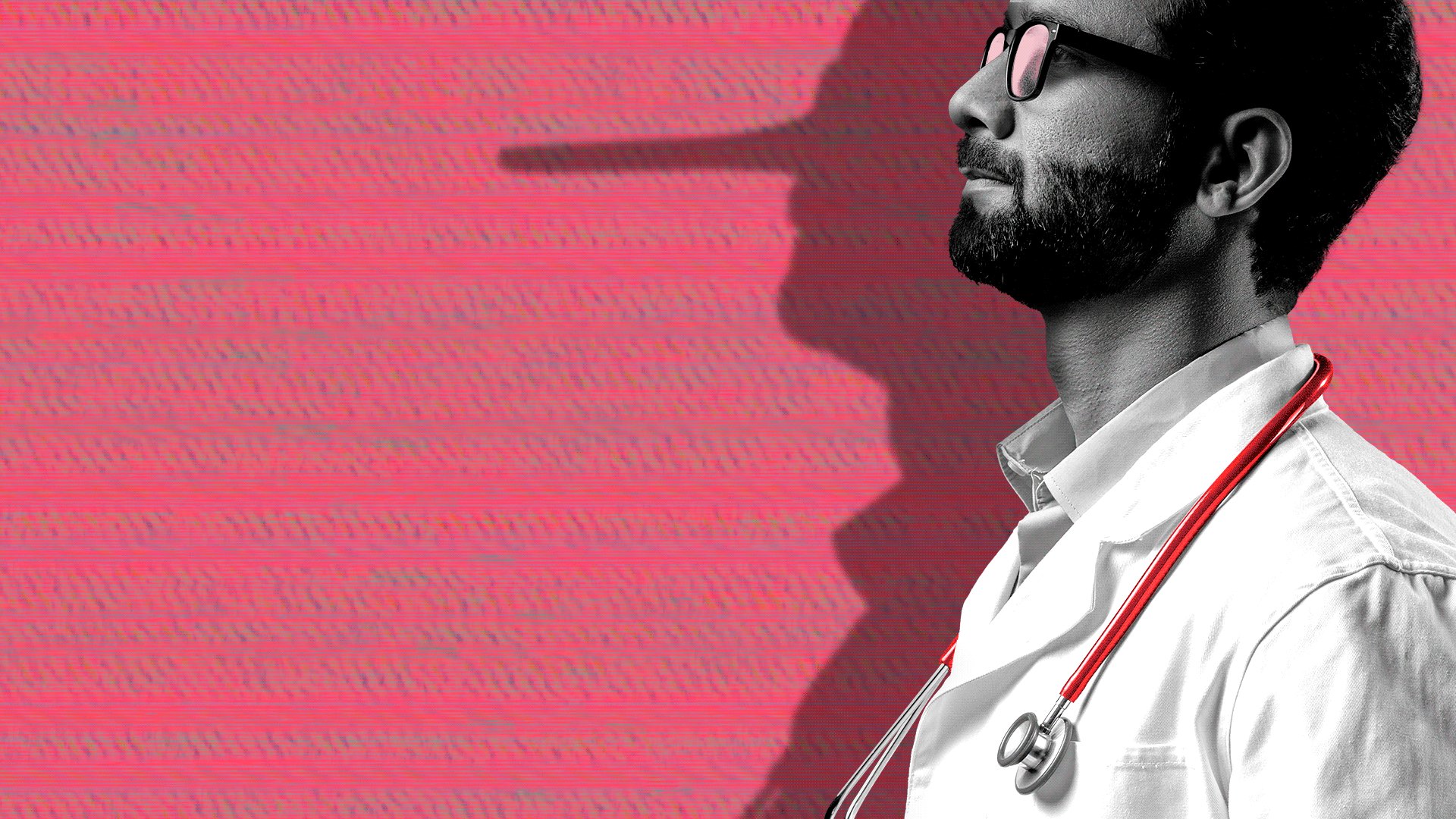

Healthcare professionals face increasing threats from AI-generated deepfakes, with financial losses, reputational damage, and potential patient safety concerns driving an urgent industry response.

The healthcare industry is confronting an escalating crisis as AI-generated deepfakes targeting physicians and medical professionals proliferate at an alarming rate. According to recent data from cybersecurity firm DeepTrace, deepfake content in the healthcare sector has increased by 450% over the past 18 months, with over 12,000 documented cases involving medical professionals since 2022.

Financial losses from these incidents have reached an estimated $87 million globally, with individual doctors reporting losses ranging from $5,000 to $250,000 per incident. The most common attack vectors include fake medical advice attributed to legitimate physicians, fabricated credentials, and altered video testimonials that damage professional reputations.

"We're seeing a shift from simple impersonation to sophisticated, targeted attacks that can have real-world consequences for patient care," said Dr. Sarah Mitchell, a cybersecurity researcher specializing in healthcare at the University of Pennsylvania. "When patients encounter convincing deepfakes of their doctors providing harmful advice, the trust between healthcare providers and patients erodes, creating a ripple effect that impacts the entire healthcare system."

The market for deepfake detection technology has responded to this growing threat, with the healthcare security segment expected to reach $2.3 billion by 2027, growing at a compound annual rate of 31.2%. Companies like Sensity and Reality Defender have developed specialized solutions for healthcare providers, with detection accuracy rates now exceeding 94% for professionally generated deepfakes.

Strategic implications extend beyond individual practitioners to healthcare systems and insurance providers. Major hospital networks like Mayo Clinic and Cleveland Clinic have implemented mandatory deepfake awareness training for all staff, while malpractice insurance carriers have begun adding clauses specifically addressing deepfake-related claims. The average cost of implementing comprehensive deepfake protection measures across a medium-sized hospital network now exceeds $1.2 million annually.

Regulatory responses remain fragmented. The FDA has issued guidance on AI in medical devices but no specific regulations addressing deepfake threats. The Healthcare Information and Management Systems Society (HIMSS) has established a task force to develop industry standards, with recommendations expected by Q4 2024. Meanwhile, state-level legislation is emerging: California and New York are proposing bills that would criminalize the creation of deepfakes targeting healthcare professionals, with penalties reaching $500,000 per offense.

For individual physicians, the financial and reputational risks have become significant business concerns. Dr. James Peterson, a plastic surgeon in Beverly Hills, reported a deepfake campaign that cost him $180,000 in lost business and required $45,000 in legal and reputation management expenses. "The impact goes beyond financial loss," Peterson stated. "When potential patients see convincing videos of me recommending procedures I would never perform, it undermines years of building trust and credibility."

The healthcare industry's response has evolved from reactive to proactive, with major players investing in both defensive technologies and public awareness campaigns. The American Medical Association has launched a comprehensive deepfake education initiative, while telehealth platforms like Teladoc and Amwell have implemented multi-layered verification systems for all virtual consultations.

As AI technology continues to advance, the healthcare sector faces a persistent cat-and-mouse game with those creating malicious deepfakes. The strategic imperative remains clear: develop robust detection capabilities, implement comprehensive education programs, and establish clear legal frameworks to protect both healthcare professionals and the patients who rely on them.
