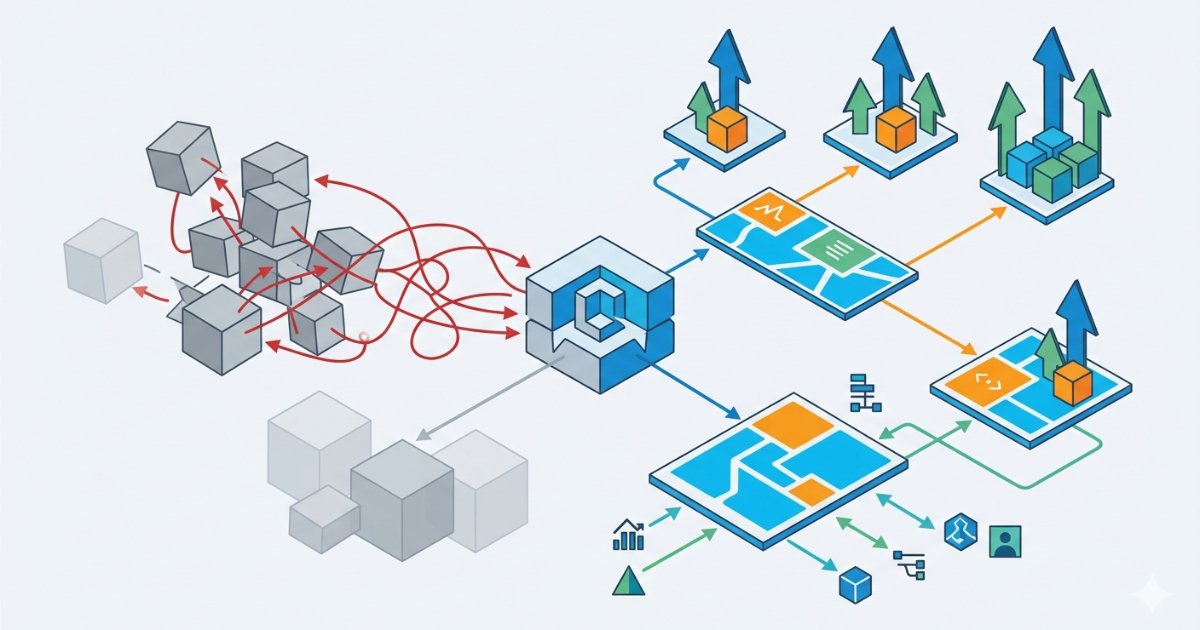

Grafana Labs has released version 4 of its Kubernetes Monitoring Helm chart, the most significant architectural update since the chart's introduction. The release, announced in April 2026 by Pete Wall and Beverly Buchanan, tackles configuration challenges that emerged as users scaled their monitoring deployments from small clusters to large, multi-environment setups. It changes how destinations, collectors, and telemetry services are managed, aiming to make the chart more predictable and flexible for teams running anything from a single cluster to a large multi-cluster environment.

Architectural Transformations

The Kubernetes Monitoring Helm chart serves as a critical component for sending metrics, logs, traces, and profiles from Kubernetes clusters to Grafana Cloud or self-hosted Grafana instances. Version 4 introduces fundamental changes to how the chart structures and manages monitoring components.

Destination Configuration: From Lists to Maps

One of the most impactful changes is the conversion of destinations from a list-based to a map-based configuration. In version 3, destinations were defined as a list of objects, which created significant challenges for teams using GitOps workflows or managing multiple clusters with shared configuration files.

When overriding properties like passwords, teams had to reference destinations by their position in the list. If the order changed during configuration updates, overrides would silently apply to the wrong targets, leading to authentication failures or misrouted data.

Version 4 assigns each destination a stable name, so a reference like destinations.prometheus.auth.password always points to the Prometheus destination regardless of ordering. This makes multi-cluster GitOps workflows built on tools like Argo CD, Terraform, or Flux more reliable, because Helm merges maps from multiple values files key by key, whereas a list supplied in an override replaces the original list wholesale.
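To see why position-based overrides are fragile, the following minimal Python sketch models values as plain dictionaries. The `deep_merge` helper is a simplified stand-in for Helm's value coalescing (maps merge key by key, anything else, including a list, is replaced), and the destination names and fields are hypothetical rather than the chart's actual schema.

```python
import copy
from typing import Any


def deep_merge(base: Any, override: Any) -> Any:
    """Simplified model of Helm value coalescing: dicts merge key by key,
    while any other value, including a list, replaces the base outright."""
    if isinstance(base, dict) and isinstance(override, dict):
        merged = copy.deepcopy(base)
        for key, value in override.items():
            merged[key] = deep_merge(merged[key], value) if key in merged else copy.deepcopy(value)
        return merged
    return copy.deepcopy(override)


def set_password_by_index(destinations: list, index: int, password: str) -> list:
    """Mimic a positional override such as destinations[0].auth.password."""
    updated = copy.deepcopy(destinations)
    updated[index].setdefault("auth", {})["password"] = password
    return updated


# v3-style list: a shared override file assumes Prometheus is entry 0.
v3_destinations = [
    {"name": "prometheus", "type": "prometheus"},
    {"name": "loki", "type": "loki"},
]
# Someone reorders the list; the positional override now lands on Loki.
reordered = list(reversed(v3_destinations))
wrong = set_password_by_index(reordered, 0, "s3cret")

# v4-style map: the override names its target, so ordering is irrelevant.
v4_destinations = {
    "loki": {"type": "loki"},
    "prometheus": {"type": "prometheus"},
}
right = deep_merge(v4_destinations, {"prometheus": {"auth": {"password": "s3cret"}}})

print(wrong[0]["name"], "received the password")  # loki received the password
print(right["prometheus"]["auth"]["password"])    # s3cret
```

The same helper shows why lists are awkward to override at all: `deep_merge({"d": [1, 2]}, {"d": [3]})` yields `{"d": [3]}`, so an override file that needs to change one list entry has to restate the entire list.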

Collector Restructuring for Explicit Feature Assignment

The chart's collector system has undergone similar restructuring. Version 3 used hard-coded collector names such as alloy-metrics, alloy-logs, and alloy-singleton, each tied to specific deployment types. The routing of features to collectors was embedded in the chart's internal logic, requiring users to examine source code rather than configuration files to understand which features ran on which collectors.

Version 4 eliminates these hard-coded names entirely. Users now define collectors as a map, give each one a deployment preset such as clustered, statefulset, or daemonset, and explicitly assign features to named collectors, which removes the hidden routing logic from the chart internals. The chart also refuses to guess. As Wall and Buchanan note: "If you forget to specify, it will give you a message telling you which feature still needs to be assigned to a collector rather than silently picking one for you."
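The explicit-assignment rule can be modeled as a small validation pass. This Python sketch is hypothetical: the dataclasses, collector names, and message wording are illustrative and are not the chart's template logic. Only the preset names and the "report instead of guessing" behavior come from the release description.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Collector:
    preset: str  # deployment preset, e.g. "clustered", "statefulset", "daemonset"


@dataclass
class Feature:
    enabled: bool
    collector: Optional[str] = None  # name of the collector that should run it


def check_assignments(collectors: Dict[str, Collector],
                      features: Dict[str, Feature]) -> List[str]:
    """Report every enabled feature that is unassigned or points at a
    collector that does not exist, rather than silently picking one."""
    problems: List[str] = []
    for name, feature in sorted(features.items()):
        if not feature.enabled:
            continue
        if feature.collector is None:
            problems.append(f"feature '{name}' still needs to be assigned to a collector")
        elif feature.collector not in collectors:
            problems.append(
                f"feature '{name}' is assigned to undefined collector '{feature.collector}'"
            )
    return problems


# Hypothetical collector and feature names, for illustration only.
collectors = {
    "cluster-wide": Collector(preset="clustered"),
    "per-node": Collector(preset="daemonset"),
}
features = {
    "clusterMetrics": Feature(enabled=True, collector="cluster-wide"),
    "hostMetrics": Feature(enabled=True, collector="per-nodes"),  # typo in the name
    "podLogs": Feature(enabled=True),                              # never assigned
    "costMetrics": Feature(enabled=False),                         # disabled, ignored
}

messages = check_assignments(collectors, features)
for message in messages:
    print(message)
```

Failing loudly on both the forgotten assignment and the misspelled collector name is what makes the routing visible in configuration instead of buried in chart internals.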

Explicit Backing Service Management

A critical improvement in version 4 is the explicit separation of backing service deployment from feature configuration. In version 3, enabling a feature like clusterMetrics would silently deploy supporting services such as Node Exporter, kube-state-metrics, and OpenCost. This caused problems for teams that already ran these services in their clusters, leading to duplicate deployments without warning.

Version 4 introduces a telemetryServices key that makes service deployment an explicit step. Teams can instruct the chart to skip deploying services they already manage and point features at the existing instances instead, which eliminates surprise deployments and leaves teams in control of exactly what the chart installs.
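A rough Python model of that explicit step: for each backing service an enabled feature needs, the configuration must either ask for a deployment or name an existing instance, and anything left unspecified is reported rather than deployed. The feature-to-service mapping, the `deploy`/`endpoint` fields, and the address below are assumptions for illustration, not the actual telemetryServices schema.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical feature-to-service dependencies, for illustration only.
REQUIRED_SERVICES: Dict[str, Set[str]] = {
    "clusterMetrics": {"kube-state-metrics"},
    "hostMetrics": {"node-exporter"},
    "costMetrics": {"opencost"},
}


def plan_services(enabled_features: Set[str],
                  service_config: Dict[str, dict]) -> Tuple[Dict[str, str], List[str]]:
    """Decide, per backing service, whether to deploy it or reuse an existing
    instance. A needed service with no explicit entry is reported, never
    deployed implicitly."""
    plan: Dict[str, str] = {}
    missing: List[str] = []
    for feature in sorted(enabled_features):
        for service in sorted(REQUIRED_SERVICES.get(feature, set())):
            cfg = service_config.get(service, {})
            if cfg.get("deploy"):
                plan[service] = "deploy"
            elif cfg.get("endpoint"):
                plan[service] = f"reuse {cfg['endpoint']}"
            else:
                missing.append(f"{feature} needs {service}: deploy it or point to an existing instance")
    return plan, missing


plan, missing = plan_services(
    {"clusterMetrics", "hostMetrics", "costMetrics"},
    {
        # Already running in the cluster: reuse it (placeholder address).
        "kube-state-metrics": {"deploy": False, "endpoint": "kube-state-metrics.monitoring.svc:8080"},
        # Not yet running: let the chart deploy it.
        "node-exporter": {"deploy": True},
    },
)
print(plan)
print(missing)
```

Here kube-state-metrics is reused, Node Exporter is deployed, and OpenCost is flagged because nobody said what to do with it, which is the opposite of v3's silent deployment.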

Modular Cluster Metrics Configuration

The handling of cluster metrics has been reorganized into three separate features: clusterMetrics, hostMetrics, and costMetrics. Version 3 combined Kubernetes cluster metrics, Linux and Windows host metrics, energy metrics via Kepler, and cost metrics via OpenCost within a single configuration block.

This separation in version 4 allows teams to enable only the metrics they need, with each feature having its own values file and exposing only relevant configuration options. This modular approach reduces configuration complexity and potential conflicts.

Memory Optimization in Pod Log Pipeline

Version 4 addresses a significant memory usage issue in the pod log pipeline. In version 3, the chart applied all Kubernetes pod labels and annotations as log labels, then used a labelsToKeep list to filter them down. This required Alloy to allocate memory for potentially hundreds of labels only to discard most of them.

Some users reported memory problems in their log-collecting Alloy instances directly attributed to this behavior. Version 4 removes labelsToKeep entirely; pod labels and annotations are no longer applied in bulk. Instead, users explicitly declare which labels they want promoted. According to Grafana documentation, adding a label is now a one-line change rather than a full redefinition of a default list.
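A toy Python model makes the memory argument concrete by counting how many label entries each approach materializes for a single pod. It does not reflect Alloy's internals; `labels_to_keep` mirrors v3's labelsToKeep, while `promote` is a hypothetical stand-in for v4's opt-in list, whose actual key name is not given here.

```python
from typing import Dict, Iterable, Tuple


def v3_style(pod_metadata: Dict[str, str],
             labels_to_keep: Iterable[str]) -> Tuple[Dict[str, str], int]:
    """Copy every pod label and annotation first, then filter (v3 pattern)."""
    attached = dict(pod_metadata)  # every entry is materialized up front
    keep = set(labels_to_keep)
    kept = {k: v for k, v in attached.items() if k in keep}
    return kept, len(attached)


def v4_style(pod_metadata: Dict[str, str],
             promote: Iterable[str]) -> Tuple[Dict[str, str], int]:
    """Copy only the labels that were explicitly requested (v4 pattern)."""
    kept = {k: pod_metadata[k] for k in promote if k in pod_metadata}
    return kept, len(kept)


# A pod with 200 annotations plus the two labels anyone actually queries on.
pod = {f"example.com/annotation-{i}": "value" for i in range(200)}
pod.update({"app": "checkout", "team": "payments"})

old_kept, old_allocated = v3_style(pod, ["app", "team"])
new_kept, new_allocated = v4_style(pod, ["app", "team"])
print(old_allocated, new_allocated)  # 202 2
```

Both approaches end with the same two labels; the difference is that the v3 pattern pays for all 202 entries before throwing 200 of them away, and that cost repeats for every pod the collector tracks.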

Developer Experience and Operational Impact

These architectural changes collectively improve both developer experience and operational reliability. The shift from implicit to explicit behavior makes the chart more predictable and easier to debug. Teams can now trace exactly which services are deployed and how features are assigned to collectors.

The log pipeline change should lower the memory footprint of log-collecting Alloy instances, particularly in clusters with high pod churn or with pods that carry large numbers of labels and annotations. The explicit management of backing services prevents resource conflicts and duplicate deployments, which is especially valuable in large organizations with standardized monitoring stacks.

For teams managing multiple clusters, the map-based destination configuration eliminates the risk of misconfiguration when sharing or overriding settings across environments. This is particularly important for organizations implementing GitOps workflows at scale.

Comparison with Alternative Approaches

The Grafana Kubernetes Monitoring Helm chart serves a distinct purpose compared to alternative monitoring solutions. The kube-prometheus-stack, maintained by the prometheus-community organization, bundles Prometheus, Grafana, Alertmanager, Node Exporter, kube-state-metrics, and the Prometheus Operator into a single Helm install. It uses Prometheus Operator custom resources like ServiceMonitors and PrometheusRules to provide declarative scrape configuration.

The kube-prometheus-stack is a common choice for teams building a self-hosted observability stack independent of Grafana Cloud. The Grafana chart, by contrast, is built primarily for teams sending telemetry to Grafana Cloud or another Grafana stack, with built-in support for profiles and cost metrics.

Both charts serve related but distinct use cases. The choice between them depends on whether teams prefer a self-contained monitoring stack or a cloud-managed solution with streamlined integration.

Migration Considerations

Grafana Labs has provided a migration tool that converts version 3 values files to version 4-compatible output. The tool handles the structural conversions, including converting lists to maps and splitting overloaded features into separate modules.
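The core of the list-to-map conversion can be sketched in a few lines of Python. This is not Grafana's migration tool: it assumes each v3 list entry carries a unique `name` field that becomes the map key, and the example URLs are placeholders.

```python
from typing import Any, Dict, List


def destinations_to_map(destinations: List[Dict[str, Any]]) -> Dict[str, Dict[str, Any]]:
    """Turn a v3-style destination list into a map keyed by destination name.

    Assumes every entry has a unique "name" field; the name moves into the
    map key and is removed from the entry body."""
    converted: Dict[str, Dict[str, Any]] = {}
    for index, entry in enumerate(destinations):
        name = entry.get("name")
        if not name:
            raise ValueError(f"destination at index {index} has no name")
        if name in converted:
            raise ValueError(f"duplicate destination name {name!r}")
        converted[name] = {k: v for k, v in entry.items() if k != "name"}
    return converted


v3_destinations = [
    {"name": "metrics", "type": "prometheus", "url": "https://prometheus.example.com/api/v1/write"},
    {"name": "logs", "type": "loki", "url": "https://loki.example.com/loki/api/v1/push"},
]
v4_destinations = destinations_to_map(v3_destinations)
print(sorted(v4_destinations))  # ['logs', 'metrics']
```

Rejecting missing or duplicate names matters because a map cannot hold two entries under one key; silently letting the second overwrite the first would reintroduce exactly the kind of hidden misconfiguration the new format is meant to remove.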

All chart examples in the Grafana documentation and repository have been updated to reflect the version 4 format. Teams considering the upgrade should review the migration documentation, particularly the changes to destination configuration and collector management.

The migration is more than a syntax update; it is an opportunity to reevaluate monitoring architecture and adopt more explicit, manageable patterns. As Kubesimplify noted on LinkedIn, "nearly every fragile pattern from v3 has been replaced," with the shift from lists to maps and the opt-in approach to pod log labels providing the most immediate practical benefits.

Future Implications

The version 4 release reflects a maturation of Kubernetes monitoring practices as deployments grow in complexity. The emphasis on explicit configuration, resource efficiency, and multi-cluster compatibility addresses real-world challenges that emerged as organizations scaled their observability infrastructure.

These improvements could lay the groundwork for future enhancements in areas such as automated cost optimization, stronger security for telemetry data, and deeper integration with cloud-native services. The modular architecture should also make it easier for Grafana Labs to add new monitoring capabilities without disrupting existing configurations.

For teams evaluating monitoring solutions, the version 4 chart offers a compelling option for those invested in the Grafana ecosystem, particularly as they scale their Kubernetes deployments. The combination of improved performance, explicit configuration, and streamlined multi-cluster management addresses many common pain points in production monitoring environments.

For more information on the Kubernetes Monitoring Helm Chart v4, refer to the official documentation and GitHub repository.
