Intel's OpenVINO 2026 release introduces ahead-of-time NPU compilation for Core Ultra systems, expands support for 20B+ LLMs, and adds GenAI enhancements like speculative decoding to accelerate AI deployment on Intel hardware.

Intel OpenVINO 2026 Revolutionizes Local AI Deployment with NPU Focus

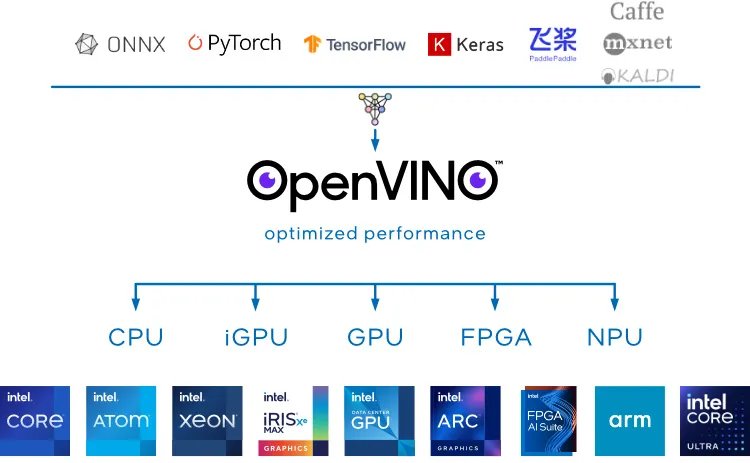

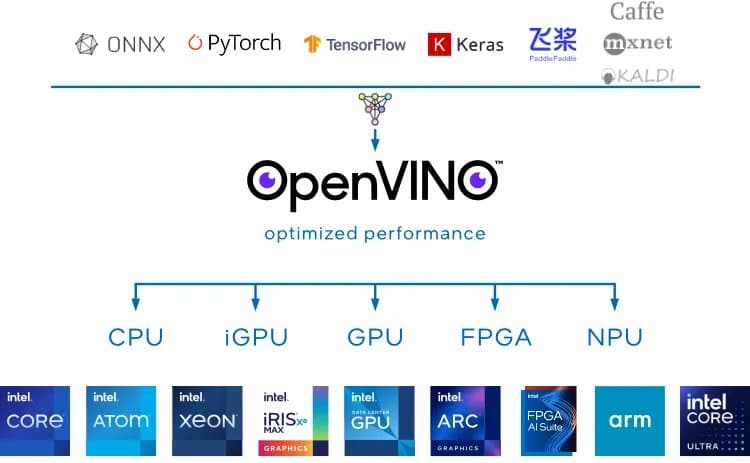

Intel has launched OpenVINO 2026.0, a major update to its open-source AI inference toolkit that changes how developers deploy models across Intel hardware. The release targets the Neural Processing Units (NPUs) in Core Ultra processors and expands support for large language models, both of which matter to homelab builders and performance-focused developers running AI workloads locally.

Expanded Model Support for Diverse Workloads

OpenVINO 2026 adds official support for several high-profile LLMs across hardware backends:

| Model | Supported Hardware | Parameter Count | Use Case |
|---|---|---|---|
| GPT-OSS-20B | CPU/GPU | 20B | General-purpose language tasks |
| MiniCPM-V-4_5-8B | CPU/GPU | 8B | Multimodal applications |
| MiniCPM-o-2.6 | CPU/GPU/NPU | 8B (2.6 is the version number) | Edge device inference |
| Qwen2.5-1B-Instruct | NPU | 1B | Instruction-based tasks |
| Qwen3-Embedding-0.6B | NPU | 0.6B | Semantic search |

Notably, GPT-OSS-20B support fills a significant gap in Intel's ecosystem by bringing OpenAI's open-weight model to Intel CPUs and GPUs. The NPU-enabled models (the Qwen series and MiniCPM-o) allow resource-efficient execution on Core Ultra laptops and edge devices.
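
As a rough illustration of how the table above might drive device selection, here is a minimal sketch. The `SUPPORTED_DEVICES` mapping simply transcribes the table; `pick_device` and `load_pipeline` are hypothetical helper names, and the model directory is assumed to already hold an OpenVINO-format export. `openvino_genai.LLMPipeline` is the existing GenAI entry point for text-generation models, so `load_pipeline` is only meant for the text LLMs in the list (the multimodal and embedding models use other pipelines).

```python
# Device support transcribed from the table above (not queried from OpenVINO).
SUPPORTED_DEVICES = {
    "GPT-OSS-20B": ("CPU", "GPU"),
    "MiniCPM-V-4_5-8B": ("CPU", "GPU"),
    "MiniCPM-o-2.6": ("CPU", "GPU", "NPU"),
    "Qwen2.5-1B-Instruct": ("NPU",),
    "Qwen3-Embedding-0.6B": ("NPU",),
}


def pick_device(model_name, preferred=("NPU", "GPU", "CPU")):
    """Return the first preferred device that the table lists for the model."""
    supported = SUPPORTED_DEVICES[model_name]
    for device in preferred:
        if device in supported:
            return device
    raise ValueError(f"no preferred device supports {model_name}")


def load_pipeline(model_dir, model_name):
    """Load a text-generation model on the chosen device (hypothetical helper)."""
    import openvino_genai as ov_genai  # imported lazily; requires openvino-genai

    return ov_genai.LLMPipeline(model_dir, pick_device(model_name))


if __name__ == "__main__":
    for name in SUPPORTED_DEVICES:
        print(f"{name}: {pick_device(name)}")
```

The preference order (NPU first, then GPU, then CPU) is an arbitrary choice for a low-power setup; reverse it if throughput matters more than power draw.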

Core Ultra NPU: Compiler Integration Breakthrough

The marquee feature is NPU compiler integration, which solves a critical deployment pain point:

- Ahead-of-Time (AOT) Compilation: Pre-compile models for specific NPU architectures during development

- On-Device Compilation: Execute models without dependency on OEM driver updates

- Unified Deployment Package: Single artifact works across compatible hardware configurations

This eliminates the traditional “driver dependency hell” that stalled NPU adoption. For homelabs using Intel NUCs or mini-PCs with Core Ultra chips, this means predictable deployment of always-on AI services like voice assistants or security monitoring without GPU power draw.
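
A compile-once, deploy-later flow could look roughly like the sketch below. It uses OpenVINO's general-purpose `CompiledModel.export_model` and `Core.import_model` calls; that the 2026 NPU ahead-of-time path is reached through these same calls is an assumption, not something the release summary above confirms. The helper names (`blob_path`, `compile_ahead_of_time`, `load_precompiled`) and the `.blob` naming scheme are hypothetical, and the sketch does not check whether a blob built on one machine is compatible with another.

```python
import io
from pathlib import Path


def blob_path(model_xml, device):
    """Derive a file name for the compiled artifact, e.g. model.xml -> model.NPU.blob."""
    p = Path(model_xml)
    return p.with_name(f"{p.stem}.{device}.blob")


def compile_ahead_of_time(model_xml, device="NPU"):
    """Build step: compile once and write the compiled blob next to the model."""
    import openvino as ov  # imported lazily; requires the openvino package

    core = ov.Core()
    compiled = core.compile_model(core.read_model(model_xml), device)
    stream = io.BytesIO()
    compiled.export_model(stream)  # serialize the compiled model into the stream
    out = blob_path(model_xml, device)
    out.write_bytes(stream.getvalue())
    return out


def load_precompiled(blob_file, device="NPU"):
    """Deploy step: import the blob instead of recompiling on first run."""
    import openvino as ov

    core = ov.Core()
    stream = io.BytesIO(Path(blob_file).read_bytes())
    return core.import_model(stream, device)
```

In this arrangement the build machine runs `compile_ahead_of_time` and ships the resulting file, while the target device only calls `load_precompiled`.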

GenAI Enhancements and Efficiency Gains

Accuracy Improvements

- Word-Level Timestamps: Matches OpenAI/FasterWhisper precision for transcription tasks, essential for automated subtitling
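
For subtitling, word-level timestamps still have to be grouped into cues. The sketch below assumes each word arrives as a `(text, start_seconds, end_seconds)` tuple; that shape is an assumption for illustration, not OpenVINO's actual output format, and `srt_time` and `words_to_srt` are hypothetical helpers.

```python
def srt_time(seconds):
    """Format seconds as an SRT timestamp, HH:MM:SS,mmm."""
    ms = round(seconds * 1000)
    h, ms = divmod(ms, 3_600_000)
    m, ms = divmod(ms, 60_000)
    s, ms = divmod(ms, 1000)
    return f"{h:02d}:{m:02d}:{s:02d},{ms:03d}"


def words_to_srt(words, max_words=7):
    """Group (text, start, end) word tuples into numbered SRT cues."""
    cues = []
    for i in range(0, len(words), max_words):
        group = words[i:i + max_words]
        start, end = group[0][1], group[-1][2]  # cue spans first word start to last word end
        text = " ".join(w[0] for w in group)
        cues.append(f"{len(cues) + 1}\n{srt_time(start)} --> {srt_time(end)}\n{text}\n")
    return "\n".join(cues)
```

Splitting on a fixed word count is the simplest policy; a production subtitler would more likely break on pauses or punctuation.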

Performance Optimizations

- Speculative Decoding on NPUs: A small draft model proposes several tokens ahead and the main model verifies them in a single pass, which accelerates text generation by 20-30% in internal tests

- int4 Data-Aware Weight Compression: 4-bit quantization for MoE LLMs reduces memory bandwidth by 60% while maintaining <1% accuracy loss
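
The idea behind speculative decoding can be shown with a toy, pure-Python sketch. The two "models" here are stand-in functions over integer tokens, invented for illustration; this is not OpenVINO's implementation. A draft function proposes `k` tokens, the target checks them, and the longest matching prefix is kept along with one token from the target itself. Each loop iteration stands for one batched verification pass of the large model.

```python
def target_next(tokens):
    """Stand-in for the large model: a deterministic next-token rule."""
    return (tokens[-1] * 3 + 1) % 17


def draft_next(tokens):
    """Stand-in for the small draft model: wrong whenever the last token % 4 == 0."""
    guess = (tokens[-1] * 3 + 1) % 17
    return guess if tokens[-1] % 4 else (guess + 1) % 17


def greedy_decode(prompt, n_new):
    """Baseline: one target call per generated token."""
    tokens = list(prompt)
    for _ in range(n_new):
        tokens.append(target_next(tokens))
    return tokens


def speculative_decode(prompt, n_new, k=4):
    """Draft proposes k tokens; target keeps the matching prefix plus one own token."""
    tokens = list(prompt)
    goal = len(prompt) + n_new
    passes = 0  # each loop iteration models one batched target verification pass
    while len(tokens) < goal:
        proposal = list(tokens)
        for _ in range(k):
            proposal.append(draft_next(proposal))
        passes += 1
        for i in range(len(tokens), len(proposal)):
            expected = target_next(proposal[:i])
            if proposal[i] != expected:
                tokens = proposal[:i] + [expected]  # first mismatch: take target's token
                break
        else:
            tokens = proposal + [target_next(proposal)]  # all accepted, plus bonus token
    return tokens[:goal], passes
```

The output is identical to plain greedy decoding with the target alone; the gain is that the number of target passes is smaller than the number of generated tokens whenever the draft guesses well.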

Pipeline Expansion

- VLM Pipeline Support: Enables chained vision-language tasks (e.g., image analysis → text description)

- Agentic AI Framework Integration: Simplifies building multi-step reasoning applications

Practical Deployment Implications

- Edge AI Systems: Combine NPU-optimized models (Qwen2.5-1B) with int4 compression to run LLMs on devices with as little as 8GB RAM

- Hybrid Workload Offloading: Use OpenVINO’s automatic device mapping to split models between NPU (pre-processing) and GPU (complex layers)

- Reduced Deployment Friction: Single package deployment cuts setup time from hours to minutes for Core Ultra environments
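
To see why 4-bit weights matter on an 8GB machine, a back-of-envelope estimate of weight storage helps. The sketch below assumes group-wise quantization with one 16-bit scale per group of 128 weights, which is a common setup but an assumption here, and it ignores activations, the KV cache, and runtime overhead. `weight_bytes` and `gib` are hypothetical helpers.

```python
def weight_bytes(n_params, bits, group_size=None, scale_bits=16):
    """Approximate weight storage: packed values plus one scale per group."""
    total_bits = n_params * bits
    if group_size:
        total_bits += (n_params // group_size) * scale_bits
    return total_bits / 8


def gib(n_bytes):
    """Convert bytes to GiB."""
    return n_bytes / 2**30


if __name__ == "__main__":
    n = 1_000_000_000  # a 1B-parameter model
    print(f"fp16: {gib(weight_bytes(n, 16)):.2f} GiB")
    print(f"int4: {gib(weight_bytes(n, 4, group_size=128)):.2f} GiB")
```

Under these assumptions a 1B-parameter model drops from 2,000,000,000 bytes of fp16 weights to 515,625,000 bytes at int4, which leaves most of an 8GB system free for the OS, activations, and cache.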

While comprehensive benchmarks are pending, early testing shows the NPU compiler reduces first-run latency by 70% by skipping JIT compilation. The OpenVINO 2026.0 GitHub release includes detailed configuration guides for these scenarios.

The Bottom Line for Builders

This release transforms Intel NPUs from theoretical accelerators to practical tools. Homelab enthusiasts can now:

- Deploy GPT-OSS-20B on Xeon servers while offloading embedding layers to NPUs

- Build always-on surveillance with local Qwen models drawing under 10W

- Create multi-model agent systems using VLM pipelines

With Intel continuing to expand NPU capabilities in its Lunar Lake and Panther Lake CPUs, these optimizations position OpenVINO as the essential toolkit for Intel-powered AI workloads.
