LinkedIn has introduced a comprehensive unified integrations platform that standardizes hiring data across disparate systems, enabling more reliable AI-driven talent applications while significantly reducing integration complexity and partner onboarding time.

LinkedIn has added a unified integrations platform to its talent acquisition technology stack to consolidate and standardize hiring data pipelines. The multi-year initiative addresses inconsistent data schemas across applicant tracking systems, career sites, and job boards, and provides the foundation for AI-driven talent applications.

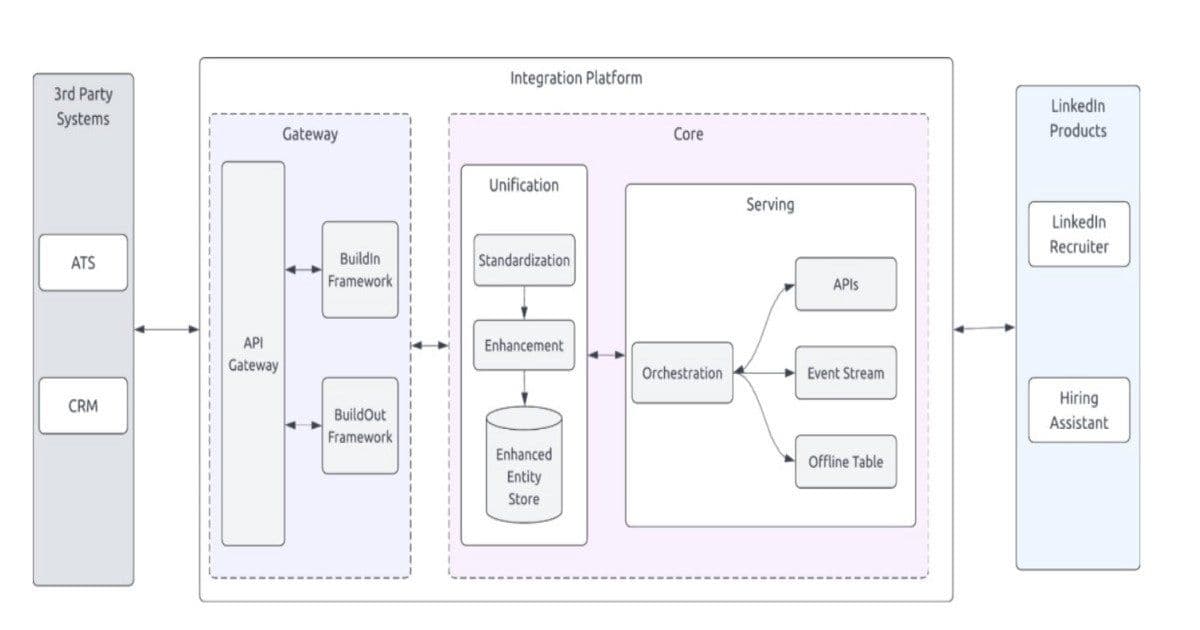

Architectural Overview

The new platform moves LinkedIn's data integration from fragmented, point-to-point connections to a centralized, three-layer architecture that prioritizes both standardization and flexibility. As Gaurav Sisodiya, Engineering Lead at LinkedIn, noted in a recent post, "We designed for coexistence, not replacement," signaling an approach that keeps existing systems in place while adding a unifying framework.

The architecture consists of three distinct layers:

Standardization Layer: This component normalizes incoming data from heterogeneous sources into a consistent schema, abstracting away the differences across various applicant tracking systems and job platforms. This layer ensures that regardless of the source system, data follows a predictable structure.

Orchestration Layer: Managing complex workflows for ingestion, validation, and reconciliation, this layer coordinates data movement and enforces quality checks across the entire pipeline. It serves as the control plane for the entire data integration process.

Enhancement Layer: This layer processes normalized data to fill gaps, deduplicate records, and augment signals before making the data available to downstream systems. It goes beyond simple transformation by enriching records with additional context.
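The division of responsibilities across the three layers can be pictured as a small pipeline. The following is a minimal Python sketch under stated assumptions, not LinkedIn's implementation: the `Candidate` fields, the source names `ats_a` and `ats_b`, and every function name are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Candidate:
    """Hypothetical canonical record shared by all layers."""
    source: str
    external_id: str
    email: str
    full_name: Optional[str] = None


def standardize(source: str, raw: dict) -> Candidate:
    """Standardization: map a source-specific payload to the canonical shape."""
    if source == "ats_a":  # hypothetical ATS with flat fields
        return Candidate(source, str(raw["id"]),
                         raw["email"].strip().lower(), raw.get("name"))
    if source == "ats_b":  # hypothetical ATS with nested contact data
        contact = raw["contact"]
        parts = (contact.get("first"), contact.get("last"))
        name = " ".join(p for p in parts if p) or None
        return Candidate(source, str(raw["candidateId"]),
                         contact["mail"].strip().lower(), name)
    raise ValueError(f"unknown source: {source}")


def validate(record: Candidate) -> bool:
    """Orchestration-style quality check: reject records without a plausible email."""
    return "@" in record.email


def enhance(records: list[Candidate]) -> list[Candidate]:
    """Enhancement: deduplicate by email, filling a missing name from a duplicate."""
    merged: dict[str, Candidate] = {}
    for rec in records:
        kept = merged.get(rec.email)
        if kept is None:
            merged[rec.email] = rec
        elif kept.full_name is None and rec.full_name is not None:
            merged[rec.email] = Candidate(kept.source, kept.external_id,
                                          kept.email, rec.full_name)
    return list(merged.values())


def run_pipeline(batch: list[tuple[str, dict]]) -> list[Candidate]:
    """Run the three stages in order: standardize, validate, enhance."""
    standardized = [standardize(src, raw) for src, raw in batch]
    return enhance([r for r in standardized if validate(r)])
```

In this sketch, downstream code only ever sees `Candidate` objects, so adding a new source means adding one branch to `standardize` rather than touching the validation or enhancement logic.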

Under the hood, the implementation leverages several proven technologies: Temporal-orchestrated workflows for complex process management, Kafka streams for real-time data processing, Espresso for record persistence, and a multi-mode orchestration approach that supports both batch and real-time processing. The system also features declarative schema and ID mapping capabilities, enabling replayable, bidirectional sync and safe evolution of the data model over time.
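To illustrate what "declarative schema and ID mapping" might look like in practice, here is a minimal Python sketch. It assumes a mapping expressed as plain data (canonical field to dotted source path) and a simple two-way ID table; the mapping format, field names, and the `IdMap` class are hypothetical and are not taken from LinkedIn's platform.

```python
from typing import Any, Optional

# Hypothetical declarative mapping: canonical field -> dotted path in the partner payload.
ATS_B_MAPPING = {
    "candidate_id": "candidateId",
    "email": "contact.mail",
    "job_id": "requisition.id",
}


def get_path(payload: dict, path: str) -> Any:
    """Follow a dotted path through nested dicts; return None if any step is missing."""
    current: Any = payload
    for key in path.split("."):
        if not isinstance(current, dict) or key not in current:
            return None
        current = current[key]
    return current


def apply_mapping(mapping: dict[str, str], payload: dict) -> dict:
    """Build a canonical record purely from the mapping, with no per-partner code."""
    return {field: get_path(payload, path) for field, path in mapping.items()}


class IdMap:
    """Hypothetical two-way lookup between partner IDs and internal IDs."""

    def __init__(self) -> None:
        self._to_internal: dict[tuple[str, str], str] = {}
        self._to_external: dict[tuple[str, str], str] = {}

    def link(self, source: str, external_id: str, internal_id: str) -> None:
        """Record the association in both directions."""
        self._to_internal[(source, external_id)] = internal_id
        self._to_external[(source, internal_id)] = external_id

    def internal(self, source: str, external_id: str) -> Optional[str]:
        """Inbound sync: partner ID to internal ID."""
        return self._to_internal.get((source, external_id))

    def external(self, source: str, internal_id: str) -> Optional[str]:
        """Outbound sync: internal ID back to the partner's ID."""
        return self._to_external.get((source, internal_id))
```

Because `apply_mapping` is a pure function of the mapping and the payload, replaying the same stored payloads yields the same canonical records, and changing a mapping entry changes the output without code changes. The two-way `IdMap` is what would let a sync run in either direction.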

Implementation Benefits and Use Cases

The unified platform delivers measurable improvements in operational efficiency and data quality. LinkedIn reports a 72% reduction in partner onboarding time, significantly accelerating the integration of new data sources into their talent ecosystem. This acceleration comes from eliminating the need for custom transformations for each new partner, replacing previously siloed pipelines with a shared infrastructure.

The standardized data foundation has enabled LinkedIn engineers to build AI-driven applications on top of it, most notably the Hiring Assistant, which operates inside recruiter workflows.

"Standardized hiring data allows AI systems to interpret signals across candidate profiles, job requirements, and recruiter interactions," explains Ritvik Kar, Product at LinkedIn. "The system aggregates signals and translates them into recommendations, automation, and decision support within recruiter workflows."

Beyond the Hiring Assistant, the unified platform supports a range of downstream applications:

- Advanced analytics for talent acquisition trends

- Automated matching algorithms between candidates and positions

- Recruiter productivity tools that leverage standardized data

- Compliance reporting with consistent data definitions

- Integration with LinkedIn's broader professional graph

Technical Trade-offs and Considerations

Implementing such a comprehensive data unification strategy involves several architectural trade-offs that LinkedIn's engineering team has carefully navigated:

Complexity vs. Centralization

While a unified platform reduces overall complexity for consumers of the data, it introduces significant complexity in the implementation itself. The orchestration layer must handle diverse data formats, quality levels, and update frequencies from multiple sources. LinkedIn addressed this by adopting a modular architecture where each layer has well-defined responsibilities and interfaces.

Real-time vs. Batch Processing

The platform supports multiple processing modes to accommodate different requirements. Some use cases demand real-time data synchronization, while others can tolerate batch processing. This multi-mode approach adds architectural complexity but provides the flexibility needed for diverse talent applications.
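One common way to limit the cost of supporting both modes is to keep the business logic in a single handler and vary only the entry point. The sketch below shows that pattern in plain Python under stated assumptions: `normalize_status`, `run_batch`, and `run_stream` are hypothetical names, and an ordinary iterable stands in for a real event stream such as a Kafka topic.

```python
from typing import Callable, Iterable, Iterator

Record = dict
Handler = Callable[[Record], Record]


def normalize_status(record: Record) -> Record:
    """Hypothetical shared business logic: canonicalize an application status."""
    return {**record, "status": record["status"].strip().upper()}


def run_batch(records: Iterable[Record], handler: Handler) -> list[Record]:
    """Batch mode: process a complete snapshot and return all results at once."""
    return [handler(r) for r in records]


def run_stream(events: Iterable[Record], handler: Handler) -> Iterator[Record]:
    """Streaming mode: yield each result as soon as its event is consumed."""
    for event in events:
        yield handler(event)
```

Since both entry points call the same handler, a record processed in a nightly batch and the same record processed as a live event end up in the same canonical form.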

Schema Evolution and Backward Compatibility

As business requirements evolve, the data schema must adapt while maintaining compatibility with existing consumers. LinkedIn's declarative schema mapping approach allows for gradual evolution of the data model without requiring simultaneous updates across all consuming systems.
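A minimal sketch of how gradual schema evolution can work, assuming each record carries a version number and each schema change ships with a small upgrade step. The field names, version numbers, and functions below are hypothetical and only illustrate the general technique, not LinkedIn's mapping format.

```python
# Hypothetical upgrade steps: each takes a record at version N and returns version N + 1.

def v1_to_v2(record: dict) -> dict:
    # v2 splits the single "name" field into first and last names.
    first, _, last = record["name"].partition(" ")
    rest = {k: v for k, v in record.items() if k != "name"}
    return {**rest, "first_name": first, "last_name": last, "schema_version": 2}


def v2_to_v3(record: dict) -> dict:
    # v3 adds an optional field with a default, so older producers stay valid.
    return {**record,
            "work_authorization": record.get("work_authorization"),
            "schema_version": 3}


UPGRADES = {1: v1_to_v2, 2: v2_to_v3}
LATEST_VERSION = 3


def upgrade(record: dict, target: int = LATEST_VERSION) -> dict:
    """Apply upgrade steps one at a time until the record reaches the target version."""
    current = dict(record)
    while current["schema_version"] < target:
        current = UPGRADES[current["schema_version"]](current)
    return current
```

A consumer that has not yet adopted version 3 can call `upgrade(record, target=2)` and keep working, while newer consumers read the latest shape. This is one way to avoid upgrading every consuming system at the same moment.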

Data Quality vs. Data Coverage

Expanding data sources inevitably brings varying levels of data quality. The enhancement layer includes sophisticated data cleansing and enrichment processes, but these processes add latency and computational overhead. LinkedIn struck a balance by implementing configurable quality thresholds that can be adjusted based on specific use case requirements.
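As an illustration of configurable quality thresholds, the following Python sketch scores each record by field completeness and compares the score against a per-use-case threshold. The required fields, the use-case names, and the threshold values are all hypothetical; the article does not describe how LinkedIn actually measures quality.

```python
# Hypothetical fields that a "complete" record is expected to have.
REQUIRED_FIELDS = ("email", "full_name", "job_id", "resume_text")

# Hypothetical thresholds: stricter for AI matching, looser for aggregate analytics.
THRESHOLDS = {"ai_matching": 0.75, "analytics": 0.5}


def completeness(record: dict) -> float:
    """Fraction of required fields that are present and non-empty."""
    filled = sum(1 for field in REQUIRED_FIELDS if record.get(field))
    return filled / len(REQUIRED_FIELDS)


def accept(record: dict, use_case: str) -> bool:
    """Admit a record only if it meets the threshold configured for the use case."""
    return completeness(record) >= THRESHOLDS[use_case]
```

With these values, a record carrying only an email and a job ID would be usable for analytics but held back from AI matching until enrichment fills in more fields, which is the coverage-versus-quality trade-off in miniature.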

Broader Implications for the Industry

LinkedIn's approach to unifying hiring data pipelines offers valuable insights for organizations grappling with similar integration challenges across various domains:

Incremental Migration Strategy: Rather than requiring a "big bang" replacement of existing systems, the coexistence approach allows for gradual migration, reducing risk and disruption.

Data as a Product: Treating standardized, enriched hiring data as a product with clear service level agreements enables more reliable downstream applications.

Event-Driven Architecture: The use of Kafka streams and Temporal workflows demonstrates how event-driven patterns can effectively manage complex data integration scenarios.

Observability as a First-Class Concern: The emphasis on system reliability and observability reflects the understanding that complex data platforms cannot be trusted without comprehensive monitoring and alerting.

As Ritvik Kar emphasizes, "This is key: without a highly reliable, observable, stable system that can deliver on high data availability and consistency across read and write, there's no way for our customers to trust our platform and do their work seamlessly."

The success of LinkedIn's unified hiring data platform demonstrates that thoughtful architecture can turn data integration challenges into strategic advantages, enabling more sophisticated AI applications while improving operational efficiency. As organizations continue to invest in AI-driven talent systems, this approach likely represents a blueprint for similar initiatives across the industry.

For more technical details on LinkedIn's data integration approach, see the LinkedIn engineering blog, which often features deep dives into the company's architectural decisions. The official documentation on data integration patterns also provides additional context on similar approaches used across the industry.
