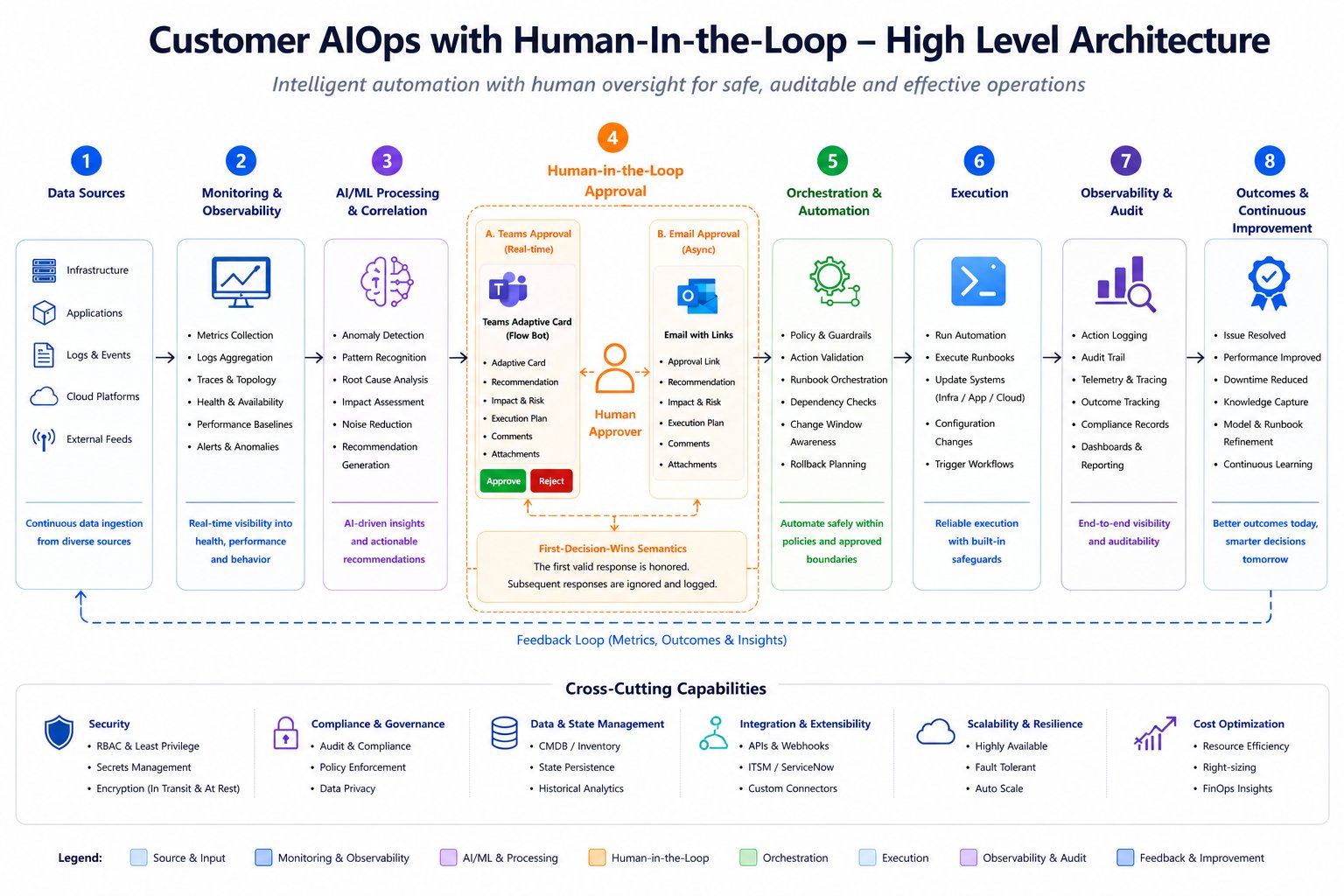

Microsoft’s new reference implementation for custom AIOps agents gives Azure customers a reusable framework to turn observability data into governed, human-approved operational actions, prioritizing control and auditability over full automation.

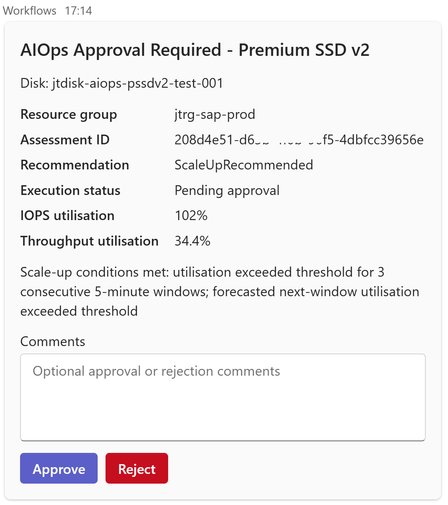

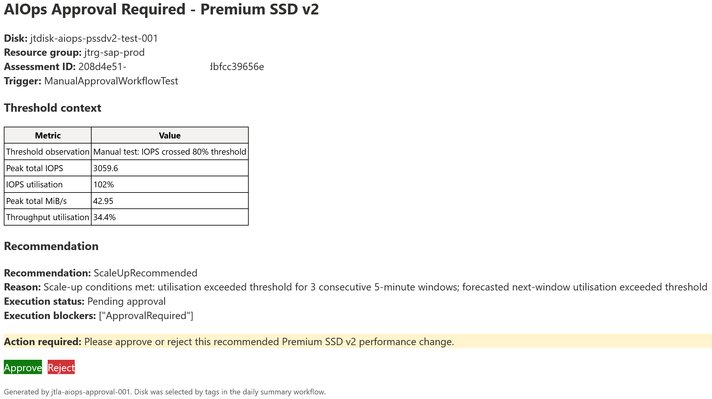

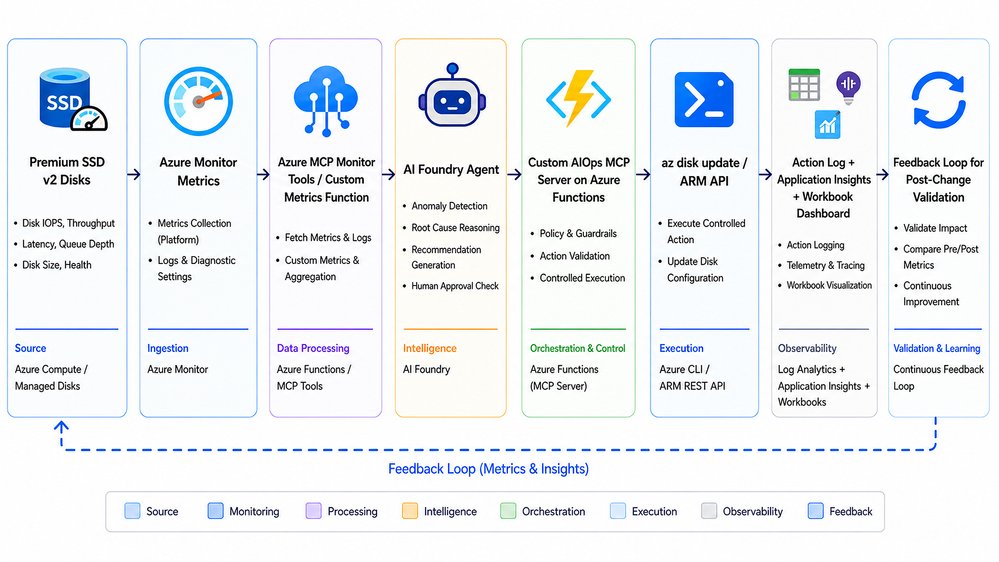

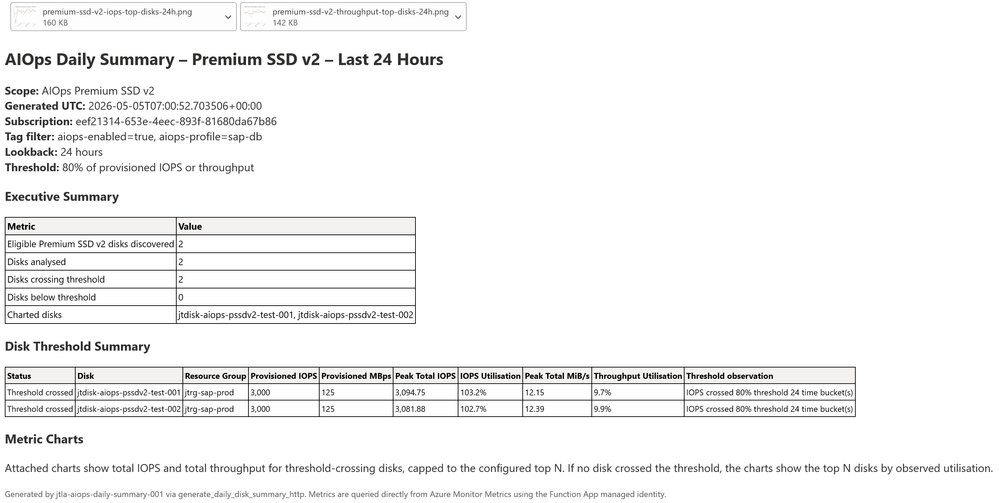

Microsoft recently published a detailed reference implementation for building customer-specific AI Operations (AIOps) agents on Azure, designed to bridge the gap between passive observability and governed operational action. The guide focuses on a repeatable pattern that combines deterministic policy logic, AI-generated explainability, human-in-the-loop approval, and complete audit trails, using Premium SSD v2 disk performance tuning as an initial low-risk use case.

What Changed

The core of the announcement is a reusable technical pattern, not a prebuilt managed service. It provides a step-by-step framework for platform teams to build custom AIOps agents tailored to their organization’s specific operational constraints, approval processes, and risk tolerance.

Traditional monitoring dashboards surface metrics but leave the burden of action on engineering teams. This pattern automates the tedious parts of operational workflows while preserving human control. The reference implementation uses Premium SSD v2, a managed disk type with configurable performance, as its first scenario: because IOPS, throughput, and size can each be adjusted independently, outcomes are measurable and risk stays contained.

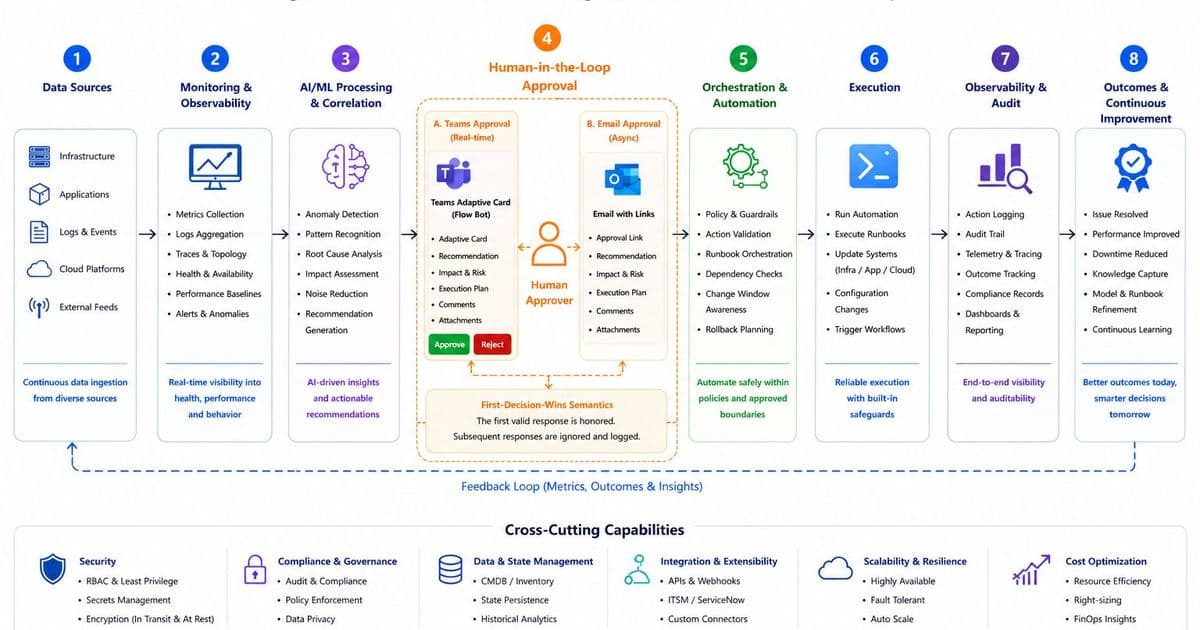

The architecture splits decision-making into two distinct layers. A deterministic policy engine handles all eligibility checks, metric collection, threshold calculations, and recommendation logic. This layer uses Azure Resource Graph to discover tagged resources, Azure Monitor Metrics to pull performance data, and customer-defined rules to generate scale-up, scale-down, or no-change recommendations.
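The deterministic layer can be pictured as a pure function from metrics and policy to a recommendation. The following is a minimal sketch, not the guide's own code: the `DiskSample` and `Policy` types, the threshold values, and the IOPS bounds are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DiskSample:
    name: str
    provisioned_iops: int
    peak_iops_used: float  # e.g. a high percentile over the lookback window


@dataclass(frozen=True)
class Policy:
    # All values are illustrative placeholders, not recommended settings.
    scale_up_utilization: float = 0.85
    scale_down_utilization: float = 0.30
    headroom: float = 1.25  # target = observed peak * headroom
    min_iops: int = 3000
    max_iops: int = 80000


def recommend(sample: DiskSample, policy: Policy) -> dict:
    """Return a scale-up, scale-down, or no-change recommendation."""
    if sample.provisioned_iops <= 0:
        raise ValueError("provisioned_iops must be positive")

    utilization = sample.peak_iops_used / sample.provisioned_iops
    # Size the target from the observed peak, then clamp to the policy bounds.
    target = round(sample.peak_iops_used * policy.headroom)
    target = max(policy.min_iops, min(policy.max_iops, target))

    action = "no-change"
    if utilization >= policy.scale_up_utilization and target > sample.provisioned_iops:
        action = "scale-up"
    elif utilization <= policy.scale_down_utilization and target < sample.provisioned_iops:
        action = "scale-down"
    if action == "no-change":
        target = sample.provisioned_iops  # nothing to change

    return {
        "disk": sample.name,
        "action": action,
        "current_iops": sample.provisioned_iops,
        "target_iops": target,
        "utilization": round(utilization, 3),
    }
```

Because the function has no side effects and no model call, the same inputs always yield the same recommendation, which is what makes this layer auditable.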

The second layer uses an Azure OpenAI deployment (GPT-4o or similar) to convert the deterministic output into plain-language explanations, risk assessments, and approval requests. The AI model does not execute changes or override policy, which maintains auditability and trust.
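One way to keep the model in an explain-only role is to hand it the finished policy output and instruct it not to alter the values. The sketch below only builds a chat-style message list; the prompt wording and the `build_explanation_messages` helper are assumptions, and the actual call to an Azure OpenAI deployment is deliberately left out.

```python
import json

# Hypothetical system prompt; the wording is an assumption, not the guide's text.
SYSTEM_PROMPT = (
    "You explain disk tuning recommendations to a human approver. "
    "Describe the evidence and the risk in plain language. "
    "Do not alter the action or the target values; they were set by policy."
)


def build_explanation_messages(recommendation: dict) -> list:
    """Wrap the deterministic output in role/content messages for the model."""
    payload = json.dumps(recommendation, sort_keys=True)
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": "Policy engine output (JSON):\n" + payload},
    ]
```

The model's reply would be attached to the approval request as text only; the action and target that get executed still come from the policy output, not from the model's response.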

The workflow follows a linear path: observe resource metrics, assess against policy, generate recommendations, route actionable items to human approvers, execute approved changes within defined guardrails, validate outcomes, and feed results back into the policy engine. Key design choices include tag-based resource selection (using tags like aiops-enabled and aiops-approval-required) to avoid scanning all subscription resources by default, Azure Managed Identities for secure authentication, and a first-decision-wins approval model across Teams Adaptive Cards and email to prevent duplicate executions.
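The first-decision-wins rule amounts to an atomic "insert if absent" on the approval request. Here is a minimal in-memory sketch; the `ApprovalRegistry` class is hypothetical, and a real deployment would presumably use a conditional write against a durable store rather than a process-local dictionary.

```python
import threading


class ApprovalRegistry:
    """Accept only the first response per approval request (illustrative only)."""

    def __init__(self):
        self._lock = threading.Lock()
        self._decisions = {}

    def record(self, request_id: str, channel: str, approver: str, decision: str) -> bool:
        """Return True if this response became the decision, False if it came too late."""
        with self._lock:
            if request_id in self._decisions:
                return False  # a decision already exists; ignore the duplicate
            self._decisions[request_id] = {
                "channel": channel,
                "approver": approver,
                "decision": decision,
            }
            return True

    def get(self, request_id: str):
        with self._lock:
            return self._decisions.get(request_id)
```

If an approver clicks the Teams card and a second approver later replies by email, only the first call returns `True`, so the execution step runs at most once per request.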

The guide includes 13 detailed implementation steps, from tagging disks to post-change validation, with sample code snippets for Azure Resource Graph queries, Python recommendation logic, and PowerShell commands for testing. A dry-run mode is enabled by default, allowing teams to validate the entire workflow without making actual resource changes.
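A dry-run default can be expressed as a guard in front of the only function that touches a resource. The sketch below assumes a hypothetical `execute_fn` callable that would perform the real disk update; nothing here calls an Azure API.

```python
def apply_change(recommendation: dict, execute_fn, dry_run: bool = True) -> dict:
    """Apply a recommendation, or only report what would happen when dry_run is set."""
    if recommendation["action"] == "no-change":
        return {"status": "skipped", "disk": recommendation["disk"]}

    planned = {
        "disk": recommendation["disk"],
        "target_iops": recommendation["target_iops"],
    }
    if dry_run:
        # Default path: record the intent, change nothing.
        return {"status": "dry-run", "would_apply": planned}

    execute_fn(planned["disk"], planned["target_iops"])  # hypothetical executor
    return {"status": "applied", "applied": planned}
```

Making `dry_run=True` the default means a caller has to opt in explicitly before any resource is modified.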

Provider Comparison

Most cloud providers offer AIOps-adjacent tools, but Microsoft’s approach differs in its emphasis on customer-specific control and transparency.

AWS provides DevOps Guru, a managed service that uses machine learning to detect operational anomalies and recommend fixes for AWS resources. It requires minimal setup but offers limited customization for organizational approval workflows or custom policy guardrails. The recommendation logic is largely opaque, with no option to split deterministic and AI-driven decision layers. For enterprises with strict compliance requirements, this lack of transparency can be a barrier.

Google Cloud's observability tooling includes anomaly detection and root cause analysis features but, as with AWS, these are managed services with fixed workflows. Customization is limited to basic alert routing, with no native support for customer-defined deterministic policy engines paired with AI explainability layers.

Microsoft’s reference implementation takes the opposite approach. It does not provide a turnkey service, instead giving teams a template to build their own agent using Azure-native components: Azure Logic Apps for workflow orchestration, Azure Functions for metric processing, Azure Resource Graph for resource discovery, and Azure OpenAI for explanation generation. This requires more upfront engineering effort but allows full alignment with internal policies, approval hierarchies, and audit requirements.

For multi-cloud customers, the pattern has partial portability. The deterministic policy logic and recommendation rules can be reused across clouds, but the execution layer must be adapted to each provider’s APIs. For example, AWS-based disk performance tuning would require replacing Azure Monitor Metrics with CloudWatch, and Logic Apps with AWS Step Functions. The core principle of separating deterministic policy from AI explanation remains applicable regardless of cloud provider.

Pricing models also differ. Azure's component-based model charges for each service used: Logic Apps per action execution on the Consumption plan, Azure Functions per execution and GB-second of compute, and Azure OpenAI per token processed. AWS DevOps Guru charges by the resource-hours it analyzes, with no separate AI model costs. Teams evaluating Microsoft's pattern must model costs for their own resource scale, because a large estate with thousands of disks could incur significant Azure OpenAI and Logic Apps charges if the workflow is not optimized.
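A back-of-the-envelope model makes the scaling behaviour concrete. The sketch below is only arithmetic: every unit price and volume is a caller-supplied parameter, and the numbers in the usage example are made-up placeholders, not actual Azure prices.

```python
def estimate_monthly_cost(
    disks: int,
    assessments_per_disk: int,
    actionable_fraction: float,
    actions_per_assessment: int,
    price_per_action: float,
    gb_seconds_per_assessment: float,
    price_per_gb_second: float,
    tokens_per_explanation: int,
    price_per_1k_tokens: float,
) -> dict:
    """Estimate per-component monthly cost; all prices are supplied by the caller."""
    assessments = disks * assessments_per_disk
    # Assumes the model is invoked only for actionable recommendations.
    explanations = assessments * actionable_fraction

    workflow = assessments * actions_per_assessment * price_per_action
    compute = assessments * gb_seconds_per_assessment * price_per_gb_second
    model = explanations * (tokens_per_explanation / 1000) * price_per_1k_tokens
    return {
        "workflow": workflow,
        "compute": compute,
        "model": model,
        "total": workflow + compute + model,
    }


# Placeholder inputs for illustration only.
example = estimate_monthly_cost(
    disks=1000, assessments_per_disk=30, actionable_fraction=0.1,
    actions_per_assessment=10, price_per_action=0.0001,
    gb_seconds_per_assessment=0.5, price_per_gb_second=0.00002,
    tokens_per_explanation=1500, price_per_1k_tokens=0.01,
)
```

Workflow and compute costs grow with every assessment, while the model cost grows only with the actionable share, so `actionable_fraction` is the main lever on the model line.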

Business Impact

From a strategic perspective, this pattern addresses a common pain point for enterprises running complex Azure workloads. Most operations teams have mature monitoring but struggle to turn insights into action without manual toil or unacceptable risk. Microsoft’s framework provides a middle path that reduces repetitive work while maintaining compliance.

For regulated industries including finance and healthcare, the built-in audit trail and human approval steps align with common compliance requirements. Every decision, approver, and execution result is stored in a persistent audit store, with no opaque AI-driven changes. The dry-run mode and tag-based resource selection also limit blast radius during initial testing.
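As an illustration of what one audit entry might contain, the sketch below serializes a decision to a single JSON line. The field names and the `audit_record` helper are assumptions; the guide's actual audit schema and store are not reproduced here.

```python
import json
from datetime import datetime, timezone


def audit_record(request_id, disk, action, approver, decision, result, now=None):
    """Serialize one decision as a JSON line (hypothetical schema)."""
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    entry = {
        "timestamp": timestamp,
        "request_id": request_id,
        "disk": disk,
        "action": action,
        "approver": approver,
        "decision": decision,
        "result": result,
    }
    return json.dumps(entry, sort_keys=True)
```

Writing entries append-only, one line per decision, keeps the record easy to query and hard to rewrite silently.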

Platform teams should avoid the temptation to automate all operational scenarios at once. The guide recommends starting with a single low-risk use case, such as Premium SSD v2 tuning, to validate the workflow before expanding to other scenarios like VM rightsizing, backup failure triage, or configuration drift detection. This phased approach reduces the risk of unintended outages and builds organizational trust in the AIOps agent.

Cost optimization is critical for widespread adoption. Teams can reduce Azure OpenAI costs by invoking the model only for actionable recommendations rather than for every resource assessment. For large estates, the Azure Monitor metrics batch API reduces the number of metric queries and the associated cost and latency. On the security side, the reference implementation calls for least-privilege managed identities instead of broad role assignments.

The post-change validation loop is an often overlooked part of operational workflows. Microsoft’s pattern includes automatic checks after execution to confirm that changes produced the expected outcomes, such as reduced IOPS pressure or improved latency. This feedback loop allows teams to refine their policy thresholds over time, improving recommendation accuracy.
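A validation check of this kind can be as simple as comparing a metric before and after the change. The sketch below uses average latency and a hypothetical 10% improvement threshold; both the metric choice and the threshold are assumptions.

```python
from statistics import mean


def validate_change(before_latency_ms, after_latency_ms, min_improvement=0.10):
    """Compare mean latency before and after a change (illustrative threshold)."""
    if not before_latency_ms or not after_latency_ms:
        return {"verdict": "insufficient-data", "improvement": None}

    before = mean(before_latency_ms)
    after = mean(after_latency_ms)
    if before <= 0:
        return {"verdict": "insufficient-data", "improvement": None}

    # Positive values mean latency went down after the change.
    improvement = (before - after) / before
    verdict = "improved" if improvement >= min_improvement else "no-improvement"
    return {"verdict": verdict, "improvement": round(improvement, 3)}
```

A "no-improvement" verdict is the signal that would feed back into the policy thresholds, for example by prompting a review of the scale-up trigger.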

For enterprises evaluating multi-cloud AIOps strategies, this pattern highlights the trade-off between managed services and custom builds. Managed tools like AWS DevOps Guru are faster to deploy but less flexible. Custom builds using Microsoft's pattern take more engineering effort but can be shaped to match internal approval and audit processes. Most large enterprises will likely use a mix of both, depending on the workload and compliance requirements.
