GitHub has published the security architecture for its agentic workflows, a system integrating autonomous AI agents into GitHub Actions CI/CD pipelines. The defense-in-depth design mitigates risks like prompt injection and privilege escalation with layered controls including sandboxed execution, secret isolation, and full observability, enabling safe adoption of non-deterministic AI automation in production repositories.

How GitHub Is Securing Agentic Workflows in Modern CI/CD Systems

GitHub recently detailed the security architecture behind its agentic workflows, a system that integrates autonomous AI agents into GitHub Actions CI/CD pipelines. The framework uses a defense-in-depth approach to address the unique risks posed by non-deterministic AI automation, moving beyond traditional security models built for static, deterministic pipeline scripts. The full architecture breakdown is available in the official GitHub blog post.

Leela Kumili, lead software engineer at Starbucks and author of the original InfoQ report

Leela Kumili, lead software engineer at Starbucks with expertise in cloud-native systems and AI adoption for developer productivity, authored the InfoQ report covering this architecture. Her work highlights how agentic workflows extend traditional automation by enabling AI agents to interpret intent, make decisions, and execute tasks within GitHub Actions, a shift that introduces both productivity gains and new security risks.

What's New: Layered Security Architecture

Agentic workflows differ from traditional CI/CD pipelines in a fundamental way. Traditional GitHub Actions workflows run predefined, deterministic steps: a developer writes a YAML file specifying exact commands, and the runner executes them in order with no deviation. Agentic workflows let AI agents operate within that same framework, but with the ability to interpret high-level intent, reason over live repository state, and make autonomous decisions about which tasks to execute.

This flexibility unlocks new use cases, such as automatically triaging issues, generating test cases for new code, or updating documentation based on PR changes. However, it also expands the attack surface. Risks include prompt injection, in which untrusted input (such as a crafted issue comment) tricks an agent into performing harmful actions; privilege escalation; and unintended modifications to repository code or configuration. Industry discussions increasingly highlight that these systems need security models that account for non-deterministic behavior, unlike traditional pipelines, which assume every step is pre-approved and safe.

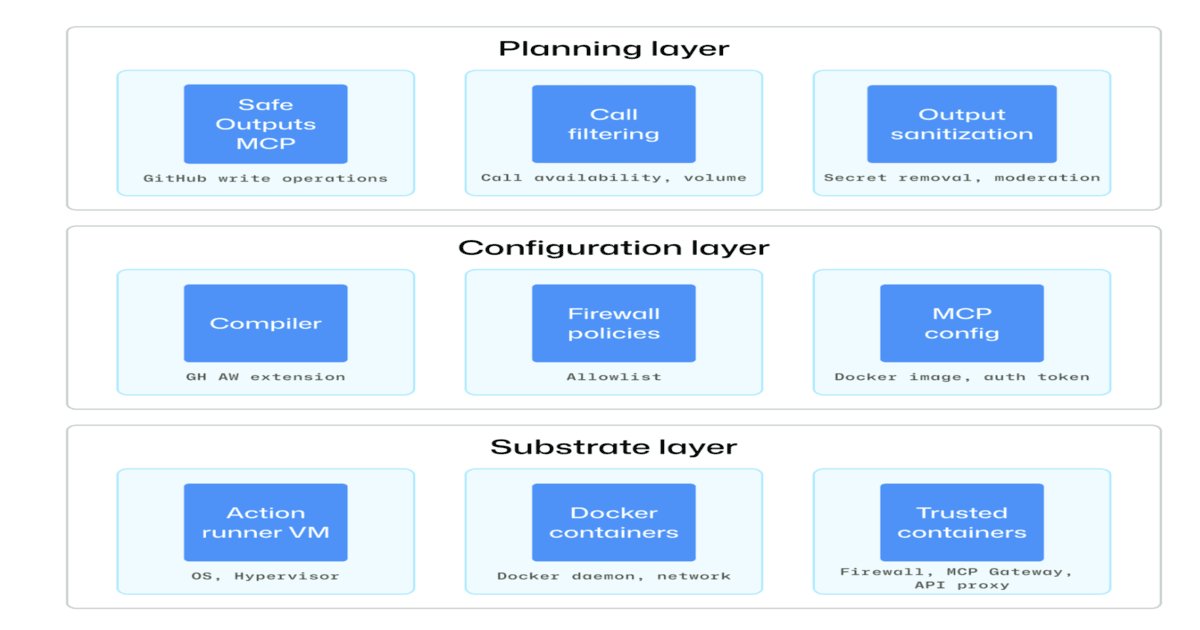

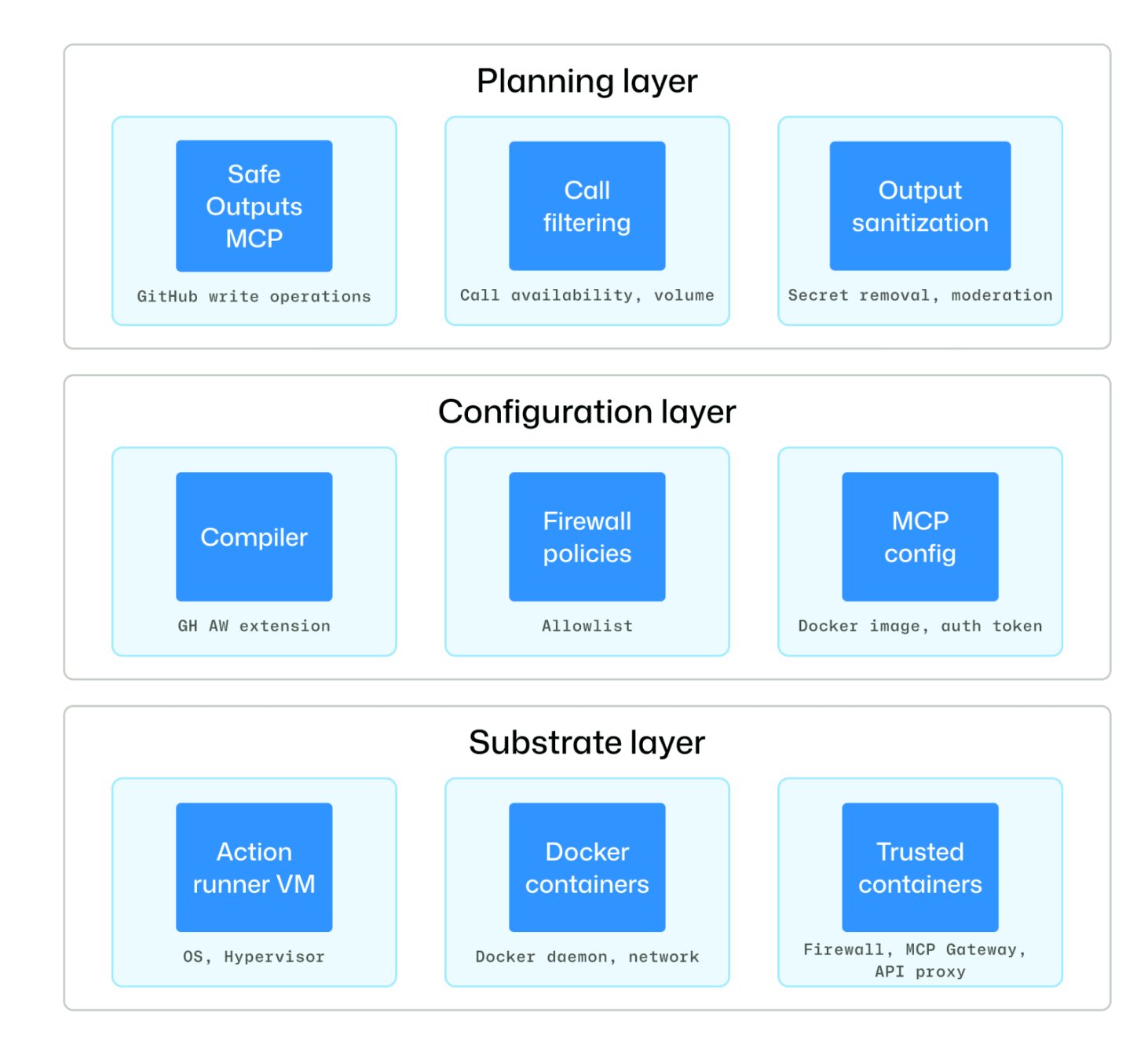

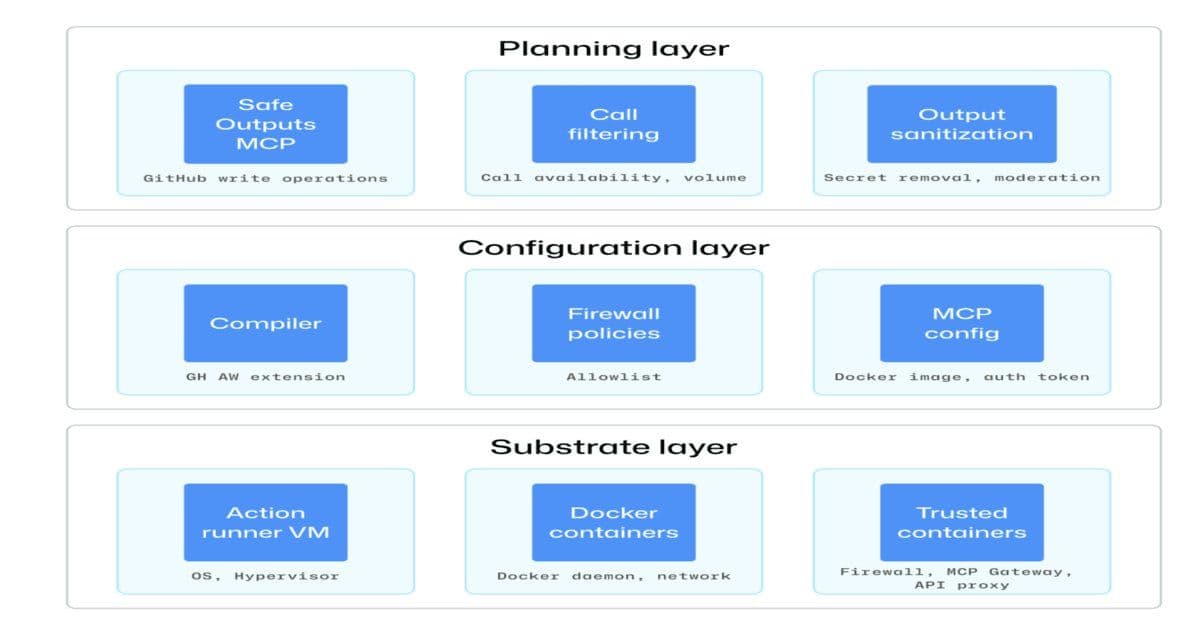

GitHub's security architecture for these workflows is built on four core layered controls:

1. Isolation and Ephemeral Execution

Agents run in sandboxed, ephemeral containers with tightly restricted permissions. These environments are destroyed immediately after execution, preventing persistence of malicious code, artifacts, or modified state. Workflows operate in read-only mode by default, so agents cannot modify repository state directly. Any write operation must pass through controlled, safe outputs such as pull requests or issue comments, which require human review and approval before being merged or applied. This ensures all agent-proposed changes are transparent, auditable, and subject to existing code review processes.

2. Secret and Credential Protection

A major risk in shared runner environments is accidental or malicious secret exposure. Agents in standard GitHub Actions runners can access environment variables, configuration files, and runtime logs, making prompt injection a viable vector for credential exfiltration. For example, a malicious user could leave a comment on an issue that tricks an agent into reading a stored API token from a local file and sending it to an external server via a repository artifact.

GitHub mitigates this by isolating agents in dedicated containers with restricted network egress. Sensitive credentials such as API tokens are routed through trusted proxies and gateways that sit outside the agent's boundary, so agents never have direct access to secrets. Even if an agent is tricked into attempting to read a secret, the credential is not present in the agent's environment.
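The proxy pattern described above can be illustrated with a minimal Python sketch. It is not GitHub's implementation: `OutboundRequest`, `CredentialProxy`, and the host names are hypothetical, and a real gateway would sit on the network path rather than in the same process. The point it shows is that the agent builds requests without credentials, and the token is attached only by a component outside the agent's boundary.

```python
from dataclasses import dataclass, field


@dataclass
class OutboundRequest:
    """A request as the agent builds it: no credentials attached (hypothetical type)."""
    host: str
    path: str
    headers: dict = field(default_factory=dict)


class CredentialProxy:
    """Hypothetical proxy that lives outside the agent sandbox and holds the secrets."""

    def __init__(self, tokens_by_host):
        # The token map exists only here, never in the agent's environment.
        self._tokens_by_host = dict(tokens_by_host)

    def forward(self, request):
        token = self._tokens_by_host.get(request.host)
        if token is None:
            # No credential is configured for this host, so nothing is sent.
            raise PermissionError(f"no credential configured for {request.host}")
        # Drop any Authorization header the agent supplied, then attach the real one.
        headers = {
            name: value
            for name, value in request.headers.items()
            if name.lower() != "authorization"
        }
        headers["Authorization"] = f"Bearer {token}"
        return OutboundRequest(request.host, request.path, headers)
```

Because the agent only ever sees `OutboundRequest` objects without a token, a prompt-injected instruction to "print the API key" has nothing to read inside the sandbox.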

3. Constrained Agent Capabilities

Tool access for agents is explicitly allowed via allowlists, limiting which APIs, systems, or repository actions an agent can invoke. Network isolation further reduces the risk of data exfiltration by blocking unauthorized outbound connections. This design reflects a shift away from implicit trust in agent behavior, a departure from traditional CI/CD models that assume all pipeline steps are safe because they are pre-defined.

Pravin Chandankhede noted in a LinkedIn discussion that agents are non-deterministic by design. They consume untrusted inputs, reason over live repository state, and can act autonomously at runtime. Constraining their capabilities ensures that even if an agent makes an unexpected decision, the blast radius is limited.

4. Staged Workflow Execution

Agents cannot commit changes directly to repositories. Instead, they propose modifications, which are buffered and analyzed after execution. This post-execution validation checks that all changes comply with organizational policies, such as code style rules, security scans, and approval requirements, before any commit is made.
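As a rough illustration of buffering and post-execution validation, the Python sketch below collects proposed changes and runs them through policy functions before anything is handed to review. The `ProposedChange` type, the two example policies, and the status strings are hypothetical; the source does not describe GitHub's actual checks at this level of detail.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedChange:
    """One buffered file change proposed by the agent (hypothetical type)."""
    path: str
    new_content: str


def no_workflow_edits(change):
    # Example policy: agents may not rewrite the pipeline that runs them.
    if change.path.startswith(".github/workflows/"):
        return "agents may not edit workflow definitions"
    return None


def no_private_keys(change):
    # Example policy: a crude check for key material in proposed content.
    if "-----BEGIN PRIVATE KEY-----" in change.new_content:
        return "content looks like a private key"
    return None


def validate(changes, policies):
    """Return a list of human-readable violations; an empty list means clean."""
    violations = []
    for change in changes:
        for policy in policies:
            problem = policy(change)
            if problem is not None:
                violations.append(f"{change.path}: {problem}")
    return violations


def stage(changes, policies):
    """Decide whether the buffered changes may move on to human review."""
    violations = validate(changes, policies)
    status = "rejected" if violations else "ready_for_review"
    return {"status": status, "violations": violations}
```

Note that even a clean result ends in `ready_for_review`, not in a commit: the validation step narrows what reaches reviewers, and human approval remains the final gate.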

Eddie Aftandilian, Head of Platform Engineering at XBOW, noted in a LinkedIn post that these guardrails are what make it possible to bring agentic automation into real production repositories. Without staged execution and human approval gates, the risk of unintended changes reaching production is too high for most teams.

GitHub agentic workflows security architecture (Source: GitHub Blog Post)

Why It Matters

Traditional CI/CD security focuses on securing static pipeline definitions, scanning for known vulnerabilities in dependencies, and controlling access to runner environments. Agentic workflows break these core assumptions. As Jeremiah Snee noted in a GitHub Community discussion, "Continuous AI works best when used alongside CI/CD, extending automation to tasks that traditional pipelines struggle to express." However, this extension requires security controls that account for the agent's autonomous decision-making.

The observability pillar of GitHub's architecture is critical for addressing these gaps. GitHub logs all activity across trust boundaries, including network traffic, model interactions, tool usage, and sensitive runtime actions. This full execution traceability enables forensic analysis if an incident occurs, and provides a foundation for enforcing future policy and information flow controls. Teams can audit exactly what an agent accessed, what decisions it made, and what actions it attempted, a level of visibility that opaque, black-box AI tools do not offer.
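To make the idea of boundary-level logging concrete, here is a small Python sketch of a structured audit log. The `AuditLog` class, the field names, and the boundary labels are assumptions for illustration; GitHub's actual log schema is not described in the source. Each event is stored as one JSON line so that it can be filtered later during an investigation.

```python
import json
import time


class AuditLog:
    """Hypothetical append-only log of events that cross a trust boundary."""

    def __init__(self, clock=time.time):
        self._clock = clock
        self.lines = []  # a real system would ship these to durable storage

    def record(self, boundary, action, allowed, **details):
        event = {
            "ts": self._clock(),
            "boundary": boundary,  # e.g. "network", "tool", "model"
            "action": action,
            "allowed": allowed,    # denied attempts are logged too
            "details": details,
        }
        self.lines.append(json.dumps(event, sort_keys=True))
        return event


def attempted_actions(lines, boundary):
    """Forensic query: every action attempted at one boundary, in order."""
    events = [json.loads(line) for line in lines]
    return [e["action"] for e in events if e["boundary"] == boundary]
```

Recording denied attempts alongside allowed ones matters here: the blocked request is often the clearest signal that an agent was steered by injected input.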

For organizations adopting agentic workflows, GitHub's design provides a clear blueprint. The non-deterministic nature of agents means that security can no longer rely on pre-approving every step of a pipeline. Instead, controls must focus on limiting what agents can access, isolating their execution, and maintaining full visibility into their actions.

How to Apply These Practices

While GitHub's architecture is specific to its agentic workflows product, the core principles apply to any team integrating AI agents into their CI/CD pipelines, whether on GitHub Actions, GitLab CI, or Jenkins.

First, audit existing CI/CD permissions to apply least privilege to any agentic workflow integrations. Mirror GitHub's read-only default for agent environments, and only allow write operations through controlled, human-reviewed paths such as pull requests. Avoid granting agents direct access to production branches or sensitive repository settings.

Second, implement secret isolation for agent runners. Use dedicated runner environments for agentic workflows, with restricted network egress to prevent data exfiltration. Route sensitive credentials through external secret managers or proxies that are not accessible to the agent's runtime environment. Never pass secrets as environment variables to agent containers.

Third, constrain agent capabilities with explicit allowlists. Only grant agents access to the specific tools, APIs, and repository actions needed for their intended tasks. Block all unauthorized outbound network connections from agent runners, and log all denied requests for audit purposes.
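One way to picture the egress rule is the Python sketch below, which checks outbound URLs against a host allowlist and keeps a record of every denial. `EgressPolicy` and the example hosts are hypothetical, and in practice this enforcement would live in a firewall or proxy rather than in application code; the sketch only illustrates the matching and logging logic.

```python
from urllib.parse import urlsplit


class EgressPolicy:
    """Hypothetical outbound-connection check with an audit trail of denials."""

    def __init__(self, allowed_hosts):
        self._allowed = {host.lower() for host in allowed_hosts}
        self.denied = []  # every blocked URL is kept for later audit

    def check(self, url):
        # Compare the exact hostname; substring matching would let
        # "api.github.com.evil.example" slip through.
        host = (urlsplit(url).hostname or "").lower()
        if host in self._allowed:
            return True
        self.denied.append(url)
        return False
```

Exact hostname matching is a deliberate choice in this sketch: prefix or substring checks are a common source of allowlist bypasses.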

Fourth, stage all agent-proposed changes. Agents should never commit directly to repositories. Instead, buffer their proposed changes and run post-execution validation, including security scans, policy checks, and human approval, before merging any modifications. This aligns with existing Git workflows and ensures that agent actions are subject to the same controls as human contributions.

Finally, enable full observability for agent interactions. Log all agent activity, including model prompts and responses, tool invocations, network traffic, and file system changes. Feed this data into existing observability tooling, for example by exporting traces through OpenTelemetry, tracking metrics in Prometheus, and correlating agent activity with GitHub's audit log API, to enable tracing and forensic analysis.

Conclusion

The shift to agentic workflows is accelerating as teams look to automate more complex tasks in CI/CD pipelines. GitHub's security architecture provides a practical blueprint for balancing the productivity benefits of autonomous AI agents with the strict controls needed to protect production systems. By layering isolation, secret protection, capability constraints, staged execution, and full observability, teams can adopt these workflows without expanding their attack surface unnecessarily. As agentic automation becomes more common, these defense-in-depth practices are likely to become standard for any organization using AI in its delivery pipelines.
