A new AI model is transforming visual generation from random experimentation to professional-grade creative control.

Areas devoted to visual processing take up close to 30% of the human cortex. Vision has always mattered to us, from the days of hunter-gatherers to now. Visuals are how we catch attention, and they are also how AI first caught mine.

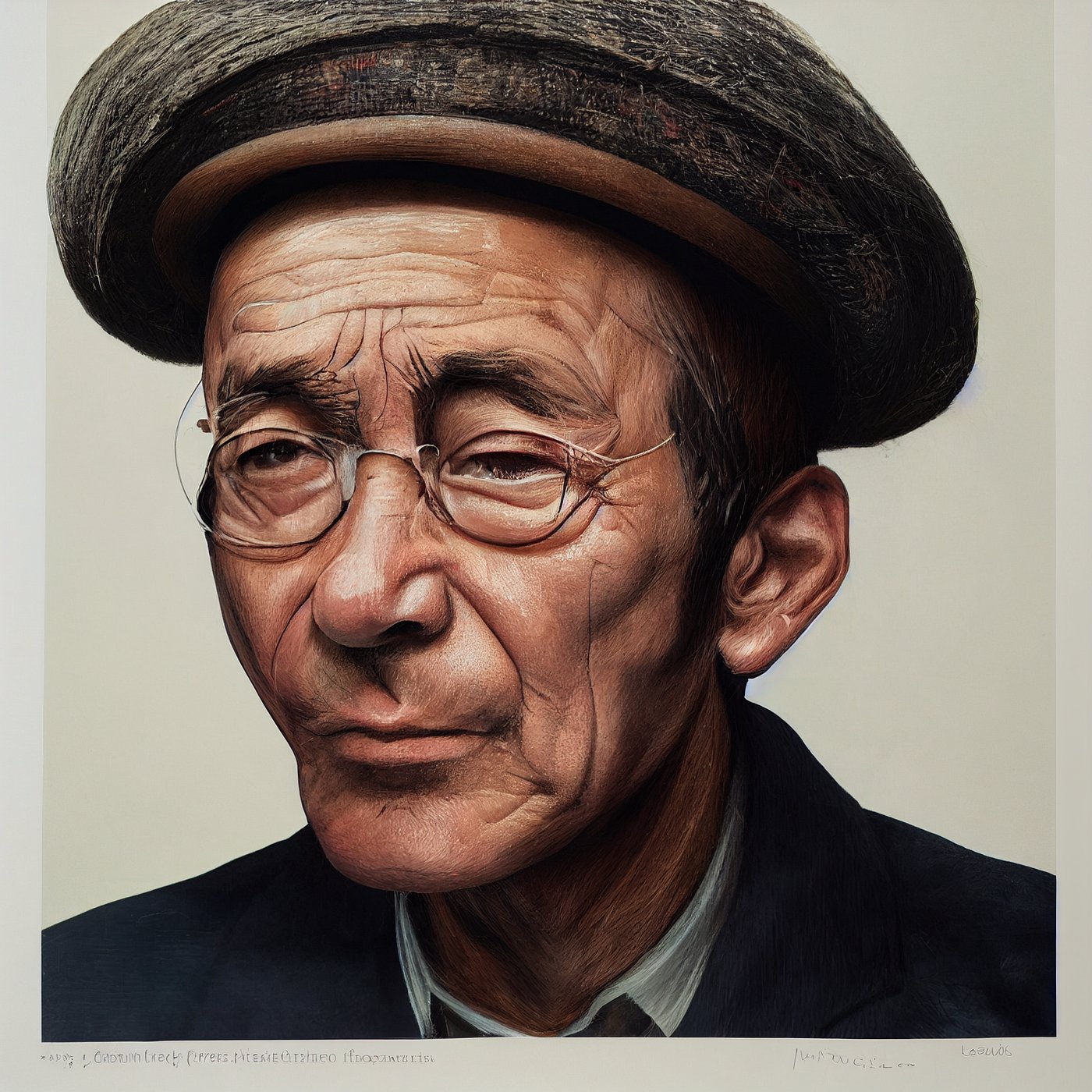

Back in the pre-ChatGPT days, large language models mostly completed the text you gave them rather than acting as multi-tasking personal assistants. Meanwhile, early versions of Midjourney and OpenAI's DALL-E caught my attention, and that of many others, not because they were ahead of their text counterparts but because of their visual nature.

Images like these, while not the most coherent, were eye-catching. It was wild back then to think they had been generated from a simple text prompt. But the tools of the time offered little control over the results and were useful mostly for experimentation rather than serious work.

Those days are gone. AI is progressing at an incredible pace, and current tools are powerful enough for professional work across many fields. In this article, we'll explore Nano Banana 2, the latest advancement in image generation, and how it is changing the way we think about AI creativity.

What Makes Nano Banana 2 Different

Unlike its predecessors, Nano Banana 2 doesn't just generate pretty pictures; it reasons about composition, context, and intent. The model uses a multi-layered reasoning system that breaks a prompt down into semantic components, visual hierarchies, and stylistic elements before generating anything.

This means that when you ask for "a futuristic cityscape at sunset with cyberpunk elements," Nano Banana 2 understands that:

- "futuristic" implies certain architectural styles and technology levels

- "cityscape" requires urban planning logic and scale

- "sunset" dictates lighting, color temperature, and mood

- "cyberpunk elements" adds specific aesthetic rules and cultural references

How People Are Using It

The professional applications are already emerging across industries:

Marketing teams are using Nano Banana 2 to generate campaign visuals in minutes rather than days. One agency reported cutting their concept development time from 3 days to 3 hours.

Product designers are creating realistic mockups of products in various environments without physical prototypes. A furniture company used it to visualize their catalog in 50 different room styles.

Game developers are generating concept art and environment designs, with some indie studios reporting they can now prototype entire game worlds in a single afternoon.

Architects are using it to visualize building designs under different lighting conditions, seasons, and contexts, a task that previously required complex 3D rendering.

How to Access Nano Banana 2

Currently, Nano Banana 2 is available through a tiered system:

Free tier: Limited to 10 generations per day with watermarked outputs. Good for experimentation and learning the system.

Pro tier ($29/month): 1000 generations monthly, higher resolution outputs, commercial usage rights, and priority processing.

Enterprise tier: Custom pricing with API access, team collaboration features, and dedicated support.
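To put the tiers in perspective, here is a small back-of-the-envelope calculation. It is a sketch that uses only the figures listed above ($29 for 1000 monthly Pro generations, 10 free generations per day) and assumes a 30-day month; the constant and function names are illustrative and not part of any Nano Banana 2 API.

```python
# Figures copied from the tier descriptions above; pricing may change over time.
PRO_MONTHLY_PRICE_USD = 29.00
PRO_MONTHLY_GENERATIONS = 1000
FREE_DAILY_GENERATIONS = 10


def cost_per_generation(price_usd: float, generations: int) -> float:
    """Effective cost of one image when `generations` images are produced for `price_usd`."""
    if generations <= 0:
        raise ValueError("generations must be positive")
    return price_usd / generations


def free_generations_per_month(daily_limit: int, days: int = 30) -> int:
    """Upper bound on free-tier output, assuming the daily limit is used every day."""
    return daily_limit * days


if __name__ == "__main__":
    pro_cost = cost_per_generation(PRO_MONTHLY_PRICE_USD, PRO_MONTHLY_GENERATIONS)
    print(f"Pro tier: ${pro_cost:.3f} per generation")  # prints: Pro tier: $0.029 per generation
    free_total = free_generations_per_month(FREE_DAILY_GENERATIONS)
    print(f"Free tier: up to {free_total} watermarked images per 30 days")
```

The per-image price only reaches about 2.9 cents if you use the whole Pro quota; at 290 generations a month, the same $29 works out to $0.10 per image.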

You can sign up at nanobanana.ai to get started. The platform runs in a web browser, so no local installation is required.

What This New AI Model Can Do

The capabilities go beyond simple image generation:

Iterative refinement: You can start with a rough concept and progressively refine it through conversation with the AI. It remembers previous iterations and builds on them.

Style transfer with reasoning: Instead of just applying a filter, it understands why certain artists use specific techniques and can adapt those principles to new subjects.

Scene composition: It can generate multiple elements that work together visually, maintaining consistent lighting, perspective, and style across complex scenes.

Text integration: Unlike earlier models that struggled with text, Nano Banana 2 can generate readable, contextually appropriate text within images.

3D understanding: It has a basic understanding of 3D space, allowing it to generate images that respect perspective and spatial relationships.

The Technical Breakthrough

What makes Nano Banana 2 truly remarkable is its architecture. It combines a vision transformer with a reasoning module that was trained on both visual data and human design principles. This hybrid approach allows it to understand not just what things look like, but why they look that way.

The model also uses a novel attention mechanism that prioritizes semantic relationships over pixel patterns, which is why it produces more coherent results than earlier models.

Limitations and Considerations

Despite its advances, Nano Banana 2 isn't perfect:

- It can still struggle with extremely complex scenes involving dozens of interacting elements

- The reasoning module sometimes makes "creative" decisions that don't match user intent

- It requires significant computational resources, which is why the free tier has strict limits

- Like all AI models, it reflects biases present in its training data

The Future of Visual AI

Nano Banana 2 represents a shift from AI as a tool for random generation to AI as a creative partner that understands intent. This is likely just the beginning: future versions will probably incorporate even more sophisticated reasoning, perhaps including an understanding of cultural context and emotional impact.

For now, Nano Banana 2 is making professional-grade visual creation accessible to anyone with an idea and an internet connection. Whether you're a designer looking to speed up your workflow or someone who's never created digital art before, it's worth exploring what this technology can do.

The days of AI-generated images being little more than interesting experiments are over. We're entering an era where visual AI can think, reason, and create with purpose, and that changes everything.
