This article explores how technological evolution can be understood through the lens of information theory and combinatorial search spaces, using Brian Arthur's circuit simulation as a model for understanding how complex technologies emerge from simpler building blocks.

The literature on technological progress presents an intriguing asymmetry: detailed accounts of specific inventions abound, but comprehensive frameworks for understanding technological evolution itself remain scarce. Brian Arthur's work is a notable exception. His 2006 paper with Wolfgang Polak, "The Evolution of Technology within a Simple Computer Model," simulates how Boolean logic circuits might evolve from simple elements into complex functions, offering a window into the fundamental nature of technological progress.

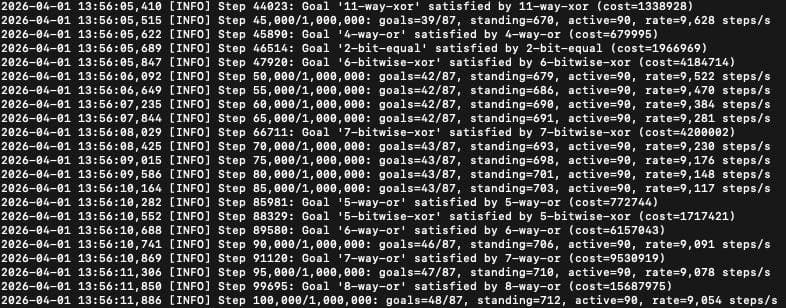

Arthur's simulation begins with three primitive elements: the NAND gate (which outputs 0 only when both inputs are 1) and two constants, one that always outputs 1 and one that always outputs 0. The algorithm randomly combines these elements into circuits and checks whether any combination fulfills one of a set of specified logical goals. When a successful circuit is discovered, perhaps an AND gate built from a particular arrangement of NAND gates, it is encapsulated and added to the pool of available components. These new components then serve as building blocks for more complex functions, creating a hierarchy of technologies that bootstraps itself toward ever-increasing complexity.
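The check-and-encapsulate step can be sketched in a few lines. This is a minimal illustration, not Arthur and Polak's code: the candidate circuits are written by hand rather than generated at random, and the names `meets_goal`, `goals`, and `library` are hypothetical. It only shows how a candidate built from NAND and a constant is compared with a goal truth table and, if it matches, added to the pool.

```python
from itertools import product

# Assumed primitives, per the description: a NAND gate and the constants 1 and 0.
ONE, ZERO = 1, 0  # ZERO is not needed for the circuits below

def nand(a: int, b: int) -> int:
    return 0 if (a == 1 and b == 1) else 1

# Hand-built candidate circuits (a random search would generate these instead).
def not_gate(a: int) -> int:
    return nand(a, ONE)  # NAND with constant 1 inverts its input

def and_gate(a: int, b: int) -> int:
    return not_gate(nand(a, b))  # AND = NOT(NAND)

def or_gate(a: int, b: int) -> int:
    return nand(not_gate(a), not_gate(b))  # De Morgan: a OR b = NAND(NOT a, NOT b)

def meets_goal(circuit, goal, n_inputs: int) -> bool:
    """Compare a candidate with a goal on every row of the truth table."""
    return all(circuit(*bits) == goal(*bits)
               for bits in product((0, 1), repeat=n_inputs))

goals = {"and": lambda a, b: a & b, "or": lambda a, b: a | b}
candidates = {"and": and_gate, "or": or_gate}

library = {"nand": nand}  # pool of components available for further combination
for name, goal in goals.items():
    if meets_goal(candidates[name], goal, 2):
        library[name] = candidates[name]  # "encapsulate" the verified circuit

print(sorted(library))  # ['and', 'nand', 'or']
```

Once `and_gate` is in the library, later candidates can call it as a single component, which is the bootstrapping the paragraph describes.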

The simulation reveals several important principles of technological evolution. Complex circuits such as 8-bit adders or equality comparators emerge only after simpler functions have been discovered and can serve as stepping stones. If the intermediate goals are removed, the simulation fails to reach the complex ones, showing that technological progress follows a hierarchical path in which simpler technologies form the foundation for more complex ones. This mirrors a finding in evolutionary biology reported by Lenski et al.: complex features emerged only through the sequential development of simpler functions.

However, the most profound insight from Arthur's work lies not in the mechanics of technological replacement but in the challenge of navigating unimaginably large search spaces. Consider the combinatorial explosion: a function with n inputs and m outputs assigns one of 2^m output patterns to each of its 2^n input patterns, so there are (2^m)^(2^n) = 2^(m·2^n) possible logic functions. An 8-bit adder, with 16 inputs and 9 outputs, therefore sits in a space of 2^589,824, or roughly 10^177,554, possible functions. That number dwarfs both the estimated 10^80 atoms in the observable universe and the roughly 4×10^20 milliseconds since the Big Bang.
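The size of this space is easy to check in log space, since the count itself is far too large to print. The sketch below assumes only the formula above; the helper name `log10_function_count` is hypothetical. For contrast it also computes the exponent of atoms times milliseconds, using the round figures quoted in the text.

```python
import math

def log10_function_count(n_inputs: int, m_outputs: int) -> float:
    """log10 of (2^m)^(2^n) = 2^(m * 2^n), the number of Boolean functions
    with n inputs and m outputs (computed in log space to avoid a huge integer)."""
    return m_outputs * (2 ** n_inputs) * math.log10(2)

# 8-bit adder: two 8-bit operands (16 inputs); 8 sum bits plus a carry (9 outputs).
adder_exp = log10_function_count(16, 9)
print(f"8-bit adder: about 10^{math.floor(adder_exp):,}")  # 10^177,554

# Atoms in the observable universe times milliseconds since the Big Bang.
universe_exp = math.log10(1e80 * 4e20)
print(f"atoms x milliseconds: about 10^{universe_exp:.1f}")
```

As a sanity check, `log10_function_count(2, 1)` corresponds to the 16 possible two-input, one-output gates.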

This brings us to Herbert Simon's parable of the two watchmakers, which illuminates why hierarchical organization makes technological progress possible. Tempus assembles each watch as a single sequence of parts, so any interruption forces him to start over from scratch. Hora, by contrast, builds stable subassemblies that can be combined into larger structures. When interrupted, Hora loses only the work done since his last completed subassembly, which makes him thousands of times more productive than Tempus.
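The gap between the two watchmakers can be estimated with a short calculation. This sketch assumes Simon's setup of 1,000-part watches, subassemblies of about ten elements, and a 1% chance of interruption per part added. It models each placement as a trial where an interruption wastes that placement and destroys the unfinished assembly. That is a simplification of Simon's own arithmetic, so the exact ratio differs from his, but it lands in the same "thousands of times" range. The function name is hypothetical.

```python
def expected_placements(k: int, p: float) -> float:
    """Expected part placements needed to finish an assembly of k parts when each
    placement is interrupted with probability p and an interruption destroys the
    unfinished assembly (the standard 'k successes in a row' waiting time)."""
    q = 1.0 - p
    return (1.0 - q ** k) / (p * q ** k)

P = 0.01  # chance of interruption per part, the value Simon uses

# Tempus: one flat assembly of 1,000 parts.
tempus = expected_placements(1000, P)
# Hora: 100 subassemblies of 10 parts, 10 of 10 subassemblies, and 1 final of 10.
hora = (100 + 10 + 1) * expected_placements(10, P)

print(f"Tempus: {tempus:,.0f}  Hora: {hora:,.0f}  ratio: {tempus / hora:,.0f}")
```

Under this model Tempus needs on the order of two million placements per watch and Hora about twelve hundred, because Hora's unit of loss is never more than ten placements.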

Technological search operates similarly. The probability of randomly discovering a complex technology like an 8-bit adder in a single attempt is effectively zero. Yet by breaking the problem into hierarchical components (first discovering a full adder, then using it to build a 2-bit adder, then a 4-bit adder, and finally an 8-bit adder), the search becomes tractable. Each successful step screens off vast portions of the search space, making subsequent discoveries exponentially more likely.
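The full adder to 8-bit adder ladder can be sketched as a sequence of verified compositions. This is an illustration under assumptions: the full adder is written directly with Python bit operators rather than discovered from NAND gates, and each wider adder is hypothetically formed by chaining two copies of the previous one through the carry. The point is that every level is exhaustively verified before it becomes a building block for the next.

```python
from typing import Callable

Bits = list[int]  # least significant bit first
Adder = Callable[[Bits, Bits, int], tuple[Bits, int]]

def full_adder(a: Bits, b: Bits, cin: int) -> tuple[Bits, int]:
    """1-bit full adder: returns ([sum], carry_out)."""
    s = a[0] ^ b[0] ^ cin
    cout = (a[0] & b[0]) | (cin & (a[0] ^ b[0]))
    return [s], cout

def double_width(adder: Adder) -> Adder:
    """Compose two copies of a verified n-bit adder into a 2n-bit adder."""
    def wide(a: Bits, b: Bits, cin: int) -> tuple[Bits, int]:
        h = len(a) // 2
        low, carry = adder(a[:h], b[:h], cin)      # lower half first
        high, cout = adder(a[h:], b[h:], carry)    # carry ripples into upper half
        return low + high, cout
    return wide

def to_bits(x: int, n: int) -> Bits:
    return [(x >> i) & 1 for i in range(n)]

def from_bits(bits: Bits) -> int:
    return sum(bit << i for i, bit in enumerate(bits))

def verify(adder: Adder, n: int) -> bool:
    """Exhaustively check an n-bit adder against integer addition."""
    for x in range(2 ** n):
        for y in range(2 ** n):
            for cin in (0, 1):
                s, cout = adder(to_bits(x, n), to_bits(y, n), cin)
                if from_bits(s + [cout]) != x + y + cin:
                    return False
    return True

library = {1: full_adder}
adder, width = full_adder, 1
while width < 8:
    assert verify(adder, width), f"{width}-bit adder failed"  # verify before reuse
    adder, width = double_width(adder), width * 2
    library[width] = adder

print(sorted(library), verify(library[8], 8))  # [1, 2, 4, 8] True
```

Each level is checked against a small truth table (the 2-bit adder has only 32 input rows), which is what keeps the search at every step manageable.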

Information theory provides the mathematical language for this process. Each bit of information halves the number of equally likely possibilities that remain. The entropy of a search process, the expected information gained per attempt, determines how quickly we can converge on a solution. When success and failure are equally probable, entropy is maximized at one bit per attempt. When one outcome is highly likely, as in technological search where most combinations fail, entropy is low, and many attempts are needed to accumulate sufficient information.
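The relationship between success probability and information per attempt is the binary entropy function. The sketch below treats each attempt as a pass/fail trial with success probability p; the sample probabilities are illustrative, not taken from the simulation.

```python
import math

def binary_entropy(p: float) -> float:
    """Expected information, in bits, from one pass/fail trial that succeeds with probability p."""
    if p in (0.0, 1.0):
        return 0.0  # a certain outcome carries no information
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)

for p in (0.5, 0.1, 0.001):
    print(f"p(success) = {p}: {binary_entropy(p):.4f} bits per attempt")
```

A coin-flip trial yields a full bit, a 1-in-10 trial about 0.47 bits, and a 1-in-1,000 trial only about 0.011 bits, so rare-success searches need roughly ninety times as many attempts to learn as much as the coin-flip case.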

Consider the challenge of wiring an 8-bit adder from 68 NAND gates. There are approximately 2^852 possible wiring arrangements. Randomly guessing all 68 gates simultaneously would require more time than the age of the universe, even with every atom in the universe performing computations. Yet by proceeding gate by gate, with each step verified before moving to the next, the correct arrangement can be found in about 453,000 iterations. Each correct guess screens off thousands of incorrect downstream possibilities, dramatically accelerating the search.
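The gap between guessing everything at once and proceeding step by step can be reproduced on a toy problem. This sketch does not reconstruct the 2^852 or 453,000 figures; it assumes a hypothetical circuit of k = 8 "gates" with c = 16 wiring options each, and an oracle that can confirm each gate before the next is chosen (the role intermediate goals play). Guessing the whole arrangement without repeats takes (c^k + 1)/2 tries on average; verifying gate by gate takes at most k·c.

```python
import random

def joint_expected_guesses(c: int, k: int) -> float:
    """Guessing all k choices at once and checking only the finished whole:
    without repeats, the expected number of guesses is (c**k + 1) / 2."""
    return (c ** k + 1) / 2

def sequential_search(target: list[int], c: int, rng: random.Random) -> int:
    """Fix one choice at a time, confirming each before moving on; returns guesses used."""
    guesses = 0
    for correct in target:
        options = list(range(c))
        rng.shuffle(options)  # try options in random order, never repeating one
        for option in options:
            guesses += 1
            if option == correct:
                break
    return guesses

rng = random.Random(0)
c, k = 16, 8  # hypothetical: 8 gates, 16 wiring options each
target = [rng.randrange(c) for _ in range(k)]
print(joint_expected_guesses(c, k))       # about 2.1 billion on average
print(sequential_search(target, c, rng))  # never more than k * c = 128
```

The joint cost grows exponentially in k while the sequential cost grows only linearly, which is the same asymmetry the 68-gate example describes at a vastly larger scale.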

This insight explains several observations from Arthur's simulation. The algorithm performs about as well when it combines at most 4 components as when it combines up to 12, because with more components the combinatorial explosion makes useful circuits so improbable that they contribute little to progress. Similarly, specifying only a narrow subset of simpler goals related to the complex ones makes discovery easier by keeping the search space small.

The implications extend far beyond logic circuits. Technology in general can be viewed as arrangements of simpler components that create more complex functions. As Arthur notes, technologies form networks in which novel elements are constructed from existing ones, bootstrapping themselves through hierarchical combination. The amplifier circuit, built from vacuum tubes and other existing components, made oscillators possible; oscillators combined with other elements yielded heterodyne mixers, and so forth, ultimately enabling radio broadcasting.

This hierarchical approach to technological organization succeeds because it maximizes information acquisition. A single pass/fail test of an entire complex system yields at most one bit and says nothing about which part needs to be fixed. By contrast, verifying that each component performs its specific function provides precise information about what works and what doesn't, allowing for targeted improvements.
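The difference can be quantified with the binary entropy function. This sketch assumes a hypothetical system of n parts that each work independently with probability r, so the whole system passes only if all of them work. The values n = 68 and r = 0.9 are illustrative choices, not figures from the paper.

```python
import math

def binary_entropy(p: float) -> float:
    """Expected information, in bits, from one pass/fail test that passes with probability p."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)

def whole_system_bits(n: int, r: float) -> float:
    """One test of the assembled system, which passes only if all n parts work."""
    return binary_entropy(r ** n)

def per_component_bits(n: int, r: float) -> float:
    """Separate tests of each part (independent failures assumed)."""
    return n * binary_entropy(r)

n, r = 68, 0.9  # hypothetical system size and per-part reliability
print(f"whole-system test: {whole_system_bits(n, r):.4f} bits")
print(f"per-component tests: {per_component_bits(n, r):.1f} bits")
```

With these assumptions the assembled system almost always fails, so one end-to-end test yields under a hundredth of a bit, while testing the 68 parts individually yields about 32 bits and pinpoints the faulty ones.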

The simulation reveals that technological evolution is fundamentally an information acquisition process. The hierarchical, modular organization of technologies isn't merely an aesthetic or structural preference—it's an information-theoretic necessity. Without this organization, the search space becomes so vast that technological progress would be effectively impossible. With it, we can systematically navigate toward increasingly complex solutions.

This perspective offers a powerful framework for understanding technological progress across domains. It explains why certain technological paths are more fruitful than others, why intermediate technologies are crucial for advancement, and how breakthroughs often emerge from the combination of existing components in novel ways. The simulation demonstrates that technological evolution isn't merely a historical process but follows deep mathematical principles related to information, complexity, and search.

Yet this view is not without limitations. The simulation operates in a highly constrained environment with well-defined goals and components. Real-world technological evolution faces additional complexities: poorly specified goals, changing requirements, emergent properties, and social factors that influence which technologies are pursued. The simulation also assumes a kind of technological determinism where progress follows a relatively direct path, whereas actual technological development often involves false starts, dead ends, and unpredictable interactions.

Moreover, the paper focuses primarily on the technical aspects of technological evolution, largely neglecting economic, institutional, and cultural factors that shape which technologies develop and how they are adopted. Technologies don't emerge in a vacuum but within social, economic, and political contexts that influence their development and implementation.

Despite these limitations, Arthur's simulation offers profound insights into the nature of technological progress. It reveals how hierarchical organization transforms the search for technological solutions from an impossible task into a manageable one, not by reducing the complexity of the final solution, but by structuring the process of discovery. In this way, the simulation illuminates a fundamental principle of technological evolution: that the architecture of complexity itself makes progress possible.
