NVIDIA's GTC 2026 keynote reveals significant advancements in AI hardware and software, with the Vera Rubin platform taking center stage alongside the integration of Groq's LPU technology and major updates to CUDA and DLSS.

NVIDIA GTC 2026 Keynote: Vera Rubin Platform, Groq Integration, and the Future of AI Acceleration

NVIDIA's annual GTC conference returned in 2026 with a keynote that solidified the company's position at the forefront of AI acceleration. Held at the SAP Center in San Jose to accommodate the record 30,000 attendees (a 20% increase from last year), the event showcased NVIDIA's latest developments in AI hardware, software, and ecosystem partnerships. The keynote, led by CEO Jensen Huang, focused heavily on the upcoming Vera Rubin platform and NVIDIA's broader AI strategy.

The Vera Rubin Platform: NVIDIA's Next Generation Server Hardware

The most anticipated announcement centered on the Vera Rubin platform, which combines NVIDIA's upcoming Vera CPU with its Rubin GPU to form the backbone of the company's next-generation server hardware. The pairing reflects NVIDIA's continued push into integrated systems that span both general-purpose processing and acceleration.

While NVIDIA first introduced Vera Rubin at CES 2026, GTC provided a more technical deep dive into the platform's capabilities. The Vera Rubin generation will support complete confidential computing across both GPU and CPU components, addressing critical security concerns for enterprise AI workloads. This comprehensive approach to confidential computing sets the stage for broader adoption in industries handling sensitive data.

Context Memory Storage Platform: Optimizing AI Inference

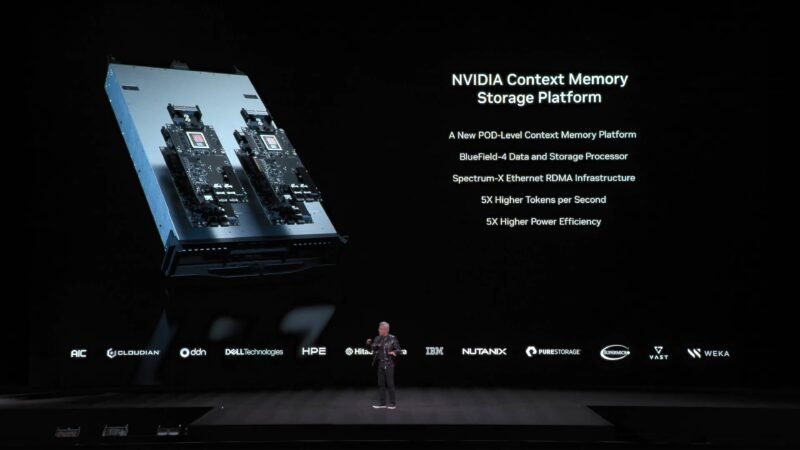

Complementing the Vera Rubin platform, NVIDIA detailed their Context Memory Storage Platform, a key-value cache designed to store massive amounts of inference context closer to GPUs. This platform leverages NVIDIA's BlueField-4 DPU and Spectrum-X networking hardware to reduce latency and improve throughput for AI inference workloads.

During CES 2026, NVIDIA had only briefly mentioned this technology, but at GTC, they elaborated on how it integrates with their broader AI infrastructure. The Context Memory Storage Platform represents NVIDIA's approach to solving the memory bottleneck in large-scale AI deployments by keeping relevant inference data closer to the processing units.
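To make the idea of keeping inference context close to the processor concrete, here is a minimal sketch of a two-tier key-value cache. It is an illustration of the general tiering technique only, not NVIDIA's design: the class name `TieredContextCache`, the `fast` and `slow` tiers, and the least-recently-used eviction policy are all assumptions introduced for this example.

```python
from collections import OrderedDict


class TieredContextCache:
    """Toy two-tier key-value store (hypothetical, for illustration only).

    `fast` stands in for limited memory close to the GPU; `slow` stands in
    for a larger, more distant tier. Eviction is least-recently-used (LRU).
    """

    def __init__(self, fast_capacity):
        self.fast_capacity = fast_capacity
        self.fast = OrderedDict()  # ordered from least to most recently used
        self.slow = {}
        self.fast_hits = 0
        self.slow_hits = 0

    def put(self, key, value):
        # New or updated context always lands in the fast tier.
        self.slow.pop(key, None)
        self.fast[key] = value
        self.fast.move_to_end(key)
        self._spill()

    def get(self, key):
        if key in self.fast:
            self.fast_hits += 1
            self.fast.move_to_end(key)  # mark as most recently used
            return self.fast[key]
        if key in self.slow:
            self.slow_hits += 1
            value = self.slow.pop(key)
            self.fast[key] = value  # promote back toward the processor
            self._spill()
            return value
        return None  # context was never stored

    def _spill(self):
        # Move the least recently used entries to the slow tier when full.
        while len(self.fast) > self.fast_capacity:
            old_key, old_value = self.fast.popitem(last=False)
            self.slow[old_key] = old_value


cache = TieredContextCache(fast_capacity=2)
cache.put("session-a", "context-a")
cache.put("session-b", "context-b")
cache.put("session-c", "context-c")  # session-a spills to the slow tier
cache.get("session-a")               # served from slow tier, then promoted
print(cache.fast_hits, cache.slow_hits)
```

In this toy model, a request whose context is still in the fast tier avoids the slower lookup entirely, which is the general motivation for placing a context cache nearer the GPUs.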

The Groq Acquisition: Expanding NVIDIA's AI Portfolio

Perhaps the most surprising development was NVIDIA's integration of recently acquired Groq technology. The $20 billion acquisition, completed on Christmas Eve 2025, included Groq's senior staff, physical assets, and a non-exclusive license for their Language Processing Unit (LPU) technology.

Groq's LPUs present an interesting contrast to NVIDIA's GPU architecture. SRAM-heavy LPUs excel at low-latency decoding, which shortens the wait for each generated token, but they haven't demonstrated the same level of total throughput as NVIDIA's GPUs. During NVIDIA's Q4 FY2026 earnings call, Huang indicated the company would explore using Groq's technology as an accelerator, much as it integrated Mellanox technology.

"We're going to extend our architecture with Groq as an accelerator in very much the way that we extended NVIDIA's architecture with Mellanox," Huang stated during the earnings call. The keynote provided the first public glimpse into how NVIDIA might leverage Groq's LPU technology within their existing ecosystem.
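The latency-versus-throughput contrast between the two architectures can be sketched with a simple back-of-the-envelope model. The functions below are a generic approximation, and every number in the example is invented purely for illustration; none of them are measurements of Groq or NVIDIA hardware.

```python
def request_latency_s(ttft_s, time_per_token_s, output_tokens):
    """Approximate end-to-end latency for one request.

    Time to first token (TTFT) covers the first output token; each of the
    remaining tokens then costs one decode step.
    """
    if output_tokens < 1:
        raise ValueError("output_tokens must be at least 1")
    return ttft_s + time_per_token_s * (output_tokens - 1)


def aggregate_throughput_tps(time_per_token_s, concurrent_requests):
    """Approximate tokens per second summed over requests decoded in parallel."""
    return concurrent_requests / time_per_token_s


# Hypothetical device profiles; all values are made up for this example.
profiles = {
    "low-latency device": {"ttft_s": 0.1, "tpot_s": 0.002, "concurrency": 8},
    "high-throughput device": {"ttft_s": 0.2, "tpot_s": 0.02, "concurrency": 256},
}

for name, p in profiles.items():
    latency = request_latency_s(p["ttft_s"], p["tpot_s"], output_tokens=500)
    throughput = aggregate_throughput_tps(p["tpot_s"], p["concurrency"])
    print(f"{name}: {latency:.2f} s per request, {throughput:.0f} tokens/s overall")
```

Under these assumed numbers, the low-latency profile finishes a single request sooner while the high-throughput profile produces more tokens per second in aggregate, which illustrates why one might serve as a complementary accelerator to the other.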

CUDA Turns 20: The Foundation of NVIDIA's Ecosystem

A significant theme of the keynote was the 20th anniversary of CUDA, NVIDIA's parallel computing platform and programming model. Huang emphasized how CUDA has become "literally integrated into every single ecosystem" and now supports an installed base of hundreds of millions of GPUs across countless industries.

"The useful life of NVIDIA GPUs is incredibly high" thanks to the wide variety of applications available for CUDA, Huang noted, citing the 6-year-old Ampere architecture as an example. This long-term compatibility creates a powerful flywheel effect, encouraging continued investment in NVIDIA's ecosystem while providing customers with exceptional ROI.

NVIDIA also announced around 100 new CUDA-X libraries at GTC, which Huang considers the "crown jewels" of the company. These specialized libraries accelerate specific workloads across domains, further strengthening NVIDIA's position as a comprehensive AI solutions provider.

DLSS 5 and Neural Rendering Advances

In consumer AI, NVIDIA announced DLSS 5, featuring 3D-guided neural rendering and "probabilistic rendering" capabilities. This advancement represents NVIDIA's continued investment in AI-powered graphics technologies, moving beyond traditional rasterization to more sophisticated rendering approaches.

The announcement underscores NVIDIA's strategy of applying AI across their entire product portfolio, from data center GPUs to consumer graphics cards. By developing AI-specific rendering techniques, NVIDIA aims to deliver higher quality graphics with lower computational requirements.

Structured Data, Unstructured Context: The Future of AI

A particularly insightful segment of the keynote focused on the relationship between structured and unstructured data in AI applications. "Structured data is the foundation of trustworthy AI," Huang stated, presenting what he called "the ground truth of enterprise computing."

Huang explained that while future AI agents will utilize structured databases, the majority of real-world data is unstructured and was previously "completely useless to the world" because it could not be indexed. AI's ability to understand and index unstructured data represents a fundamental shift in how organizations can leverage their information assets.

Expanding the Partner Ecosystem

The keynote featured numerous partner announcements, highlighting NVIDIA's strategy of vertical integration with horizontal openness:

- IBM: Accelerating WatsonX with cuDF

- Dell: Collaborating on the Dell AI Data Platform

- Google Cloud: Lowering computing costs through NVIDIA acceleration

- AWS: Expanding NVIDIA's presence in AWS infrastructure

- Microsoft Azure: Accelerating services including Bing Search

- Oracle: Continuing their partnership as "NVIDIA's first AI customer"

- CoreWeave: Cloud-native GPU computing platform

- Palantir: Data integration and analytics solutions

"Our relationship with cloud service providers are essentially us bringing customers to them by accelerating those customers' applications," Huang explained. "There are a lot of customers. We are going to accelerate everybody."

Industry Applications and Vertical Integration

Huang highlighted NVIDIA's approach across various verticals, including automotive, industrial, healthcare, and robotics. "We have 110 robots here at the show," he noted, emphasizing the practical applications of NVIDIA's technology.

The company's vertically integrated approach allows them to develop domain-specific accelerators while maintaining compatibility with broader ecosystems. "The only way for us to accelerate applications going forward is through domain specific acceleration," Huang stated. "We integrate with your technology so that we can bring accelerated computing to everyone in the world."

The Death of Moore's Law and Algorithm Optimization

A recurring theme was the evolution beyond traditional Moore's Law scaling. "Accelerating computing backed by ever increasingly optimized algorithms is the replacement for Moore's Law," Huang explained. "Optimize an algorithm once, and all of NVIDIA's GPU users benefit."

This approach represents a fundamental shift in computing architecture, where algorithmic improvements can provide performance gains that hardware scaling alone cannot achieve. NVIDIA's investment in CUDA-X libraries and specialized AI hardware reflects this strategic direction.

Conclusion: The Future of Accelerated Computing

NVIDIA's GTC 2026 keynote demonstrated the company's comprehensive approach to AI acceleration, spanning from hardware design to software optimization and ecosystem development. The Vera Rubin platform, Groq integration, and continued evolution of CUDA position NVIDIA to lead the next generation of AI computing.

As Huang emphasized, "We're going to talk about technology. We're going to talk about platforms. And most importantly, we're going to talk about ecosystems." This ecosystem approach, combining specialized hardware with optimized software and broad industry partnerships, will likely define the next decade of AI development.

For those interested in watching the keynote in full, NVIDIA has made the presentation available through its official channels. The company also said that additional technical details would be shared in follow-up blog posts, suggesting that the keynote was just the beginning of the Vera Rubin and Groq integration story.
