Nvidia unveils Vera CPU rack systems packing 256 custom processors, targeting AI workloads that can't run efficiently on GPUs alone and directly competing with x86 chipmakers.

Nvidia is making a bold move into the CPU market with its new Vera processors, unveiled at GTC 2026. The company is packing 256 Vera CPUs into a single liquid-cooled rack system, directly challenging Intel and AMD in the datacenter space. This represents a significant shift for Nvidia, which has traditionally focused on GPUs but is now positioning itself as a full-stack AI infrastructure provider.

Why CPUs Matter for AI

The timing of this announcement is strategic. As AI systems become more sophisticated, particularly with the rise of agentic AI frameworks, the limitations of GPU-only architectures are becoming apparent. Ian Buck, VP of Hyperscale and HPC at Nvidia, explained that agents require CPU support for tasks like tool calling, SQL queries, and code compilation. These operations, known as "sandbox execution," are critical for both training and deploying AI agents across data centers.
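Here is a minimal sketch of what "sandbox execution" can look like on the CPU side of an agent loop. It assumes the model emits each tool call as JSON naming a tool and its arguments. The tool names (`run_sql`, `word_count`), the JSON shape, and the toy database are hypothetical and chosen only for illustration, since real agent frameworks define their own formats. The point is that this work is serial, branchy, and I/O-bound, which is the kind of job a CPU handles rather than a GPU.

```python
import json
import sqlite3

def make_db() -> sqlite3.Connection:
    # Hypothetical in-memory database standing in for whatever an agent queries.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, region TEXT, total REAL)")
    conn.executemany(
        "INSERT INTO orders (region, total) VALUES (?, ?)",
        [("emea", 120.0), ("apac", 75.5), ("emea", 30.0)],
    )
    return conn

DB = make_db()

def run_sql(query: str) -> list:
    # Hypothetical tool: run a SQL query against the sandbox database, return all rows.
    return DB.execute(query).fetchall()

def word_count(text: str) -> int:
    # Hypothetical tool: trivial text processing done on the CPU.
    return len(text.split())

TOOLS = {"run_sql": run_sql, "word_count": word_count}

def dispatch(tool_call_json: str):
    """Parse a (hypothetical) model-emitted tool call and execute it on the CPU."""
    call = json.loads(tool_call_json)
    tool = TOOLS.get(call["tool"])
    if tool is None:
        raise ValueError(f"unknown tool: {call['tool']}")
    return tool(**call["args"])

if __name__ == "__main__":
    print(dispatch('{"tool": "run_sql", "args": {"query": '
                   '"SELECT region, SUM(total) FROM orders GROUP BY region ORDER BY region"}}'))
    print(dispatch('{"tool": "word_count", "args": {"text": "agents need CPUs too"}}'))
```

A production sandbox would also isolate each call in its own process or container and enforce timeouts, which this sketch leaves out.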

This points to a broader truth about modern AI workloads: they aren't purely the parallel computations that GPUs excel at. Many AI tasks involve sequential operations, data retrieval, and branching decision logic that run better on traditional CPU architectures. With Vera, Nvidia is addressing this gap in the AI computing stack.
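One standard way to see why the serial parts matter is Amdahl's law. This is a general model, not something taken from Nvidia's announcement: if a fraction p of a workload is accelerated by a factor s and the rest runs at its original speed, the overall speedup is 1 / ((1 - p) + p / s). The numbers below are illustrative assumptions, not measurements of any real agent workload.

```python
def amdahl_speedup(parallel_fraction: float, accel: float) -> float:
    """Overall speedup when only `parallel_fraction` of the work is sped up by `accel`."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be in [0, 1]")
    serial = 1.0 - parallel_fraction
    return 1.0 / (serial + parallel_fraction / accel)

# Illustrative only: 90% of the work runs 100x faster on a GPU,
# while the remaining 10% (tool calls, queries, compilation) stays serial.
print(round(amdahl_speedup(0.9, 100.0), 2))   # 9.17
# Even an effectively infinite accelerator caps out near 1 / 0.1 = 10x.
print(round(amdahl_speedup(0.9, 1e12), 2))    # 10.0
```

Once the GPU-accelerated portion is fast, the serial remainder dominates the runtime, which is where a faster CPU pays off.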

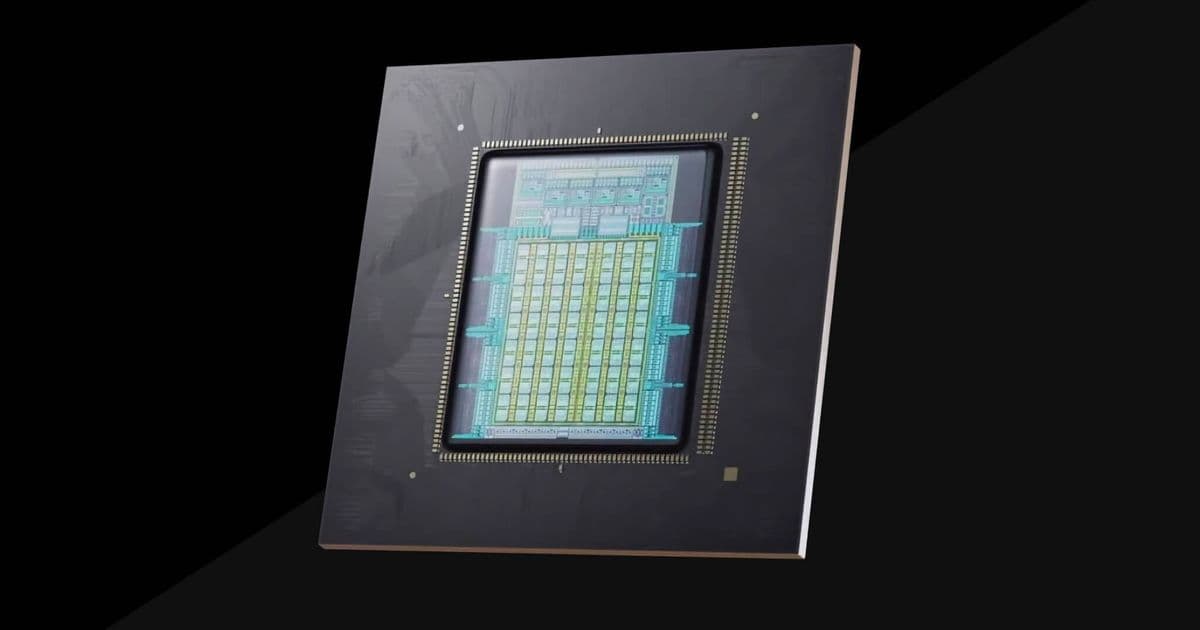

Vera's Technical Architecture

Vera is a significant evolution of Nvidia's previous Grace CPU. The processor has 88 custom Olympus Arm cores with simultaneous multithreading support, a much wider memory bus, and faster chip-to-chip interconnects. These choices aren't arbitrary: each targets a bottleneck in AI workloads, whether moving data in and out of memory or between chips.

One of Vera's most notable features is its use of LPDDR5X memory, which is more typically found in laptops than in servers. This unconventional choice lets each Vera CPU support up to 1.5 TB of memory with 1.2 TB/s of bandwidth per socket. For comparison, Intel's top-end Xeon 6900P processors max out at 825 GB/s, and AMD's Turin-based Epyc processors reach 560 to 600 GB/s.
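The per-socket figures quoted above can be compared directly. This quick check uses only the numbers in this article and assumes decimal units (1 TB = 1,000 GB).

```python
# Per-socket peak memory bandwidth in GB/s, as quoted in this article.
VERA_BW = 1200.0            # 1.2 TB/s (decimal units assumed)
INTEL_6900P_BW = 825.0
AMD_TURIN_BW = (560.0, 600.0)

VERA_CAPACITY_GB = 1500.0   # up to 1.5 TB per socket

print(f"Vera vs Intel 6900P: {VERA_BW / INTEL_6900P_BW:.2f}x")  # 1.45x
for amd in AMD_TURIN_BW:
    print(f"Vera vs AMD Turin at {amd:.0f} GB/s: {VERA_BW / amd:.2f}x")  # 2.14x, then 2.00x

# Time to read the full 1.5 TB once at peak bandwidth (real workloads sustain less).
print(f"Full-memory sweep at peak: {VERA_CAPACITY_GB / VERA_BW:.2f} s")  # 1.25 s
```

These are peak figures on every side, so treat the ratios as upper-bound comparisons rather than measured performance.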

Nvidia claims Vera delivers 3x more memory bandwidth and 1.5x the performance per core compared to contemporary x86 processors. Since the per-socket figures above put Vera at roughly 1.5x Intel and 2x AMD, the bandwidth claim is presumably also measured per core. The company attributes much of this performance to its new Olympus Arm cores, which feature a 10-wide decode pipeline and a "neural branch predictor" that can make two branch predictions per cycle. More accurate prediction means fewer mispredictions, each of which forces the core to flush its pipeline and discard speculative work, so in theory it raises overall performance.
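The article doesn't describe how Olympus's "neural" predictor works internally. The classic design that goes by that name is the perceptron predictor of Jiménez and Lin: it keeps a small vector of signed weights per branch, takes the dot product with recent global branch history, and predicts "taken" when the sum is non-negative. The sketch below implements that textbook scheme purely as an illustration. The table size and history length are arbitrary assumptions, and this is not a description of Nvidia's hardware.

```python
from collections import deque

class PerceptronPredictor:
    """Textbook perceptron branch predictor (illustrative parameters, not Nvidia's design)."""

    def __init__(self, table_size: int = 64, history_len: int = 8):
        self.table_size = table_size
        # One weight vector per table entry: a bias weight plus one weight per history bit.
        # Real hardware uses small saturating integers; Python ints are unbounded here.
        self.weights = [[0] * (history_len + 1) for _ in range(table_size)]
        # Global history of recent outcomes, encoded as +1 (taken) / -1 (not taken).
        self.history = deque([-1] * history_len, maxlen=history_len)
        # Training threshold heuristic from the original perceptron-predictor paper.
        self.threshold = int(1.93 * history_len + 14)

    def _output(self, pc: int) -> int:
        # Dot product of this branch's weights with the global history, plus the bias.
        w = self.weights[pc % self.table_size]
        return w[0] + sum(wi * hi for wi, hi in zip(w[1:], self.history))

    def predict(self, pc: int) -> bool:
        return self._output(pc) >= 0

    def update(self, pc: int, taken: bool) -> None:
        y = self._output(pc)
        t = 1 if taken else -1
        # Train on a misprediction, or when the output is not yet confident enough.
        if (y >= 0) != taken or abs(y) <= self.threshold:
            w = self.weights[pc % self.table_size]
            w[0] += t
            for i, hi in enumerate(self.history):
                w[i + 1] += t * hi
        self.history.append(t)

if __name__ == "__main__":
    bp = PerceptronPredictor()
    pattern = [True, True, True, False]  # loop branch: taken three times, then falls through
    hits = 0
    for i in range(1000):
        taken = pattern[i % 4]
        hits += bp.predict(0x40) == taken
        bp.update(0x40, taken)
    print(f"accuracy: {hits / 1000:.1%}")
```

On this loop pattern the outcome four branches back always equals the next outcome, so after a short warm-up the predictor learns a large weight on that history bit and stops mispredicting.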

The 256-CPU Liquid-Cooled Rack

The most impressive aspect of Vera's deployment is a new MGX reference design that packs up to 256 processors into a single liquid-cooled rack, providing more than 22,500 CPU cores and 400 TB of memory. The rack also includes 64 BlueField-4 data processing units (DPUs), making it a complete compute platform for AI workloads.
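The rack-level totals follow from the per-socket figures given earlier. The quick check below assumes every socket is the 88-core part with the maximum 1.5 TB of LPDDR5X. For the thread count it also assumes two hardware threads per core, since the article says Vera supports multithreading but doesn't give a per-core thread count.

```python
SOCKETS = 256
CORES_PER_SOCKET = 88
THREADS_PER_CORE = 2        # assumption: 2-way multithreading
MEM_PER_SOCKET_TB = 1.5     # maximum LPDDR5X per socket
DPUS = 64                   # BlueField-4 units per rack

cores = SOCKETS * CORES_PER_SOCKET
threads = cores * THREADS_PER_CORE
cpu_memory_tb = SOCKETS * MEM_PER_SOCKET_TB

print(f"cores: {cores:,}")                               # cores: 22,528
print(f"hardware threads: {threads:,}")                  # hardware threads: 45,056
print(f"CPU-attached memory: {cpu_memory_tb:.0f} TB")    # CPU-attached memory: 384 TB
print(f"CPUs per DPU: {SOCKETS // DPUS}")                # CPUs per DPU: 4
```

The core count matches the "more than 22,500" figure. CPU-attached memory comes to 384 TB, a little under the quoted 400 TB; the announcement as summarized here doesn't say whether the difference is rounding or memory elsewhere in the rack.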

Liquid cooling is essential at this density: conventional air cooling can't remove enough heat from 256 high-performance CPUs packed into one rack. The approach mirrors GPU deployments, where liquid cooling has become standard for high-density configurations. By extending it to CPUs, Nvidia is signaling that it expects datacenter computing to be liquid-cooled regardless of processor type.

Market Impact and Competition

Nvidia's entry into the CPU market represents a direct challenge to Intel and AMD's dominance. The company is making some aggressive claims about Vera's performance, and if even half of these claims prove accurate, Vera could significantly disrupt the datacenter CPU market.

The availability strategy is also telling. Vera will be offered in both single- and dual-socket configurations from major OEMs including Foxconn, Wistron, Dell, Lenovo, and HPE. Additionally, Nvidia's NVL8 HGX systems, which traditionally used Intel processors, will be offered with Vera CPUs for the Rubin generation.

Perhaps most importantly, several major cloud providers and hyperscalers have already committed to deploying Vera. Alibaba, ByteDance, Meta, Oracle, CoreWeave, Lambda, Nebius, and NScale have all announced plans to use the chips in their datacenters. Early adoption by players of this size suggests confidence in Vera's capabilities and market potential.

The Broader AI Infrastructure Landscape

Nvidia's Vera announcement comes amid broader shifts in AI hardware. Meta recently revealed plans to deploy Nvidia's standalone Grace CPUs at scale within its datacenters, indicating growing demand for CPU solutions that complement GPU deployments. Meanwhile, companies like Broadcom are developing custom AI chips, and memory prices continue to rise, making efficient memory utilization increasingly important.

Vera's memory design is relevant here. High bandwidth doesn't reduce how much RAM a system needs, but when RAM is expensive, getting more work out of every gigabyte becomes a competitive advantage. Vera's LPDDR5X approach, while unconventional for servers, could offer a compelling balance of performance and cost.

What This Means for the Industry

Nvidia's move into CPUs represents more than just another product launch. It signals the company's ambition to control the entire AI infrastructure stack, from processors to networking to software frameworks. This vertical integration could provide significant advantages in optimizing AI workloads across the entire pipeline.

For Intel and AMD, Vera represents a credible threat. These companies have dominated the CPU market for decades, but Nvidia's entry brings several advantages: deep AI expertise, a strong software ecosystem, and the ability to tightly integrate CPU and GPU technologies. If Vera delivers on its promises, it could force both companies to accelerate their own AI-focused CPU developments.

For customers, Vera offers an alternative to the traditional x86 ecosystem. This competition could drive innovation and potentially lower costs, though the initial premium for cutting-edge technology may limit early adoption to large cloud providers and hyperscalers.

Looking Ahead

The success of Vera will depend on real-world performance and ecosystem support. Nvidia's claims are impressive, but the true test will be how well Vera handles diverse AI workloads in production environments. The company's track record with GPUs suggests it has the engineering capability to deliver, but the CPU market is notoriously competitive and unforgiving of shortcomings.

What's clear is that the AI hardware landscape is becoming more complex and competitive. As AI workloads evolve beyond training and serving models to include sophisticated agentic systems, the computing requirements are expanding beyond what GPUs alone can provide. Vera represents Nvidia's bet that the future of AI infrastructure requires a balanced approach combining specialized GPUs with high-performance, AI-optimized CPUs.

The next few years will reveal whether this bet pays off, but one thing is certain: the CPU market just got a lot more interesting.
