OpenAI’s new WebSocket-based execution mode for the Responses API cuts latency by up to 40% for agentic workflows by replacing repeated HTTP round trips with persistent bidirectional connections, a shift already adopted by developer tools including Vercel’s AI SDK, Cline, and Cursor.

OpenAI has launched a WebSocket-based execution mode for its Responses API, replacing the traditional HTTP request-response pattern to reduce latency and improve throughput for agentic workflows. The update targets a key bottleneck in multi-step AI systems: each reasoning step, tool call, or follow-up query previously required its own network round trip, which added significant overhead even as model inference speeds improved.

The shift was analyzed by InfoQ contributor Leela Kumili, a lead software engineer at Starbucks specializing in cloud-native distributed systems and AI adoption.

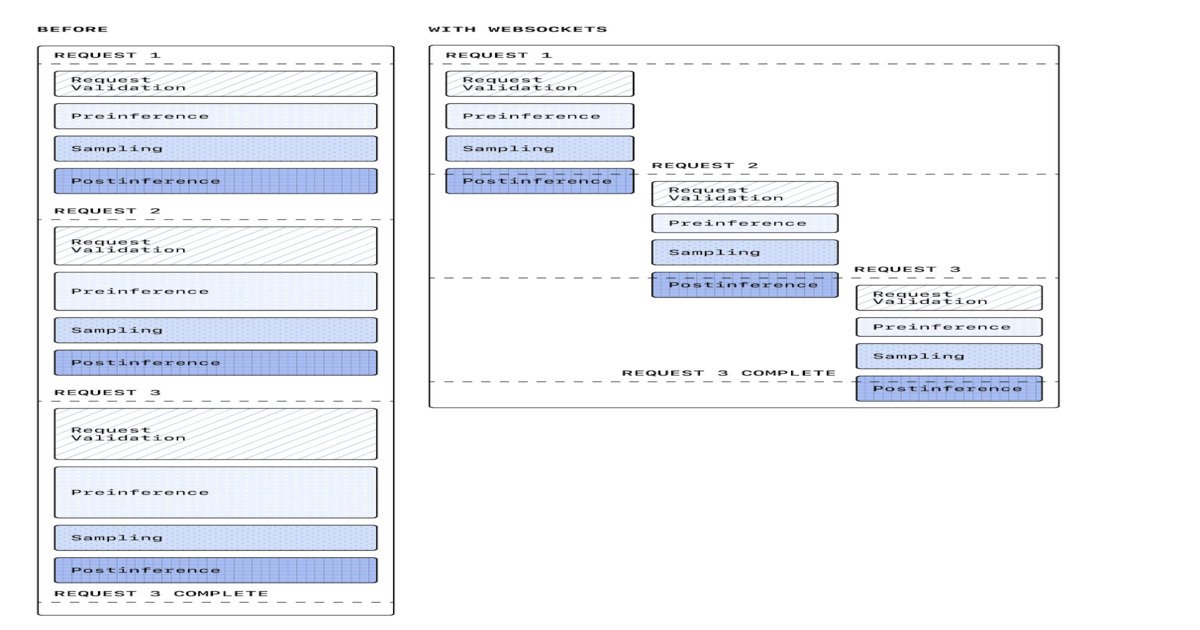

Traditional agentic workflows using the Responses API required a separate HTTP request for each step in a sequence. For a coding agent tasked with fixing a bug across multiple files, this might include steps like reading file contents, running static analysis tools, generating a fix, and validating the result. Each step triggered a new HTTP request with its own per-request overhead, plus TLS negotiation and connection setup whenever a connection could not be reused through HTTP keep-alive. As inference latency dropped for newer models, these network round trips became the dominant source of delay for many workflows.
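To make the round-trip argument concrete, the following is a rough back-of-the-envelope model, not a measurement. The function name `workflow_latency_ms` and every number in it are assumptions chosen purely for illustration; they do not come from OpenAI or from the tools cited in this article.

```python
def workflow_latency_ms(steps, inference_ms, per_step_overhead_ms, setup_ms=0):
    """Total wall-clock time for a workflow whose steps run one after another.

    setup_ms is paid once; per_step_overhead_ms is paid on every step.
    """
    return setup_ms + steps * (inference_ms + per_step_overhead_ms)


# Illustrative values only (assumptions, not measurements).
STEPS = 20

# Per-request pattern: every step pays network and request overhead.
http_total = workflow_latency_ms(STEPS, inference_ms=300, per_step_overhead_ms=200)

# Persistent connection: one-time setup, then a much smaller per-step cost.
ws_total = workflow_latency_ms(
    STEPS, inference_ms=300, per_step_overhead_ms=20, setup_ms=200
)

saving = 1 - ws_total / http_total
print(http_total, ws_total)  # 10000 6600
```

Under these invented numbers the per-step overhead accounts for 40% of the per-request total, which is why shrinking it matters more as inference itself gets faster.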

The traditional HTTP flow for these workflows is shown below, with each step requiring a separate request-response cycle:

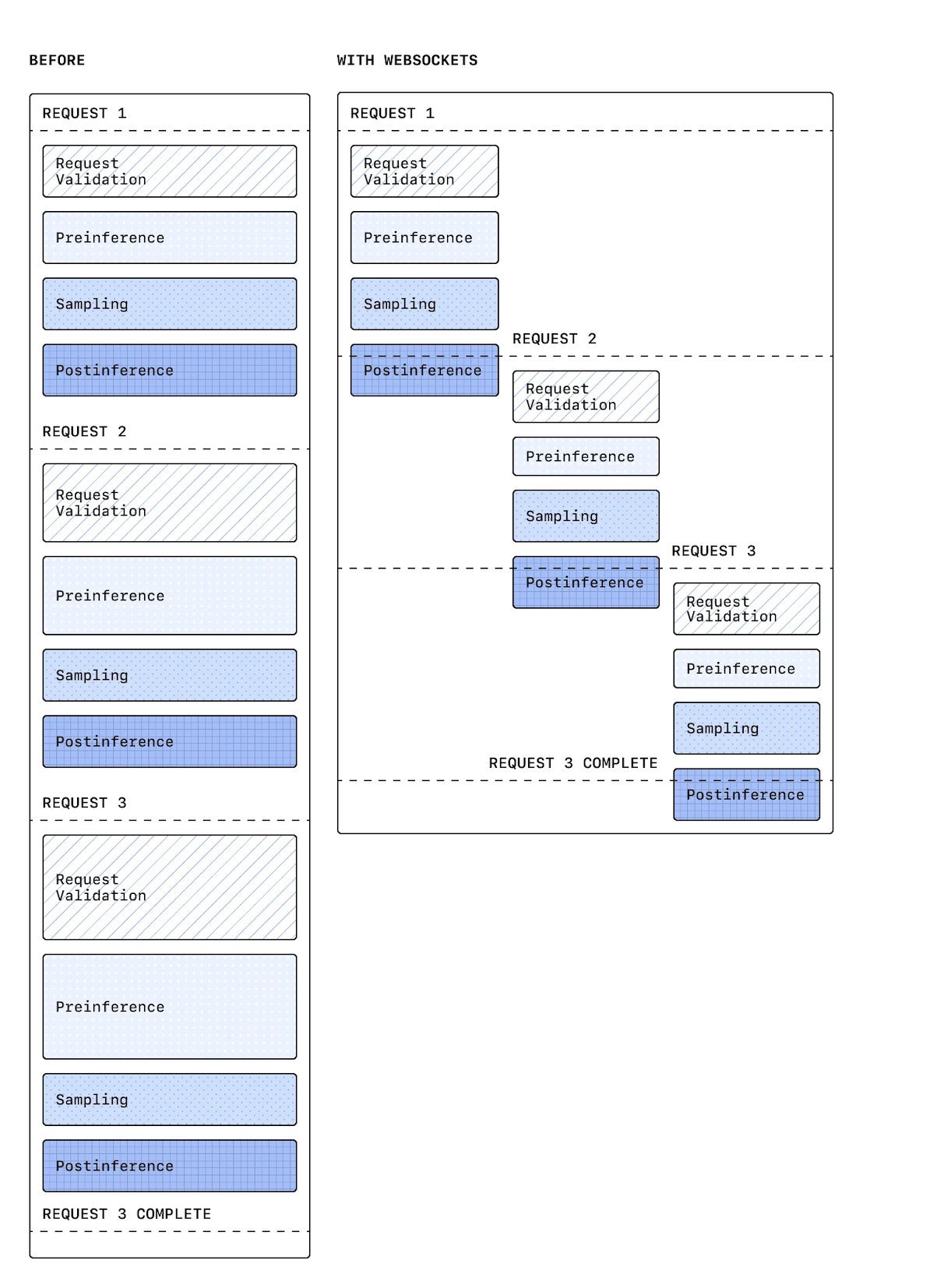

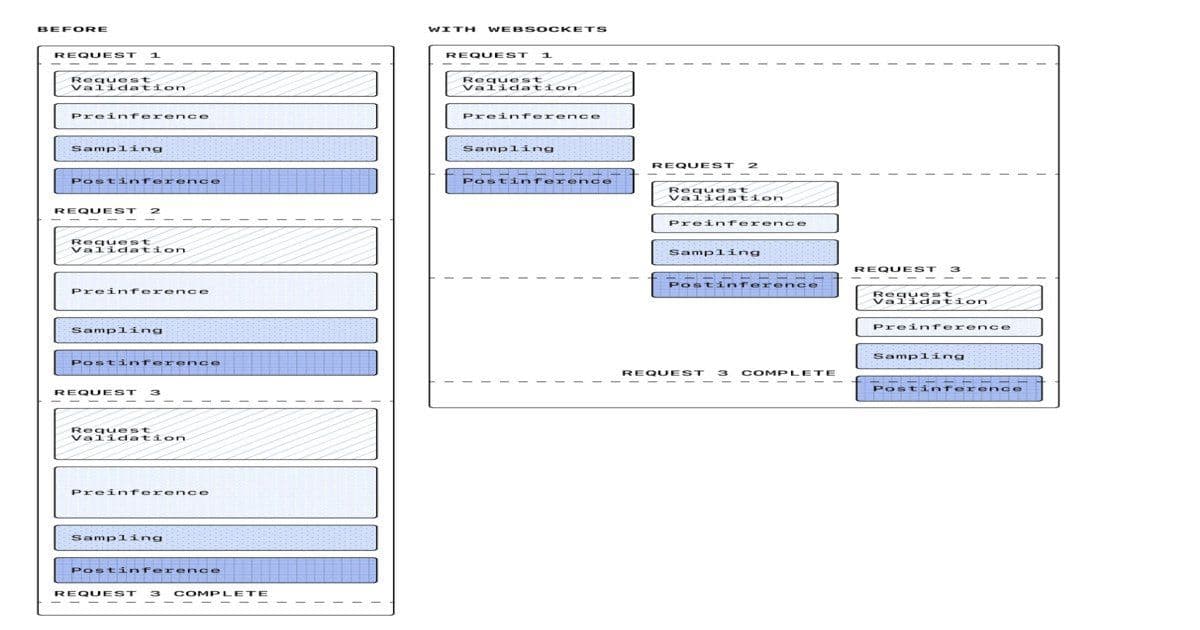

The new WebSocket mode uses a persistent, bidirectional connection between client and server, eliminating repeated handshakes for multi-step workflows. Clients can warm up connections by sending system prompts and tool definitions during an initial setup phase, avoiding cold starts for subsequent steps. The mode is compatible with OpenAI's Zero Data Retention (ZDR) program, which ensures no request data is stored on OpenAI's servers, a critical requirement for enterprise users with strict data compliance needs.

Gabriel Chua, a developer experience engineer at OpenAI, noted that the warm-up phase allows teams to preconfigure sessions with all necessary context before starting a workflow. "You can warm up the connection by sending your system prompt and tool definitions first," Chua said. "It's Zero Data Retention (ZDR) compatible."
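The warm-up idea can be sketched as follows. This is a minimal illustration that assumes a hypothetical frame format: the `session.warmup` and `step` message types, the field names, and the `FakeConnection` and `AgentSession` classes are all invented for this example and are not OpenAI's actual wire protocol or SDK. The fake connection only records frames, so the sketch runs without a network.

```python
import json
from dataclasses import dataclass, field


@dataclass
class FakeConnection:
    """Stands in for a real WebSocket; records frames instead of sending them."""
    sent: list = field(default_factory=list)

    def send(self, frame: str) -> None:
        self.sent.append(frame)


class AgentSession:
    """Sends static context once, then only per-step input (hypothetical format)."""

    def __init__(self, conn, system_prompt, tools):
        self.conn = conn
        self.system_prompt = system_prompt
        self.tools = tools
        self.warmed_up = False

    def warm_up(self) -> None:
        # Hypothetical frame shape: ship the prompt and tool definitions up front.
        self.conn.send(json.dumps({
            "type": "session.warmup",
            "system_prompt": self.system_prompt,
            "tools": self.tools,
        }))
        self.warmed_up = True

    def run_step(self, user_input: str) -> None:
        if not self.warmed_up:
            self.warm_up()
        # Later frames carry only the new input, not the static context.
        self.conn.send(json.dumps({"type": "step", "input": user_input}))


conn = FakeConnection()
session = AgentSession(conn, "You are a coding agent.", [{"name": "read_file"}])
for task in ["read main.py", "run linter", "propose fix"]:
    session.run_step(task)

print(len(conn.sent))  # 4: one warm-up frame plus three step frames
```

The point of the design is that the system prompt and tool definitions cross the wire once per session rather than once per step.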

The persistent session flow enabled by WebSockets is shown below, with a single connection supporting all steps in a workflow:

OpenAI reports up to 40% latency reduction in early production use cases, along with sustained throughput of 1,000 transactions per second (TPS) and burst capacity of up to 4,000 TPS. Adoption has been rapid among developer tooling providers. Vercel's AI SDK integrated the WebSocket mode and observed similar 40% latency reductions in testing. Cline, a coding agent extension, saw a 39% improvement in multi-file workflow latency, while Cursor, an AI-powered IDE, reported gains of up to 30% across common coding tasks.

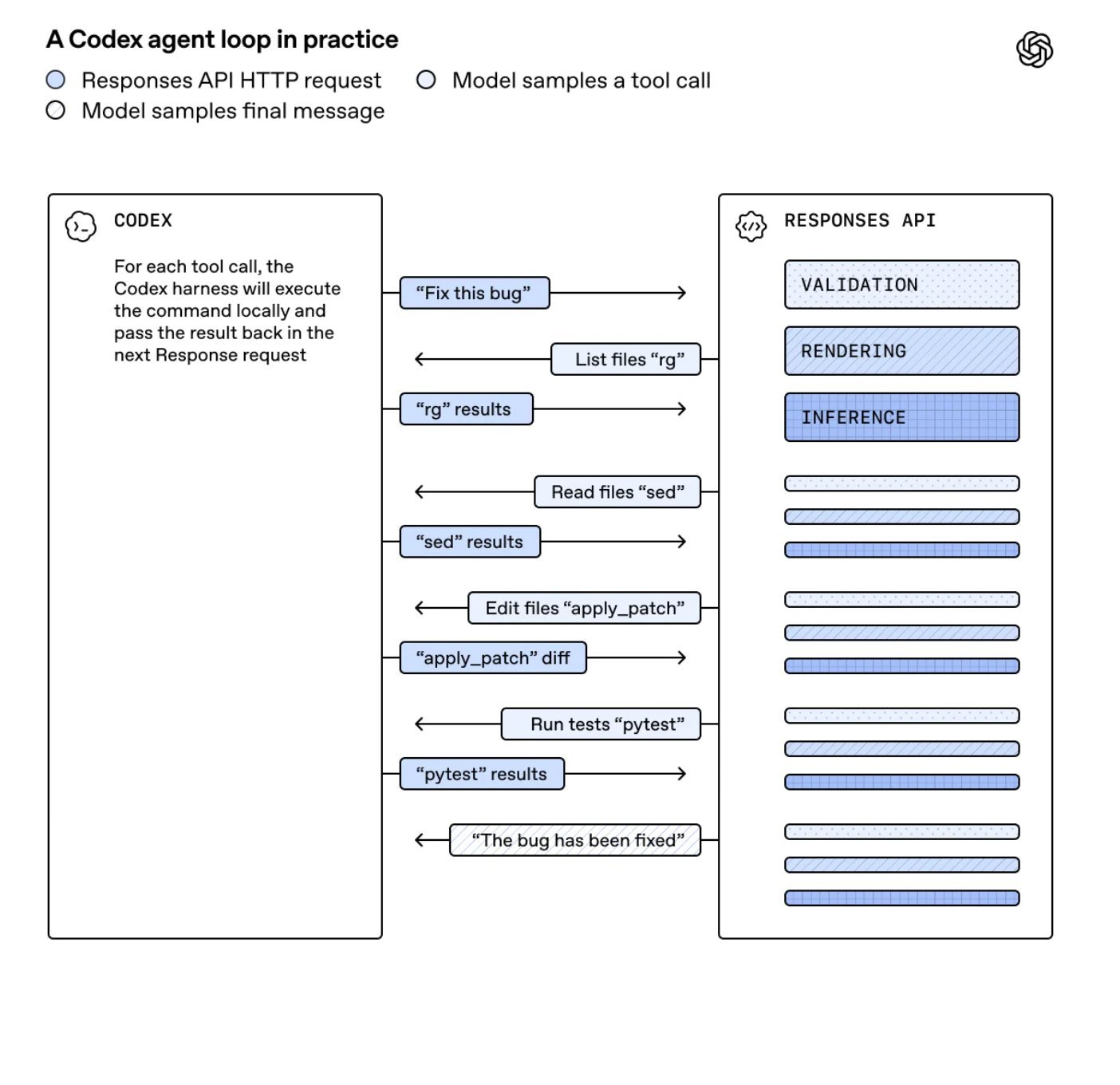

OpenAI's own Codex coding agent has already migrated most of its Responses API traffic to the WebSocket mode after a two-month alpha testing cycle with select partners. The company says the feature is now production-ready for qualified users, though it remains in alpha status for general access.

The update reflects a broader shift in AI system design, where transport layer optimizations are increasingly seen as critical to end-to-end performance, alongside model-level improvements. For years, most effort to speed up agentic systems focused on reducing inference time, quantizing models, or optimizing prompt design. The WebSocket mode highlights that connection management and communication patterns play an equally important role for high-performance workflows.

This aligns with long-standing patterns in distributed systems, where persistent connections reduce overhead for stateful, multi-step interactions. Kevin Cho, an engineer at Microsoft, noted that the shift brings AI systems back to core distributed systems challenges. "Going back to the original software stack problems. websockets and stateful connections," Cho said.

Ofek Shaked, a developer who builds agentic tools, described the update as a major practical improvement. "WebSockets for agent state is such an obvious but huge win. No more cold starts killing your multi-tool chains."

However, the shift introduces new system design considerations. Unlike stateless HTTP requests, WebSocket connections require explicit lifecycle management. Teams must handle connection drops, implement reconnection logic with state recovery, and manage backpressure when streaming responses exceed client processing capacity. High-concurrency deployments also need infrastructure capable of managing thousands of persistent connections, which differs from the stateless HTTP scaling patterns many teams are used to.
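One way to approach reconnection with state recovery is sketched below, under stated assumptions. The `connect(resume_from=...)` callable, the `ConnectionDropped` exception, and the `FlakyServer` test double are hypothetical names invented for this example; a real client would substitute its own WebSocket library and whatever resume mechanism the server supports. The sketch keeps a client-side checkpoint of completed steps and retries with exponential backoff and jitter.

```python
import random
import time


class ConnectionDropped(Exception):
    """Raised by the (hypothetical) connection when the socket is lost."""


def backoff_delays(base=0.5, cap=30.0, attempts=5, rng=random.random):
    """Yield exponentially growing delays, capped, scaled by random jitter."""
    for attempt in range(attempts):
        yield rng() * min(cap, base * 2 ** attempt)


def run_workflow(steps, connect, sleep=time.sleep, max_attempts=5):
    """Run steps in order; after a drop, resume from the first unfinished step."""
    results = []  # client-side checkpoint: one result per completed step
    delays = backoff_delays(attempts=max_attempts)  # retry budget for the whole run
    while len(results) < len(steps):
        try:
            conn = connect(resume_from=len(results))
            for step in steps[len(results):]:
                results.append(conn.run(step))
        except ConnectionDropped:
            delay = next(delays, None)
            if delay is None:
                raise  # retry budget exhausted; surface the failure
            sleep(delay)
    return results


class FlakyServer:
    """Test double (hypothetical): drops the connection on its third step call."""

    def __init__(self):
        self.calls = 0
        self.resume_points = []

    def connect(self, resume_from):
        self.resume_points.append(resume_from)
        return self

    def run(self, step):
        self.calls += 1
        if self.calls == 3:
            raise ConnectionDropped(step)
        return f"done:{step}"


server = FlakyServer()
results = run_workflow(
    ["read", "lint", "fix", "test"], server.connect, sleep=lambda s: None
)
print(server.resume_points)  # [0, 2]
```

Because the checkpoint lives on the client, the step that was in flight when the connection dropped is re-run after reconnecting, so steps with side effects would need to be idempotent for this pattern to be safe.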

OpenAI has not announced any pricing changes tied to the WebSocket mode, with billing remaining aligned to existing token-based models for the Responses API. The company plans to expand alpha access to more users in the coming months, with general availability expected later in 2026.
