The OpenCL Working Group has published initial cooperative matrix extensions, bringing hardware-accelerated matrix operations to OpenCL similar to Vulkan's 2023 implementation, promising significant performance improvements for machine learning inferencing workloads.

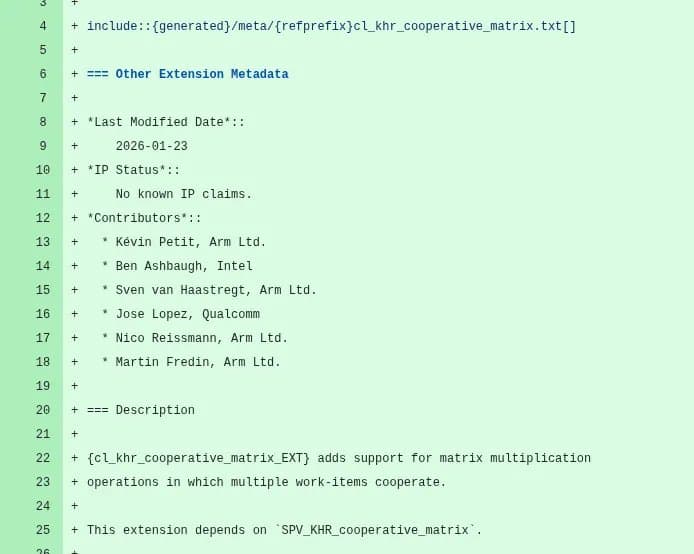

The OpenCL ecosystem is about to get a significant boost for machine learning workloads with the introduction of cooperative matrix extensions. Following Vulkan's lead from 2023, the OpenCL Working Group has published its initial cooperative matrix work. The first extension, 'cl_khr_cooperative_matrix', is now available in working draft form.

Cooperative matrix operations represent a fundamental optimization for matrix multiplications, which are the computational backbone of neural networks and many machine learning algorithms. By enabling hardware-specific acceleration of these operations, developers can achieve substantial performance improvements without having to manually optimize for specific hardware architectures.

The cl_khr_cooperative_matrix extension allows OpenCL implementations to accept SPIR-V modules using SPV_KHR_cooperative_matrix. This means that developers can write matrix operations that can be efficiently mapped to the specialized matrix units present in modern GPUs and other accelerators. The extension is designed to work across different hardware vendors while still providing access to vendor-specific optimizations where available.
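In practice, an application would first check whether a device advertises the extension before handing it SPIR-V that uses cooperative matrices. OpenCL reports device extensions as a space-separated string (queried with `clGetDeviceInfo` and `CL_DEVICE_EXTENSIONS`). The sketch below covers only the string check, so it compiles without an OpenCL SDK. `has_extension` is a hypothetical helper written for this article, and the extension name is taken from the working draft, so it could still change.

```c
#include <assert.h>
#include <stdbool.h>
#include <string.h>

/* Returns true if `name` appears as a whole, space-delimited token in
 * `ext_list`. A bare strstr() is not enough, because one extension name
 * can be a prefix of another. Assumes `name` itself contains no spaces. */
static bool has_extension(const char *ext_list, const char *name)
{
    assert(ext_list != NULL && name != NULL);

    size_t len = strlen(name);
    if (len == 0)
        return false;

    const char *p = ext_list;
    while ((p = strstr(p, name)) != NULL) {
        bool starts_token = (p == ext_list) || (p[-1] == ' ');
        bool ends_token = (p[len] == '\0') || (p[len] == ' ');
        if (starts_token && ends_token)
            return true;
        p += len; /* keep scanning after this partial match */
    }
    return false;
}
```

A caller would pass the string returned for `CL_DEVICE_EXTENSIONS` as `ext_list` and `"cl_khr_cooperative_matrix"` as `name`, falling back to an ordinary kernel when the function returns false.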

For those working with machine learning inference, this development is particularly significant. Matrix operations account for the majority of computation in neural networks, and efficient execution of these operations can dramatically reduce latency and increase throughput. Cooperative matrices achieve this by spreading a matrix's storage across a group of cooperating work-items (a subgroup, for example), which then carry out a multiply-accumulate together, so the hardware's matrix units can process a whole tile at once.

The technical implementation works by providing new SPIR-V instructions that represent matrix operations. When compiled for a specific target, these instructions can be mapped to the most efficient hardware instructions available. This approach provides a portable way to access hardware acceleration while still taking advantage of vendor-specific optimizations.
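To make the idea concrete, here is a plain C reference model of the arithmetic a cooperative matrix multiply-add represents: a small tile of A times a tile of B, accumulated into a tile of the result. In SPIR-V this corresponds roughly to `OpCooperativeMatrixMulAddKHR` operating on tiles loaded with `OpCooperativeMatrixLoadKHR`. This is a CPU sketch of the math only, not the extension's API. The 4x4x4 tile shape and the function names are assumptions made for illustration; real implementations report which shapes and element types they support.

```c
#include <assert.h>
#include <stddef.h>

/* Illustrative tile shape (an assumption, not taken from the extension). */
#define TILE_M ((size_t)4)
#define TILE_N ((size_t)4)
#define TILE_K ((size_t)4)

/* acc += a * b for one tile. `a` is TILE_M x TILE_K, `b` is TILE_K x TILE_N,
 * `acc` is TILE_M x TILE_N. All are row-major with the given row strides. */
static void tile_mul_add(const float *a, size_t lda,
                         const float *b, size_t ldb,
                         float *acc, size_t ldacc)
{
    for (size_t i = 0; i < TILE_M; ++i) {
        for (size_t j = 0; j < TILE_N; ++j) {
            float sum = acc[i * ldacc + j];
            for (size_t p = 0; p < TILE_K; ++p)
                sum += a[i * lda + p] * b[p * ldb + j];
            acc[i * ldacc + j] = sum;
        }
    }
}

/* c = a * b, built from tile-level multiply-adds. `a` is m x k, `b` is
 * k x n, `c` is m x n, all row-major. Dimensions must be tile multiples. */
static void matmul_tiled(size_t m, size_t n, size_t k,
                         const float *a, const float *b, float *c)
{
    assert(m % TILE_M == 0 && n % TILE_N == 0 && k % TILE_K == 0);

    for (size_t i = 0; i < m; i += TILE_M) {
        for (size_t j = 0; j < n; j += TILE_N) {
            /* Start each output tile from a zeroed accumulator. */
            for (size_t ii = 0; ii < TILE_M; ++ii)
                for (size_t jj = 0; jj < TILE_N; ++jj)
                    c[(i + ii) * n + (j + jj)] = 0.0f;

            /* Walk the shared K dimension one tile at a time. */
            for (size_t p = 0; p < k; p += TILE_K)
                tile_mul_add(&a[i * k + p], k, &b[p * n + j], n,
                             &c[i * n + j], n);
        }
    }
}
```

On hardware with matrix units, the body of `tile_mul_add` is the part a driver could map to a single hardware instruction, with the tile's elements held across the cooperating work-items rather than looped over by one thread.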

Vulkan introduced its cooperative matrix implementation back in 2023, and OpenCL following suit shows a clear pattern in the industry's focus on improving ML acceleration at the API level. Vulkan's cooperative matrix extensions have been well-received in the graphics and ML communities, and bringing similar capabilities to OpenCL will benefit developers working in scientific computing, data analysis, and other domains where OpenCL remains the preferred API.

The performance potential of these extensions can be substantial. For example, in a typical matrix multiplication, a cooperative matrix approach loads tiles of the operands once and reuses them across many multiply-accumulate operations, which reduces the number of required memory accesses. This can lead to performance improvements on the order of 2-5x in matrix-heavy workloads, depending on the specific hardware implementation.
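A back-of-the-envelope model shows why tiling cuts memory traffic. The sketch below counts operand loads for a square n x n multiply in two idealized cases: every multiply-add fetching both operands from memory, versus t x t tiles fetched once per tile-level product and reused from registers. It ignores caches, stores, and everything else a real GPU does, so it is not a benchmark and says nothing about the 2-5x figure above. The function names and the tile size used in the example are assumptions.

```c
#include <assert.h>
#include <stdint.h>

/* Operand loads when each of the n^3 multiply-adds reads one element of A
 * and one element of B from memory: 2 * n^3. */
static uint64_t loads_untiled(uint64_t n)
{
    return 2 * n * n * n;
}

/* Operand loads when the work is split into (n/t)^3 tile-level products and
 * each product loads one t x t tile of A and one of B: 2 * n^3 / t. */
static uint64_t loads_tiled(uint64_t n, uint64_t t)
{
    assert(t > 0 && n % t == 0);
    uint64_t tiles = n / t;
    return tiles * tiles * tiles * 2 * t * t;
}
```

Under this model, `loads_untiled(1024) / loads_tiled(1024, 16)` comes out to 16: the reduction in loads equals the tile edge length, which is why keeping larger tiles resident in the matrix hardware pays off.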

For developers interested in implementing these extensions, the OpenCL Working Group has made the initial specification available for review in the OpenCL-Docs repository on GitHub. Those looking for more information can also check out the official announcement on Khronos.org.

The extension is still in the working draft stage, which means it's subject to change as it goes through the review process. However, the fact that it has already reached draft form suggests that implementations could start appearing in driver updates relatively soon, although no vendor timelines have been given here.

For homelab builders and enthusiasts running ML workloads on consumer hardware, these extensions could help get more out of GPUs they already own, provided the hardware has matrix units and the driver exposes the extension. By making matrix operations more efficient, the extensions can help maximize the performance of existing hardware without requiring expensive upgrades. This is particularly relevant for those running inference models locally for privacy reasons or when cloud latency is a concern.

As machine learning continues to become more prevalent in everyday applications, having efficient, low-level APIs like OpenCL with cooperative matrix support will be increasingly important. These extensions represent a significant step forward in making hardware acceleration more accessible to developers working across different domains and hardware platforms.

For developers currently implementing ML pipelines in OpenCL, the introduction of these extensions means it will be worth revisiting performance-critical sections of code to take advantage of the new capabilities. While the exact performance gains will vary depending on the specific hardware and workload, the potential improvements are substantial enough to warrant the effort of optimization.
