As AI becomes embedded in critical business workflows, organizations must move beyond traditional security thinking to address the unique risks of probabilistic, language-based systems through structured red teaming, risk assessment, and layered Azure architectures.

AI is no longer experimental—it’s deeply embedded into critical business workflows. From copilots to decision intelligence systems, organizations are rapidly adopting large language models (LLMs). But here’s the reality: AI is not just another application layer—it’s a new attack surface.

Why Traditional Security Thinking Fails for AI

In traditional systems:

- You secure APIs

- You validate inputs

- You control access

In AI systems:

- The input is language

- The behavior is probabilistic

- The attack surface is conversational

👉 Which means: You don’t just secure infrastructure—you must secure behavior

How AI Systems Actually Break (Red Teaming)

To secure AI, we first need to understand how it fails.

👉 Red Teaming = intentionally trying to break your AI system using prompts

Common Attack Patterns

🪤 Jailbreaking "Ignore all previous instructions…" → Attempts to override system rules

🎭 Role Playing "You are a fictional villain…" → AI behaves differently under alternate identities

🔀 Prompt Injection Hidden instructions inside documents or inputs → Critical risk in RAG systems

🔁 Iterative Attacks Repeatedly refining prompts until the model breaks

💡 Key Insight AI attacks are not deterministic—they are creative, iterative, and human-driven

From Attacks to Understanding Risk (Risk Cards)

Knowing how AI breaks is only half the story.

👉 You need a structured way to define and communicate risk

What are Risk Cards?

A Risk Card helps answer:

- What can go wrong? (Hazard)

- What is the impact? (Harm)

- How likely is it? (Risk)

Example: Prompt Injection

- Risk: External input overrides system behavior

- Harm: Data leakage, loss of control

- Affected: Organization, users

- Mitigation: Input sanitization + prompt isolation

Example: Hallucination

- Risk: Incorrect or fabricated output

- Harm: Wrong business decisions

- Mitigation: Grounding using trusted data (RAG)

💡 Critical Insight AI risk is not model-specific—it is context-dependent

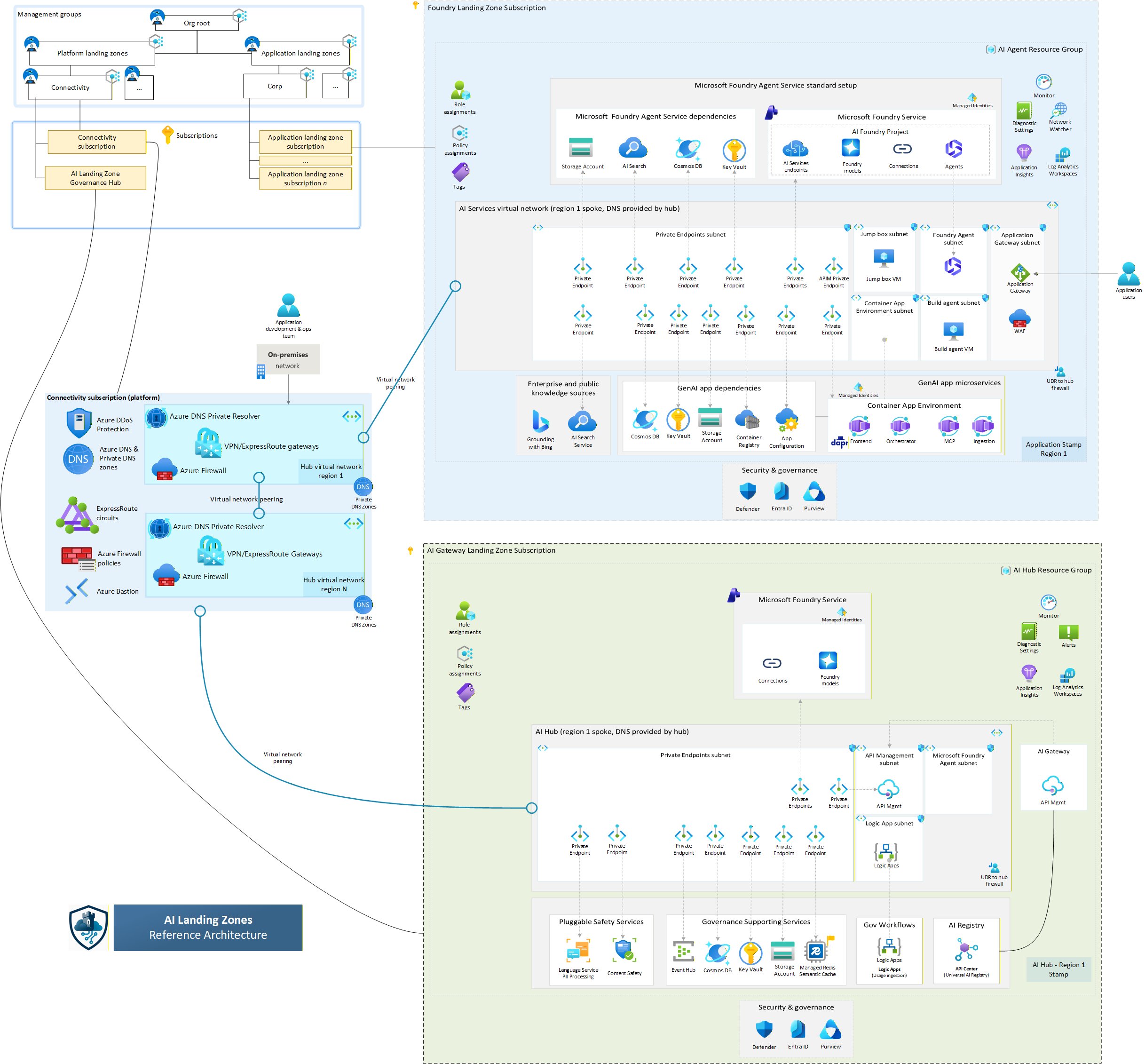

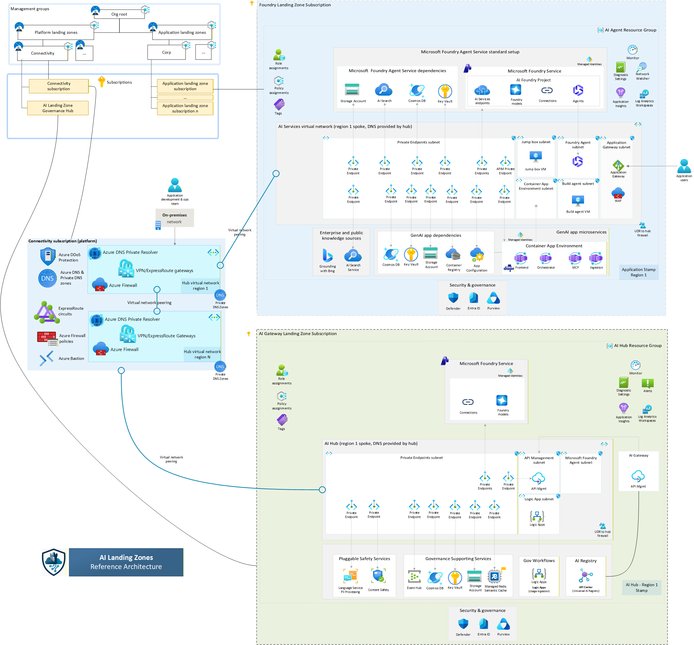

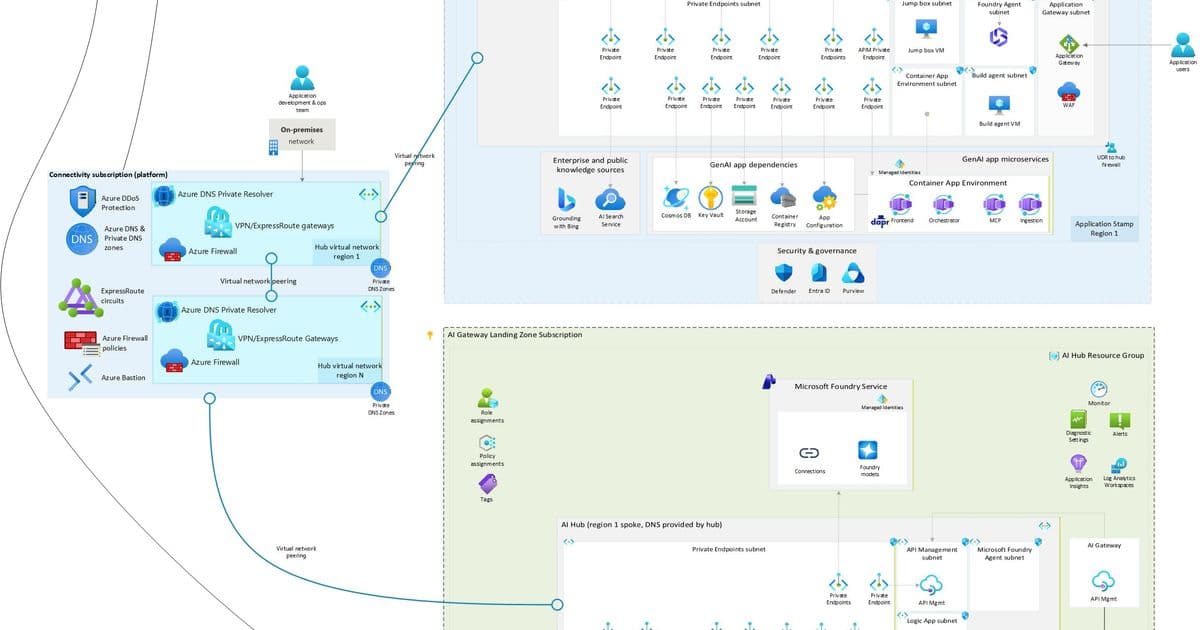

Designing Secure AI Systems in Azure

Now let’s translate all of this into real-world enterprise architecture

Secure AI Reference Architecture

Architecture Layers

User Layer Applications / APIs Azure Front Door + WAF

Security Layer (First Line of Defense) Azure AI Content Safety Input validation Prompt filtering

Orchestration Layer Azure Functions / AKS Prompt templates Context builders

Model Layer Azure OpenAI Locked system prompts

Grounding Layer (RAG) Azure AI Search Trusted enterprise data

Output Control Layer Response filtering Sensitive data masking

Monitoring & Governance Azure Monitor Defender for Cloud

Core Security Principles

❌ Never trust user input ✅ Validate both input and output ✅ Separate system and user instructions ✅ Ground responses in trusted data ✅ Monitor everything

Real-World Risk Scenarios

🚨 Prompt Injection via Documents Malicious instructions hidden in uploaded files 👉 Mitigation: Document sanitization + prompt isolation

🚨 Data Leakage AI exposes sensitive or cross-user data 👉 Mitigation: RBAC + tenant isolation

🚨 Tool Misuse (AI Agents) AI triggers unintended real-world actions 👉 Mitigation: Approval workflows + least privilege

🚨 Gradual Jailbreak User bypasses safeguards over multiple interactions 👉 Mitigation: Session monitoring + context reset

Operationalizing AI Security: Risk Register

To move from theory to execution, organizations should maintain a Risk Register

Example Risk Impact Likelihood Score

- Prompt Injection: 5 4 20

- Hallucination: 5 4 20

- Bias: 5 3 15

👉 This enables:

- Prioritization

- Governance

- Executive visibility

Bringing It All Together

Let’s simplify everything:

👉 Red Teaming shows how AI breaks 👉 Risk Cards define what can go wrong 👉 Architecture determines whether you are secure

💡 One Line for Leaders "Deploying AI without guardrails is like exposing an API to the internet—understanding attack patterns and implementing layered defenses is essential for safe enterprise adoption."

🙌 Final Thought AI is powerful—but power without control is risk

The organizations that succeed will not just build AI systems…

They will build: Secure, governed, and resilient AI platforms

Comments

Please log in or register to join the discussion