Spotify’s expansion of its AI-powered DJ feature to 75+ countries and four new languages raises the bar for personalized media experiences, creating concrete requirements for mobile developers building conversational AI features across iOS and Android platforms.

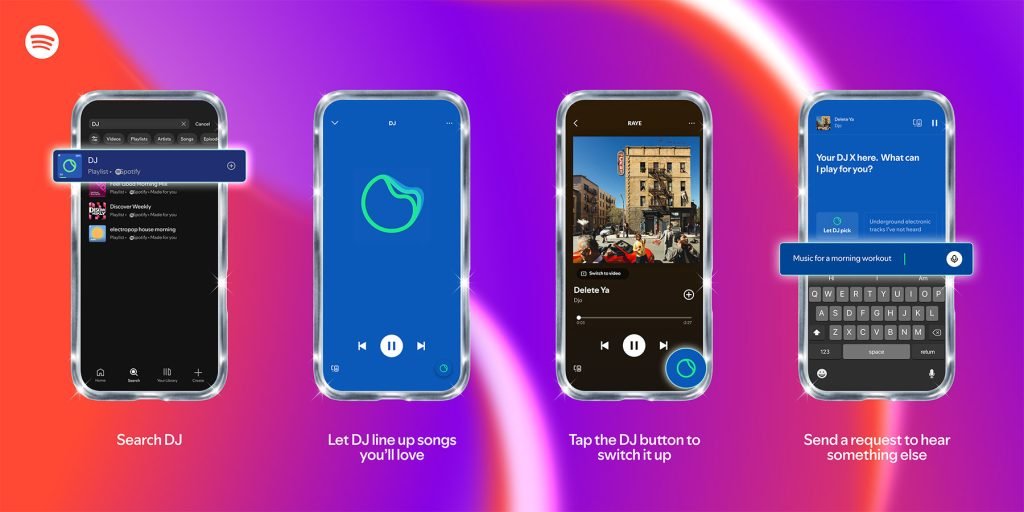

Spotify announced on May 7, 2026, that its AI-powered personal DJ feature is now available to Premium users in more than 75 countries, with added support for four new languages: French, German, Italian, and Brazilian Portuguese. The expansion builds on previous updates that added Spanish language support and typed request functionality, moving the feature closer to global availability for the streaming service’s paid user base. The DJ feature offers personalized music mixes paired with AI-generated commentary about tracks and artists, accessible via search or a dedicated home page shortcut in the Spotify iOS and Android apps.

Platform Update Details

The latest Spotify app updates for iOS and Android include the expanded DJ functionality, with strict platform requirements to support the new language packs and AI processing needs. For iOS, the update requires devices running iOS 16.0 or later, as it uses Apple’s Natural Language framework to process user requests, which added full support for French, German, Italian, and Brazilian Portuguese in iOS 17. Spotify’s iOS app is built with Xcode 16, using the iOS 18 SDK and Swift 6 for modern concurrency when handling API calls to its backend AI services. Voice transcription for user requests relies on Apple’s Speech framework, which requires both the NSMicrophoneUsageDescription and NSSpeechRecognitionUsageDescription keys in the app’s Info.plist so the app can request microphone and speech recognition access from users.

On Android, the update requires Android 10 (API level 29) or later, covering roughly 95% of active Android devices as of 2026. The app uses Google’s ML Kit for text processing, which added support for the four new languages in ML Kit 18.0. Spotify’s Android app is built with Android Studio Meerkat (2024.3), targeting API level 35 (Android 15), and uses Kotlin 2.0 with coroutines to manage asynchronous requests to its backend. Voice recognition uses Android’s built-in SpeechRecognizer class, which requires the RECORD_AUDIO permission in the app’s AndroidManifest.xml, with a runtime permission prompt on devices running Android 6.0 (API 23) or later.

The feature uses a hybrid cloud and on-device processing model: user request transcription happens on-device to reduce latency, while music curation and AI commentary generation are handled by Spotify’s cloud-based AI services. This requires the app to maintain a stable network connection for full functionality, with fallback behavior to play cached music queues if connectivity is lost. Custom text-to-speech voices for each regional DJ (Maïa for French, Ben for German, Alex for Italian, Dani for Brazilian Portuguese) are downloaded as separate asset packs, managed via Apple’s Asset Catalog and Android’s DownloadManager API respectively.
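The fallback behavior described above could be sketched roughly as follows. This is a minimal illustration, not Spotify’s implementation: the type names, the `planPlayback` function, and the choice to skip commentary while offline are all assumptions made for the example.

```typescript
// Hypothetical types for illustration; none of these names come from Spotify.
interface QueuedTrack {
  id: string;
  title: string;
}

// Outcome of the cloud curation request, as seen by the client.
type CloudOutcome =
  | { ok: true; tracks: QueuedTrack[]; commentary: string }
  | { ok: false; reason: "offline" | "timeout" | "server_error" };

interface PlaybackPlan {
  tracks: QueuedTrack[];
  commentary: string | null; // null when no AI commentary is available
  source: "cloud" | "cache";
}

// Prefer the cloud-curated queue; fall back to the cached queue on any failure.
// Assumption: commentary is simply skipped while the cloud service is unreachable.
function planPlayback(outcome: CloudOutcome, cachedTracks: QueuedTrack[]): PlaybackPlan {
  if (outcome.ok) {
    return { tracks: outcome.tracks, commentary: outcome.commentary, source: "cloud" };
  }
  return { tracks: cachedTracks, commentary: null, source: "cache" };
}

// Usage: a lost connection keeps the cached queue playing.
const cachedQueue: QueuedTrack[] = [{ id: "t1", title: "Cached Track" }];
const offlinePlan = planPlayback({ ok: false, reason: "offline" }, cachedQueue);
```

Keeping the decision in a pure function like this makes the fallback easy to unit test without a network; the actual request and cache storage would live elsewhere in the app.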

Cross-platform consistency is maintained despite Spotify’s use of separate native codebases for iOS and Android. Both client apps send identical request payloads to Spotify’s shared backend AI service, ensuring music queues and commentary are identical across platforms for the same user account. Localization for the new languages uses platform-standard resource systems: iOS uses Localizable.strings files for translated UI text, while Android uses strings.xml files in locale-qualified resource directories such as res/values-fr, res/values-de, res/values-it, and res/values-pt-rBR, with res/values holding the default strings. Voice pack assets are stored in platform-specific directories, with iOS bundling them in the app’s Assets catalog and Android storing them in res/raw.
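To illustrate what sending identical payloads from two native clients can involve, here is a small sketch of a shared payload builder. Spotify’s real request format is not public, so the `DjRequestPayload` shape, its field names, and the helper functions are hypothetical; the point is only that locale identifiers and whitespace are normalized before serialization.

```typescript
// Hypothetical payload shape; Spotify's real request format is not public.
interface DjRequestPayload {
  transcript: string;
  locale: string; // BCP 47 style tag such as "pt-BR"
  inputMode: "voice" | "text";
}

// Normalizes simple language or language-region identifiers ("pt_BR", "pt-br")
// to one form. Script subtags such as "zh-Hant-TW" are out of scope here.
function normalizeLocaleTag(raw: string): string {
  const parts = raw.trim().replace("_", "-").split("-");
  const language = parts[0].toLowerCase();
  return parts.length > 1 ? `${language}-${parts[1].toUpperCase()}` : language;
}

function buildDjRequest(
  transcript: string,
  rawLocale: string,
  inputMode: "voice" | "text",
): DjRequestPayload {
  // Fields are always assigned in the same order, so JSON.stringify output is stable.
  return {
    transcript: transcript.trim(),
    locale: normalizeLocaleTag(rawLocale),
    inputMode,
  };
}

// Usage: underscore-style and hyphen-style locale identifiers serialize identically.
const fromUnderscoreLocale = JSON.stringify(buildDjRequest("toca um samba ", "pt_BR", "voice"));
const fromHyphenLocale = JSON.stringify(buildDjRequest("toca um samba", "pt-BR", "voice"));
```

In a pair of native codebases the same normalization rules would have to be implemented once per platform, or moved to the backend, to get the same effect.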

For developers building similar features with cross-platform tools like React Native or Flutter, this split between shared backend logic and platform-specific client implementation is a common pattern. Cross-platform frameworks do not include built-in support for system-level speech recognition or ML processing, so developers must either use community libraries or write custom native modules. React Native developers can use the react-native-voice library to wrap native iOS and Android speech APIs, along with react-native-localize for handling localized strings. Flutter developers can use the speech_to_text package for voice recognition and flutter_localizations for translated content.
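One way to keep app logic independent of whichever speech package or native module is chosen is to hide it behind a small interface. The sketch below assumes a hypothetical `SpeechTranscriber` interface; it is not the API of react-native-voice or speech_to_text, and a real adapter would wrap one of those packages behind it.

```typescript
// Hypothetical interface; this is not the API of any speech package.
interface SpeechTranscriber {
  start(locale: string, onResult: (text: string) => void): void;
  stop(): void;
}

// Test double that replays a canned transcript instead of using a microphone.
class FakeTranscriber implements SpeechTranscriber {
  private canned: string;
  constructor(canned: string) {
    this.canned = canned;
  }
  start(_locale: string, onResult: (text: string) => void): void {
    onResult(this.canned);
  }
  stop(): void {}
}

// App-level logic depends only on the interface, so the native wrapper can be swapped.
class DjRequestController {
  readonly submitted: string[] = [];
  private transcriber: SpeechTranscriber;
  constructor(transcriber: SpeechTranscriber) {
    this.transcriber = transcriber;
  }
  startVoiceRequest(locale: string): void {
    this.transcriber.start(locale, (text) => this.submitTypedRequest(text));
  }
  submitTypedRequest(text: string): void {
    const cleaned = text.trim();
    if (cleaned.length > 0) this.submitted.push(cleaned); // ignore empty input
  }
}

// Usage: voice and typed requests share one submission path.
const controller = new DjRequestController(new FakeTranscriber("  spiele etwas Jazz "));
controller.startVoiceRequest("de-DE");
```

The fake implementation also gives the team a way to exercise request handling in unit tests, where no microphone or native runtime is available.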

A key trade-off for cross-platform development is balancing code reuse with platform-specific performance. Using a single cross-platform codebase reduces development time, but may require additional work to match the latency and integration quality of native apps, especially for real-time audio processing features like voice transcription. Teams building media apps with AI features should evaluate whether their target user base prioritizes cross-platform consistency or platform-specific performance when choosing a development approach.

Developer Impact

This expansion sets a new baseline for user expectations around AI-powered media features. As one of the most widely used streaming apps globally, Spotify’s DJ feature normalizes conversational, personalized AI interactions for music listeners, meaning users will increasingly expect similar functionality in competing apps. Mobile developers building music, podcast, or audio streaming apps should prioritize adding natural language request handling and personalized commentary to remain competitive, especially as Spotify rolls the feature out to more markets.

For developers working on existing apps, the update highlights the importance of planning for global localization early in the development cycle. Adding four new languages required Spotify to update not just in-app text, but also voice models, commentary scripts, and UI layouts to accommodate longer text strings in languages like German. Developers expanding to new markets should audit their app’s layout system to handle variable text lengths, and test voice models for accuracy across regional accents and dialects.
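A layout audit for variable text lengths can start with a simple automated check before any visual testing. The following sketch flags translations that exceed a per-key character budget; the function, the budget value, and the sample translations are illustrative assumptions, not Spotify’s strings.

```typescript
// locale -> translated text for a single UI string key
type LocalizedString = Record<string, string>;

interface OverflowRisk {
  key: string;
  locale: string;
  length: number;
  budget: number;
}

// Flags translations longer than a per-key character budget.
// Character count is only a rough proxy for rendered width; real checks
// should measure text with the target font and layout.
function findOverflowRisks(
  strings: Record<string, LocalizedString>,
  budgets: Record<string, number>,
): OverflowRisk[] {
  const risks: OverflowRisk[] = [];
  for (const [key, byLocale] of Object.entries(strings)) {
    const budget = budgets[key];
    if (budget === undefined) continue; // no budget defined for this key
    for (const [locale, text] of Object.entries(byLocale)) {
      if (text.length > budget) {
        risks.push({ key, locale, length: text.length, budget });
      }
    }
  }
  return risks;
}

// Usage: with a 12-character budget, the German and Brazilian Portuguese
// strings are flagged while the shorter ones pass.
const settingsRisks = findOverflowRisks(
  {
    settings_title: {
      en: "Settings",
      fr: "Paramètres",
      de: "Einstellungen",
      it: "Impostazioni",
      "pt-BR": "Configurações",
    },
  },
  { settings_title: 12 },
);
```

A check like this fits well in continuous integration, where it can catch new translations that are likely to truncate before they reach a device.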

Third-party developers using Spotify’s public Web API cannot access the AI DJ feature directly, as it is not exposed via public endpoints. However, the expansion signals Spotify’s increased investment in AI-driven personalization, suggesting future API updates may include more endpoints for user taste profiles and personalized recommendations that developers can integrate into their own apps.

Migration Path for Similar Features

Developers looking to add AI DJ functionality to their existing apps can follow a structured migration path to minimize disruption:

- Audit platform support: Verify that your app’s minimum SDK versions meet the requirements of the ML and speech frameworks you depend on. For iOS, this means supporting iOS 16.0 or later to use the Natural Language framework’s full language support. For Android, Android 10 (API 29) or later is required for ML Kit’s language features. Use feature flags to disable the AI DJ feature for users on unsupported OS versions.

- Choose an AI backend: Decide between cloud-based and on-device processing. Cloud-based designs, like Spotify’s model, run language processing on shared backend services; managed NLP APIs such as Google Cloud Natural Language or Amazon Comprehend support French, German, Italian, and Portuguese. On-device processing uses frameworks like Core ML on iOS or TensorFlow Lite across platforms, which improves privacy and latency but increases app size.

- Implement speech processing: For native apps, integrate Apple Speech and Android SpeechRecognizer. For cross-platform apps, add the relevant community packages and write custom native modules for any unsupported functionality, such as custom voice pack downloads.

- Add localization: Create resource files for all supported languages, including translated UI text, commentary scripts, and voice pack metadata. Test layouts with the longest expected text strings for each language to avoid truncation.

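- Handle permissions: Declare microphone and network access in platform-specific configuration files, with clear user-facing explanations for why each sensitive permission is needed. On iOS, include the NSMicrophoneUsageDescription and NSSpeechRecognitionUsageDescription keys in Info.plist. On Android, declare RECORD_AUDIO and INTERNET in AndroidManifest.xml; RECORD_AUDIO requires a runtime request on devices running API 23 or later, while INTERNET is granted at install time.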

- Test across platforms: Verify that voice requests, music playback, and AI commentary work consistently across iOS and Android devices, including different screen sizes, OS versions, and network conditions. Test voice recognition accuracy with regional accents for each supported language.
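The platform audit and permission steps above can be combined into a single gating check. The sketch below uses the minimum OS versions stated in this article; the type names, the remote flag parameter, and the assumption that typed requests remain available when the microphone is denied are hypothetical.

```typescript
// Minimums taken from the article; the flag and type names are hypothetical.
const MIN_IOS_MAJOR = 16;
const MIN_ANDROID_API = 29;

type DeviceProfile =
  | { platform: "ios"; osVersion: string } // e.g. "17.2.1"
  | { platform: "android"; apiLevel: number }; // e.g. 34

type InputMode = "voice" | "text";

function isDjSupported(device: DeviceProfile): boolean {
  if (device.platform === "ios") {
    const major = parseInt(device.osVersion.split(".")[0], 10);
    return major >= MIN_IOS_MAJOR; // NaN (unparseable version) compares false
  }
  return device.apiLevel >= MIN_ANDROID_API;
}

// Combines a remote feature flag, the OS check, and the microphone permission.
// Assumption: typed requests stay available when the microphone is denied.
function availableInputModes(
  device: DeviceProfile,
  flagEnabled: boolean,
  micGranted: boolean,
): InputMode[] {
  if (!flagEnabled || !isDjSupported(device)) return [];
  return micGranted ? ["voice", "text"] : ["text"];
}

// Usage: an Android 14 device with the microphone denied still gets typed requests.
const modesWithoutMic = availableInputModes({ platform: "android", apiLevel: 34 }, true, false);
```

Returning an empty list for unsupported devices lets the UI hide the feature entirely, while a partial list lets it degrade to text input instead of failing.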

As Spotify continues to expand its AI features, mobile developers will need to keep pace with growing user expectations for personalized, conversational interactions. The DJ feature’s expansion to 75+ countries provides a clear roadmap for integrating similar functionality across iOS and Android, with practical lessons for both native and cross-platform development teams.
