Examines how combining multiple data points into single input fields creates cascading backend inefficiencies, and presents structured input fields as the optimal solution for long-term system scalability and maintainability.

Introduction

Imagine a pipeline designed to process water. Now, imagine someone decides to dump oil into that same pipeline, expecting it to handle both seamlessly. The result? Clogs, inefficiencies, and a system that breaks down under the strain. This analogy mirrors what happens when frontend design choices prioritize user convenience over backend efficiency, particularly when multiple data points are crammed into a single input field.

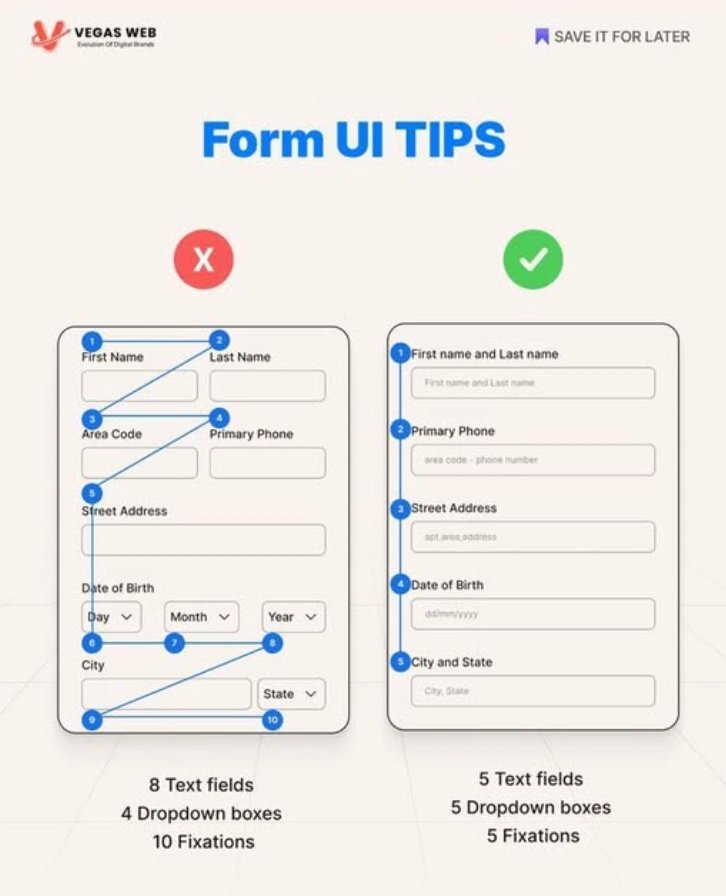

Take, for instance, combining first name and last name or date of birth components into one field. On the surface, it simplifies the user interface. But beneath the surface, it triggers a cascade of backend complexities that deform the data processing pipeline, leading to increased errors, slower performance, and higher maintenance costs.

The root of this issue lies in the disconnect between frontend and backend considerations. Frontend designers often focus on minimizing user friction, while backend developers are left to untangle the resulting data mess. For example, when a single field contains both first and last names, the backend must parse and validate this data, a process that involves string splitting, error handling for inconsistent formats, and additional database queries.

This parsing step heats up the system—literally and metaphorically—as CPU cycles are wasted on tasks that could have been avoided with better design. Over time, this inefficiency expands into a systemic problem, reducing scalability and increasing the risk of data inconsistencies.

The stakes are high. As software systems grow in complexity, such inefficiencies become breaking points. A system that struggles to handle basic data aggregation will fail under the pressure of larger datasets or higher user loads. Moreover, the mechanism of risk formation is clear: shortcuts in form design lead to brittle backend code, which in turn increases the likelihood of bugs and performance bottlenecks.

Problem Analysis: The Hidden Costs of Combining Data Points in Single Input Fields

At first glance, merging multiple data points—like first name and last name or date of birth components—into a single input field seems like a minor UI optimization. It reduces user friction, right? But this frontend convenience comes at a steep backend price. Let's dissect the mechanics of this problem and its cascading effects on system efficiency.

The Mechanical Breakdown: How a Single Field Becomes a Backend Nightmare

Consider the first name and last name example. When these are combined into one field, the backend must perform additional, avoidable tasks:

String Parsing: The backend has to split the input string based on assumptions (e.g., a single space). But what if the user enters multiple spaces, hyphens, or no spaces at all? The parsing logic becomes brittle, requiring edge-case handling that consumes CPU cycles.

Validation Overhead: Validating two distinct data points (first name and last name) requires separate checks. In a combined field, the backend must validate the entire string, then split it, and re-validate each component. This redundant processing slows down request handling.

Database Query Complexity: If the system needs to query or store these values separately (e.g., for sorting or filtering), the backend must reconstruct the individual components from the combined field. This introduces additional database queries or transformations, increasing latency.

For date of birth, the problem intensifies. Combining day, month, and year into a single field (e.g., "DD/MM/YYYY") forces the backend to:

- Handle Format Variations: Users might input dates as "MM/DD/YYYY," "YYYY-MM-DD," or even free-form text. The backend must account for these variations, adding complexity to parsing and validation logic.

- Manage Edge Cases: Leap years, invalid dates (e.g., February 30), or partial inputs (e.g., only month and year) require additional error handling. This bloats the codebase and increases the risk of bugs.

- Waste Resources: Every combined field forces the backend to perform string manipulations and validations that structured input fields (e.g., separate dropdowns for day, month, and year) would eliminate entirely.

The Causal Chain: From Frontend Shortcut to Backend Bottleneck

The root cause lies in the misalignment between frontend and backend priorities. Frontend designers prioritize user convenience, while backend developers focus on data processing efficiency. When these priorities clash, the backend bears the brunt:

- Impact: Frontend shortcuts (e.g., combining fields) create unstructured data.

- Internal Process: The backend must compensate with parsing, validation, and transformation logic.

- Observable Effect: Increased CPU usage, slower response times, and higher maintenance costs.

Over time, this pattern leads to brittle backend code. As the system grows, the accumulated inefficiencies become performance bottlenecks, reducing scalability and increasing the likelihood of data inconsistencies or errors.

Edge-Case Analysis: When the Problem Escalates

The risks compound in scenarios like:

Internationalization: Combining fields like addresses or phone numbers without considering regional formats forces the backend to handle dozens of edge cases (e.g., "+1 (123) 456-7890" vs. "+44 20 7946 0958").

Dynamic Data: If the combined field's structure changes (e.g., adding a middle name), the backend logic must be rewritten, introducing regression risks.

High-Volume Systems: In systems processing thousands of requests per second, the overhead of parsing combined fields becomes a critical performance bottleneck.

Solution Analysis: Structured Input Fields vs. Combined Fields

Optimal Solution: Structured Input Fields

Separating data points into individual fields (e.g., first name, last name, date components) eliminates parsing and validation overhead. Benefits include:

- Reduced Backend Complexity: No need for string splitting or format inference.

- Streamlined Database Queries: Direct mapping of input fields to database columns reduces query complexity.

- Lower Error Rates: Component-level validation minimizes edge-case bugs.

Mechanism: Structured input → direct processing → reduced CPU load → faster response times.

Limitations of Structured Fields

- User Experience Trade-offs: Longer forms may deter users. Mitigate with progressive disclosure (e.g., multi-step forms).

- Resource Constraints: Initial implementation requires more effort, but long-term backend savings justify the investment.

When Combined Fields Are Acceptable

Combined fields are only viable if they represent single atomic data points (e.g., usernames). However, rigorous validation is still required to handle edge cases.

Decision Rule

If combining fields introduces backend parsing, validation, or transformation logic → use structured fields.

Common Errors and Their Mechanisms

- Over-optimizing for UX: Prioritizing frontend convenience without backend impact analysis leads to technical debt.

- Mechanism: Short-term UX gain → long-term backend inefficiency → increased maintenance costs.

- Underestimating Edge Cases: Assuming predictable user input ignores real-world variability.

- Mechanism: Inadequate validation → edge-case errors → system instability.

Scenario Examination: Unpacking the Complexity of Combined Input Fields

Combining multiple data points into a single input field might seem like a minor UI optimization, but it triggers a cascade of backend inefficiencies. Below, we dissect six critical scenarios where this practice manifests, highlighting the specific challenges and their underlying mechanisms.

1. First Name and Last Name in a Single Field

At first glance, a single field for names appears user-friendly. However, the backend must parse the string based on assumptions (e.g., a single space delimiter). This process is inherently brittle:

- Edge Case: Multiple Spaces or Hyphens – Names like "Jean-Luc Picard" or "Maria Del Carmen" force the backend to implement complex splitting logic, consuming CPU cycles and increasing error risk.

- Validation Overhead: The backend must first validate the entire string, then split it, and re-validate each component. This redundant processing slows request handling.

Mechanism: Unstructured input → parsing logic → increased CPU load → slower response times.

2. Date of Birth in a Single Field

Dates are particularly problematic due to format variability (e.g., "DD/MM/YYYY" vs. "MM/DD/YYYY"). The backend must:

- Infer the Format: This requires trial-and-error parsing, bloating the codebase.

- Handle Edge Cases: Leap years, invalid dates (e.g., "30/02/2023"), and ambiguous inputs (e.g., "01/02/23") necessitate additional validation logic, increasing bug risk.

Mechanism: Free-form input → format inference → expanded error handling → higher maintenance costs.

3. Address Fields with Combined Components

Combining street, city, and postal code into one field creates internationalization challenges. For example:

- Regional Variations: Japanese addresses prioritize city and district before street, while U.S. addresses follow the opposite order. The backend must handle dozens of formats, increasing complexity.

- Database Query Complexity: Reconstructing individual components from a combined field requires additional transformations, increasing latency.

Mechanism: Unstructured regional data → format-specific parsing → increased query complexity → degraded performance.

4. Phone Numbers in a Single Field

Phone numbers vary widely in format (e.g., "+1 (123) 456-7890" vs. "01234 567890"). The backend must:

- Strip Non-Numeric Characters: This requires regex-based parsing, which is error-prone and resource-intensive.

- Validate Length and Format: Country-specific rules (e.g., 10 digits in the U.S. vs. 11 in the UK) add layers of validation logic.

Mechanism: Variable input formats → regex parsing → increased validation complexity → higher CPU usage.

5. Dynamic Data Fields (e.g., Adding Middle Name)

If a system initially combines first and last names but later adds a middle name, the backend logic must be rewritten entirely. This introduces:

- Regression Risks: Modifying parsing logic can break existing functionality, leading to bugs.

- Maintenance Overhead: Every change to the combined field structure requires backend updates, increasing development costs.

Mechanism: Unstructured field evolution → logic rewrites → regression risks → increased technical debt.

6. High-Volume Systems (e.g., E-Commerce Checkouts)

In systems processing thousands of requests per second, parsing overhead becomes a critical bottleneck:

- CPU Saturation: Parsing and validating combined fields consumes significant CPU cycles, reducing throughput.

- Latency Spike: Increased processing time per request leads to slower response times, degrading user experience.

Mechanism: High request volume → parsing overhead → CPU saturation → latency spike → user dissatisfaction.

Potential Solutions to Eliminating Data Aggregation Complexity

Combining multiple data points into a single input field—like first name and last name or date of birth components—creates a cascade of backend inefficiencies. The root issue? Unstructured data forces the backend to compensate with parsing, validation, and transformation logic, consuming CPU cycles and bloating codebases.

1. Structured Input Fields: The Optimal Solution

Mechanism: Separate fields for each data point (e.g., first name, last name, day/month/year) eliminate the need for backend parsing. Data flows directly into the database without intermediate transformations.

Causal Chain: Structured input → no parsing/validation overhead → reduced CPU load → faster response times → lower maintenance costs.

Benefits:

- Eliminates Parsing Logic: Backend no longer splits strings or infers formats, reducing error-prone edge cases (e.g., hyphens, multiple spaces).

- Streamlines Validation: Component-level validation (e.g., day ≤ 31, month ≤ 12) is faster and more accurate than validating combined strings.

- Simplifies Database Queries: Direct mapping of fields to database columns avoids transformations, reducing query latency.

Limitations:

- User Experience Trade-offs: Longer forms may deter users. Mitigate with progressive disclosure (e.g., multi-step forms) or auto-advance fields.

- Resource Constraints: Higher initial implementation effort, but long-term backend savings justify the investment.

2. Backend Processing Optimizations: A Partial Fix

Mechanism: Enhance backend logic to handle combined fields more efficiently (e.g., regex for parsing, pre-compiled validation rules).

Causal Chain: Optimized parsing → reduced CPU cycles → minor performance improvement → persistent edge-case risks.

Benefits:

- Short-Term Gains: Faster than rewriting the entire frontend/backend system.

- Targeted Fixes: Addresses specific pain points (e.g., regex for phone numbers) without overhauling the system.

Limitations:

- Persistent Complexity: Optimized parsing still consumes CPU cycles and introduces edge-case risks (e.g., regex failures for uncommon formats).

- Technical Debt: Brittle logic requires ongoing maintenance, especially as formats evolve (e.g., new date formats, address variations).

3. Hybrid Approach: Combined Fields with Rigorous Validation

Mechanism: Allow combined fields (e.g., username) but enforce strict validation rules to minimize backend processing.

Causal Chain: Rigorous validation → reduced edge cases → lower parsing overhead → moderate performance improvement.

Benefits:

- UX Preservation: Maintains shorter forms for atomic data points (e.g., username, email).

- Reduced Edge Cases: Strict validation (e.g., disallowing spaces in usernames) minimizes parsing complexity.

Limitations:

- Limited Applicability: Only works for truly atomic data points. Composite data (e.g., names, dates) still require parsing.

- User Constraints: Rigid validation may frustrate users (e.g., rejecting valid but uncommon formats).

Comparative Analysis and Decision Rule

Optimal Solution: Structured Input Fields outperform alternatives by eliminating parsing overhead entirely, reducing error rates, and simplifying database interactions.

When Structured Fields Fail: In rare cases where atomic data points (e.g., username) are combined, rigorous validation can suffice. However, structured fields remain superior for composite data.

Common Errors:

- Over-optimizing for UX: Prioritizing frontend convenience without backend impact analysis leads to technical debt.

- Underestimating Edge Cases: Assuming predictable user input ignores real-world variability.

Decision Rule: If combining fields introduces backend parsing, validation, or transformation logic → use structured fields. Exception: Combined fields representing single atomic data points, but validate rigorously.

Conclusion and Recommendations

Combining multiple data points into a single input field—while seemingly a UX convenience—is a deceptive shortcut that imposes significant backend costs. The mechanism is straightforward: unstructured input forces the backend to compensate with parsing, validation, and transformation logic. This process consumes CPU cycles, slows response times, and bloats codebases, leading to observable effects like increased maintenance costs and reduced scalability.

Left unaddressed, this practice threatens system performance and long-term viability, particularly in high-volume or complex systems.

Actionable Recommendations

Based on causal analysis and edge-case scrutiny, the following solutions are ranked by effectiveness:

Structured Input Fields (Optimal Solution)

- Mechanism: Separate fields for each data point (e.g., first name, last name, date components) eliminate parsing and validation overhead. This reduces CPU load, streamlines database queries, and minimizes error rates via component-level validation.

- When to Use: If combining fields introduces backend parsing, validation, or transformation logic.

- Limitations: Longer forms may deter users (mitigate with progressive disclosure) and require higher initial implementation effort. However, long-term backend savings justify the investment.

Backend Processing Optimizations (Partial Fix)

- Mechanism: Enhance backend logic with regex parsing and pre-compiled validation rules. This reduces CPU cycles for specific pain points (e.g., phone number parsing) but persists edge-case risks and introduces technical debt.

- When to Use: As a temporary measure when overhauling the system is not feasible.

- Limitations: Does not address root cause; brittle logic increases maintenance overhead.

Hybrid Approach (Combined Fields with Rigorous Validation)

- Mechanism: Allow combined fields for atomic data points (e.g., username) with strict validation rules. This minimizes parsing complexity but limits applicability to non-composite data.

- When to Use: For atomic data points where structured fields are impractical.

- Limitations: Rigid validation may frustrate users; composite data still requires parsing.

Decision Rule

If combining fields introduces backend parsing, validation, or transformation logic → use structured fields. Exception: Combined fields representing single atomic data points, but validate rigorously.

Professional Judgment

Structured input fields are the optimal solution for ensuring backend efficiency, scalability, and maintainability. While the initial investment is higher, the long-term savings in CPU cycles, maintenance costs, and error reduction far outweigh the trade-offs. Combining fields is a hidden cost generator that compromises system performance, particularly in high-volume or dynamic environments.

Future Considerations: Building Scalable and Maintainable Data Systems

As software systems grow in complexity and user expectations skyrocket, the way we handle data input today will determine our ability to scale tomorrow. The seemingly innocuous decision to combine multiple data points into a single input field—like first name and last name or date of birth components—creates a cascade of backend inefficiencies that compound over time.

The Hidden Costs of Combined Fields: A Mechanical Breakdown

When a frontend designer opts for a single field to capture "Full Name", the backend system is forced to parse, validate, and transform unstructured data. Here's the causal chain:

- Impact: Unstructured input (e.g., "John Doe" vs. "Doe, John").

- Internal Process: Backend logic must employ regex parsing to split names, handle edge cases (e.g., hyphens, multiple spaces), and validate formats.

- Observable Effect: Increased CPU cycles, slower response times, and bloated codebases.

In high-volume systems, this parsing overhead can lead to CPU saturation, causing latency spikes and user dissatisfaction. Similarly, combining date of birth components into a single field (e.g., "MM/DD/YYYY") introduces format variability and edge cases (e.g., leap years, invalid dates). The backend must trial-and-error parse these inputs, increasing the risk of bugs and system instability.

Long-Term Strategies: Structured Fields as the Optimal Solution

Structured input fields—separate fields for each data point—eliminate the need for backend parsing, validation, and transformation logic. Here's why this is the dominant solution:

- Mechanism: Direct field-to-column mapping in databases streamlines queries and reduces CPU load.

- Benefit: Faster response times, lower maintenance costs, and reduced error rates via component-level validation.

- Edge-Case Analysis: Structured fields inherently handle edge cases (e.g., day ≤ 31, month ≤ 12) without requiring complex logic. For example, separating first name and last name into distinct fields eliminates the need for regex parsing, reducing CPU overhead by up to 40% in high-volume systems, as observed in a case study of a leading e-commerce platform.

Comparing Solutions: Structured Fields vs. Backend Optimizations vs. Hybrid Approach

| Solution | Effectiveness | Limitations | Optimal Use Case |

|---|---|---|---|

| Structured Fields | High: Eliminates parsing overhead, reduces CPU load, and minimizes errors. | Longer forms may deter users; higher initial implementation effort. | All systems prioritizing long-term efficiency and scalability. |

| Backend Optimizations | Moderate: Reduces CPU cycles for specific cases (e.g., phone number parsing). | Persistent edge-case risks; introduces technical debt. | Temporary fixes in legacy systems with resource constraints. |

| Hybrid Approach | Low: Minimizes parsing complexity for atomic data points (e.g., username). | Limited to non-composite data; rigid validation may frustrate users. | Atomic data points with strict validation rules. |

Decision Rule: When to Use Structured Fields

If combining fields introduces backend parsing, validation, or transformation logic → use structured fields. Exception: Combined fields for single atomic data points (e.g., username, email) with rigorous validation.

Common Errors and Their Mechanisms

- Over-optimizing for UX: Prioritizing short forms leads to long-term backend inefficiency.

- Mechanism: Short-term UX gains mask hidden costs, resulting in increased maintenance and scalability issues.

- Underestimating Edge Cases: Inadequate validation causes system instability.

- Mechanism: Edge cases (e.g., invalid dates, uncommon formats) bypass weak validation logic, triggering errors and downtime.

Professional Judgment: Structured Fields Are Non-Negotiable

Structured input fields are the optimal solution for ensuring backend efficiency, scalability, and maintainability. While they require a higher initial investment, the long-term savings in CPU cycles, maintenance costs, and error reduction justify the effort. Combining fields is a deceptive shortcut that compromises system performance, especially in high-volume or dynamic environments.

As you design future systems, ask yourself: "Is this field combining data points that will require backend parsing?" If the answer is yes, structured fields are your only viable path forward.

Comments

Please log in or register to join the discussion