MIT master's student Mariano Salcedo is developing AI systems that transform music into dynamic visual performances using neural cellular automata, bridging his passions for music and technology while exploring how self-organized systems can enhance human creativity.

Growing up in Mexico and Texas, Mariano Salcedo couldn't readily indulge his passion for creating music. "There are no bands in Mexican public schools," he says. While some families could pay for instruments and lessons, others, like Salcedo's, were less fortunate. "I've always loved music," he continues. "I was a listener."

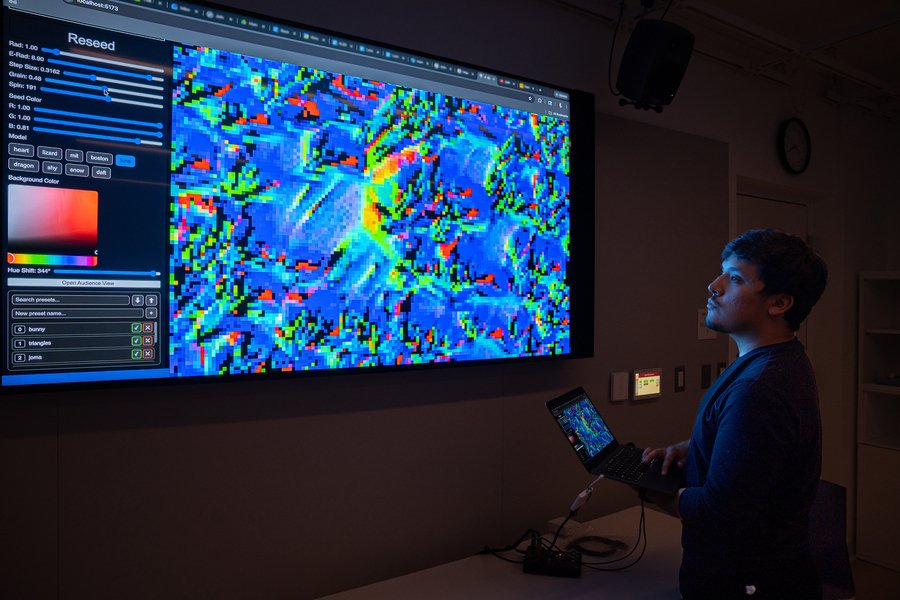

Now a master's student in MIT's new Music Technology and Computation Graduate Program, Salcedo is designing an AI system that visualizes and expresses music and other sounds through neural cellular automata (NCA). His work represents a fascinating intersection of computational research and artistic expression, allowing anyone to create music-driven visuals while leveraging the expressive and sometimes unpredictable dynamics of self-organized systems.

From Mechanical Engineering to AI and Music

Salcedo's journey to this point has been anything but linear. He began his MIT career as a mechanical engineering student, applying through the QuestBridge program. "I heard if you like engineering and science that attending MIT would be a great choice," he recalls. "Nerds are welcomed and embraced."

While Salcedo was dutifully working toward completing his mechanical engineering curriculum, music and technology came calling after a chance encounter with a large language model (LLM). "I was introduced to an LLM chatbot and was blown away," he recalls. "This was something that was speaking to me. I was both awed and frightened."

After this encounter, Salcedo switched his major from mechanical engineering to artificial intelligence and decision-making. "I basically started over, after being two-thirds of the way through the MechE curriculum," he says. He learned about the possibilities available with AI but also confronted some of the challenges bedeviling researchers and developers, including AI's potential power, the difficulty of ensuring responsible use, human bias, limited access for people from underrepresented groups, and a lack of diversity among developers.

Neural Cellular Automata: Growing Images That Can Regenerate

Salcedo's research focuses on neural cellular automata, which merge classical cellular automata with machine learning techniques to grow images that can regenerate. When paired with a stimulus like music, these images can "show" sounds in action.

Through the web interface Salcedo designed, users can adjust the relationship between the music's energy and the NCA system to create unique visual performances using any music audio stream. "I want the visuals to complement and elevate the listening experience," he says.

The technical approach involves using cellular automata—discrete models composed of cells on a grid that evolve according to simple rules—enhanced with neural networks. This combination allows the system to learn patterns from data while maintaining the spatial and temporal coherence that makes cellular automata so visually compelling.
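To make the idea concrete, here is a minimal sketch of one neural cellular automaton update, written in plain Python. It is not Salcedo's implementation: the channel count, hidden-layer width, neighbor-averaging perception step, and random (untrained) weights are all assumptions chosen for illustration. Each cell sees only its own state and the mean state of its eight neighbors, and a tiny neural network turns that local view into a small change to the cell's state.

```python
import random

CHANNELS = 4  # hypothetical number of state values per cell
HIDDEN = 8    # hypothetical hidden-layer width of the update network


def init_weights(seed=0):
    """Random, untrained weights; a real NCA would learn these from data."""
    rng = random.Random(seed)
    w1 = [[rng.uniform(-0.5, 0.5) for _ in range(2 * CHANNELS)]
          for _ in range(HIDDEN)]
    w2 = [[rng.uniform(-0.5, 0.5) for _ in range(HIDDEN)]
          for _ in range(CHANNELS)]
    return w1, w2


def perceive(grid, r, c):
    """Return the cell's own state followed by the mean state of its
    8 neighbors. Edges wrap around, so the grid behaves like a torus."""
    rows, cols = len(grid), len(grid[0])
    mean = [0.0] * CHANNELS
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            if dr == 0 and dc == 0:
                continue
            neighbor = grid[(r + dr) % rows][(c + dc) % cols]
            for k in range(CHANNELS):
                mean[k] += neighbor[k] / 8.0
    return grid[r][c] + mean  # list concatenation: 2 * CHANNELS values


def nca_step(grid, weights, rate=0.1):
    """Apply the same small network to every cell and return a new grid."""
    w1, w2 = weights
    new_grid = []
    for r in range(len(grid)):
        row = []
        for c in range(len(grid[0])):
            x = perceive(grid, r, c)
            # Hidden layer with ReLU activation.
            h = [max(0.0, sum(w * v for w, v in zip(ws, x))) for ws in w1]
            # Output layer gives a per-channel change to the cell state.
            delta = [sum(w * v for w, v in zip(ws, h)) for ws in w2]
            row.append([s + rate * d for s, d in zip(grid[r][c], delta)])
        new_grid.append(row)
    return new_grid


def seed_grid(size=8):
    """An all-zero grid with a single active cell in the middle."""
    grid = [[[0.0] * CHANNELS for _ in range(size)] for _ in range(size)]
    grid[size // 2][size // 2] = [1.0] * CHANNELS
    return grid
```

Calling `nca_step(seed_grid(), init_weights())` repeatedly lets activity spread outward from the seed one neighborhood at a time. Because every cell runs the same rule on purely local information, any global pattern has to emerge from local interactions, which is the property the article describes.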

When music plays through the system, the audio signal is analyzed in real time to extract features such as amplitude, frequency content, and rhythmic patterns. These features then influence the rules governing how the cellular automaton evolves, creating a dynamic visual representation that responds to the music's energy and structure.
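As a rough illustration of that pipeline, the sketch below computes two common audio features from a short frame of samples and maps loudness to an update rate that a cellular automaton could use. The article does not say which features or mappings Salcedo's system uses, so everything here is an assumption: RMS stands in for amplitude, the spectral centroid stands in for frequency content, and `energy_to_rate` with its `base_rate`, `gain`, and `max_rate` parameters is a hypothetical mapping. Rhythm analysis is left out, and the naive DFT is only practical for short frames.

```python
import cmath
import math


def rms_amplitude(frame):
    """Root-mean-square level of a frame of samples (a loudness proxy)."""
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def spectral_centroid(frame, sample_rate):
    """Magnitude-weighted mean frequency in Hz, via a naive O(n^2) DFT.

    No window function is applied, so tones that fall between DFT bins
    will leak into other bins and pull the centroid upward.
    """
    n = len(frame)
    weighted, total = 0.0, 0.0
    for k in range(n // 2 + 1):
        coeff = sum(s * cmath.exp(-2j * math.pi * k * t / n)
                    for t, s in enumerate(frame))
        magnitude = abs(coeff)
        weighted += magnitude * (k * sample_rate / n)
        total += magnitude
    return weighted / total if total > 0 else 0.0


def energy_to_rate(rms, base_rate=0.02, gain=0.5, max_rate=0.5):
    """Hypothetical mapping: louder audio makes the automaton update faster.

    `gain` plays the role of a user-adjustable knob linking the music's
    energy to the visuals; the result is capped at `max_rate`.
    """
    return min(max_rate, base_rate + gain * rms)


# Example frame: a 500 Hz tone, 256 samples at 8 kHz (exactly 16 cycles).
tone = [0.5 * math.sin(2 * math.pi * 500 * t / 8000) for t in range(256)]
rate = energy_to_rate(rms_amplitude(tone))
brightness = spectral_centroid(tone, 8000)
```

In a live setting, each incoming audio frame would yield a fresh `rate` (and possibly other parameters derived from `brightness`), so quiet passages slow the visuals and loud passages drive them harder.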

Building Community and Finding Mentorship

While completing his undergraduate studies, Salcedo's love of music resurfaced. "I began DJing at MIT and was hooked," he says. While he hadn't learned to play a traditional instrument, he discovered he could create engaging soundscapes with technology.

Salcedo and Eran Egozy, professor of the practice in music technology and director of the graduate program, met in 2024 while Salcedo completed an Undergraduate Research Opportunities Program rotation as a game developer in Egozy's lab. "He was incredibly curious and has grown tremendously over a very short time period," Egozy says.

Egozy became an informal but important mentor to Salcedo. "He brings great energy and thoughtfulness to his work, and to supporting others in the [music technology and computation graduate] program," Egozy notes.

Salcedo also took Egozy's class 21M.385/21M.585/6.4450 (Interactive Music Systems), which further fed his appetite for the creativity he craved while also allowing him to indulge his fascination with music's possibilities. By taking advantage of courses in the SHASS curriculum, he further developed his understanding of music theory and related technologies.

Beyond Music Visualization

Salcedo believes his research can potentially move beyond music visualization. "What if we could improve the ways we model self-organized systems?" he asks. "That is, systems like multicellular organisms, flocks of birds, or societies that interact locally but exhibit interesting behaviors."

Any system, Salcedo says, where the whole is more than the sum of its parts. Developing the technology used to design his application can potentially help answer important ethical questions regarding AI's continued expansion and growth.

The path to his work's development is both daunting and lonely, but those challenges feed his work ethic. "It's intimidating to pursue this path when the academy is currently focused on LLMs," he says. "But it's also important to explain and explore the base technology before digging into more nuanced work, which can help audiences understand it better."

Recognition and Future Directions

Salcedo has been selected to deliver the student address at the 2026 Advanced Degree Ceremony for SHASS. "It's an honor, and it's daunting," he says. "It feels like a huge responsibility," though one he's eager to embrace.

He presented his work—"Artificial Dancing Intelligence: Neural Cellular Automata for Visual Performance of Music"—at the Association for the Advancement of Artificial Intelligence conference in Singapore in January 2026.

Ultimately, Salcedo wants people to experience the joy he feels working at the intersection of the humanities and the sciences. Music and technology impact nearly everyone. Inviting audiences into his laboratory as participants in the creative and research processes offers the same kind of satisfaction he gets from crafting a great beat or solving a thorny technical challenge.

"I want users to feel movement and explore sounds and their impact more fully," he says.

The Music Technology and Computation Graduate Program, directed by Egozy and funded in part by a fellowship from Alex Rigopulos '92, SM '94, represents a unique collaboration between MIT's School of Humanities, Arts, and Social Sciences and the School of Engineering. It invites practitioners to study, discover, and develop new computational approaches to music.

"MIT is where I was first able to pursue my passion for music technology decades ago, and that experience was the springboard for a long and fulfilling career," says Rigopulos, former CEO of Harmonix Music Systems. "So, when MIT launched an advanced degree program in music technology, I was thrilled to fund a fellowship to help propel this exciting new program."

Salcedo's work exemplifies how computational approaches can enhance human creativity rather than replace it. By creating systems that visualize music through neural cellular automata, he's not just building a technical tool—he's creating a new way for people to experience and interact with sound, one that bridges the gap between the auditory and visual worlds while exploring fundamental questions about how complex systems emerge from simple rules.
