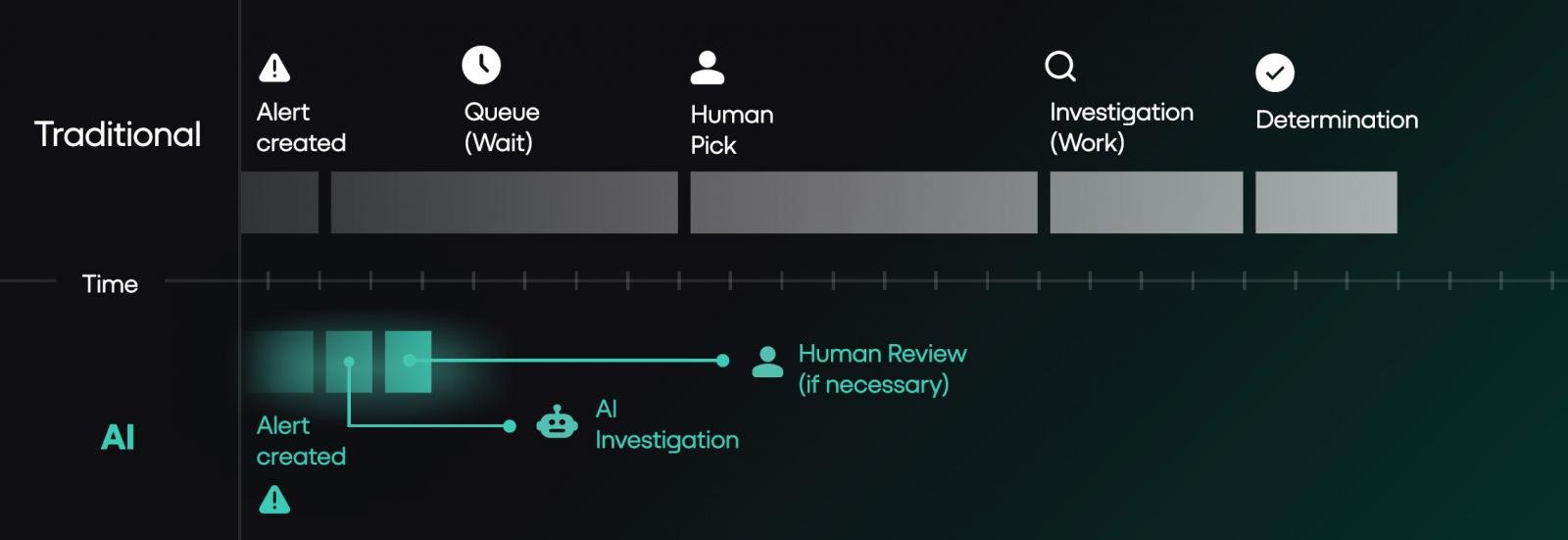

Security operations centers are doubling spending and headcount but seeing little improvement in breach detection times, as alert volume outpaces human investigation capacity. Industry experts say fixing the underlying operating model, rather than hiring more staff, is the only way to close the gap, with AI-driven investigation emerging as a key solution for high-volume alert triage.

Security operations centers are struggling to close the gap between rising alert volumes and investigation capacity, even as global security spending has roughly doubled over the past six years. Recent reports from Google Mandiant, CrowdStrike, and IBM highlight the disconnect: threat actors have become dramatically faster, while SOC response times have barely improved.

Google Mandiant's 2026 M-Trends report puts global median dwell time at 14 days, meaning that in the median case attackers remain in a network for two weeks before they are detected. The report also found that the window between initial access and hand-off to secondary threat groups collapsed to 22 seconds in 2025, down from an 8-hour window in 2022. CrowdStrike's 2026 Global Threat Report points the same way, showing that average breakout time (the time from initial access to lateral movement) fell to 29 minutes. IBM's 2025 Cost of a Data Breach Report found that the average time to identify and contain a breach was 241 days, with an average cost of $4.44 million. While that is roughly a 14% improvement on 2020's 281-day average, the pace of improvement lags far behind the growth in security spending.

Rich Perkins, principal sales engineer at Prophet Security, notes that most SOCs have already implemented standard efficiency measures, including alert tiering, auto-closing known benign alerts, suppressing noisy rules, and tuning detection logic. "The problem is that even after all of that work, the volume that lands on humans for actual investigation still exceeds what humans can investigate at the depth required," Perkins says. "You can't hire enough analysts to investigate 100% of post-tiering volume at the depth the work requires. You can hire your way to better coverage at the margins. You cannot hire your way to the model change."

Perkins cites deployment data showing that post-tiering alert volume typically lands at 120 to 150 alerts per day per SOC. At 20 minutes per investigation, including documentation, that requires 40 to 50 analyst-hours daily. A SOC team of 5 to 10 analysts can work through the top of that queue during business hours, leaving the rest for later shifts or later days and creating a persistent gap that additional headcount cannot close.
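The capacity arithmetic above can be reproduced in a few lines. This is a minimal sketch: the 20-minute figure and the 120 to 150 alerts per day come from the article, while `investigation_hours_per_analyst` (how many hours of a shift an analyst actually spends investigating rather than in meetings, handovers, or other duties) is a hypothetical input each SOC would need to measure for itself.

```python
def required_analyst_hours(alerts_per_day: int, minutes_per_investigation: float = 20.0) -> float:
    """Analyst-hours needed to investigate every post-tiering alert in one day."""
    return alerts_per_day * minutes_per_investigation / 60


def daily_coverage_gap(
    alerts_per_day: int,
    team_size: int,
    investigation_hours_per_analyst: float,  # hypothetical: hours per analyst actually spent investigating
    minutes_per_investigation: float = 20.0,
) -> float:
    """Analyst-hours of investigation work left over at the end of the day (0.0 if fully covered)."""
    needed = required_analyst_hours(alerts_per_day, minutes_per_investigation)
    available = team_size * investigation_hours_per_analyst
    return max(0.0, needed - available)


if __name__ == "__main__":
    for alerts in (120, 150):
        print(alerts, required_analyst_hours(alerts))  # prints "120 40.0", then "150 50.0"
    # Hypothetical team: 5 analysts, each spending 5 hours of the day on investigations.
    print(daily_coverage_gap(150, team_size=5, investigation_hours_per_analyst=5.0))  # 25.0
```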

New agentic AI platforms are designed to address this gap by handling investigation work at machine speed.

For SOCs looking to assess their own capacity gaps, Perkins outlines four diagnostic questions to run internally:

- What percentage of alerts above your defined investigation threshold did your team actually investigate last quarter? If less than 90%, you have a coverage gap driven by workflow limitations, not team performance.

- How many detection rules has your team suppressed in the last 12 months without an engineering ticket to replace the coverage? Suppressing noisy rules is healthy tuning, but failing to replace the lost coverage leaves part of your attack surface without detection.

- What was your senior analyst turnover last year, and how long did each replacement take to reach productive contribution? Turnover above 15% or ramp times exceeding 6 months indicate a fragile bench reliant on tribal knowledge.

- If alert volume doubled tomorrow, what's the first thing your team would stop doing? The answer reveals which parts of your program are already operating at capacity.

If three or more answers raise concerns, the focus should shift from hiring to evaluating the underlying operating model, Perkins says.
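Perkins's four questions and his three-or-more rule can be turned into a simple self-assessment. This is a sketch under stated assumptions: the 90%, 15%, and 6-month thresholds come from the article, but the article gives no numeric cutoff for the suppression question (here any unreplaced suppression counts as a concern), and the fourth question is qualitative, so it is modeled as a yes/no judgment the team records itself. The class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SocDiagnostics:
    investigated_fraction: float   # share of above-threshold alerts investigated last quarter (0.0-1.0)
    unreplaced_suppressions: int   # rules suppressed in the last 12 months with no replacement ticket
    senior_turnover: float         # senior analyst turnover last year (0.0-1.0)
    ramp_months: float             # months for a replacement to reach productive contribution
    would_drop_core_work: bool     # team judgment: would doubled volume force it to stop essential work?


def concerns(d: SocDiagnostics) -> list[str]:
    """Return the diagnostic questions whose answers raise a concern."""
    flagged = []
    if d.investigated_fraction < 0.90:
        flagged.append("coverage")
    if d.unreplaced_suppressions > 0:  # assumption: any unreplaced suppression counts
        flagged.append("suppression")
    if d.senior_turnover > 0.15 or d.ramp_months > 6:
        flagged.append("bench")
    if d.would_drop_core_work:  # assumption: qualitative answer recorded as yes/no
        flagged.append("capacity")
    return flagged


def should_review_operating_model(d: SocDiagnostics) -> bool:
    """Three or more concerns: shift focus from hiring to the operating model."""
    return len(concerns(d)) >= 3


if __name__ == "__main__":
    example = SocDiagnostics(0.72, 4, 0.20, 4, False)  # hypothetical answers
    print(concerns(example))                       # ['coverage', 'suppression', 'bench']
    print(should_review_operating_model(example))  # True
```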

Organizations that have shifted their operating model by adopting AI-driven investigation are seeing measurable results. JB Poindexter & Co, an 8,500-employee diversified manufacturer, deployed Prophet Security's AI platform in 2025. In the first 60 days, the team ran 4,407 investigations through the platform, an average of about 73 per day, with a mean time to investigate under 4 minutes. This returned roughly 1,469 hours of analyst time to the team (4,407 investigations at 20 minutes each), or 6.3 analyst-years of capacity when annualized. "We're faster, more focused, and able to scale without adding immediate headcount," says John Barrow, CISO at JB Poindexter.
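The throughput and hours figures can be reproduced directly. A minimal sketch, assuming each AI-handled investigation frees one 20-minute manual investigation's worth of analyst time, which is what makes 4,407 investigations come to exactly 1,469 hours. The article does not say how many working hours it counts per analyst-year, so the hypothetical `analyst_years` helper takes that as an explicit parameter rather than guessing.

```python
def pilot_summary(investigations: int, days: int, baseline_minutes: float = 20):
    """Per-day throughput, analyst hours returned, and those hours annualized."""
    per_day = investigations / days
    # Assumption: each AI-handled investigation frees one manual investigation's worth of time.
    hours_returned = investigations * baseline_minutes / 60
    annualized_hours = hours_returned * 365 / days
    return per_day, hours_returned, annualized_hours


def analyst_years(annual_hours: float, hours_per_analyst_year: float) -> float:
    """Convert annual hours into full-time analysts; hours_per_analyst_year is an assumed input."""
    return annual_hours / hours_per_analyst_year


if __name__ == "__main__":
    per_day, hours, annual = pilot_summary(4407, 60)
    print(round(per_day), round(hours), round(annual))  # 73 1469 8936
```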

Cabinetworks, another enterprise adopter, ran 3,200 alerts through the same platform in 33 days, with only six escalating to human analysts. The deployment also reduced SIEM costs by 90%, primarily by eliminating the need to ingest and store raw EDR and identity telemetry that was previously pulled into the SIEM for analyst pivot queries. When AI handles pivots directly against source systems, that ingest tier becomes optional, creating secondary cost savings that often exceed the price of the AI platform for organizations with seven-figure SIEM contracts.
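The Cabinetworks escalation figure, and the claim that SIEM savings can exceed the platform's price, reduce to two simple checks. A minimal sketch: the 3,200 alerts, six escalations, 33 days, and 90% reduction come from the article; the $1.5 million SIEM contract and $400,000 platform price in the example are invented placeholders, not figures from any vendor or customer.

```python
def escalation_rate(alerts: int, escalated: int) -> float:
    """Fraction of alerts that still needed a human analyst."""
    return escalated / alerts


def siem_savings_cover_platform(
    siem_annual_cost: float, reduction: float, platform_annual_cost: float
) -> bool:
    """True if the SIEM cost reduction alone pays for the AI platform."""
    return siem_annual_cost * reduction >= platform_annual_cost


if __name__ == "__main__":
    # Cabinetworks figures from the article: 3,200 alerts, 6 escalations, 33 days.
    print(f"{escalation_rate(3200, 6):.1%} escalated, {3200 / 33:.0f} alerts/day")  # 0.2% escalated, 97 alerts/day
    # Hypothetical placeholders, NOT article data: a $1.5M SIEM contract and a
    # $400k platform price, with the 90% reduction assumed to carry over.
    print(siem_savings_cover_platform(1_500_000, 0.90, 400_000))  # True
```

Keeping the reduction as a parameter matters: the 90% figure reflects one deployment whose savings came mainly from dropping raw EDR and identity ingest, so an organization with a different ingest mix would need to plug in its own estimate.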

The most effective SOC models pair AI-driven investigation with human expertise for complex cases.

Perkins emphasizes that AI cannot replace human analysts in every scenario. Three categories still require humans to lead:

First, insider threat investigations where context lives outside of logs, such as performance improvement plans, manager conversations, or contract end dates. AI can handle telemetry-based insider threat work, but struggles with human context that isn't captured in system logs.

Second, novel tactics, techniques, and procedures (TTPs) with no analog in training data. AI investigation relies on pattern-matching against historical examples, so it is weakest against attacks that don't resemble known threats. Senior threat hunters should lead these investigations.

Third, highly regulated environments with data residency rules that restrict where alert telemetry can be stored. Most AI SOC platforms require specific architecture adjustments to comply with these rules, and some may not be compatible at all.
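The three human-led categories can be expressed as a routing check run before an alert is handed to AI investigation. This is a minimal sketch; the `Case` fields are hypothetical flags that a real platform would have to derive from its own data, and treating data residency as a per-case flag simplifies what is usually a deployment-wide architecture decision.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Case:
    # Hypothetical flags; a real platform's case model will differ.
    insider_threat: bool
    needs_non_log_context: bool   # e.g. HR or contract details not captured in system logs
    matches_known_pattern: bool   # does the activity resemble previously seen attacks?
    residency_restricted: bool    # telemetry bound by data residency rules the platform can't meet


def human_led_reason(case: Case) -> Optional[str]:
    """Return why a human should lead the case, or None if AI investigation can proceed."""
    if case.insider_threat and case.needs_non_log_context:
        return "insider threat with context outside logs"
    if not case.matches_known_pattern:
        return "novel TTP with no known analog"
    if case.residency_restricted:
        return "data residency restriction"
    return None


if __name__ == "__main__":
    # Telemetry-only insider case: AI can take it.
    print(human_led_reason(Case(True, False, True, False)))   # None
    # Activity unlike anything seen before: route to a senior threat hunter.
    print(human_led_reason(Case(False, False, False, False)))  # novel TTP with no known analog
```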

For CISOs evaluating AI SOC platforms, Perkins recommends asking three key questions:

What happens when the AI makes an error? Platforms that document every step of the investigation, including queries, evidence pulled, and reasoning, allow teams to correct errors and update logic to prevent repeats. This audit trail is also critical for regulators and board inquiries.

What happens to detection engineering? Instead of relying on manual triage to surface noisy detections, detection engineering teams can feed the results of every AI investigation back into rule tuning, turning it into a proactive, scheduled discipline rather than reactive tuning squeezed in between alerts.

Who needs to be involved in the buying committee? AI SOC platforms touch security operations, IT, compliance, legal, and procurement. Bringing all stakeholders in early avoids delays later in the evaluation process.
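The first two questions are connected: an investigation record that captures every query, piece of evidence, and reasoning step is also the raw material for detection-engineering feedback. The sketch below assumes a hypothetical record schema (`InvestigationStep`, `InvestigationRecord`) and a hypothetical rule of thumb for flagging noisy detections (at least 20 investigations, at least 95% benign). It is not any vendor's data model, and real thresholds would be set by each team.

```python
import json
from collections import Counter
from dataclasses import asdict, dataclass, field


@dataclass
class InvestigationStep:
    query: str            # the query the AI ran, stored verbatim
    source: str           # system it ran against, e.g. "EDR" or "identity provider"
    evidence: list[str]   # the results the AI relied on
    reasoning: str        # why that evidence supports the next step or the verdict


@dataclass
class InvestigationRecord:
    alert_id: str
    rule_id: str          # detection rule that fired
    verdict: str          # e.g. "benign" or "malicious"
    steps: list[InvestigationStep] = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize the full trail for reviewers, regulators, or the board."""
        return json.dumps(asdict(self), indent=2)


def noisy_rules(records, min_volume=20, benign_threshold=0.95):
    """Rules whose investigations are overwhelmingly benign, noisiest first.

    Returns (rule_id, benign_rate, volume) tuples for a scheduled tuning review.
    """
    totals, benign = Counter(), Counter()
    for record in records:
        totals[record.rule_id] += 1
        if record.verdict == "benign":
            benign[record.rule_id] += 1
    flagged = [
        (rule_id, benign[rule_id] / volume, volume)
        for rule_id, volume in totals.items()
        if volume >= min_volume and benign[rule_id] / volume >= benign_threshold
    ]
    # Highest benign rate first; ties broken by volume.
    return sorted(flagged, key=lambda item: (-item[1], -item[2]))


if __name__ == "__main__":
    step = InvestigationStep(
        query="process events on host WS-17, last 24 hours",
        source="EDR",
        evidence=["signed updater binary launched by scheduled task"],
        reasoning="Known software update path; no follow-on network activity.",
    )
    record = InvestigationRecord("ALERT-1042", "R-017", "benign", [step])
    print(record.to_json())

    history = [InvestigationRecord(f"A{i}", "R-017", "benign") for i in range(30)]
    print(noisy_rules(history))  # [('R-017', 1.0, 30)]
```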

Finally, Perkins advises evaluating vendor risk by asking three questions: Can you export investigation history, configuration, and detection logic if you switch vendors? Are the runbooks you create specific to the vendor, or can they be rebuilt elsewhere? What happens to service obligations and data handling if the vendor is acquired or shuts down? "Most vendors can answer the first two. Few have a clean answer to the third," Perkins says.

The traditional SOC model of human-driven alert triage has not kept pace with the speed and volume of modern threats. While hiring more analysts may provide marginal improvements, only shifting the operating model to automate investigation work can close the gap between detection and response. Teams that make this shift will see improved metrics and more efficient use of analyst time, while those that rely on headcount growth will continue to face the same gaps as threat actor speed increases.
