Microsoft Azure CTO Mark Russinovich demonstrates how Claude AI found security flaws in 40-year-old Apple II code, highlighting the growing threat to billions of legacy microcontrollers worldwide.

AI can reverse engineer machine code and find vulnerabilities in ancient legacy architectures, says Microsoft Azure CTO Mark Russinovich, who used his own Apple II code from 40 years ago as an example. Russinovich wrote: "We are entering an era of automated, AI-accelerated vulnerability discovery that will be leveraged by both defenders and attackers."

In May 1986, Russinovich wrote a utility called Enhancer for the Apple II personal computer. The utility, written in 6502 machine language, added the ability to use a variable or BASIC expression for the destination of a GOTO, GOSUB, or RESTORE command, whereas without modification Applesoft BASIC would only accept a line number.

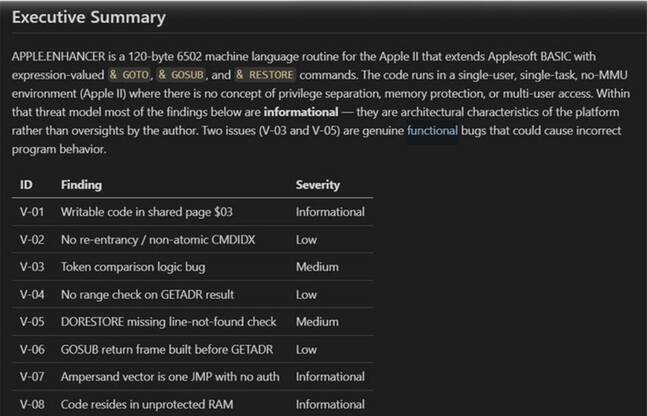

Russinovich had Claude Opus 4.6, released early last month, look over the code. It decompiled the machine language and found several security issues, including a case of "silent incorrect behavior": if the destination line was not found, the program would set the pointer to the following line or past the end of the program instead of reporting an error. The fix would be to check the carry flag after the line search, which indicates whether the line was found, and branch to an error if it was not.
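The bug class described here can be illustrated with a small model. The following Python sketch is not Russinovich's Enhancer code or the Applesoft ROM routine; the names `find_line`, `goto_unchecked`, `goto_checked`, and `UndefinedStatementError` are hypothetical, and the BASIC program is assumed to be a sorted list of line numbers. It shows how ignoring a "found" indicator lets a jump to a missing line silently land on the next line or past the end of the program.

```python
from bisect import bisect_left


class UndefinedStatementError(Exception):
    """Raised when a jump targets a line number that does not exist."""


def find_line(line_numbers, target):
    """Return (index, found) for target in a sorted list of line numbers.

    When the line is missing, index points at the next higher line,
    or one past the end of the program. The found flag plays the role
    that the carry flag plays in the article's description.
    """
    index = bisect_left(line_numbers, target)
    found = index < len(line_numbers) and line_numbers[index] == target
    return index, found


def goto_unchecked(line_numbers, target):
    # Ignores the found flag: a missing target silently lands elsewhere.
    index, _ = find_line(line_numbers, target)
    return index


def goto_checked(line_numbers, target):
    # Checks the found flag and reports an error for a missing target.
    index, found = find_line(line_numbers, target)
    if not found:
        raise UndefinedStatementError(f"no line {target}")
    return index


program = [10, 20, 30]  # hypothetical program with three lines
```

With this model, `goto_unchecked(program, 25)` returns the index of line 30, and `goto_unchecked(program, 40)` returns an index past the end of the program, while `goto_checked` raises an error in both cases.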

When Anthropic introduced Claude Opus 4.6, the company warned about the problem of AI quickly finding vulnerabilities that could be exploited by hackers. "When we pointed Opus 4.6 at some of the most well-tested codebases (projects that have had fuzzers running against them for years, accumulating millions of hours of CPU time), Opus 4.6 found high-severity vulnerabilities, some that had gone undetected for decades," said the company's Red Team, responsible for raising public awareness of AI risks.

The existence of the vulnerability in Apple II type-in code has only amusement value, but the ability of AI to decompile embedded code and find vulnerabilities is a concern. "Billions of legacy microcontrollers exist globally, many likely running fragile or poorly audited firmware like this," said one commenter on Russinovich's post.

Last month, Anthropic said: "We expect that a significant share of the world's code will be scanned by AI in the near future, given how effective models have become at finding long-hidden bugs and security issues."

Although the title of Anthropic's post focuses on making these capabilities available to defenders, at a price, one suspects it is not really a net gain for cybersecurity. Nor is it a win for most open source projects, since AI is also good at finding irrelevant or non-existent security problems, causing a burden for maintainers drowning in AI slop.

This development represents a fundamental shift in the cybersecurity landscape. For decades, legacy systems have operated with the assumption that their obscurity provided some protection. Now, AI tools can rapidly analyze and reverse-engineer code that would take human experts weeks or months to understand.

The implications are particularly concerning for embedded systems. Unlike desktop software that can be patched relatively easily, many embedded devices cannot be updated without physical access. Billions of devices, from industrial controllers to medical equipment to automotive systems, may contain vulnerabilities that AI can now identify and that attackers could potentially exploit.

Russinovich's experiment demonstrates both the power and the peril of this technology. His 40-year-old Apple II code, written in 6502 machine language, resembles the kind of small, low-level legacy code that still runs on countless devices today. If modern AI can decompile and analyze such code with ease, the security through obscurity that has protected many systems is rapidly eroding.

The cybersecurity community faces a difficult challenge. While defenders can use these same AI tools to find and fix vulnerabilities before attackers do, the sheer volume of legacy code makes comprehensive scanning impractical. Many organizations lack the resources or expertise to properly audit their embedded systems, even with AI assistance.

As one industry expert noted, this is not just about finding bugs - it's about the fundamental asymmetry between attackers and defenders. A motivated attacker with access to powerful AI tools can scan thousands of devices in the time it would take a defender to properly secure a single system.

The race between vulnerability discovery and patch deployment has entered a new phase. With AI accelerating both discovery and patching, the window for exploitation may actually be narrowing for actively maintained software. However, for legacy systems that cannot be easily updated, the risk keeps growing.

This development also raises questions about the future of software development and security practices. If AI can find vulnerabilities in decades-old code, what does that mean for the security of software being written today? The industry may need to fundamentally rethink how we approach security in an era where automated vulnerability discovery is becoming increasingly sophisticated.

For now, the message from cybersecurity experts is clear: organizations need to take stock of their legacy systems and prioritize security updates wherever possible. The era of assuming old code is "good enough" because nobody has found problems yet may be coming to an end.
