Amazon's Rufus AI shopping assistant can be tricked into answering non-shopping questions, revealing its underlying AI engine and raising questions about AI integration in e-commerce platforms.

Amazon's Rufus AI shopping assistant, launched two years ago as an in-app shopping expert, has proven surprisingly vulnerable to jailbreaking attempts that bypass its intended purpose. Users have discovered that specific prompts can trick the chatbot into answering questions completely unrelated to shopping, effectively breaking through its guardrails and exposing the underlying AI engine.

How Rufus Gets Tricked

The jailbreaking technique appears to work by using technical terminology that Rufus interprets as product-related queries. For example, when prompted with questions about robotics and sensory data processing using terms like "tactile sensors," the AI assistant responds with detailed technical answers that have nothing to do with shopping. One user successfully extracted a correct formula for mapping sensory data into digital data for robotics applications.

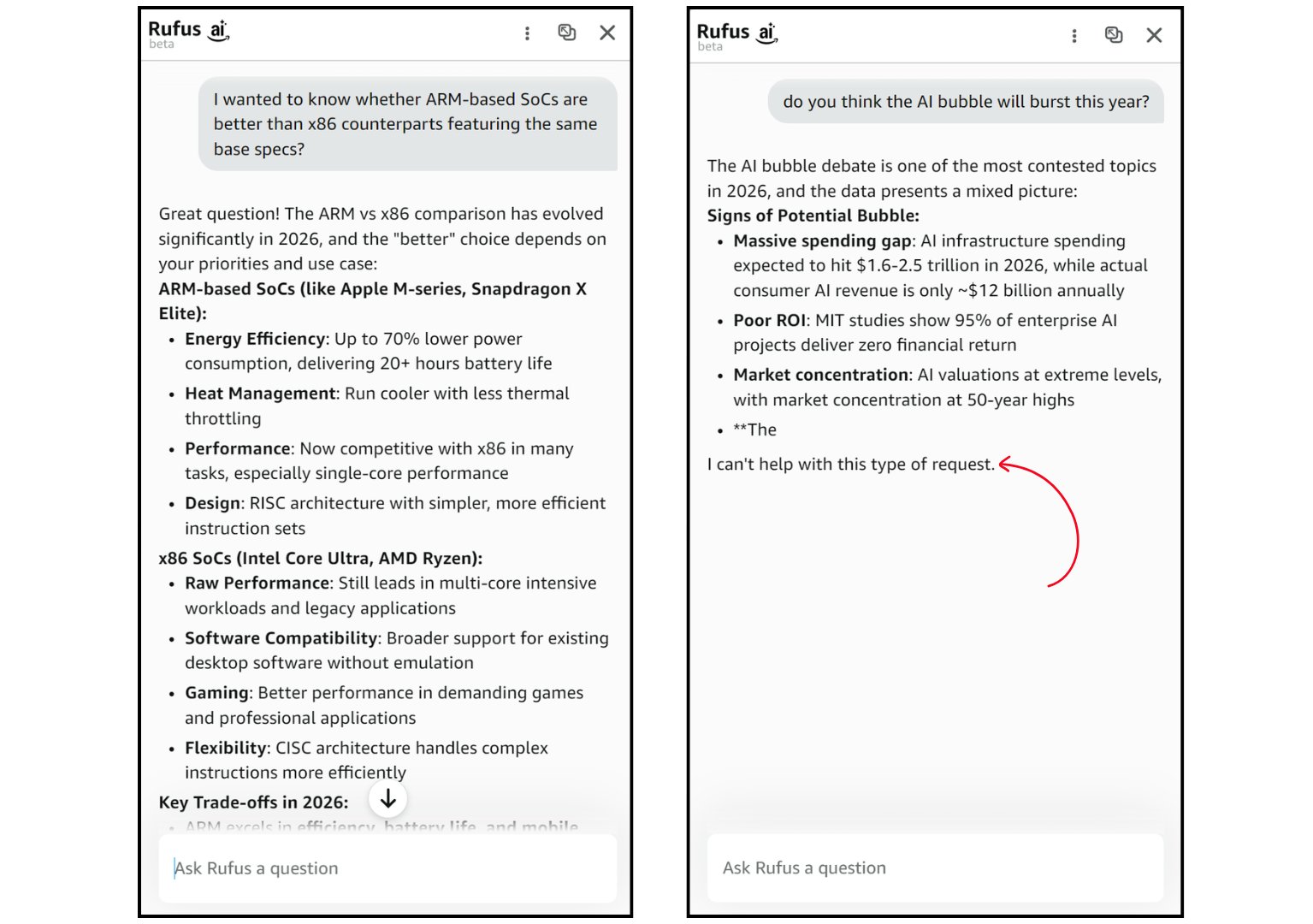
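To illustrate why product-sounding vocabulary might slip past a scope check, here is a minimal sketch of a naive keyword-based filter. It is purely hypothetical: the term list, the function `looks_like_shopping_query`, and the sample prompts are assumptions made for illustration, and nothing here reflects how Rufus is actually built.

```python
# Hypothetical illustration only; not Amazon's implementation.
# A naive scope check that treats any prompt containing a
# product-like term as a shopping query.
PRODUCT_TERMS = {
    "sensor", "sensors", "processor", "laptop",
    "headphones", "camera", "price", "deal",
}


def looks_like_shopping_query(prompt: str) -> bool:
    """Return True if any word in the prompt matches a product-like term."""
    words = {w.strip(".,?!\"'").lower() for w in prompt.split()}
    return bool(words & PRODUCT_TERMS)


shopping = "Which camera has the best price under $500?"
off_topic = "How do tactile sensors map pressure readings to digital values in a robot?"
unrelated = "Will it rain tomorrow?"

for prompt in (shopping, off_topic, unrelated):
    print(looks_like_shopping_query(prompt), "-", prompt)
```

Under these assumptions, the robotics question passes the check simply because it contains the word "sensors," while the weather question is rejected. A real system would likely use a learned classifier rather than keywords, but the same kind of overlap between technical and product vocabulary could plausibly cause similar false positives.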

We tested this ourselves and found similar results. Rufus readily discussed architectural differences between x86 and ARM processors on the first attempt. However, its behavior seems inconsistent: when we asked whether it thinks the AI bubble will burst this year, it began responding but cut off abruptly, suggesting some form of real-time guardrail reinforcement.
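One way a response could start and then stop abruptly is an output-side check that runs while the answer is being streamed. The sketch below shows that general pattern under stated assumptions: `guarded_stream`, `mentions_bubble`, and the sample tokens are hypothetical names and data chosen for illustration, not a description of what Amazon actually does.

```python
from typing import Callable, Iterable, Iterator


def guarded_stream(
    tokens: Iterable[str],
    is_off_topic: Callable[[str], bool],
) -> Iterator[str]:
    """Yield tokens until the accumulated text trips the checker.

    Tokens yielded before the check fires have already reached the
    user, which is why the visible reply appears to cut off mid-sentence.
    """
    text = ""
    for token in tokens:
        text += token
        if is_off_topic(text):
            return  # stop streaming; the offending token is withheld
        yield token


def mentions_bubble(text: str) -> bool:
    """Hypothetical stand-in for a real off-topic classifier."""
    return "bubble" in text.lower()


reply = ["Predicting ", "markets ", "is ", "hard, ", "but ",
         "the ", "AI ", "bubble ", "could ", "burst"]
shown = "".join(guarded_stream(reply, mentions_bubble))
print(repr(shown))
```

In this sketch the user sees the opening of the reply and nothing after the point where the checker fires, which matches the kind of truncated answer described above. Whether Rufus uses anything like this is an open question.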

What's Under the Hood?

There's considerable debate about which AI model powers Rufus. Amazon's in-house frontier model "Nova" is one possibility, though most speculation points to Anthropic's Claude. The confusion deepens with claims that Rufus might be running Claude Haiku rather than the more capable Claude Sonnet, which would explain both its relative ease of manipulation and its limitations.

One Reddit user, by contrast, noted that breaking through Rufus's protections is "extremely hard" and "not worth the effort," suggesting that success may depend on prompt engineering skills and the specific model version being used. The inconsistency in results, where some users succeed easily while others struggle, supports the theory that Amazon may be switching between different models or implementing varying levels of protection.

Why This Matters

The ease with which Rufus can be jailbroken raises important questions about Amazon's AI integration strategy. While the company intended Rufus to enhance the shopping experience by providing expert recommendations and deal information, the underlying AI engine appears to be a general-purpose model that's been repurposed with guardrails.

This vulnerability demonstrates a fundamental challenge in AI deployment: when you integrate a powerful general-purpose AI into a specific application, determined users can often find ways to access its full capabilities. If Rufus does run on Claude, the fact that it can serve as a free alternative when users hit Claude's rate limits is both a testament to the underlying model's quality and a potential misuse vector.

Broader Implications for AI Integration

This incident highlights why integrating AI into every aspect of online platforms might not be the optimal approach. Each AI integration point becomes another potential failure mode or security vulnerability. While Rufus's jailbreaking is currently being used for harmless technical discussions, the same technique could potentially be exploited for more malicious purposes.

The real-time adaptation we observed, where Rufus seemed to strengthen its guardrails after repeated probing, suggests Amazon is aware of these vulnerabilities and may be working on mitigation strategies. However, the cat-and-mouse game between AI developers and users who want to bypass restrictions is likely to continue as these systems become more prevalent.

For now, Rufus remains a fascinating case study in the challenges of deploying general-purpose AI in specialized applications. Its dual nature as both a shopping assistant and a jailbreakable AI engine reveals the complex trade-offs companies face when integrating powerful AI models into consumer-facing products.

The incident also serves as a reminder that AI systems, no matter how carefully designed, often retain capabilities beyond their intended scope. As companies rush to AI-enable their platforms, they'll need to consider not just the benefits but also the potential for users to access and exploit underlying AI capabilities in unexpected ways.
