Google unveils eighth-generation TPUs with two distinct architectures optimized for training and inference, targeting the demands of AI agents and large language models.

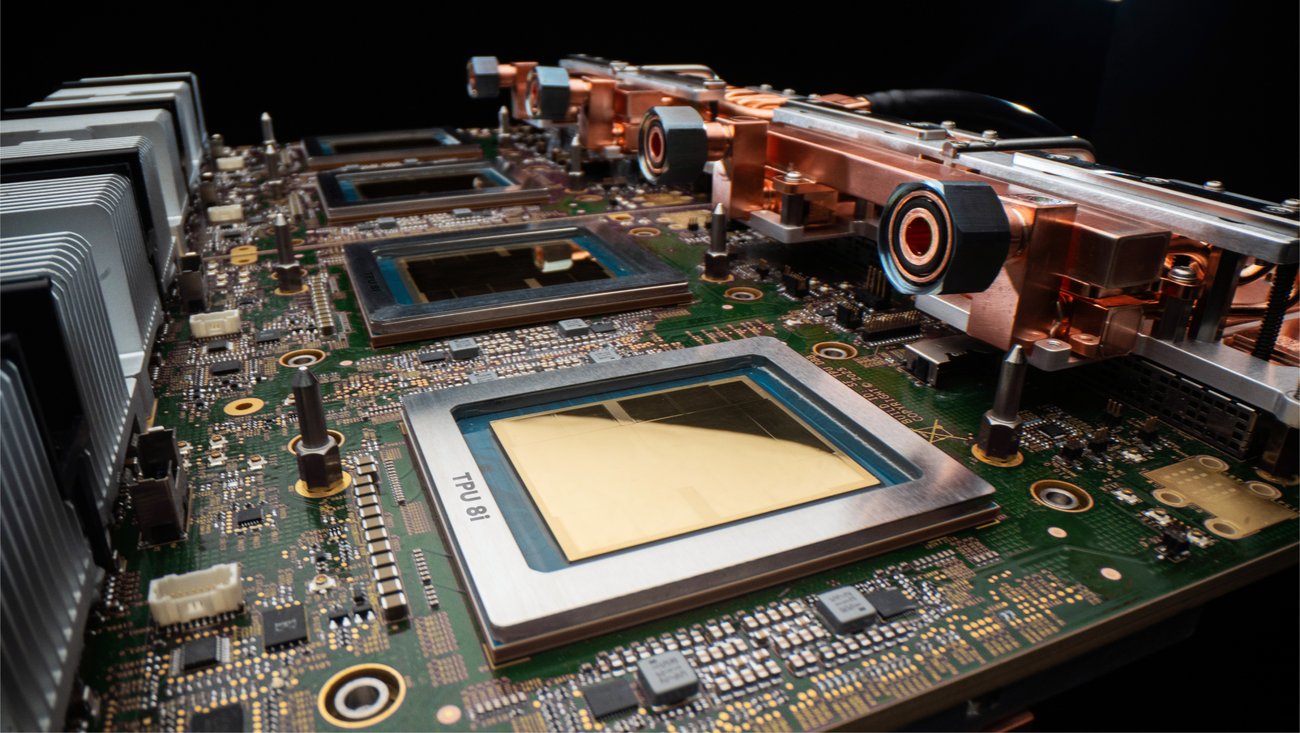

Google has announced its eighth-generation Tensor Processing Units (TPUs), introducing two specialized chips designed to address the evolving demands of artificial intelligence. The TPU 8t and TPU 8i represent a decade of development and are purpose-built for different phases of the AI lifecycle, as the industry moves toward increasingly sophisticated agentic systems.

What Changed: Specialization for the Agentic Era

The most significant shift in this generation is the deliberate separation of training and inference capabilities into distinct architectures. TPU 8t serves as the training powerhouse, while TPU 8i acts as the reasoning engine optimized for inference workloads. This specialization reflects Google's anticipation of diverging requirements as AI systems evolve from simple models to complex agents capable of reasoning, planning, and executing multi-step workflows.

"In this age of AI agents, models must reason through problems, execute multi-step workflows and learn from their own actions in continuous loops," explains Amin Vahdat, SVP and Chief Technologist for AI and Infrastructure at Google. "This places a new set of demands on infrastructure, and TPU 8t and TPU 8i were designed in partnership with Google DeepMind to take on the most demanding AI workloads and adapt to evolving model architectures at scale."

Chip Comparison: TPU 8t vs. TPU 8i

TPU 8t: The Training Powerhouse

TPU 8t is engineered to accelerate the development cycle for frontier models, reducing training times from months to weeks. Key specifications include:

- Massive scale: A single TPU 8t superpod scales to 9,600 chips with two petabytes of shared high bandwidth memory

- Enhanced compute performance: Delivers nearly 3x the compute performance per pod over the previous generation

- High interchip bandwidth: Double the interchip bandwidth of the previous generation

- Storage integration: 10x faster storage access with TPUDirect for maximum utilization

- Near-linear scaling: New Virgo Network enables scaling for up to a million chips in a single logical cluster

- Reliability features: Targets over 97% "goodput" through comprehensive RAS capabilities including real-time telemetry and automatic rerouting
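To put the goodput target in the list above into concrete terms, the sketch below treats goodput as the fraction of wall-clock time a cluster spends on useful training. The 90-day run length and the lower comparison values are hypothetical and chosen only for illustration; only the 97% figure comes from the announcement.

```python
# Rough illustration of what a goodput percentage means in training days.
# Assumption: goodput = productive training time / total wall-clock time.
# RUN_DAYS and the 90% / 95% comparison points are hypothetical.

def productive_days(wall_clock_days: float, goodput: float) -> float:
    """Return the days of useful training within a run of the given length."""
    if not 0.0 <= goodput <= 1.0:
        raise ValueError("goodput must be a fraction between 0 and 1")
    return wall_clock_days * goodput


RUN_DAYS = 90  # hypothetical frontier-scale training run

for goodput in (0.90, 0.95, 0.97):
    useful = productive_days(RUN_DAYS, goodput)
    lost = RUN_DAYS - useful
    print(f"goodput {goodput:.0%}: {useful:.1f} productive days, {lost:.1f} lost")
```

Under these assumptions, each percentage point of goodput on a 90-day run is worth roughly 0.9 days of training time.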

The TPU 8t architecture is particularly suited for large-scale model training where massive parallel processing and high memory bandwidth are critical. Its design focuses on maximizing throughput for compute-intensive workloads while maintaining power efficiency.
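As a back-of-the-envelope check on the superpod figures, the following sketch derives the average high bandwidth memory per chip. It assumes decimal units (1 PB = 10^15 bytes) and an even split of memory across chips; neither assumption is stated in the announcement, so treat the result as an estimate rather than an official specification.

```python
# Estimate HBM per chip from the published superpod totals.
# Assumptions (not from the announcement): decimal petabytes, and memory
# distributed evenly across all chips in the superpod.

CHIPS_PER_SUPERPOD = 9_600       # from the article
SHARED_HBM_BYTES = 2 * 10**15    # "two petabytes", assumed decimal


def hbm_per_chip_gb(total_bytes: int, chips: int) -> float:
    """Return the average HBM per chip in decimal gigabytes."""
    return total_bytes / chips / 10**9


per_chip_gb = hbm_per_chip_gb(SHARED_HBM_BYTES, CHIPS_PER_SUPERPOD)
print(f"~{per_chip_gb:.0f} GB of HBM per chip")
```

The estimate comes out at roughly 208 GB per chip.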

TPU 8i: The Reasoning Engine

TPU 8i is optimized for the latency-sensitive inference requirements of AI agents. Key innovations include:

- Memory optimization: 288 GB of high-bandwidth memory paired with 384 MB of on-chip SRAM (3x more than the previous generation)

- Axion CPU integration: Custom Arm-based CPUs with non-uniform memory architecture (NUMA) for isolation

- MoE model support: Doubles interconnect bandwidth to 19.2 Tb/s, with the Boardfly architecture reducing network diameter by over 50%

- Collectives Acceleration Engine: Offloads global operations, reducing on-chip latency by up to 5x

- Performance gains: Delivers 80% better performance-per-dollar compared to the previous generation

The TPU 8i architecture addresses the "memory wall" problem, in which processors sit idle waiting for data. This is a critical issue for real-time agent interactions, where even small delays compound at scale.
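The "network diameter" figure in the TPU 8i list refers to the largest number of hops between any two nodes in the interconnect. The announcement does not describe how Boardfly is wired, so the sketch below is only a generic illustration of the metric: it compares a plain ring with the same ring plus hypothetical shortcut links. The helper names `build_ring` and `diameter` are made up for this example.

```python
# Generic illustration of network diameter (longest shortest path, in hops).
# This is NOT the Boardfly topology, which the article does not describe;
# the ring and its shortcut links are hypothetical stand-ins.
from collections import deque


def build_ring(n, chord=None):
    """Adjacency sets for an n-node ring, optionally adding links of stride `chord`."""
    strides = (1,) if chord is None else (1, chord)
    adj = {i: set() for i in range(n)}
    for i in range(n):
        for step in strides:
            j = (i + step) % n
            adj[i].add(j)
            adj[j].add(i)
    return adj


def diameter(adj):
    """Return the maximum hop count between any pair of nodes (connected graph)."""
    worst = 0
    for src in adj:
        dist = {src: 0}
        queue = deque([src])
        while queue:  # breadth-first search from src
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        worst = max(worst, max(dist.values()))
    return worst


plain = diameter(build_ring(16))
shortcut = diameter(build_ring(16, chord=4))
print(f"ring diameter: {plain} hops, with shortcuts: {shortcut} hops")
```

In this toy case the shortcut links cut the diameter from 8 hops to 3. Fewer worst-case hops generally means lower latency for collective operations, which is the kind of effect the diameter reduction is aiming at.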

Business Impact: Efficiency and Scale for AI Workloads

The introduction of these specialized TPUs carries significant implications for organizations developing and deploying AI systems:

Training Efficiency

TPU 8t's massive scale and near-linear scaling capabilities enable organizations to train larger models faster, reducing development cycles and accelerating time-to-market for AI innovations. The 9,600-chip superpod architecture allows complex models to leverage a single, massive pool of shared memory, reducing the cross-cluster partitioning and communication overhead that smaller pods would impose.

"Every hardware failure, network stall or checkpoint restart is time the cluster is not training, and at frontier training scale, every percentage point can translate into days of active training time," notes Vahdat. The reliability features built into TPU 8t directly address this concern, maximizing productive compute time.

Inference Economics

For inference workloads, TPU 8i's performance-per-dollar improvement of 80% enables businesses to serve nearly twice the customer volume at the same cost. This economic advantage becomes increasingly important as organizations scale AI agents to handle more complex, multi-step interactions.
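The arithmetic behind the "nearly twice" claim can be sketched as follows. It assumes that served volume scales linearly with performance and that a given workload actually realizes the quoted 80% gain. Both are simplifications, so the numbers are indicative only.

```python
# Translate a performance-per-dollar gain into volume and unit-cost terms.
# Assumptions (not from the announcement): served volume scales linearly
# with performance, and the workload sees the full quoted gain.

def relative_volume(perf_per_dollar_gain: float) -> float:
    """Volume served at the same spend, relative to the previous generation."""
    return 1.0 + perf_per_dollar_gain


def relative_cost_per_request(perf_per_dollar_gain: float) -> float:
    """Cost per request at the same volume, relative to the previous generation."""
    return 1.0 / (1.0 + perf_per_dollar_gain)


GAIN = 0.80  # 80% better performance-per-dollar, from the article

print(f"volume at same cost: {relative_volume(GAIN):.2f}x")
print(f"cost per request:    {relative_cost_per_request(GAIN):.1%} of previous")
```

Under these assumptions, an 80% gain means about 1.8x the volume for the same spend, or roughly a 44% lower cost per request at constant volume.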

"In the agentic era, users expect to be able to ask questions, delegate tasks and get outcomes," explains Vahdat. "TPU 8i is designed to handle the intricate, collaborative, iterative work of many specialized agents, often 'swarming' together in complex flows to deliver solutions and insights for the most challenging tasks."

Power Efficiency

Both chips deliver up to two times better performance-per-watt over the previous generation, addressing the growing power constraints in data centers. This efficiency extends beyond the chips themselves to the entire system stack, including Google's fourth-generation liquid cooling technology that sustains performance densities air cooling cannot achieve.

"In today's data centers, power, not just chip supply, is a binding constraint," Vahdat states. "By owning the full stack, from Axion host to accelerator, we can optimize system-level energy efficiency in ways that simply cannot be achieved when the host and chip are designed independently."

Ecosystem Integration

Both platforms natively support JAX, MaxText, PyTorch, SGLang and vLLM, ensuring compatibility with existing development workflows. A bare-metal option gives customers direct hardware access without virtualization overhead.

Google's co-design philosophy extends to software. Its open-source contributions include MaxText reference implementations and Tunix for reinforcement learning, both intended to ease the transition from capability to production deployment.

Strategic Considerations for Organizations

For organizations evaluating these new TPUs, several strategic factors should be considered:

- Workload specialization: TPU 8t excels at large-scale training, while TPU 8i optimizes inference latency, so organizations should match chip selection to their primary workload requirements

- Scalability needs: TPU 8t's massive scale suits organizations training frontier models, while TPU 8i's efficiency benefits those deploying production AI agents

- Total cost of ownership: While initial investment may be significant, the performance-per-watt improvements offer long-term operational cost savings

- Ecosystem compatibility: Integration with existing ML frameworks and tools reduces migration friction

Both chips will be generally available later this year as part of Google's AI Hypercomputer, which combines purpose-built hardware, open software, and flexible consumption models into a unified stack. This integrated approach simplifies deployment and management of complex AI workloads.

As AI systems evolve toward increasingly autonomous agents capable of continuous learning and adaptation, infrastructure requirements will continue to diverge between training and inference phases. Google's specialized TPUs represent a strategic response to this trend, offering purpose-built solutions for each phase of the AI lifecycle.

Organizations looking to stay at the forefront of AI development should consider how these specialized architectures might accelerate their own AI initiatives, whether through faster model training, more efficient inference, or the ability to deploy more sophisticated agent systems. The TPU 8t and 8i chips, born from a decade of development and co-designed with Google DeepMind, position Google to continue leading in the infrastructure race that will define the agentic era.

For organizations interested in adopting these new TPUs, additional information is available through Google Cloud's TPU documentation and the official Google Cloud Next announcement.
