Cerebras Systems achieved a $66 billion valuation through its IPO, driven by its unique wafer-scale AI accelerators that challenge Nvidia's dominance in the AI chip market.

Cerebras Systems has accomplished what few semiconductor startups ever achieve: a successful initial public offering that values the company at over $66 billion. On Thursday, the AI chip maker raised $5.55 billion in its IPO, marking the first major tech IPO of 2026 and establishing Cerebras as a serious competitor to industry giant Nvidia.

The journey to this milestone spans more than a decade and represents a bold bet on a fundamentally different chip architecture. Founded in 2015 by former SeaMicro head Andrew Feldman, Cerebras challenged the conventional approach to AI accelerators by creating what would become known as the Wafer-Scale Engine (WSE).

A radical approach to AI chips

When Cerebras entered the market, most high-end GPUs followed a familiar pattern: manufacturers would pattern many dies of approximately 800 square millimeters each onto a wafer, cut them apart, and then stitch the resulting chips together using high-speed interconnects like NVLink so they could function as a single accelerator.

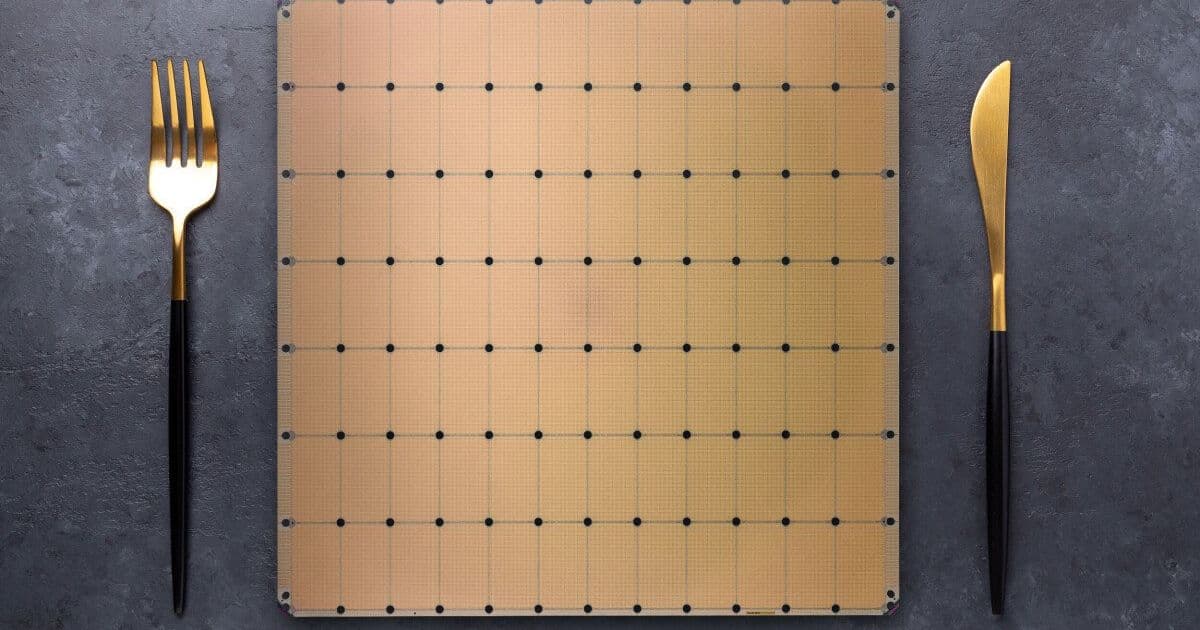

Cerebras took a different path. Instead of cutting up a wafer only to reconnect the pieces, the company decided to etch an entire compute system onto a single, massive wafer-scale chip. The result was the WSE, a chip measuring 46,225 square millimeters—roughly the size of a dinner plate. For more details on this unique architecture, you can explore Cerebras' technical documentation.
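To put the size difference in perspective, the two area figures quoted above can be compared directly. The short Python sketch below is back-of-the-envelope arithmetic only: it assumes a square die and ignores yield, scribe lines, and edge losses, and the function names are illustrative rather than anything Cerebras publishes.

```python
import math

# Figures quoted in the article.
WSE_AREA_MM2 = 46_225      # wafer-scale engine area
GPU_DIE_AREA_MM2 = 800     # approximate area of a high-end GPU die


def side_length_mm(area_mm2: float) -> float:
    """Edge length of a square die with the given area (assumes a square shape)."""
    return math.sqrt(area_mm2)


def equivalent_dies(big_area_mm2: float, small_area_mm2: float) -> float:
    """How many small dies would cover the same silicon area as the big one."""
    return big_area_mm2 / small_area_mm2


if __name__ == "__main__":
    print(side_length_mm(WSE_AREA_MM2))                      # 215.0 (mm per side)
    print(equivalent_dies(WSE_AREA_MM2, GPU_DIE_AREA_MM2))   # 57.78125
```

Under those assumptions, one WSE covers about as much silicon as 58 conventional GPU dies, none of which need an off-chip link to talk to each other.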

This approach offered several advantages. First, by eliminating the need for multiple chips and interconnects, Cerebras reduced communication bottlenecks that typically limit performance in multi-chip systems. Second, the company designed a novel compute engine specifically optimized for the sparse matrix multiply-accumulate operations prevalent in deep learning.

The hardware sparsity implementation was particularly innovative. Large portions of neural network parameters often end up as zeros during training, and multiplying by zero is wasted work. Cerebras' design skipped those operations, boosting the effective output of its first-generation WSE accelerators from 2.65 petaFLOPS of 16-bit compute to 26.5 petaFLOPS. Nvidia later added sparsity support in its Ampere generation, but with more restrictive ratios that limited effectiveness to specific use cases.
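The tenfold jump follows from simple arithmetic if the hardware skips every multiply by zero at no cost. The sketch below works through that idealized model; it is an assumption made for illustration, not a description of how Cerebras' silicon schedules work, and the function names are hypothetical.

```python
def effective_flops(dense_flops: float, zero_fraction: float) -> float:
    """Effective throughput when a fraction of operations are skipped for free.

    Idealized model: only the nonzero operations cost time, so the same
    hardware finishes the full workload 1 / (1 - zero_fraction) times faster.
    """
    if not 0.0 <= zero_fraction < 1.0:
        raise ValueError("zero_fraction must be in [0, 1)")
    return dense_flops / (1.0 - zero_fraction)


def implied_zero_fraction(dense_flops: float, sparse_flops: float) -> float:
    """Fraction of zeros needed to turn the dense figure into the sparse one."""
    return 1.0 - dense_flops / sparse_flops


if __name__ == "__main__":
    # First-generation WSE figures from the article, in petaFLOPS.
    print(f"{implied_zero_fraction(2.65, 26.5):.0%}")   # prints 90%
    print(f"{effective_flops(2.65, 0.9):.1f}")          # prints 26.5
    # A fixed 50% ratio, used here only as an example of a stricter scheme.
    print(effective_flops(1.0, 0.5))                    # prints 2.0
```

Read this way, the 26.5 petaFLOPS figure corresponds to a workload in which roughly nine in ten operands are zero. A scheme capped at a fixed ratio cannot benefit from sparsity beyond that ratio, which is why the ceiling matters.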

Evolution of the wafer-scale architecture

Cerebras didn't stop with its first-generation chip. In 2021, the company launched the WSE-2, which maintained the same physical size but moved to TSMC's 7nm manufacturing process. This transition more than doubled the transistor count, compute density, SRAM capacity, and bandwidth compared to the first generation.

The WSE-2 also supported larger clusters, scaling up to 192 systems, though practical deployments typically ranged between 16 and 32 systems per site. Around this time, Cerebras attracted significant investment from United Arab Emirates-based cloud provider G42, which quickly became the company's largest financier.

By mid-2023, Cerebras had secured orders worth $900 million for nine supercomputing sites delivering a combined 36 exaFLOPS of sparse AI compute. The following year, the company moved to TSMC's 5nm process with the WSE-3, doubling compute performance once again to reach 125 petaFLOPS of sparse compute (12.5 petaFLOPS dense) at 16-bit precision.

The inference pivot

Initially focused on AI training, Cerebras made a strategic pivot toward inference in mid-2024 with a boutique inference-as-a-service offering. This move proved prescient, as the company's accelerators turned out to be exceptionally well-suited for high-speed large language model inference.

The massive SRAM capacity of Cerebras' chips, combined with extraordinary memory bandwidth (21 PB/s in the WSE-3, nearly 1,000x the memory bandwidth of Nvidia's latest GPUs), created an ideal foundation for LLM inference. Paired with speculative decoding techniques, Cerebras' systems could generate tokens faster than any GPU-based system available at the time.
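Why bandwidth matters so much for token generation can be shown with a rough roofline-style estimate. The Python sketch below assumes that producing each token requires streaming every active weight from memory once, at batch size 1, with KV-cache traffic and compute time ignored. It also includes the standard expected-tokens formula for speculative decoding under a fixed, independent acceptance rate. The model size, GPU bandwidth, and acceptance rate are hypothetical inputs chosen for illustration, not measured values.

```python
def decode_ceiling_tokens_per_s(
    bandwidth_bytes_per_s: float, active_params: float, bytes_per_param: float
) -> float:
    """Upper bound on single-stream decode speed when memory-bound.

    Assumes every active weight is read once per generated token.
    """
    bytes_per_token = active_params * bytes_per_param
    return bandwidth_bytes_per_s / bytes_per_token


def expected_tokens_per_pass(accept_rate: float, draft_len: int) -> float:
    """Expected tokens emitted per pass of the large model with speculative decoding.

    Assumes each drafted token is accepted independently with probability
    accept_rate; ignores the cost of running the draft model.
    """
    if not 0.0 <= accept_rate <= 1.0:
        raise ValueError("accept_rate must be in [0, 1]")
    if accept_rate == 1.0:
        return float(draft_len + 1)
    return (1.0 - accept_rate ** (draft_len + 1)) / (1.0 - accept_rate)


if __name__ == "__main__":
    WSE3_BW = 21e15   # 21 PB/s, from the article
    GPU_BW = 22e12    # hypothetical GPU figure, picked to give a ratio near 1,000x
    params, width = 10e9, 2.0   # hypothetical model: 10B active params, 16-bit

    wse = decode_ceiling_tokens_per_s(WSE3_BW, params, width)
    gpu = decode_ceiling_tokens_per_s(GPU_BW, params, width)
    print(f"ceiling ratio: {wse / gpu:.0f}x")
    print(f"tokens per pass: {expected_tokens_per_pass(0.8, 4):.2f}")
```

These ceilings are far above what any real system delivers, since compute, interconnect, and scheduling all take their share. The point of the sketch is only that the ceiling scales linearly with memory bandwidth, and that speculative decoding multiplies whatever per-pass rate the hardware achieves.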

According to benchmarks from Artificial Analysis, Cerebras' hardware can process more than 2,200 tokens per second when running GPT-OSS 120B High, 2.8x faster than Fireworks, the next-fastest provider, which runs on GPUs. This performance advantage helped Cerebras attract major customers including AlphaSense, AWS, Cognition, Meta, Mistral AI, Notion, Perplexity, and eventually OpenAI. For more on its inference capabilities, see Cerebras' performance benchmarks.

The road to IPO

Cerebras first filed to go public in September 2024, but withdrew its S-1 filing a year later. The initial filing revealed that G42 accounted for 87% of the company's revenue, which raised concerns about customer concentration. However, the rapid growth of Cerebras' inference business in the interim provided a more compelling story for potential investors.

The successful IPO, which priced shares at $52 and saw them surge nearly 70% on the first day of trading, reflects market confidence in Cerebras' unique approach to AI acceleration. The company now trades on NASDAQ under the ticker CBRS. You can track its stock performance and find investor materials on the company's investor relations page.

Looking ahead

Technologically, Cerebras faces pressure to refresh its product line. The WSE-3 accelerators that powered the IPO are aging, and the architectural advantages provided by the SRAM-heavy design are narrowing as competitors catch up. Nvidia's acquisition of Groq, which brought an SRAM-packed inference platform into the fold, particularly intensifies competitive pressure.

Industry observers expect Cerebras to introduce a WSE-4 with significant improvements in floating-point performance, likely aligning with industry trends toward lower-precision data types like FP8 and FP4. The company may also leverage TSMC's 3D chip stacking technology to increase SRAM capacity beyond the current 44 GB limit, which would enable more efficient deployment of larger models.
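A quick estimate shows why both levers matter. The Python sketch below counts how many 44 GB wafers are needed just to hold a model's weights at different precisions. It treats 44 GB as 44e9 bytes, counts weights only (no KV cache, activations, or runtime overhead), and uses a hypothetical 120-billion-parameter model, so the results are lower bounds rather than deployment figures.

```python
import math

SRAM_BYTES_PER_WAFER = 44e9   # 44 GB on-chip SRAM, from the article

# Storage cost per parameter for each data type.
BYTES_PER_PARAM = {"FP16": 2.0, "FP8": 1.0, "FP4": 0.5}


def weight_bytes(num_params: float, dtype: str) -> float:
    """Bytes needed to store the weights alone at the given precision."""
    return num_params * BYTES_PER_PARAM[dtype]


def wafers_needed(
    num_params: float, dtype: str, sram_bytes: float = SRAM_BYTES_PER_WAFER
) -> int:
    """Minimum number of wafers whose combined SRAM can hold the weights."""
    return math.ceil(weight_bytes(num_params, dtype) / sram_bytes)


if __name__ == "__main__":
    params = 120e9   # hypothetical 120-billion-parameter model
    for dtype in ("FP16", "FP8", "FP4"):
        print(dtype, wafers_needed(params, dtype))
    # FP16 6
    # FP8 3
    # FP4 2
```

Halving the precision halves the wafer count in this simple model, and more SRAM per wafer would shrink it further, which is the efficiency gain the paragraph above points to.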

Strategically, Cerebras is likely to expand partnerships similar to its collaboration with AWS, which combines Trainium3 accelerators with Cerebras' WSE-3 systems to optimize inference performance. This hybrid approach could extend to other chipmakers, positioning Cerebras as a specialized decode accelerator that handles bandwidth-intensive aspects of inference while partners manage compute-heavy prompt processing.

As Cerebras transitions from a private startup to a public company, investors will be watching closely to see whether it can maintain its technological edge while broadening a customer base that has been heavily concentrated. The successful IPO provides both financial resources and market validation, but the real test lies in delivering the next generation of wafer-scale innovation that can sustain growth in an increasingly competitive AI chip market.
