An examination of Claude Code's capabilities in building dbt projects, revealing that while AI can significantly enhance data engineer productivity, it still requires human oversight and cannot fully replace the nuanced judgment and domain expertise of experienced data professionals.

The narrative that artificial intelligence will replace data engineers has gained considerable momentum in recent discussions. However, after a thorough examination of Claude Code's capabilities in building dbt projects, it becomes evident that this technology serves better as a powerful productivity enhancer rather than a replacement for skilled data engineers.

The Experiment: Testing Claude Code with dbt

The author conducted an experiment to determine how effectively Claude Code could build a dbt project for UK Environment Agency flood monitoring data. The prompt provided was comprehensive, outlining requirements for proper data modeling, handling data quality issues, implementing SCD type 2 snapshots, historical backfill, documentation, tests, and source freshness checks.

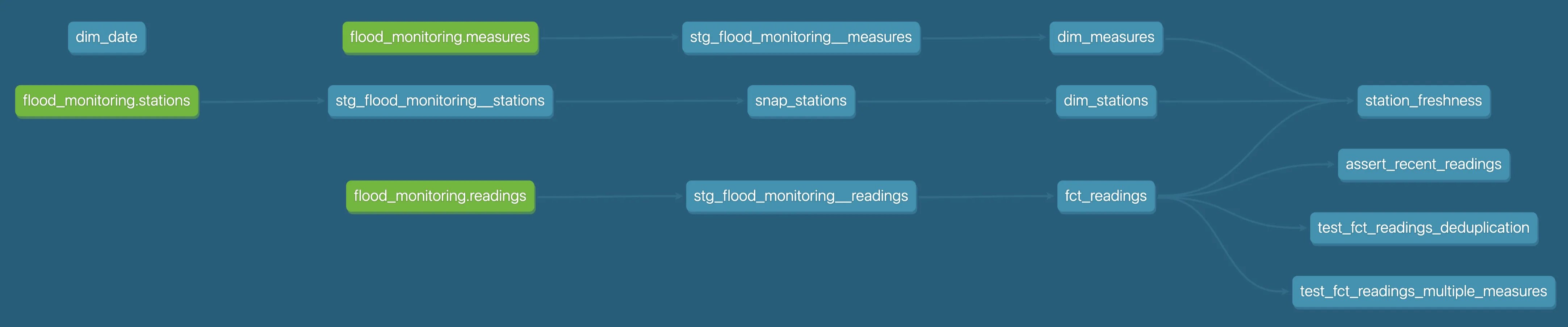

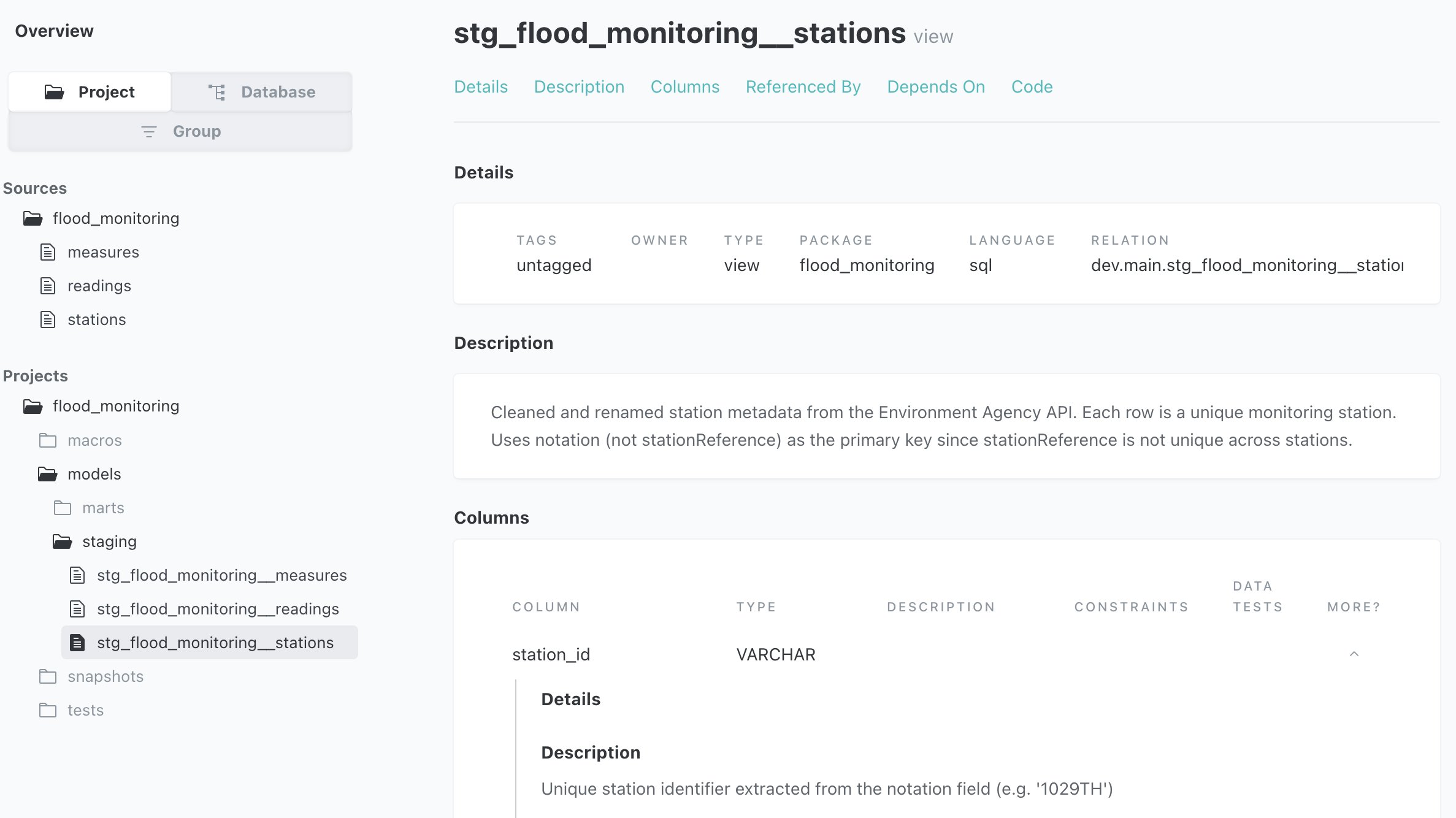

The experiment revealed that Claude Code, when given sufficient context and requirements, could construct a functional dbt project with appropriate directory structure, models, tests, and documentation. The project successfully compiled and executed, producing the expected data outputs in DuckDB.

What Claude Code Did Well

Claude demonstrated several impressive capabilities that align with data engineering best practices:

Proper Data Modeling: It created appropriate staging layers and dimensional models with correct relationships between entities.

Incremental Loading: Implemented incremental fact table loads with proper unique keys, optimizing performance for large datasets.

n3. Data Quality Handling: Addressed messy source data by implementing cleaning logic, such as handling pipe-delimited values by extracting the first value and casting appropriately.

Slowly Changing Dimensions: Successfully implemented SCD type 2 snapshots for station metadata, though interestingly only for stations as specified in the prompt.

Documentation and Testing: Generated appropriate documentation and comprehensive tests including not_null, unique, accepted_values, and freshness checks.

Backfill Functionality: Created a macro to generate archive URLs for historical data backfill, demonstrating understanding of temporal data requirements.

Code Quality: Avoided SELECT * statements, instead explicitly specifying columns, and implemented more elegant date logic than typical hand-written alternatives.

Where Claude Code Fell Short

Despite these successes, Claude Code made several significant errors that reveal current limitations:

Critical Data Incompleteness: The most concerning error was Claude's failure to fetch all station records. It implemented a Python script that only retrieved 1,493 out of 5,458 total stations due to misunderstanding API pagination limits. This created a substantial data gap that wouldn't be immediately apparent.

Silent Data Dropping: Claude silently dropped important columns (datumOffset, gridReference) from the dimension models, potentially losing valuable reference data without indicating this was happening.

Structural Issues: Duplicated the date_time field in the fact table, creating unnecessary redundancy.

Incomplete Implementation: While implementing SCD for stations, it only included some of the columns that might change, omitting potentially important fields like northing/easting.

Unit Handling: Dropped the unit column from measures, which while perhaps an optimization, represents a loss of potentially valuable data.

The Trust Problem

The most significant issue identified is not that Claude makes mistakes, but that it makes mistakes with confidence. As the author states: "WRONG IS WORSE THAN ABSENT BECAUSE YOU CAN'T TRUST IT."

When Claude confidently builds something incorrectly, it creates a trust crisis. Unlike a human who might ask clarifying questions or indicate uncertainty, AI proceeds with incorrect implementations as if they are correct. This makes verification more challenging than when starting from scratch, as one must not only check what was implemented but also identify what was missed or implemented incorrectly.

The Role of Prompt Engineering

The experiment revealed that prompt quality significantly impacts Claude's performance. When given a minimal prompt without detailed requirements, Claude only produced basic functionality. However, even with comprehensive prompts, Claude still made critical errors.

The author notes that "relying on prompting alone is cute for tricks, but it's not a viable strategy for reliable hands-off dbt code generation." This highlights that prompt engineering alone cannot overcome the fundamental limitations of current AI systems.

Model Comparison

Interestingly, the author found that the specific Claude model used (Opus 4.6 vs. Sonnet 4.5) mattered less than the quality of the prompt and available context. With proper context, Sonnet 4.5 produced respectable results, suggesting that model selection should not be the primary focus for improving AI-assisted data engineering workflows.

Implications for Data Engineering

The findings suggest several important implications for the data engineering profession:

Productivity Enhancement: Claude Code can significantly accelerate routine tasks, allowing data engineers to focus on higher-value work.

Shift in Responsibilities: Data engineers will increasingly shift from pure coding to supervisory roles, reviewing AI-generated code, making strategic decisions, and handling edge cases.

New Skill Requirements: Effective data engineers will need to develop skills in prompt engineering, AI output evaluation, and integration of AI tools into their workflows.

Quality Assurance Processes: Organizations will need to establish robust review processes for AI-generated code, particularly for critical data pipelines.

The Future: DE + AI > DE

The author concludes that the most effective approach is not choosing between human data engineers and AI, but leveraging both. Claude Code represents a powerful tool that, when used appropriately, can make data engineers vastly more productive.

The analogy presented is compelling: using Claude Code for data engineering is like an accountant choosing to do their sums by hand instead of using a calculator. While technically possible, it represents an unnecessary limitation on productivity and capability.

Conclusion

Claude Code demonstrates impressive capabilities in building dbt projects and can significantly enhance data engineer productivity. However, it still makes critical errors that require human oversight and correction. The technology is best viewed as a productivity booster rather than a replacement for data engineers.

As the author states, "if you value your job, use it [Claude Code] to one-shot a dbt project!" Instead, data engineers should embrace Claude Code as a collaborative tool that handles routine implementation while human experts provide strategic oversight, domain knowledge, and quality assurance.

The future of data engineering lies not in replacement by AI, but in augmentation through AI—a future where human expertise and artificial intelligence combine to create more robust, efficient, and valuable data solutions than either could achieve alone.

Comments

Please log in or register to join the discussion