Anthropic removes the long-context premium for Opus 4.6 and Sonnet 4.6, making the full 1M token window available at standard pricing with expanded media limits.

Anthropic has eliminated the long-context premium for its flagship models, making the full 1 million token context window available at standard pricing for Claude Opus 4.6 and Sonnet 4.6. The change, announced March 13, 2026, means developers can now access the complete context window without paying extra, with pricing remaining at $5/$25 per million tokens for Opus 4.6 and $3/$15 for Sonnet 4.6 regardless of context length.

Removing the long-context premium changes how developers can budget for context-heavy applications. Previously, requests exceeding 200,000 tokens incurred additional costs, a barrier for applications that need extensive context. Now a 900,000-token request is billed at the same per-token rate as a 9,000-token one.
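To make the flat-rate arithmetic concrete, here is a minimal Python sketch that estimates request cost from the prices quoted above. It assumes the first figure in each pair ($5 and $3) is the input rate and the second ($25 and $15) is the output rate, which the announcement summary does not spell out. The dictionary keys are local labels for illustration, not API model IDs.

```python
# Per-million-token rates in USD, as quoted in the announcement.
# Assumption: first figure = input rate, second figure = output rate.
RATES = {
    "opus-4.6": {"input": 5.00, "output": 25.00},
    "sonnet-4.6": {"input": 3.00, "output": 15.00},
}


def request_cost(model: str, input_tokens: int, output_tokens: int = 0) -> float:
    """Estimate the cost of one request in USD at a flat per-token rate."""
    rate = RATES[model]
    total = input_tokens * rate["input"] + output_tokens * rate["output"]
    return total / 1_000_000


small = request_cost("opus-4.6", 9_000)
large = request_cost("opus-4.6", 900_000)
print(f"9K-token input:   ${small:.3f}")
print(f"900K-token input: ${large:.2f}")
```

Under these assumptions the larger request costs exactly 100 times the smaller one, matching its 100-times-larger token count, with no surcharge for crossing 200,000 tokens.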

Beyond pricing, the update brings expanded media capabilities. Users can now include up to 600 images or PDF pages per request, up from the previous limit of 100. This expansion particularly benefits applications involving document analysis, code review, and multimedia processing.
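For documents that still exceed the new cap, a client can split the media into batches before sending. The sketch below is illustrative only: `batch_media` is a hypothetical helper, and it assumes each image or PDF page counts as one item toward the 600-item limit, which the announcement does not detail.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")

# Per-request cap on images or PDF pages stated in the announcement.
MAX_MEDIA_ITEMS = 600


def batch_media(items: Sequence[T], limit: int = MAX_MEDIA_ITEMS) -> List[List[T]]:
    """Split items into consecutive batches of at most `limit` items each."""
    if limit <= 0:
        raise ValueError("limit must be positive")
    return [list(items[i:i + limit]) for i in range(0, len(items), limit)]


# Hypothetical 1,400-page document represented by page labels.
pages = [f"page-{n}" for n in range(1, 1401)]
batches = batch_media(pages)
print([len(b) for b in batches])  # [600, 600, 200]
```

At the previous limit of 100, the same document would have needed 14 requests instead of 3.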

For developers already using the beta version of 1M context, no code changes are required. Requests over 200,000 tokens are handled automatically, with no special header needed. The feature is available today natively on Claude Platform and through Microsoft Azure Foundry and Google Cloud's Vertex AI.
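As a rough illustration of what "no special header" means in practice, the following Python sketch builds a plain Messages API request with only the standard headers. The model ID `claude-opus-4-6` is an assumed placeholder, so check the official model list for the exact identifier. `build_request` is a hypothetical helper, and the request is constructed but not sent.

```python
import json
import os
import urllib.request

API_URL = "https://api.anthropic.com/v1/messages"
MODEL_ID = "claude-opus-4-6"  # assumed placeholder, not confirmed by the article


def build_request(prompt: str, max_tokens: int = 1024) -> urllib.request.Request:
    """Build (but do not send) a Messages API request with standard headers only."""
    headers = {
        "x-api-key": os.environ.get("ANTHROPIC_API_KEY", ""),
        "anthropic-version": "2023-06-01",
        "content-type": "application/json",
        # No long-context "anthropic-beta" header is set: per the announcement,
        # requests over 200,000 tokens no longer need one.
    }
    body = {
        "model": MODEL_ID,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    data = json.dumps(body).encode("utf-8")
    return urllib.request.Request(API_URL, data=data, headers=headers, method="POST")


req = build_request("Summarize the attached case file.")
# Sending is left to the caller, e.g. urllib.request.urlopen(req).
```

The same request shape applies whether the prompt is 9,000 or 900,000 tokens long. Only the size of the `content` field changes.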

Claude Code users on Max, Team, and Enterprise plans with Opus 4.6 access will automatically default to the full 1M context window. This enhancement allows for longer, more coherent conversations without the context compaction that previously forced details to vanish during extended sessions.

Real-World Impact: From Code Review to Legal Discovery

The expanded context window addresses a fundamental limitation in AI-assisted workflows. Anton Biryukov, a software engineer, describes how Claude Code previously "burned 100K+ tokens searching Datadog, Braintrust, databases, and source code. Then compaction kicks in. Details vanish. You're debugging in circles."

With 1M context, developers can now search, re-search, aggregate edge cases, and propose fixes all within a single window. This capability transforms debugging from a fragmented process into a cohesive investigation.

Legal and research applications particularly benefit from the expanded context. Mauricio Wulfovich, ML engineer at Eve, explains that "plaintiff attorneys' hardest problems demand it. Whether it's cross-referencing a 400-page deposition transcript or surfacing key connections across an entire case file, the expanded context window lets us deliver materially higher-quality answers than before."

Scientific research represents another domain where the 1M context window proves transformative. Dr. Alex Wissner-Gross, co-founder of a physics research organization, notes that "scientific discovery requires reasoning across research literature, mathematical frameworks, databases, and simulation code simultaneously. Claude Opus 4.6's 1M context and expanded media limits let our agentic systems synthesize hundreds of papers, proofs, and codebases in a single pass, helping us dramatically accelerate fundamental and applied physics research."

Performance and Efficiency Gains

The practical benefits extend beyond simply having more context. Adhyyan Sekhsaria, founding engineer at Cognition Labs, reports that their Devin Review agent became "significantly more effective" with 1M context. Previously, large diffs didn't fit in a 200K context window, forcing the agent to chunk context and lose cross-file dependencies. "With 1M context, we feed the full diff and get higher-quality reviews out of a simpler, more token-efficient harness."

Jon Bell, CPO at an AI company, observed a 15% decrease in compaction events after raising their Opus context window from 200K to 500K. "Now our agents hold it all and run for hours without forgetting what they read on page one."

Izzy Miller, AI research lead, found that expanding context actually improved efficiency: "We raised our Opus context window from 200k to 500k and the agent runs more efficiently — it actually uses fewer tokens overall. Less overhead, more focus on the goal at hand."

Technical Foundation: Performance at Scale

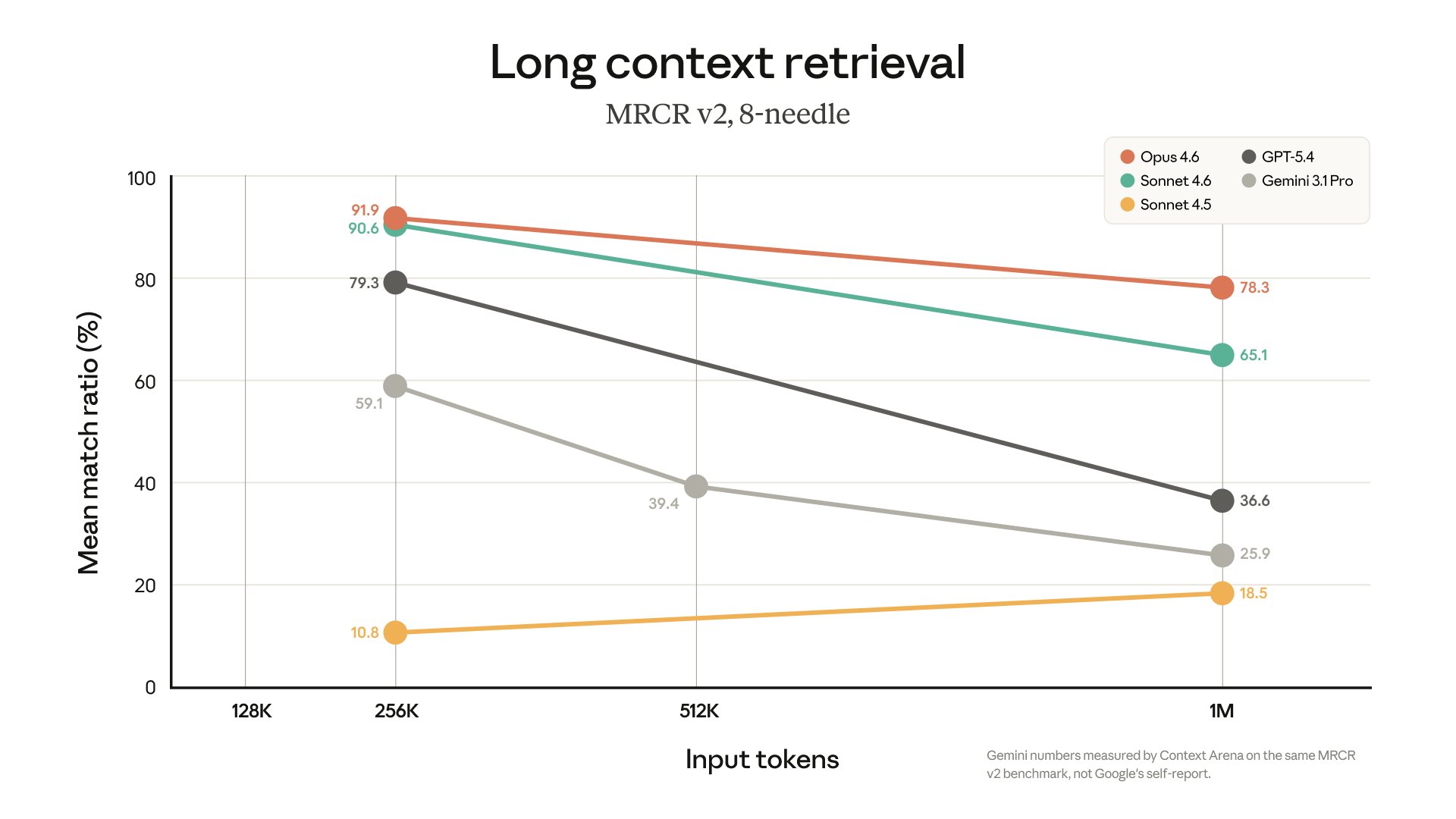

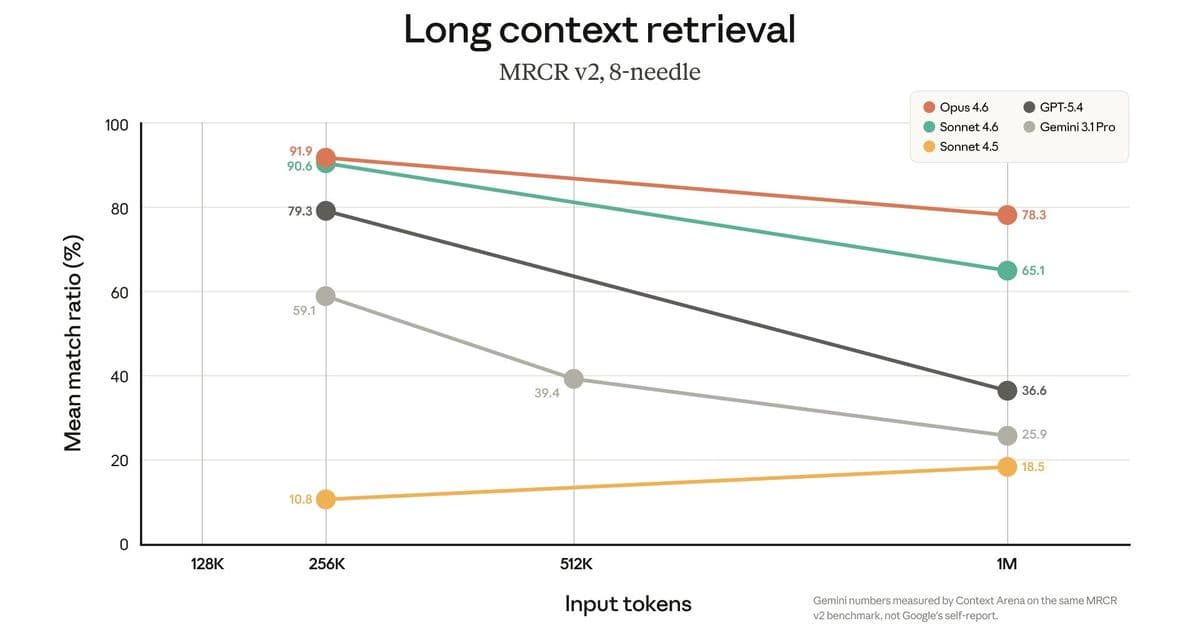

The expanded context window is not only about quantity; it also has to maintain quality at scale. Opus 4.6 achieves 78.3% on MRCR v2, the highest score among frontier models at the 1M token context length. The result suggests the model can still locate and reason over information spread across the full window, rather than degrading sharply as context grows.

Anthropic emphasizes that "long context retrieval has improved with each model generation," addressing a common criticism of large context windows where models struggle to locate relevant information buried deep within the context.

Industry Context and Competitive Landscape

Anthropic's move to standardize 1M context pricing follows a broader industry trend toward larger context windows. OpenAI's GPT-4 Turbo offers 128K context, while Google's Gemini models provide up to 1M tokens. However, Anthropic's decision to eliminate the premium for long context represents a more aggressive pricing strategy.

This approach could pressure competitors to reconsider their own pricing models for extended context. The move aligns with Anthropic's broader strategy of making advanced AI capabilities more accessible to developers and enterprises.

Getting Started with 1M Context

The feature is available immediately through multiple channels. Developers can access it natively on Claude Platform or through cloud providers including Amazon Bedrock, Google Cloud's Vertex AI, and Microsoft Foundry. Claude Code users on supported plans will automatically utilize the expanded context.

Anthropic provides comprehensive documentation and pricing details for teams looking to implement the new capabilities. The company suggests that applications involving document analysis, code review, scientific research, and complex reasoning tasks will see the most immediate benefits.

The removal of the long-context premium, combined with expanded media limits and maintained performance at scale, positions Claude as a more capable platform for complex, context-heavy applications. As AI systems increasingly handle multi-step reasoning across vast information spaces, the ability to maintain coherent context without cost penalties becomes a critical competitive advantage.
