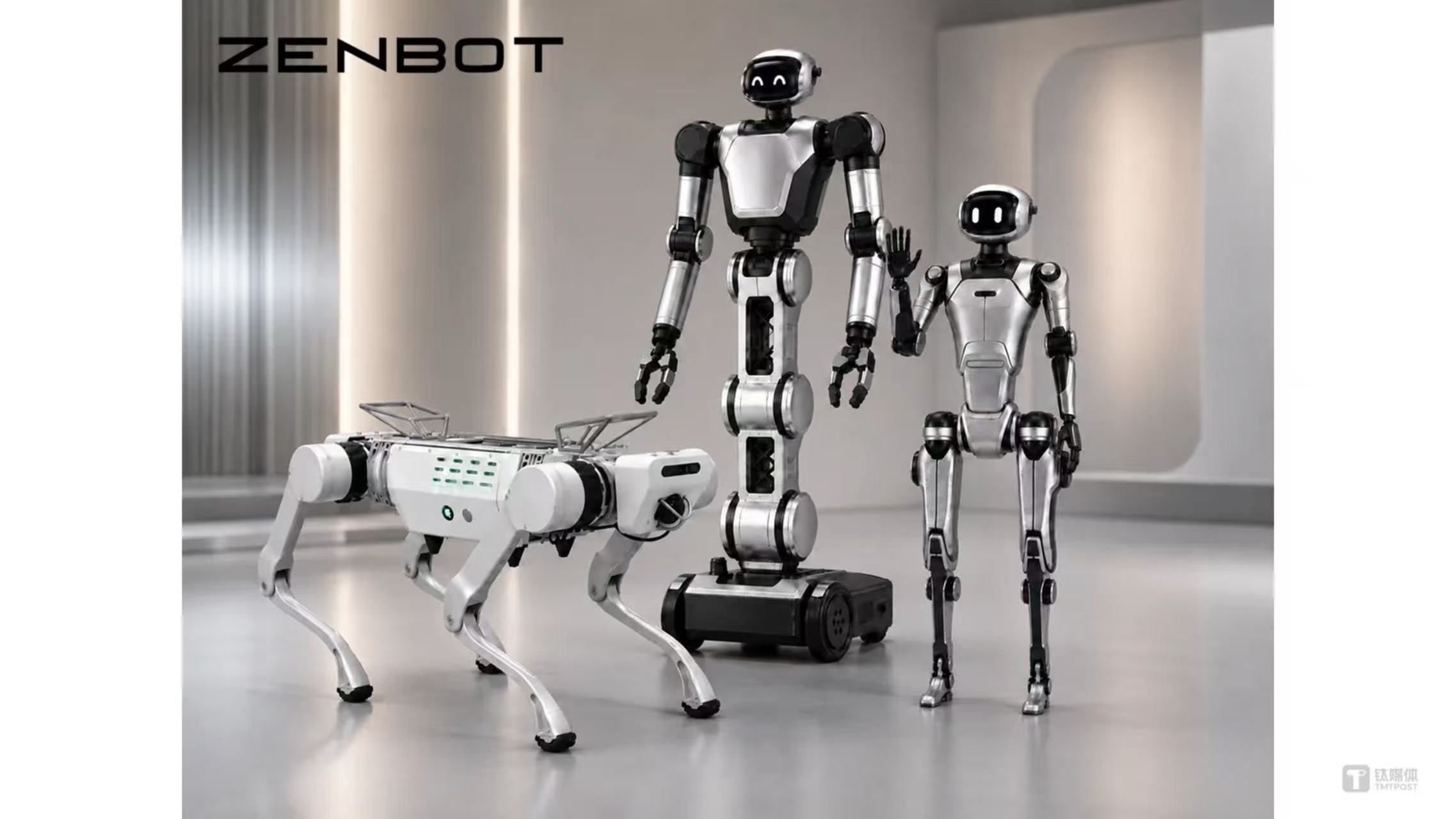

Zenbot closed an angel round of roughly 100 million yuan, backed by several precision‑manufacturing firms. The money will fund a general‑purpose embodied AI world model, GaN‑based joint modules, and a brain‑spine communication stack, but the roadmap still hinges on unproven hardware pipelines and a lack of demonstrated large‑scale deployments.

Zenbot’s Angel Round: What’s Real and What Remains to Be Shown

Zenbot, a Shanghai‑based company that describes itself as an "embodied artificial intelligence infrastructure" provider, has announced the close of an angel round worth close to 100 million CNY (about US$14 million). The round was led by three listed precision‑manufacturing groups, ChangYing Precision (300115.SZ), Kedali (002850.SZ) and Zhaoming Technology (301000.SZ), along with L2F Light Source Entrepreneur Fund and Sirius Capital. Light Source Capital acted as exclusive financial adviser.

What the announcement claims

- General‑purpose embodied AI world model – a unified representation that supposedly lets a robot understand and act in any environment, similar in ambition to the world‑model research pursued at large AI labs but with a hardware‑centric twist.

- Third‑generation GaN joint drivers – a custom semiconductor driver stack built on gallium‑nitride (GaN) technology, advertised as lighter, faster, and more power‑efficient than the silicon‑based motor drivers common in current service robots.

- Brain‑spine real‑time communication architecture – a proprietary low‑latency link between perception (the "brain") and actuation (the "spine"), claimed to enable sub‑millisecond response times.

- Full‑stack system design for mass production – the funding will supposedly allow Zenbot to move from lab prototypes to a production line capable of delivering dozens of units per month.

What’s actually new?

1. A world‑model focus that leans on existing research

The term world model has circulated in the machine‑learning and robotics communities since the 2018 paper by Ha and Schmidhuber, and more recently in work such as the Dreamer family of model‑based agents and Google DeepMind’s Genie. Zenbot’s public materials do not disclose whether their model will be a transformer‑style architecture, a diffusion‑based generative model, or something else. Without a technical whitepaper or open‑source code, the claim remains a high‑level goal rather than a concrete contribution.

2. GaN drivers for joint actuation

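GaN transistors are gaining traction in power electronics, so far mostly in compact chargers and power supplies (the often‑cited Tesla Model 3 inverter uses silicon carbide, a different wide‑bandgap material). Their main advantage is faster switching with lower switching losses, which allows higher PWM frequencies, smaller passive components, and more compact drive electronics inside a joint. However, integrating GaN into compact robotic joints is non‑trivial: thermal management, packaging, and controller firmware all need bespoke engineering. Most commercial robot arms still rely on silicon MOSFETs because the ecosystem around GaN is less mature. Zenbot’s announcement appears to be the first public claim of a dedicated "third‑generation" GaN driver for humanoid joints (the label may echo the Chinese industry term "third‑generation semiconductor" for wide‑bandgap materials such as GaN and SiC), but no performance numbers (peak current, thermal resistance, latency) are provided.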
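To make the switching argument concrete, here is a rough first‑order estimate. It is not based on any Zenbot data: it uses the common hard‑switching approximation P_sw ≈ ½·V·I·(t_rise + t_fall)·f_sw, and every numeric value (bus voltage, phase current, edge times, PWM frequencies) is an illustrative assumption chosen only to show the shape of the trade‑off.

```python
def switching_loss_w(v_bus, i_load, t_rise_s, t_fall_s, f_sw_hz):
    """First-order hard-switching loss of one transistor, in watts.

    Uses P_sw ~= 0.5 * V * I * (t_rise + t_fall) * f_sw and ignores
    conduction, gate-drive, and dead-time losses.
    """
    return 0.5 * v_bus * i_load * (t_rise_s + t_fall_s) * f_sw_hz


# All values below are illustrative assumptions, not Zenbot specifications.
V_BUS = 48.0    # DC bus voltage in volts (assumed)
I_LOAD = 20.0   # phase current in amperes (assumed)

# Assumed silicon MOSFET: 50 ns rise/fall edges, 20 kHz PWM.
si_loss = switching_loss_w(V_BUS, I_LOAD, 50e-9, 50e-9, 20e3)

# Assumed GaN FET: 5 ns rise/fall edges, 100 kHz PWM.
gan_loss = switching_loss_w(V_BUS, I_LOAD, 5e-9, 5e-9, 100e3)

print(f"Si  @ 20 kHz:  {si_loss:.2f} W per switch")
print(f"GaN @ 100 kHz: {gan_loss:.2f} W per switch")
```

Under these assumed numbers the GaN device switches five times as often yet dissipates about half the switching loss (0.48 W versus 0.96 W per switch). Real figures depend on the specific device, gate driver, and board layout, which is exactly the data the announcement does not provide.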

3. Brain‑spine communication stack

Low‑latency links between perception and actuation have been explored in projects like Boston Dynamics’ Atlas (reportedly using a custom EtherCAT‑style bus) and Nvidia’s Isaac platform (which builds on ROS 2 and its DDS middleware). Zenbot’s "brain‑spine" architecture sounds similar to a proprietary DDS variant, but again, the lack of protocol specifications or benchmark data makes it hard to assess whether the claimed sub‑millisecond response offers any practical advantage over existing open standards.
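A "sub‑millisecond" claim is only meaningful once it says which statistic it refers to. The sketch below shows one way published benchmark data could be summarized against a 1 ms budget. The function name, the budget default, and the sample values are all hypothetical; nothing here reflects Zenbot’s actual protocol or measurements.

```python
def summarize_latency(samples_us, budget_us=1000.0):
    """Summarize round-trip latency samples (microseconds) against a budget."""
    if not samples_us:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples_us)
    n = len(ordered)

    def percentile(p):
        # Nearest-rank percentile for an integer p in 1..100,
        # computed with integer arithmetic: rank = ceil(p * n / 100).
        rank = max(1, (p * n + 99) // 100)
        return ordered[rank - 1]

    worst = ordered[-1]
    return {
        "mean_us": sum(ordered) / n,
        "p50_us": percentile(50),
        "p99_us": percentile(99),
        "max_us": worst,
        # A hard real-time budget has to hold for the worst sample,
        # not just for the average.
        "within_budget": worst <= budget_us,
    }


# Hypothetical measurements: mostly fast, with one slow outlier.
samples = [200.0] * 98 + [400.0, 1500.0]
report = summarize_latency(samples)
print(report)
```

With these made‑up samples the mean is 215 µs and the 99th percentile is 400 µs, yet the worst case is 1,500 µs, so the link fails a strict 1 ms budget. This is why tail and worst‑case latency, not averages, are the numbers worth asking for.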

4. Manufacturing backing from precision‑tool firms

The involvement of ChangYing, Kedali, and Zhaoming is noteworthy because these companies specialize in high‑precision CNC, laser machining, and micro‑assembly. Their participation suggests Zenbot plans to outsource the fabrication of joint modules and perhaps the chassis of its robots. This could accelerate a move to volume production, but it also ties the startup’s timeline to the capacity and quality control of external suppliers, an often‑overlooked risk for hardware‑first AI firms.

Limitations and open questions

| Area | Current status | What’s missing |
|---|---|---|
| World model | Conceptual goal; no paper or demo | Architecture details, training data scale, benchmark results (e.g., Sim2Real transfer) |
| GaN drivers | Prototype claim; no specs | Efficiency numbers, thermal tests, comparison to silicon baselines |
| Communication stack | Named but undocumented | Latency measurements, compatibility with ROS 2 or other middleware |
| Manufacturing pipeline | Funding earmarked for tooling | Yield rates, cost per joint, supply‑chain resilience for GaN components |

Without at least a prototype video or a technical pre‑print, investors and potential customers have little to evaluate beyond the headline numbers. The funding amount, while respectable for a Chinese angel round, is modest compared to the multi‑hundred‑million budgets required to bring a humanoid robot from prototype to market.

How this fits into the broader embodied AI trend

China has been nurturing a cluster of hardware‑focused AI startups, often backed by state‑linked manufacturing groups. Zenbot’s approach mirrors that of companies like CloudMinds (cloud‑connected robots) and UBTech (service humanoids), but it tries to differentiate by pushing the hardware stack (GaN‑driven actuation and a custom communication bus) further upstream. If the engineering challenges can be solved, the company could offer a more power‑efficient platform for applications such as warehouse automation, inspection, or low‑payload service robots.

Bottom line

Zenbot’s near‑100 M CNY angel round signals serious intent to build a vertically integrated embodied AI platform, and the backing of precision‑manufacturing investors adds credibility to the hardware ambition. However, the public information stops at marketing‑style bullet points; there is no independent validation of the world‑model algorithm, GaN driver performance, or the claimed sub‑millisecond brain‑spine link. Until a technical paper, open‑source demo, or at least a performance video appears, the startup remains an interesting hardware hypothesis rather than a proven solution.

For further reading on GaN in robotics, see the recent IEEE review "Gallium Nitride Power Electronics for Mobile Robotics" (2025).
