Microsoft Security Store Advisor was created using an Agentic Software Development Life Cycle (SDLC) that couples specialized AI agents with mandatory human gates. The approach reduced cycle time by 60%, cut pre‑production defects by 38%, and delivered a defensible, security‑focused AI assistant for the Microsoft Security Store marketplace.

From Idea to Production – Building Microsoft Security Store Advisor with an Agentic SDLC

Published May 14, 2026

Authors: Sudarshan Jagannathan and Janaki Ramachandran

What changed?

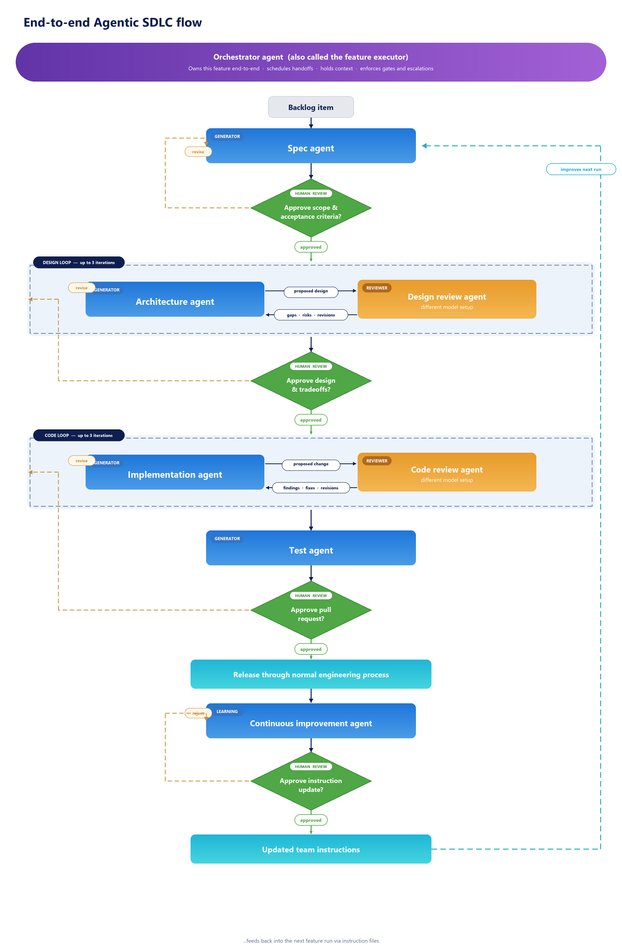

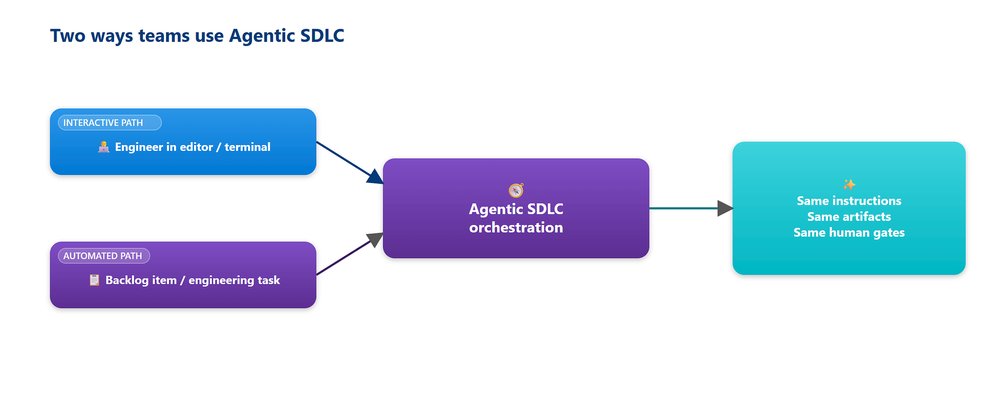

Microsoft Security Store needed an AI‑driven assistant that could understand natural‑language queries, surface relevant security solutions, and do so with a level of accountability suitable for a security‑sensitive product. Traditional AI usage patterns – isolated code suggestions or fully autonomous agents – either failed to compound learning across releases or introduced unacceptable risk. The team therefore introduced an Agentic SDLC, a pipeline where each step is handled by a dedicated AI agent, each agent is reviewed by a different model, and every transition is gated by a human decision.

Provider comparison and architectural choices

| Aspect | Conventional AI‑assist workflow | Agentic SDLC (Microsoft) |

|---|---|---|

| Model usage | Single model for generation and review (often the same Copilot instance) | Separate model families for generators and reviewers – e.g., gpt‑4o‑mini for generation, gpt‑4‑turbo with a skeptical system prompt for review |

| Human involvement | Optional, usually after a PR is opened | Mandatory gate after each stage (spec, design, code, test, post‑production) |

| Instruction handling | Ad‑hoc prompts, no version control | Version‑controlled instruction files travel with every agent, defining coding patterns, security expectations, dependency rules, and review checklists |

| Observability | Logs in separate systems, manual correlation | Automatic audit trail in Azure DevOps: agent name, artifact produced, approval version, linked work items – all captured in a standardized review template |

| Continuous improvement | Manual retrospectives, occasional post‑mortems | Dedicated continuous‑improvement agent that reads the full run record, proposes instruction‑file updates, and opens a PR for human approval |

Why the Agentic approach matters for Microsoft Security Store Advisor

- Security‑first gating – No agent can merge code or change scope without a human sign‑off, ensuring every recommendation is defensible.

- Model diversity – Using distinct models for generation and critique prevents the well‑known “self‑approval” problem where a model agrees with its own output.

- Instruction‑file discipline – Plain‑language, version‑controlled policies give the team a single source of truth that every agent consumes, making compliance auditable.

- Cost and latency control – Iteration caps (three rounds per stage) keep AI usage predictable while still allowing the model to self‑correct before involving a person.

Business impact – Stage‑by‑stage lessons

1. Spec – From intent to scoped work

The spec agent originally treated every “may eventually want” clause as a hard goal, inflating designs. The team added a non‑goals section to the instruction file and required the spec agent to emit goals, non‑goals, and open questions before seeking approval. Result: scope decisions were locked in after one or two human turns, eliminating re‑work later in the pipeline.

2. Architecture – Making the reviewer actually review

Running the design generator and reviewer on the same model led to a 95 % agreement rate – essentially a single opinion. Switching the reviewer to a different model family and giving it a skeptical system prompt (focused on security, scalability, privacy, operability) introduced a real check. An explicit checklist in the instruction file further sharpened the review, catching misuse cases before any human looked at the design.

3. Implementation – Avoiding whole‑file rewrites

The implementation agent initially produced full‑file replacements, creating massive pull requests. Two disciplines solved this:

- The orchestrator now loads only files within the spec’s blast radius (computed via a dependency‑graph cut).

- The implementation agent must output diff‑only edits with per‑hunk justification; full rewrites require an explicit “reason for rewrite” flag. PR size dropped ~70 %, and reviewers could focus on judgment rather than line‑by‑line diffing.

4. Test – Beyond green tests

Generated tests covered the spec but missed real‑world edge cases such as malformed queries, partial authentication, and prompt‑injection strings. The test agent was fed synthetic inputs derived from sanitized production telemetry and a security‑cases checklist from the instruction file. Defects per feature in pre‑production fell by 38 %.

5. Post‑production – Agents that assist, not alarm

The first bug‑hunter agent generated alerts for every anomaly, quickly overwhelming on‑call staff. A severity × novelty scoring system now filters alerts; only high‑score items page on‑call, while the rest are batched for weekly triage. The incident‑response agent now assembles runbooks and context for the human on‑call rather than acting autonomously. The telemetry & feedback agent proposes backlog items and instruction‑file updates, but a person must approve each change.

Governance and observability – Three rails of control

- Human approval rail – Every stage pauses for a person before the next agent runs.

- Logging rail – Each agent logs its run, artifact, and approval version to Azure DevOps, linking to work items.

- Audit‑template rail – A standardized template captures the full decision trail, enabling auditors to reconstruct any change months later.

These rails make the pipeline auditable and feed the continuous‑improvement agent, which diffs instruction‑file proposals against the current set, attaches evidence (session IDs, PR numbers, incident tickets), and opens a PR for review.

Quantitative outcomes

| Metric | Before Agentic SDLC | After (first four March features) | Change |

|---|---|---|---|

| Cycle time (spec → production) | 4.5 weeks (baseline) | ~1.8 weeks | –60 % |

| Pre‑production defects per feature | 5.2 | 3.2 | –38 % |

| PR size (lines changed) | 1,200 avg. | 360 avg. | –70 % |

| Human review time per PR | 45 min | 15 min | –66 % |

Security improvements are reflected in the fact that every spec, design, and PR is independently challenged by a different model before any human sees it, and the instruction files enforce consistent security expectations across the entire lifecycle.

What remains hard

- Evaluating continuous‑improvement impact – Measuring whether an instruction‑file change improves the next feature requires more data points; the signal is still stabilizing.

- Cross‑repo / cross‑team orchestration – The orchestrator works well for single‑repo features, but coordinating changes across multiple repositories or teams still relies on a human lead.

How to try it yourself

- Visit the Microsoft Security Store and launch the Security Store Advisor.

- Review the Microsoft Security Store documentation for integration details.

- Read the Responsible AI FAQ for Security Store Advisor to understand the safeguards built into the pipeline.

- See the launch announcement in the Microsoft Security Store blog post.

Closing thoughts

The Agentic SDLC shows that AI can be a productive partner when its output is always reviewed by a distinct model and always approved by a person. By codifying security expectations in version‑controlled instruction files and closing the loop with a continuous‑improvement agent, Microsoft turned a risky AI experiment into a repeatable, auditable production system. The result is a faster delivery cadence, fewer defects, and a defensible AI assistant that customers can trust.

Comments

Please log in or register to join the discussion