An in‑depth look at the new std::simd library in C++26, its historical roots, how it compares to existing SIMD solutions, and why its design choices make it a poor fit for performance‑critical code such as high‑frequency trading, image codecs, and scientific kernels.

Why C++26’s std::simd Misses the Mark for Real‑World SIMD

Thesis

The inclusion of std::simd in C++26 fulfills a promise that has long existed—write SIMD code once and compile it everywhere—but the library arrives after compilers, third‑party libraries, and domain‑specific languages have already solved the hard parts more efficiently. Its fixed‑width, template‑heavy design hides SIMD instructions from the optimizer, leads to massive compile‑time overhead, and cannot express the cross‑lane operations that dominate real SIMD workloads. Consequently, in most performance‑critical domains, std::simd is slower than a plain scalar loop and far less useful than alternatives such as Google Highway, SIMDe, xsimd, EVE, or ISPC.

1. The Historical Path to std::simd

- 2009‑2010 – Vc library – Matthias Kretz built a portable, zero‑overhead C++ abstraction for vectorizing high‑energy‑physics simulations. It demonstrated that the type system could express data‑parallelism without intrinsics.

- 2016 – P0214 – The idea entered the standardization process as a Parallelism Technical Specification (TS 19570:2018). GCC 11 shipped an experimental

<experimental/simd>header in 2021, while Kretz maintained a standalone reference implementation. - 2026 – P1928 – After nearly a decade of committee discussion,

std::simdgraduated from experimental to the C++26 standard.

During that decade, three forces reshaped the SIMD ecosystem:

- Auto‑vectorizers in GCC, Clang, and MSVC grew powerful enough to lift most straight‑line loops to the widest registers available.

- ISPC proved that a language‑level SPMD model could generate better code than any library abstraction.

- ARM’s SVE introduced scalable‑width vectors, a concept that clashes with the fixed‑width model used by

std::simd.

The result is a library that solves a problem that compilers already handle well, while ignoring the problems that still force developers to write hand‑crafted intrinsics.

2. The Libraries That Already Own the Space

| Library | Key Feature | Runtime Dispatch | Width Model | Notable Users |

|---|---|---|---|---|

| Google Highway | Length‑agnostic SIMD with runtime dispatch | Yes | Scalable (SVE) | Chromium, Firefox, JPEG‑XL, libaom |

| SIMDe | Portable intrinsics – translate Intel intrinsics to ARM/Power | No (compile‑time) | Fixed (Intel‑centric) | FFmpeg, libjpeg‑turbo |

| xsimd | Batch types for many ISAs, tight integration with xtensor | No (compile‑time) | Fixed | Numerical‑computing stack |

| EVE | Concepts‑driven, modern C++20 design | No | Fixed | Research prototypes |

| Agner Fog VCL | Thin wrappers around x86 intrinsics, predictable codegen | No | Fixed (x86 only) | Scientific code bases |

Highway’s runtime dispatch lets a single binary adapt to the best ISA at execution time, a capability that std::simd lacks entirely. SIMDe’s approach of making intrinsics portable is pragmatic for migration, but it still forces developers to think in Intel‑centric terms. None of these libraries suffer from the optimizer‑visibility problem that plagues std::simd because they either expose the intrinsics directly or generate code that the compiler can still reason about.

3. Compile‑Time Tax of a Template Library

Including <experimental/simd> pulls in a cascade of nested templates (simd.h, simd_x86.h, simd_builtin.h, …). A trivial function that computes sin on a SIMD vector can take ≈2.2 s to compile, while the equivalent scalar loop compiles in ≈0.2 s. In a large HFT code base with hundreds of translation units, this overhead translates into minutes of wasted build time for code that ultimately runs slower.

Error messages also become unwieldy. A misuse of where() with std::float16_t generates more than a hundred lines of template instantiation traces, obscuring the original source location. A language‑level SIMD feature could emit diagnostics that point directly at the offending expression.

4. Performance: When std::simd Is Slower Than Nothing

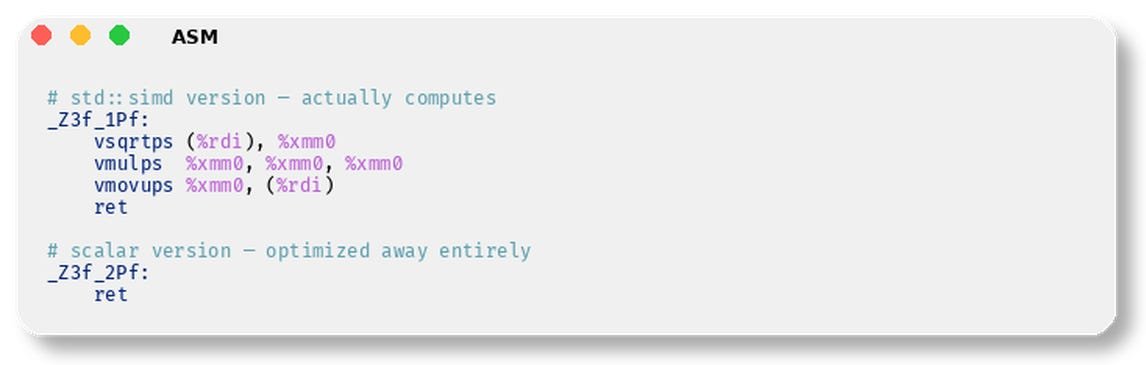

4.1 Auto‑vectorizer Wins

With -O3 -ffast-math -march=native, a scalar loop that calls std::sin is auto‑vectorized by GCC/Clang using the libmvec implementation. The generated code runs ≈2.4× faster than the explicit std::simd version because the optimizer can inline the vector math library and apply algebraic simplifications.

4.2 The Default Width Problem

std::simd<int>::size() reports the ABI‑safe width, which on most current compilers is 128 bits (four 32‑bit ints) even on AVX‑512 hardware. Consequently, a hand‑written std::simd loop on an AVX‑512 machine processes only half the data per iteration, while the scalar loop auto‑vectorizes to the full 512‑bit width.

| Platform | Default width used by std::simd | Width used by auto‑vectorizer |

|---|---|---|

| AVX2 (256‑bit) | 128 bits | 256 bits |

| AVX‑512 (512‑bit) | 128 bits | 512 bits |

The benchmark in the original article shows 326 ns for the std::simd version versus 137 ns for the scalar loop on an AVX‑512 CPU.

4.3 ARM SVE – Fixed Width vs. Scalable Width

On aarch64 with SVE, the compiler can generate predicated load/store instructions that adapt to the runtime vector length. std::simd falls back to fixed‑width NEON instructions, producing roughly three times more instructions and no use of SVE’s scalable registers. The abstraction therefore compiles everywhere but optimizes for nowhere.

5. The 90 % of SIMD Work That std::simd Cannot Express

Real SIMD code spends most of its time on cross‑lane operations:

- Byte shuffles (

_mm256_shuffle_epi8) - Horizontal reductions (

_mm256_hadd_epi32) - Saturating arithmetic (

_mm_adds_epu8) - Pack/unpack (

_mm256_packus_epi16) - Mask extraction (

_mm_movemask_epi8) - Complex permutations (

_mm256_permutevar8x32_epi32)

std::simd only provides element‑wise arithmetic and logical operators—exactly what the auto‑vectorizer already handles. The missing primitives are the reason codecs, image processing pipelines, and string‑search algorithms rely on hand‑written intrinsics or domain‑specific languages.

6. What a Real Solution Would Look Like

- Language‑level SIMD – A dedicated SPMD language (e.g., ISPC) or a built‑in C++ construct that allows the compiler to see the actual SIMD instructions, enabling full optimization passes.

- Fixed‑width alignment in the type system –

aligned_ptr<float, 64>would let the optimizer propagate alignment across function calls. - Correct integer promotion – Operations on

uint8_tshould stay in 8‑bit registers; this requires a change to the core language rules, not a library shim. - Portable cross‑lane primitives – A single portable byte‑shuffle primitive would unlock the majority of codec‑level kernels.

- Standardized restrict / noalias qualifier – Giving the optimizer reliable aliasing information would dramatically improve vectorization of memory‑bound loops.

Until such language changes appear, developers should follow a pragmatic split:

- Let the auto‑vectorizer handle trivial loops.

- Use intrinsics or a battle‑tested library (Highway, SIMDe, EVE) for the hard parts.

- Reserve

std::simdfor experimental code where portability is more important than raw performance.

7. Implications for Low‑Latency Trading and Other Critical Domains

High‑frequency trading systems demand deterministic latency and the absolute best instruction throughput. The extra compile‑time, the hidden vector width, and the inability to express packed arithmetic make std::simd a liability in such environments. Existing codebases that already employ hand‑crafted intrinsics will see no benefit from switching, and the risk of regressions outweighs any marginal convenience.

For image/video codecs, the story is identical: the performance‑critical inner loops rely on shuffles, saturating adds, and pack/unpack operations—all absent from std::simd. The industry’s response—building Highway, SIMDe, and ISPC—demonstrates that a one‑size‑fits‑all library cannot satisfy these workloads.

8. Counter‑Perspective

Proponents argue that std::simd lowers the barrier for developers unfamiliar with intrinsics and that future compiler improvements could mitigate the current performance gap. There is also the aesthetic appeal of a standard‑library solution that does not require an external dependency. However, the empirical data from the reproduced benchmarks, the compile‑time penalties, and the structural mismatch with scalable ISAs suggest that these benefits are outweighed by the practical costs for anyone who truly needs SIMD performance.

9. Conclusion

std::simd arrives as a well‑intentioned, committee‑driven abstraction that solves a problem already addressed by modern auto‑vectorizers, and it does so with a design that fundamentally limits optimizer visibility. Existing third‑party libraries and language‑level approaches provide richer feature sets, better performance, and more realistic portability. For the foreseeable future, the most sensible strategy for performance‑critical C++ developers is to keep using intrinsics (or a proven library) for the hard parts and rely on the compiler’s auto‑vectorizer for the easy parts. The standard’s SIMD offering, while a nice academic exercise, is unlikely to see adoption in high‑frequency trading, codec development, or any domain where every cycle counts.

Low Latency Trading Insights is a reader‑supported publication. Consider subscribing to receive future analyses.

Comments

Please log in or register to join the discussion